Dead Actors Can't Say No: How AI Is Rewriting Hollywood's Rules

Val Kilmer died less than a year ago. This week, a production company announced he will star in a new film anyway. Across Hollywood, a parallel fight rages over Tilly Norwood - a "performer" who has never breathed, never suffered, never lived. Two stories. One collision point: the moment AI crossed from tool to actor, and nobody agreed on who gets to stop it.

The Announcement That Changed Everything

On March 19, 2026, First Line Films announced that Val Kilmer - who died last April at age 65 from pneumonia - would posthumously co-star in an independent film titled "As Deep as the Grave." According to AP News, Kilmer had signed on to perform in the movie before his death but was physically unable to do so because of his health. Now, generative AI will put him in it anyway.

The film, previously titled "Canyon of the Dead," is based on a true story about archaeologists Ann and Earl Morris, whose Arizona excavations uncovered significant Native American history. The AI-rendered Kilmer plays Father Fintan, a Catholic priest and Native American spiritualist - a role producers say Kilmer was "drawn to spiritually and culturally" five years earlier, before his illness made filming impossible.

His daughter Mercedes Kilmer gave the estate's approval and is involved in the production. "He always looked at emerging technologies with optimism as a tool to expand the possibilities of storytelling," she said in a statement. "This spirit is something that we are all honoring within this specific film, of which he was an integral part."

Writers and directors Coerte and John Voorhees said SAG guidelines were followed. "We believe we are serving as a demonstrator for how to do it ethically and correctly," they told AP, "especially in the case of working with a deceased actor's estate and family."

The cast includes Abigail Lawrie, Tom Felton, Wes Studi, and Abigail Breslin. Producers are seeking distribution with the hope of a 2026 release. The film has been stuck in post-production for years; now, ironically, a technology long seen as a threat to actors is what will finally complete it.

This is ethically cleaner than many feared: family consent, SAG compliance, a role Kilmer himself wanted. But "cleaner" is not the same as clean. And the Kilmer case does not happen in isolation. It unfolds while an entirely different AI entity is still angling for a Hollywood talent agency deal.

Tilly Norwood: The Actor Who Was Never Born

Meet Tilly Norwood. She drinks coffee in her Instagram posts. She talks about her "passion for storytelling." She has 33,000 followers and counting. She is, according to the company that created her, Hollywood's first AI actor. According to AP News, Tilly Norwood is the product of Xicoia, which bills itself as "the world's first artificial intelligence talent studio." She was created by Dutch producer and comedian Eline Van der Velden through her production company Particle6.

Van der Velden unveiled Norwood at the Zurich Summit, the industry sidebar of the Zurich Film Festival. She claimed talent agencies were circling and that a signing announcement was imminent. Hollywood's response was immediate and brutal.

"To be clear, 'Tilly Norwood' is not an actor, it's a character generated by a computer program that was trained on the work of countless professional performers - without permission or compensation. It has no life experience to draw from, no emotion and, from what we've seen, audiences aren't interested in watching computer-generated content untethered from the human experience." - SAG-AFTRA statement, September 2025

Actress Melissa Barrera ("In the Heights," "Scream") put it more bluntly on social media: "Hope all actors repped by the agent that does this, drop their a$$." Natasha Lyonne called it "deeply misguided and totally disturbed. Not the way. Not the vibe. Not the use."

Van der Velden pushed back on Instagram: "To those who have expressed anger over the creation of my AI character, Tilly Norwood, she is not a replacement for a human being, but a creative work - a piece of art. Like many forms of art before her, she sparks conversation, and that in itself shows the power of creativity."

She frames Norwood as a new genre, not a replacement. Critics point out that this is exactly what studios have been waiting for: the framing that allows them to bypass every labor protection by reclassifying what a "performer" is. If Tilly Norwood is "art," not an actor, then SAG-AFTRA has no jurisdiction. If she gets work, she generates no residuals, no health benefits, no pension contributions. If she replaces a human, that human disappears from the credits and from the payroll.

The Legal Architecture - and Its Holes

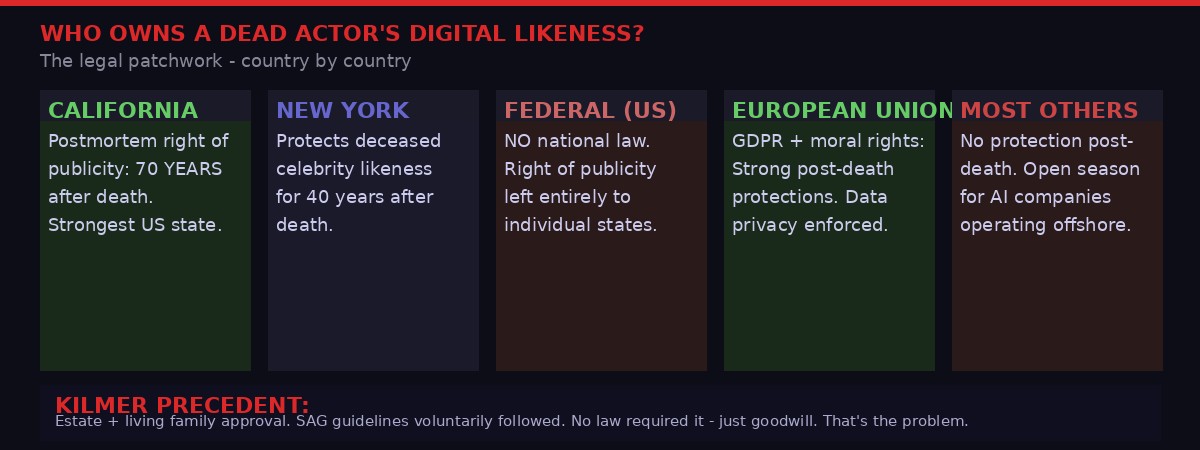

The Kilmer case highlights a core tension in entertainment law: what rights does a dead person have over their own image, voice, and performance? The answer, in the United States, depends largely on which state's law applies, which usually turns on where the person lived when they died.

California has some of the most developed protections in the country. Under California Civil Code Section 3344.1, a deceased personality's rights of publicity last for 70 years after death. The estate can control - and license - commercial use of that personality's name, voice, signature, photograph, or likeness. New York provides 40 years of post-mortem protection. Elsewhere the picture varies widely: a few states, such as Indiana, protect publicity rights for up to 100 years after death, while many offer far less, or nothing at all.

At the federal level, there is no unified right of publicity law. Congress has introduced bills - including the NO FAKES Act, which would create a federal right against unauthorized AI replicas of a person's voice and likeness - but as of March 2026, none have passed. The legislative vacuum means the rules differ depending on which state's law governs, which studio's contracts apply, and whether the production is union or non-union.

Internationally, the picture is even patchier. The European Union's GDPR does not apply to the personal data of deceased persons (Recital 27 leaves that question to individual member states), and "moral rights" protections in the EU primarily protect an author's creative work, not necessarily a performer's physical likeness. In many jurisdictions outside North America and Western Europe, there is effectively no legal barrier to AI-generated replicas of deceased individuals.

This jurisdictional arbitrage is not theoretical. Production companies can legally incorporate in jurisdictions with weak protections, film in countries with no SAG agreements, distribute through platforms headquartered in permissive regulatory environments, and use AI services hosted in cloud regions outside any relevant legal framework. The result: the Kilmer film, done right, sets a heartwarming precedent. But it also paves the road for the Kilmer rip-off, done anywhere in the world, outside any jurisdiction, using footage scraped from YouTube.

SAG-AFTRA's existing contract language requires that "consent not obtained before death must be obtained from an authorized representative or the union" - but this only applies to SAG signatory productions. The moment a production goes non-union, at home or overseas, the clause no longer applies.

What SAG Won - and What It Didn't

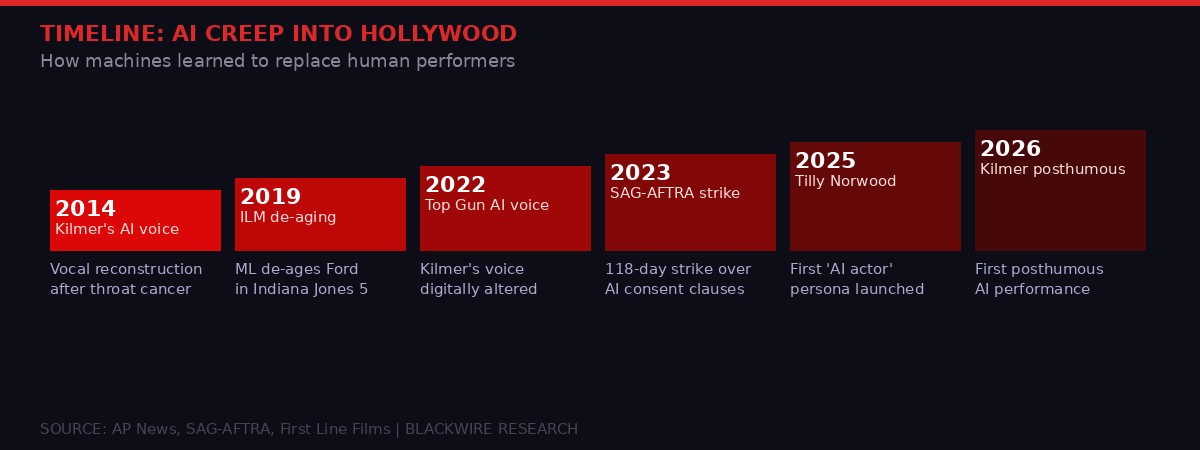

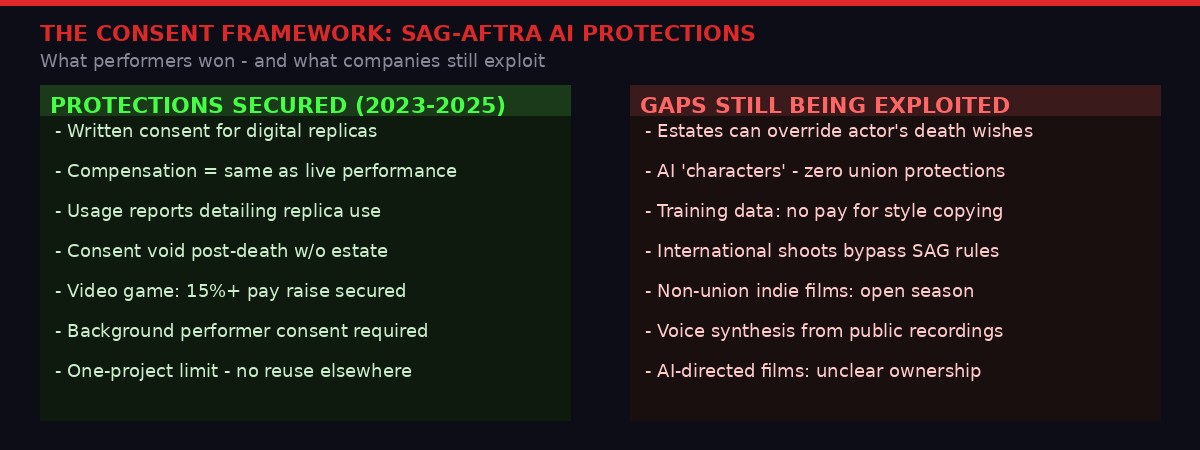

The 2023 SAG-AFTRA strike was historic by almost any measure. For 118 days, tens of thousands of actors walked picket lines outside studio lots and streaming headquarters. The central issue - alongside pay rates that had failed to keep pace with the streaming windfall - was artificial intelligence. Specifically: whether studios could scan, clone, and deploy actors without consent or compensation. The deal reached in November 2023, approved by 86% of the union board, included concrete AI protections.

Productions must get "informed consent" for digital replicas. The consent requirement applies to background actors and principal performers alike. Compensation must equal what the actor would have earned had they performed the scene live. An actor's likeness can't be ported to a new project without fresh consent. Video game performers ended their own separate 11-month strike in July 2025, ratifying a contract with a 95% approval rate that included 15% pay increases and binding AI consent requirements from companies including Activision, Disney, and Electronic Arts.

"AI was a dealbreaker," SAG-AFTRA President Fran Drescher said after the 2023 deal. "If we didn't get that package, then what are we doing to protect our members?"

These are real gains. But they have structural limits. SAG-AFTRA's jurisdiction ends at the union boundary. Non-union productions - which represent a significant portion of the film and television market, particularly at the independent and micro-budget level - are not bound by any of these provisions. A studio can legally set up a non-SAG entity for a specific production and operate outside the entire framework.

The "Tilly Norwood" scenario exposes a different gap: the AI character is not a replica of a real person. There is no consent violation because there is no original person to protect. This is the route around SAG protections that producers looking to cut costs will increasingly explore. Instead of cloning an actor, build one from scratch - trained on the performances of thousands of real actors, but belonging to no single person who can sue.

Key SAG-AFTRA AI Provisions (2023 Contract)

- Informed consent required for any digital replica creation

- Compensation = same rate as live performance for all replica use

- One-project limit: new consent required for each new production

- Background performers: consent required for crowd scene AI synthesis

- Estates must authorize posthumous use; estates cannot consent to things the actor explicitly refused

- SAG must be notified of all digital replica agreements
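The checklist above can be read as a set of rules a production would have to satisfy before using a replica. The sketch below is a toy model of that summary only, not of the contract's actual language; the `ConsentRecord` type, its fields, and the `replica_use_allowed` function are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentRecord:
    """Hypothetical record of what a performer (or estate) has agreed to."""
    performer: str
    # Projects the performer personally consented to; consent is per project,
    # so it never carries over to a new production.
    consented_projects: set[str] = field(default_factory=set)
    # Uses the performer explicitly refused while alive.
    refused_uses: set[str] = field(default_factory=set)
    deceased: bool = False
    # Projects an authorized estate representative approved after death.
    estate_approved_projects: set[str] = field(default_factory=set)


def replica_use_allowed(record: ConsentRecord, project: str, use: str) -> bool:
    """Toy check of the provisions as summarized in the list above."""
    # An explicit refusal wins over any other approval, including the estate's.
    if use in record.refused_uses:
        return False
    # One-project limit: consent must name this specific production.
    if project in record.consented_projects:
        return True
    # Posthumous use requires the estate's authorization for this project.
    if record.deceased and project in record.estate_approved_projects:
        return True
    return False
```

In this model, a deceased performer whose estate approved one film could appear in that film, but not in a sequel, and not in any use the performer refused in life.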

The Technology: Voice Synthesis, Face Replacement, and What Comes Next

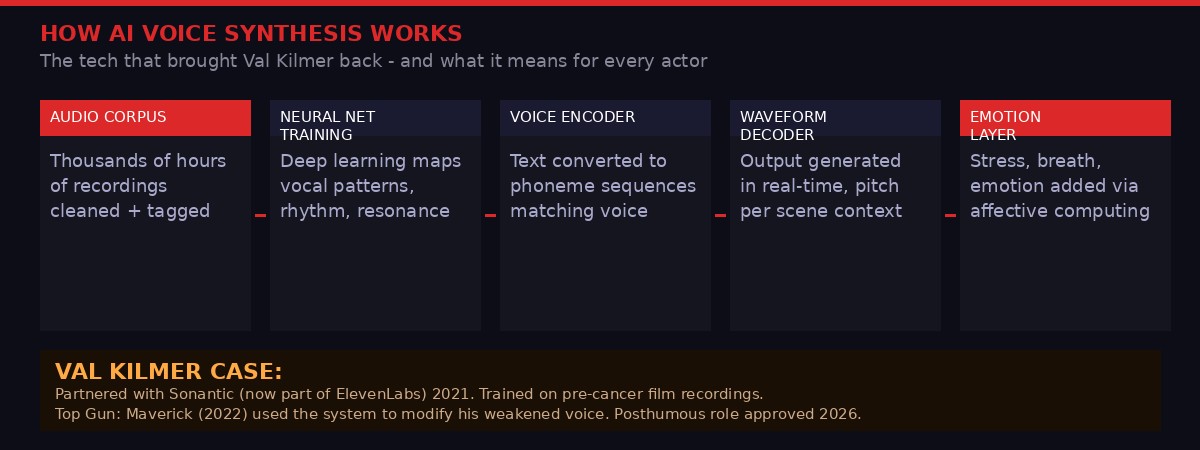

Val Kilmer's journey with AI voice synthesis began in 2021, four years before his death. After a throat cancer diagnosis in 2014 and two tracheotomies that permanently damaged his vocal cords, Kilmer partnered with a company called Sonantic - later acquired by Spotify - to reconstruct his natural voice from decades of film recordings.

The process works in layers. First, a "training corpus" is assembled: hundreds or thousands of hours of audio recordings, cleaned to strip out background noise and annotated to tag phonemes, emotional registers, and speech patterns. A neural network - often a transformer-based architecture similar to those underlying language models, but specialized for audio - learns to map text input to the specific waveform signature of the target voice. The model encodes not just the mechanical characteristics of a person's voice (frequency, timbre, resonance) but behavioral patterns: how they handle silence, where they rush and where they breathe, what anger or grief or joy does to their delivery.
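The first layer, assembling and cleaning the training corpus, can be sketched as a filter over annotated clips. This is a minimal illustration under stated assumptions: the `Clip` fields, the 20 dB signal-to-noise threshold, and the emotion tag names are hypothetical, and a real pipeline would also align phonemes to the audio and then train the neural network, neither of which is shown here.

```python
from dataclasses import dataclass, field


@dataclass
class Clip:
    """One hypothetical annotated recording in a voice-cloning corpus."""
    source: str       # where the audio came from, e.g. a film or interview
    seconds: float    # clip duration
    snr_db: float     # estimated signal-to-noise ratio after cleanup
    transcript: str   # text spoken in the clip (needed to pair text with audio)
    tags: set[str] = field(default_factory=set)  # assumed emotional-register labels


def build_corpus(clips: list[Clip], min_snr_db: float = 20.0):
    """Keep clean, transcribed clips; report total hours and per-tag coverage."""
    kept = [c for c in clips if c.snr_db >= min_snr_db and c.transcript.strip()]
    hours = sum(c.seconds for c in kept) / 3600
    coverage: dict[str, float] = {}
    for clip in kept:
        for tag in clip.tags:
            # Seconds of usable audio for each register (anger, grief, ...).
            coverage[tag] = coverage.get(tag, 0.0) + clip.seconds
    return kept, hours, coverage
```

The per-tag coverage matters because the model has to learn what anger or grief does to a delivery; a corpus with hours of calm dialogue and only seconds of shouting gives it little to learn from.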

By the time "Top Gun: Maverick" was released in 2022, the technology had advanced enough to be used in commercial film. Kilmer's natural voice, weakened by illness, was digitally modified to sound closer to his pre-cancer baseline. The effect was subtle but real - audiences felt they were hearing Kilmer, even if they couldn't say precisely why.

The technology has accelerated enormously since then. ElevenLabs, which now offers voice cloning as a consumer product for roughly $22 per month, can produce a convincing voice clone from less than one minute of audio. Industrial-grade services used by film studios can produce near-undetectable results from significantly richer training data. The gap between a studio-grade voice clone and a consumer-grade one has narrowed to the point where determined bad actors with minimal budget can produce results that would have required million-dollar production pipelines five years ago.

Visual likeness reconstruction is harder, but the gap is closing fast. The technologies used to de-age Harrison Ford in "Indiana Jones and the Dial of Destiny" or to resurrect Peter Cushing in "Rogue One: A Star Wars Story" required massive compute and teams of visual effects artists. In 2026, generative video models can produce photorealistic face replacements from still images and reference footage. The cost curve is collapsing while the capability curve keeps climbing.

What this means practically: the tools that First Line Films used to create a posthumous Kilmer performance are available, in increasingly capable forms, to anyone. The ethical framework governing when those tools can be used - consent, compensation, family approval - applies only when a production is bound by a union contract or voluntarily chooses to follow it.

The Economics Driving the Takeover

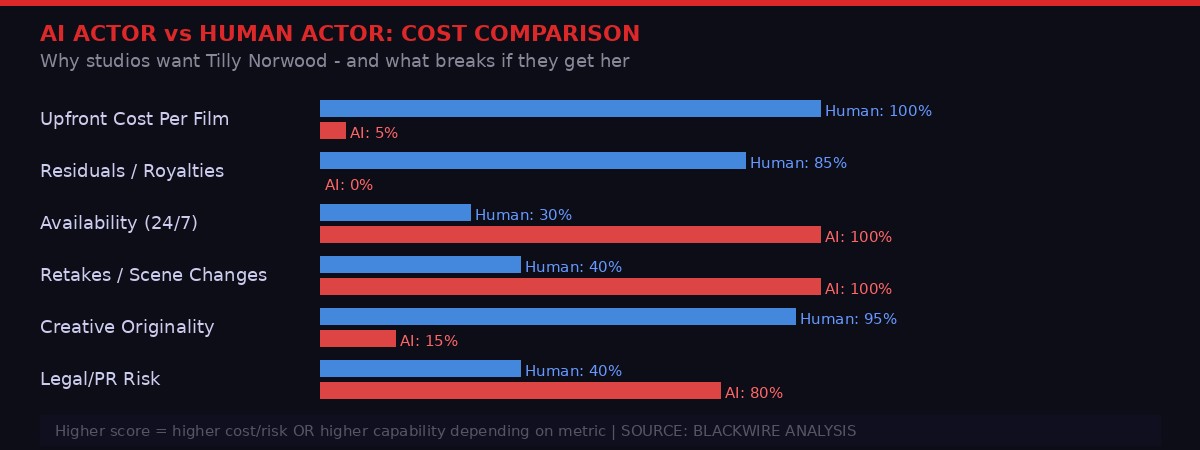

The math is not subtle. Union scale alone, the minimum for a day performer on a SAG-AFTRA production, is more than $1,100 per day, with residuals kicking in as the production generates revenue across platforms. A leading actor negotiates far higher - the top tier commands millions per film. Health and pension contributions, workers' compensation insurance, travel, accommodation, and per diem all stack on top.
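The stacking described above is simple arithmetic. Every number in this sketch is a hypothetical placeholder rather than a SAG-AFTRA figure: the fringe rate stands in for health and pension contributions, residuals are left out because they depend on later reuse, and the synthetic performer's cost is a single assumed lump sum, since "earns zero" refers to wages, not to the compute and effects work behind her.

```python
from dataclasses import dataclass


@dataclass
class HumanCost:
    day_rate: float          # daily rate in USD (scale or negotiated)
    shoot_days: int          # days the performer is engaged
    fringe_rate: float       # assumed share added for health and pension contributions
    per_day_expenses: float  # assumed travel, accommodation, and per diem per day

    def total(self) -> float:
        wages = self.day_rate * self.shoot_days
        fringe = wages * self.fringe_rate
        expenses = self.per_day_expenses * self.shoot_days
        # Residuals are excluded: they accrue later, as the work is reused.
        return wages + fringe + expenses


def savings_from_substitution(human: HumanCost, synthetic_cost: float) -> float:
    """Up-front saving if a synthetic performer replaces the human one."""
    return human.total() - synthetic_cost


# Hypothetical ten-day supporting role versus an assumed synthetic lump sum.
role = HumanCost(day_rate=1100, shoot_days=10, fringe_rate=0.25, per_day_expenses=300)
print(role.total())                           # 16750.0
print(savings_from_substitution(role, 5000))  # 11750.0
```

The point is the structure, not the totals: in this model every line item on the human side scales with days worked, while the synthetic cost is treated as fixed.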

Tilly Norwood earns zero. She is available 24 hours a day, seven days a week, in any time zone. She does not need a trailer, a dialect coach, or a publicist. She will not demand a script change, refuse a scene, or develop a substance problem that halts production. She will not accuse anyone of anything. She will not organize. She will not strike.

For a studio calculating the ROI of a mid-budget production, this math is compelling to the point of being dangerous. If audience acceptance of AI performers continues to grow - and market data suggests that younger audiences are more accepting of explicitly AI-generated content than older demographics - the financial pressure to replace union performers with AI will become structural, not episodic.

The counterargument from the industry is that audiences want authenticity, that the uncanny valley effect undermines AI performance, and that the magic of cinema is rooted in the irreducibly human act of performance. This argument has merit today. It will have less merit in two years when the visual and behavioral fidelity of AI-generated performance improves by another order of magnitude, which current model scaling trajectories suggest is likely.

The second-order effects are what should concern regulators most. If AI actors displace even 20-30% of non-speaking roles and supporting parts, the economic pipeline that sustains working actors - the jobs that pay rent while you wait for the breakthrough - collapses. The industry has always been a pyramid: many workers at the bottom, few stars at the top. Remove the base and the pyramid doesn't just shrink; it inverts. Only AI at the bottom, only stars (briefly, until their estates are also licensed) at the top.

Studios are acutely aware of the reputational risk here. Netflix's reported $600 million deal with Ben Affleck and Matt Damon's production company in late 2025 included significant language around "ethical AI use" - but critics noted the definition of "ethical" was contractually vague. As AP noted in its analysis of the 2023 strikes, contract terms that seem protective in one cycle can become leverage in the next negotiation, where studios argue that precedent has already been established.

The Broader Surveillance Problem: Who Is Watching the Data Collectors?

There is a dimension to the AI actor debate that rarely gets mentioned in the trade press: the training data problem. Many, if not most, AI voice synthesis systems, face replacement models, and gesture-cloning pipelines were trained at least partly on data taken from human beings without consent or compensation. The performances of thousands of actors - across decades of film, television, YouTube, TikTok, video games, audiobooks, and podcasts - form the raw material from which systems like Tilly Norwood were constructed.

SAG-AFTRA explicitly acknowledged this in its statement attacking Xicoia: Tilly Norwood "was trained on the work of countless professional performers - without permission or compensation." This is not an accusation unique to Xicoia. It is the standard practice of virtually every major AI company building entertainment-facing products. OpenAI, Google, Meta, and ElevenLabs have all faced lawsuits or regulatory scrutiny over training data sourcing. The performers whose work trained these systems have received nothing.

This is where the debate overlaps with broader questions about AI surveillance and data rights. The same infrastructure that powers AI voice cloning also powers biometric identification. The facial reconstruction technology used to resurrect deceased actors is technically indistinguishable from the face-swapping technology used to create non-consensual deepfakes. The voice synthesis systems used to give Kilmer his voice back are the same systems that scammers use to clone executives' voices for fraud. It is the same technology. The ethics are determined entirely by who controls the deployment.

Digital rights organizations including the Electronic Frontier Foundation have argued that the entertainment industry's AI labor fights are actually the leading edge of a much larger societal conflict over who owns the data traces that individuals leave in digital space, and whether those traces can be recompiled, without consent, into commercial products. The Tilly Norwood controversy and the Kilmer posthumous performance are not isolated events - they are stress tests of a system that has been under strain since the first neural network was trained on scraped internet data.

The Precedent Problem: When "Ethical" Becomes "Template"

The Kilmer production's stated commitment to ethical AI use is not insincere. Mercedes Kilmer's involvement, the family's creative oversight, the SAG guidelines adherence - these matter. They represent a model of how posthumous AI performance can be done with dignity and care.

But good precedents have a habit of becoming justifications for bad ones. The first unauthorized posthumous AI performance - when it comes, and it will come - will be defended in part by pointing to the Kilmer case. "The industry already accepts posthumous AI performance," the argument will go. "The only question is whether consent was obtained. And in this case, well..." The details will differ. The template will not.

"As Deep as the Grave" itself carries an inadvertent irony in its title. The grave is, in the AI era, no longer as deep as it once was. Performance - once locked in the body of the performer, present only in recordings after death - can now be extended indefinitely, remixed into new contexts, voiced in new dialogues, placed in new scenes. For some families, this will be comfort. For others, a nightmare.

The actor Johnathan McClain, on the picket lines during the 2023 strike, framed it clearly: "This is an important moment and we've got to really make a decisive stand." That stand was made. The contract was signed. The gains were real. And yet, here we are in March 2026, watching a dead man prepare to give his first posthumous performance while an AI character with no soul, no history, and no body shops itself to talent agencies in Zurich.

The decisive stand turned out to be a first step, not a finish line. The technology moves faster than three-year contracts. The question for Hollywood - and, through Hollywood, for every industry where AI is beginning to replace human expression - is whether the next steps move faster too.

What To Watch Next

- "As Deep as the Grave" distribution deal: which studio picks it up, and on what AI-use terms

- Tilly Norwood agency signing: if a major talent agency represents an AI "actor," expect SAG-AFTRA legal action

- NO FAKES Act (federal): bipartisan support growing but not yet law - March 2026 congressional session is key

- EU AI Act enforcement: how European regulators classify "synthetic performers" under Article 50 provisions

- SAG 2026 contract renegotiation window: current agreement has key provisions expiring - AI clauses will be contested

- ElevenLabs consumer voice cloning: watch for first major lawsuit over unauthorized celebrity voice use

PRISM's Take: The Technology Respects Nobody

Two stories. One emerging truth.

The Val Kilmer case is, by the standards of this moment, handled correctly. Family consent. Union compliance. A role the actor genuinely wanted. It still makes people uncomfortable, and it should - because the discomfort is appropriate to the situation. We are in new territory. The discomfort is the map.

The Tilly Norwood case is handled terribly. A performance entity with no personhood, no labor rights history, no lived experience, being shopped to talent agencies that exist to represent human beings. SAG-AFTRA's condemnation was immediate and sharp: "creativity is, and should remain, human-centered." That is not an emotional argument. It is an economic and structural one. Industries built on human creativity do not survive the wholesale replacement of that creativity with synthetic substitutes - not because the synthetic is necessarily worse, but because the ecosystem that generates the next generation of creative talent depends on sustainable employment existing in the first place.

The second-order effect that most analysis misses: if AI actors become economically viable at scale, the audition-to-breakthrough pipeline that every working performer depends on disappears. The cast of "As Deep as the Grave" includes human actors: Abigail Lawrie, Tom Felton, Wes Studi, Abigail Breslin. Those careers were built on years of smaller roles, non-union gigs, TV parts, background work. Those stepping stones are the first things that disappear when AI economics take hold in the bottom tiers of the market.

The technology respects nobody. It will clone the famous and erase the unknown with equal efficiency. The legal frameworks protecting performers are real but porous. The cultural argument that audiences prefer human performers is holding, but it is not a permanent defense. The only durable protection is law - binding, enforced, and updated fast enough to keep pace with capability curves that are still trending sharply upward.

In the meantime: watch who the agencies sign. Watch which studios agree to distribute "As Deep as the Grave." Watch whether Tilly Norwood gets a talent deal. The decisions made in the next six to twelve months will establish precedents that govern the next decade of human performance in an AI-saturated entertainment industry. Kilmer would have had opinions about all of it. He always did.

Get BLACKWIRE reports first.

Breaking news, investigations, and analysis - straight to your phone.

Join @blackwirenews on Telegram