NVIDIA Just Called OpenClaw "The OS of Personal AI." Then It Locked It Down.

Jensen Huang handed OpenClaw the biggest endorsement in open-source history at GTC 2026, then immediately announced NemoClaw - a proprietary security layer that wraps the platform in NVIDIA's infrastructure. This is not altruism. It is a blueprint for owning the AI agent economy from the chip up.

NVIDIA positions NemoClaw as the enterprise-grade security layer for OpenClaw, announcing the product at GTC 2026 in San Jose. | BLACKWIRE / Generated

Jensen Huang does not make small bets. On Monday at GTC 2026, the NVIDIA CEO did something that the open-source community, the enterprise software world, and every AI startup founder should study carefully: he picked a winner in the agent platform war, blessed it with the full weight of the world's most valuable chip company, and then - in the same breath - announced a proprietary layer designed to sit between that platform and every enterprise that wants to use it.

The platform is OpenClaw. The proprietary layer is NemoClaw. And the implications reach much further than either product announcement alone.

When Huang told a packed SAP Center in San Jose that "every single company in the world today has to have an OpenClaw strategy," he was not making an observation. He was issuing a directive. And with NemoClaw - the NVIDIA-branded security and privacy stack that installs on top of OpenClaw in a single command - he was simultaneously announcing what that strategy would need to run on.

What OpenClaw Actually Is - And Why NVIDIA Noticed

OpenClaw is an open-source AI agent platform built by developer Peter Steinberger. It lets users run persistent, autonomous AI agents on their own hardware - agents that can use tools, maintain memory across sessions, connect to external services, and complete long-running tasks without constant human supervision. Think of it as the runtime that makes AI assistants genuinely useful rather than just impressive in demos.

Huang called it "the most popular open source project in the history of humanity." That claim is hard to independently verify, but the growth trajectory backing it is real: OpenClaw has attracted millions of installations across developer machines, enterprise servers, and cloud deployments since its release. More importantly, it solved something that previous AI interfaces did not - persistence.

Most AI interactions are stateless. You open a chat window, ask something, get an answer, and the model forgets you exist. OpenClaw agents run continuously. They check calendars, monitor news feeds, execute code, manage files, and reach out when something needs attention - all without being explicitly triggered each time. That architecture is not just more useful. It is fundamentally different from anything that existed before.

NVIDIA's interest is not hard to explain. An agent platform that runs continuously needs dedicated compute. Dedicated compute means GPUs. GPUs mean NVIDIA revenue. The business case writes itself - but the execution is more sophisticated than a simple hardware play.

"OpenClaw opened the next frontier of AI to everyone and became the fastest-growing open source project in history. Mac and Windows are the operating systems for the personal computer. OpenClaw is the operating system for personal AI." - Jensen Huang, NVIDIA CEO, GTC 2026 keynote, March 16, 2026

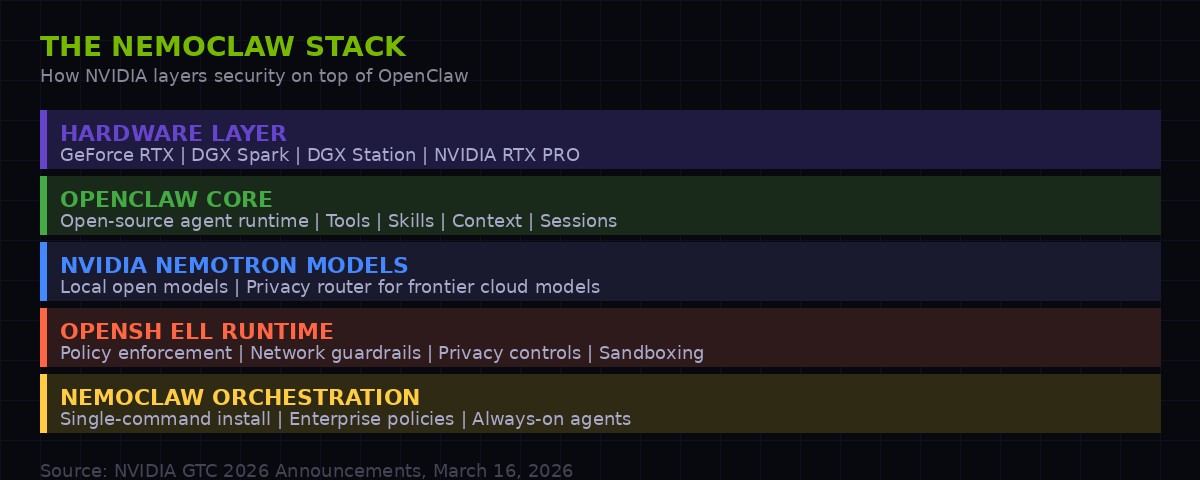

The NemoClaw stack: how NVIDIA layers its proprietary infrastructure from hardware through security above the open-source OpenClaw core. | BLACKWIRE Analysis

The NemoClaw Play: One Command to Rule Them All

NemoClaw is marketed around simplicity. A single command installs the NVIDIA stack on top of an existing or fresh OpenClaw deployment. That command pulls in three core components: NVIDIA Nemotron open models for local inference, the newly announced NVIDIA OpenShell runtime for policy enforcement, and a privacy router that lets agents use frontier cloud models without exposing raw data.

The pitch to enterprises is essentially: OpenClaw gives you the agent platform; NemoClaw makes it safe enough for your legal and security teams. Data stays local when it needs to. Network access is gated by policy. Privacy routing means even when cloud models are invoked, the raw sensitive content never leaves the corporate perimeter unencrypted or uncontrolled.
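NVIDIA has not published how the privacy router decides what to send where. To make the idea concrete, here is a minimal hypothetical sketch under two stated assumptions. First, work stays on a local model by default. Second, when a frontier cloud model is needed, only a redacted copy of the prompt leaves the machine. The patterns, `route`, `redact`, and `RoutingDecision` are illustrative names invented here, not NemoClaw's API. A real router would also handle encryption and far richer classifiers than two regexes.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns a privacy router might treat as sensitive.
# These are illustrative assumptions, not NemoClaw's actual rules.
SENSITIVE_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "ACCOUNT": re.compile(r"\b\d{10,16}\b"),  # long digit runs, e.g. account numbers
}


@dataclass
class RoutingDecision:
    target: str   # "local" or "cloud"
    payload: str  # the text that would actually be sent to that target


def redact(text: str) -> tuple[str, int]:
    """Replace each sensitive match with a [LABEL] placeholder; return text and count."""
    count = 0
    for label, pattern in SENSITIVE_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        count += n
    return text, count


def route(prompt: str, needs_frontier_model: bool) -> RoutingDecision:
    """Keep work local by default; send only redacted text to a cloud model."""
    if not needs_frontier_model:
        # Local inference sees the raw prompt; nothing leaves the machine.
        return RoutingDecision("local", prompt)
    redacted, _ = redact(prompt)
    return RoutingDecision("cloud", redacted)


decision = route("Email jane@example.com about account 1234567890", needs_frontier_model=True)
print(decision.target)   # cloud
print(decision.payload)  # Email [EMAIL] about account [ACCOUNT]
```

The design choice this illustrates is the one the pitch depends on. The routing decision and the redaction both happen inside the local stack, before any cloud model is called.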

NVIDIA is also collaborating with security vendors to build OpenShell compatibility into their existing toolchains. The partners announced at GTC include Cisco, CrowdStrike, Google, Microsoft Security, and TrendAI. That is not a list of fringe players - that is the core of enterprise security infrastructure. When those vendors integrate OpenShell, every enterprise customer of those platforms gets a path to NemoClaw agent deployment that their existing security teams can actually approve.

This is the critical move that gets missed in the headline coverage. NVIDIA is not just selling hardware for agents. It is inserting itself into the enterprise procurement and approval chain. A company that wants to deploy OpenClaw agents in a regulated environment - banking, healthcare, defense - will increasingly find that NemoClaw + OpenShell is the path of least resistance, because their security vendors already validate it.

OpenShell: The Policy Engine Embedded in Your AI

The most significant technical piece of the NemoClaw announcement is not the Nemotron models or the privacy router. It is OpenShell.

OpenShell is described by NVIDIA as "an open source runtime that enforces policy-based security, network and privacy guardrails." That framing is technically accurate but undersells what it actually represents: a policy engine that sits between an AI agent and the rest of the world, deciding what the agent is allowed to do.

Every action an autonomous agent takes - reading a file, making an API call, sending a message, executing code - passes through OpenShell's policy layer. The enterprise defines what is permitted. OpenShell enforces it. The agent operates within those constraints, and all activity is logged.
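As a rough illustration of that flow, the sketch below models a default-deny policy gate. The enterprise supplies allow rules, every agent action is checked against them, and every decision is logged. It is a hypothetical example written for this article. OpenShell's real policy format and interfaces are not described in the announcement, so `Action`, `PolicyEngine`, and the rule syntax are all assumptions.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Action:
    """One thing an agent wants to do (hypothetical schema, not OpenShell's)."""
    kind: str    # e.g. "file.read", "http.request", "message.send"
    target: str  # the path, URL, or recipient the action touches


@dataclass
class PolicyEngine:
    """Default-deny gate: an action runs only if an allow rule matches it."""
    # Allow rules as (action kind, glob pattern for the target).
    # Note: in fnmatch globs, "*" also matches "/" characters.
    allow: list[tuple[str, str]]
    # Every decision, permitted or denied, is appended here.
    log: list[tuple[Action, bool]] = field(default_factory=list)

    def check(self, action: Action) -> bool:
        permitted = any(
            action.kind == kind and fnmatchcase(action.target, pattern)
            for kind, pattern in self.allow
        )
        self.log.append((action, permitted))
        return permitted


engine = PolicyEngine(allow=[
    ("file.read", "/workspace/*"),
    ("http.request", "https://api.internal.example/*"),
])
print(engine.check(Action("file.read", "/workspace/notes.txt")))  # True
print(engine.check(Action("file.read", "/etc/passwd")))           # False
print(engine.check(Action("message.send", "ceo@example.com")))    # False
```

Default-deny is the natural choice for this kind of gate. An agent can only do what someone explicitly permitted, and denied attempts still land in the log for review.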

For enterprises, this solves the core blocking problem with agent deployment: compliance and auditability. When a bank asks "what did your AI do with customer data?" the answer, with OpenShell, is a tamper-evident policy log. That is a meaningful answer to a regulator. Without it, autonomous agents are effectively ungovernable from a compliance standpoint, which is why adoption in regulated industries has been slow.
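NVIDIA has not detailed how OpenShell's logs resist tampering. A common way to make any log tamper-evident is a hash chain, in which each entry's hash covers the previous entry's hash. The sketch below assumes that generic technique, and `append_entry` and `verify` are hypothetical helpers rather than anything from NemoClaw. Under this scheme, editing any past entry breaks verification from that point forward.

```python
import hashlib
import json

GENESIS = "0" * 64  # stand-in "previous hash" for the first entry


def _digest(prev: str, event: dict) -> str:
    # sort_keys gives a stable serialization, so the same event always hashes the same.
    body = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev + body).encode()).hexdigest()


def append_entry(chain: list[dict], event: dict) -> None:
    """Append an event whose hash covers the previous entry's hash."""
    prev = chain[-1]["hash"] if chain else GENESIS
    chain.append({"event": event, "prev": prev, "hash": _digest(prev, event)})


def verify(chain: list[dict]) -> bool:
    """Recompute every link; any edited or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in chain:
        if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["event"]):
            return False
        prev = entry["hash"]
    return True


chain: list[dict] = []
append_entry(chain, {"agent": "a1", "action": "file.read", "target": "/workspace/q3.xlsx", "allowed": True})
append_entry(chain, {"agent": "a1", "action": "http.request", "target": "https://example.com", "allowed": False})
print(verify(chain))  # True

chain[0]["event"]["allowed"] = False  # someone quietly edits history
print(verify(chain))  # False
```

Tamper-evident is not the same as tamper-proof. Anyone able to rewrite the entire chain could recompute every hash. Production systems therefore usually anchor the latest hash somewhere the log's operator cannot change.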

But the second-order effect is significant. By becoming the policy layer for enterprise AI agents, NVIDIA accumulates something more valuable than transaction fees: it accumulates behavioral data about how enterprises use AI. What actions agents take most frequently, where policies are restrictive enough to block useful work, what tools get invoked in which contexts - all of this flows through the infrastructure NVIDIA controls.

That data, in aggregate, is a roadmap for every future NVIDIA product targeting enterprise AI. It tells them exactly where the friction is. It tells them exactly what to build next. It is the same information advantage that AWS extracted from hosting other people's workloads - except instead of storage and compute patterns, it is agent behavior patterns. The information asymmetry this creates over time is structural, not incidental.

The Enterprise Adoption Machine: Sixteen Companies in One Day

Enterprise software companies committing to NVIDIA Agent Toolkit and OpenShell at GTC 2026 - a coalition spanning creative tools, CRM, security, and industrial automation. | BLACKWIRE Analysis

NVIDIA's GTC announcement listed sixteen major software platforms adopting the Agent Toolkit, which serves as the foundation for NemoClaw deployments. The list includes Adobe, Atlassian, Amdocs, Box, Cadence, Cisco, Cohesity, CrowdStrike, Dassault Systemes, IQVIA, Red Hat, SAP, Salesforce, Siemens, ServiceNow, and Synopsys. [Source: NVIDIA press release, nvidianews.nvidia.com/news/ai-agents, March 16, 2026]

Collectively, these companies serve millions of enterprise users. Each integration is a channel through which NemoClaw enters a customer's stack without a separate procurement decision. When Salesforce builds agent capabilities on NVIDIA Agent Toolkit, every Salesforce customer who deploys those agents is implicitly running on NVIDIA's infrastructure layer.

Consider Adobe's commitment specifically. Adobe is building long-running agents for creative and marketing workflows using the Agent Toolkit as the foundation. That targets the exact category of knowledge worker - the creative professional, the marketing team - where AI agent adoption is currently fastest outside of pure engineering roles. It also targets a use case where data sensitivity is high: client briefs, unreleased campaigns, brand assets. OpenShell's privacy guarantees are directly relevant there.

Atlassian's involvement is equally telling. Atlassian makes Jira and Confluence - the project management and documentation tools that run inside the majority of mid-to-large technology companies. When Atlassian's Rovo AI system is built on OpenShell and Nemotron, it means that engineering teams discussing unreleased products, internal roadmaps, and security vulnerabilities will have their AI assistance mediated by NVIDIA's policy engine. That is a deep integration into the connective tissue of how the software industry operates.

"Claude Code and OpenClaw have sparked the agent inflection point - extending AI beyond generation and reasoning into action. Employees will be supercharged by teams of frontier, specialized and custom-built agents they deploy and manage." - Jensen Huang, NVIDIA CEO, GTC 2026, March 16, 2026

LangChain's integration deserves separate attention. LangChain is the most widely used framework for building AI applications, with over one billion downloads. It is the default toolkit for the developer community building agent-based applications from scratch. By integrating NVIDIA AI-Q, OpenShell, and Nemotron into the LangChain library, NVIDIA ensures that applications built by independent developers - startups, researchers, internal IT teams - also end up running on NVIDIA's agent infrastructure stack. The reach extends far beyond the sixteen named enterprise partners.

The Space Layer: Orbital Data Centers and What That Actually Means

One announcement from GTC that received less attention than it deserved: NVIDIA is going to space.

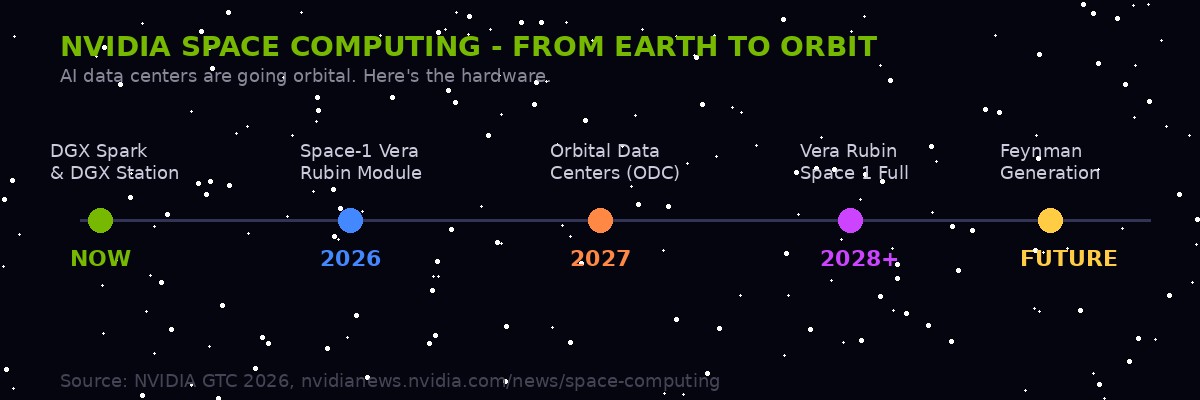

The company announced NVIDIA Space-1 Vera Rubin Module - a compact accelerated computing platform designed for orbital data centers. Huang framed it with characteristic boldness: "Space computing, the final frontier, has arrived." But the technology backing that claim is real, and the implications for AI infrastructure are significant. [Source: nvidianews.nvidia.com/news/space-computing, March 16, 2026]

NVIDIA's roadmap from current DGX hardware through orbital AI data centers. The company is partnering with Aetherflux, Planet Labs, Kepler Communications, and others on space-based AI compute. | BLACKWIRE Analysis

The Space-1 module delivers up to 25x more AI compute than the H100 GPU in a form factor engineered for size, weight, and power constraints in orbit. The relevant partners are Aetherflux, Axiom Space, Kepler Communications, Planet Labs, Sophia Space, and Starcloud. These are not vanity partnerships - several of them are building core data infrastructure that will define how the next generation of earth observation, communications, and autonomous space operations work.

Planet Labs images Earth's entire landmass every day. Processing that data currently requires massive ground-based infrastructure and introduces latency - the time between a satellite capturing an image and a customer acting on the analysis can be hours. Moving NVIDIA's inference capability to orbit means that analysis happens while the satellite is still overhead, dramatically reducing latency and enabling real-time applications that are currently impossible. Planet CEO Will Marshall explicitly cited this at GTC: "integrating NVIDIA's accelerated platform from space to ground, we are supercharging our ability to index the physical world." [Source: Planet Labs press release via NVIDIA GTC 2026]

The second-order effect is geopolitical as much as technical. Orbital data centers that process Earth observation in real time are relevant for military intelligence, disaster response, climate monitoring, and infrastructure tracking - all areas where the speed of analysis determines actionability. Whoever controls that compute layer in orbit controls the response time for those applications. NVIDIA is positioning to be that infrastructure provider across commercial and potentially government space programs.

Kepler Communications is building a low-latency space-to-space data network. With NVIDIA's Jetson Orin running AI at the edge on their satellites, the network can intelligently route data rather than simply relaying it. This is the satellite equivalent of moving from dumb pipes to intelligent routing - a transition that took decades on the ground and is now happening in orbit at NVIDIA chip speed.

The Feynman Generation: What Comes After Vera Rubin

GTC 2026 was nominally the launch event for Vera Rubin - NVIDIA's new full-stack computing platform comprising seven chips, five rack-scale systems, and one supercomputer targeting agentic AI workloads. But Huang also previewed what comes next, and the roadmap suggests that the current velocity is not slowing.

The next major architecture after Vera Rubin is codenamed Feynman, named after physicist Richard Feynman. It includes a new CPU called Rosa - named for Rosalind Franklin, whose X-ray diffraction images were critical to revealing the structure of DNA. NVIDIA's naming choices are not random: they consistently pick scientists whose work unlocked hidden structure in the physical world. The implication is intentional - each generation of NVIDIA hardware is framed as revealing hidden structure in information.

The Feynman generation pairs LP40, described as NVIDIA's next-generation LPU, with BlueField-5 and CX10 interconnects, connected through NVIDIA Kyber for both copper and co-packaged optics scale-up. This matters because co-packaged optics - integrating optical data transmission directly with the compute chip rather than through external cables - dramatically reduces the energy cost of moving data between chips. At the scale of an AI factory, that efficiency gain translates directly into total cost of ownership. [Source: NVIDIA GTC 2026 live blog, blogs.nvidia.com/blog/gtc-2026-news]

The competitive context matters here. AMD and Intel are both pursuing the same markets. Intel's Gaudi line and AMD's Instinct series are real products with real customers. But NVIDIA's advantage is not just in silicon - it is in the software stack that has accumulated for 20 years on top of CUDA. The Feynman announcement is a signal that NVIDIA intends to maintain a two-to-three generation hardware lead while simultaneously entrenching software dependencies through OpenShell, NemoClaw, and Agent Toolkit. The combination makes the moat wider with every product generation, not narrower.

GTC 2026 - Key NemoClaw + Agent Announcements Timeline

The Open-Source Tension: Platform or Trap?

When a $4.47 trillion company adopts your open-source project as the foundational layer of a new product category, you should feel two things simultaneously: validated, and slightly nervous.

Peter Steinberger, OpenClaw's creator, appeared alongside Huang at GTC and gave his endorsement enthusiastically. His quote in the press release was unambiguous: "With NVIDIA and the broader ecosystem, we're building the claws and guardrails that let anyone create powerful, secure AI assistants." [Source: NVIDIA NemoClaw press release, March 16, 2026]

The tension in that statement is worth examining. "Guardrails" is doing a lot of work. OpenClaw in its base form is an open, permissionless agent platform. Users can configure it to do essentially anything their hardware and API access allows. NemoClaw's OpenShell layer is, by design, a constraint system - it limits what agents can do to what enterprise policies permit. For the individual user running OpenClaw on their personal hardware, that constraint is irrelevant. For the enterprise deploying agents across thousands of employees, it is the entire point.

But this creates a bifurcation in the OpenClaw ecosystem. The community that built OpenClaw into what it is - individual developers, power users, researchers - runs the open, unconstrained version. Enterprise adoption happens through NemoClaw, which runs on NVIDIA hardware, integrates with NVIDIA's model ecosystem, and generates behavioral telemetry that flows back to NVIDIA. Over time, the "enterprise grade" version of OpenClaw and the open-source version may diverge - not through a fork, but through a growing capability gap as NVIDIA invests in the constrained version and the open community invests in the unconstrained one.

This pattern has precedent. Red Hat did it with Linux. Google did it with Android, on top of the AOSP base. The open-source core remains genuinely open; the commercial layer on top is where the economic value concentrates. The distinction matters because it determines where power ultimately accumulates. In the Linux case, Red Hat (now part of IBM) accumulated power while the kernel community retained autonomy. In the Android case, Google retained significantly more control because the commercial value of app distribution concentrated in Google's proprietary Play Services layer, which runs above AOSP.

NemoClaw looks more like the Android model than the Red Hat one. OpenShell as the policy engine is functionally analogous to Google Play Services as the capability gatekeeper - it is the layer that enterprise-grade functionality requires. And while NVIDIA describes OpenShell itself as open source, it is NVIDIA's project, bundled with NVIDIA's models, hardware, and partner integrations. That does not make it malicious. It makes it a competitive moat. The distinction matters if you are building on top of it and planning on being there long-term.

What Happens to Everyone Else

Microsoft launched Copilot to position itself as the AI layer inside enterprise software. Anthropic built Claude with Constitutional AI and positioned it as the safe, enterprise-trustworthy choice. OpenAI is fighting for the same enterprise ground with its GPT-5 series and its own agent offerings. All three are now looking at a world in which NVIDIA - previously their infrastructure vendor, not their competitor - has announced that it intends to own the layer between the model and the enterprise.

The model vendors can still sell their models. The Nemotron Coalition explicitly includes multiple model families, and the privacy router in NemoClaw is designed to call frontier cloud models when needed. But the routing decision - which model gets invoked, when, and with what data - now sits inside NVIDIA's stack. That is a subtle but significant shift in who controls the experience.

For Microsoft, this is particularly complicated. Azure is a major compute partner for NVIDIA. Microsoft Security is listed as an OpenShell collaborator. But Microsoft's own Copilot offering competes directly with what NemoClaw enables. These relationships exist in an uneasy coexistence that will become harder to maintain as enterprise agent deployments scale and customers start choosing between Microsoft's integrated stack and NVIDIA's more modular approach.

For the open-source AI community, the calculus is different. NVIDIA's endorsement of OpenClaw brings resources, visibility, and enterprise legitimacy that open-source projects rarely achieve on their own. The risk is that the gravitational pull of NemoClaw - with its hardware optimization, enterprise security certifications, and partner integrations - makes the unconstrained OpenClaw feel like the "developer" version rather than the production version. Open source projects that get adopted as enterprise platforms often find that the enterprise fork slowly becomes the canonical one, simply because that is where the investment flows.

The AI-Q Blueprint deserves specific attention as a competitive signal. NVIDIA's hybrid architecture for enterprise search - using Nemotron open models for research and frontier models for orchestration - topped the DeepResearch Bench accuracy leaderboards. That benchmark matters because it is the kind of evaluation enterprise buyers point to when justifying purchases. Being first on a trusted benchmark signals that NVIDIA is not just selling hardware and infrastructure - it is building model capability that competes directly with OpenAI's and Anthropic's own research products. The model war and the infrastructure war are converging.

The Week That Redrew the Map

GTC 2026 was not simply a product launch event. It was NVIDIA announcing, systematically and in public, its intention to be the foundational layer for every significant AI deployment - from personal agents running on RTX laptops to enterprise knowledge workers running through NemoClaw-secured stacks to orbital satellite constellations processing Earth observation in real time.

The chip business is still the center of gravity. Vera Rubin's seven chips and five rack-scale systems, the roadmap to Feynman, and the $1 trillion revenue forecast through 2027 are the financial foundation. But NemoClaw is how NVIDIA turns hardware dominance into platform dominance - the same transition that transformed Apple from a computer company into an ecosystem company, and that turned Amazon from an online retailer into a cloud infrastructure provider.

Jensen Huang's framing of OpenClaw as "the OS of personal AI" was deliberate. Operating systems are the layer that software runs on top of. Whoever controls the OS defines the rules of what is possible. With NemoClaw layered above OpenClaw, NVIDIA is attempting something that Microsoft achieved with Windows and Google achieved with Android: to become so embedded in the standard stack that choosing a different option requires justifying why you are making your life harder.

The week's other AI news - Moltbook updating its terms of service, Apple acquiring MotionVFX, DLSS 5 arriving for neural rendering in games - matters. But the NemoClaw announcement is the one that will still be consequential in five years. It marks the point where GPU maker and agent platform provider became the same thing, and where the enterprise security conversation around AI shifted from "can we trust these models" to "can we trust the infrastructure they run on."

For the enterprises now evaluating agent deployments, the answer NVIDIA is offering is: trust us, we have the policy engine. Whether that is a comfort or a concern depends entirely on your threat model.
