Trump's National AI Framework: Washington Seizes Control, States Locked Out

The Trump administration dropped what may be the most consequential tech policy document of the decade on a Friday afternoon. It arrived quietly, without fanfare: a 20-page PDF uploaded to whitehouse.gov as government workers headed into the weekend. The title was bland: National AI Legislative Framework. The contents were anything but.

Strip away the language about innovation and children's safety, and what the White House published on March 20, 2026 is a federal power grab over one of the most contested regulatory spaces in American politics. It tells every state legislature to stand down, hands Big Tech a sweeping liability shield, and bets that Washington alone knows how to govern AI. (Source: White House)

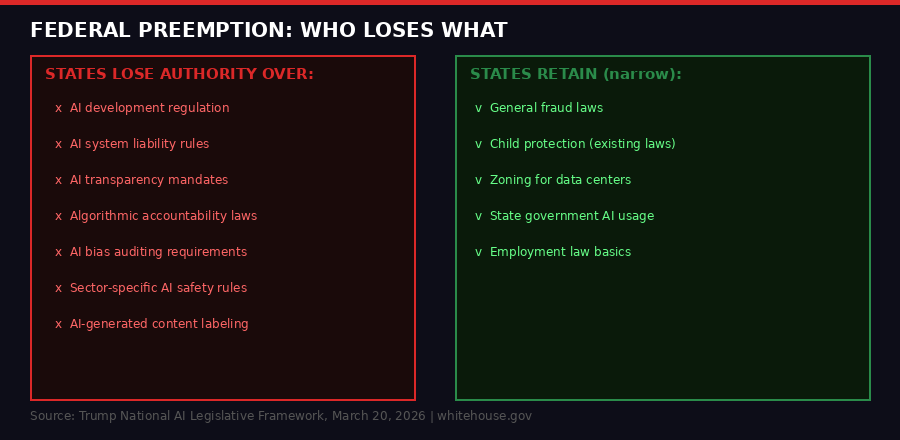

The framework's core instruction to Congress is blunt: preempt state AI laws. All of them. The reasoning offered is that "a patchwork of conflicting state laws would undermine American innovation and our ability to lead in the global AI race." What goes unmentioned is that this patchwork is also currently the only meaningful check on what AI companies can do to ordinary Americans.

This is not a hypothetical policy debate; the stakes are real. The framework dropped the same week that New York lawmakers were debating expanded algorithmic accountability legislation and California's attorney general was actively investigating AI hiring tools. Both efforts would be dead on arrival under the proposed federal preemption standard.

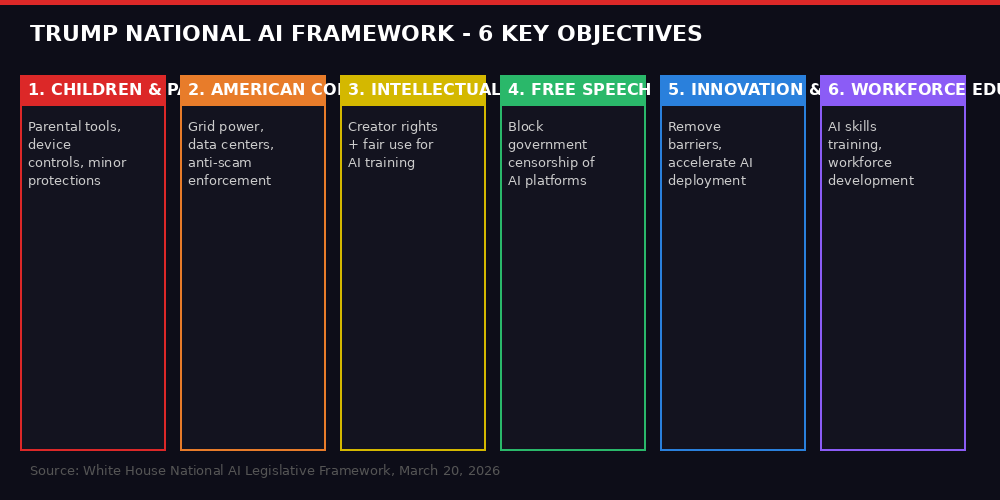

What the Framework Actually Says

The framework lays out six "key objectives," but they are not all equal in weight. The ones that benefit AI companies - removing barriers to innovation, preempting state laws, shielding developers from liability for third-party harms - are framed as hard requirements. The ones that ostensibly protect people - child safety, anti-censorship, creator rights - are wrapped in qualifiers like "believes," "should consider," and "commercially reasonable."

On liability, the framework is explicit: Congress should prevent states from "penalizing AI developers for a third party's unlawful conduct involving their models." This is a direct intervention in the growing wave of lawsuits targeting AI companies for harms caused by their products. It is, in legal terms, a Section 230-style shield - extended to AI. The practical effect would be dramatic: developers of AI systems used in hiring, healthcare, credit scoring, and criminal justice would face significantly reduced exposure to accountability claims.

The intellectual property section attempts a careful balance, saying creators' rights must be respected while also insisting that "for AI to improve it must be able to make fair use of what it learns from the world it inhabits." That framing - training data as fair use - is exactly what AI companies have been arguing in court against publishers, musicians, and visual artists. The administration just signaled it agrees with the tech companies. (Source: TechCrunch)

The child safety section is perhaps the most telling indicator of the framework's true priorities. Rather than imposing platform requirements, it calls on Congress to give parents tools to manage their children's devices. The framing shifts responsibility from companies to families. Platform accountability is nudged rather than mandated. This stands in stark contrast to what states like Texas, Utah, and Florida have been attempting - direct requirements on platforms operating in their jurisdictions.

"Parents are best equipped to manage their children's digital environment and upbringing. The Administration is calling on Congress to give parents tools to effectively do that, such as account controls to protect their children's privacy and manage their device use."

Compare that language to what states have been doing. California's SB-53, signed in 2025, requires large AI companies to maintain and publish safety protocols. New York's RAISE Act mandates documented testing and risk management before deployment. These are the laws the federal framework would eliminate - replaced by a national standard that prioritizes growth over guardrails.

The State Preemption Battle

The preemption fight is the heart of this framework. And it is not new - it has been building for over a year.

In December 2025, Trump signed an executive order directing the Commerce Department to compile a list of "onerous" state AI laws, with the implicit threat that states with restrictive AI regulations could lose federal funding. The 90-day deadline for that list came and went without publication - either because the legal grounds were shakier than the administration expected, or because the legislative route was always the real play. (Source: TechCrunch)

The new framework makes the intention explicit. States can keep authority over "general laws like fraud and child protection, zoning, and state use of AI." Everything else - AI development regulation, transparency mandates, liability frameworks, algorithmic accountability - would be federalized. The carve-outs for states are narrow enough that they preserve the appearance of federalism without its substance.

The political logic is straightforward. Federal AI regulation, championed by AI czar David Sacks and driven by the administration's "accelerationist" philosophy, will be designed with a lighter touch than what California or New York would impose. By preempting state action, the administration can guarantee that even as Congress debates and delays, no state can move faster than Washington wants. It also shields companies from having to comply with the most rigorous requirements while federal legislation is still being negotiated.

Critics are not buying the federalism argument. Brendan Steinhauser, CEO of The Alliance for Secure AI, put it plainly: "White House AI czar David Sacks continues to do the bidding of Big Tech at the expense of regular, hardworking Americans. This federal AI framework seeks to prevent states from legislating on AI and provides no path to accountability for AI developers for the harms caused by their products." (Source: TechCrunch)

The counter-argument from industry is equally blunt. Teresa Carlson, president of General Catalyst Institute, told TechCrunch: "This framework is exactly what startups have been asking for: a clear national standard so they can build fast and scale. Founders shouldn't have to navigate a patchwork of conflicting state AI laws that impede innovation." Both statements are true - and they are in direct conflict. The question is which harm matters more: the cost of compliance fragmentation or the cost of insufficient protection for individuals.

David Sacks and the Accelerationist Agenda

The framework cannot be understood without understanding the man who shaped it. David Sacks - early PayPal executive, venture capitalist, and now the White House's AI and crypto czar - represents a specific ideological strand within tech: one that views regulatory friction not as safety but as drag. His network includes the people building the AI companies this framework would benefit most.

Sacks has been explicit about his philosophy: the US must win the AI race against China, and winning requires speed. Any regulation that slows development is, in this view, a strategic liability. The framework's language about removing "outdated or unnecessary barriers to innovation" and "accelerating AI deployment across industry sectors" reads as a direct translation of that worldview into policy. The word "unnecessary" is doing a lot of work in that phrase - it implies that federal regulators, rather than markets or affected communities, will determine what counts as necessary.

What makes this politically interesting is the coalition it creates. The framework's innovation provisions are exactly what the largest AI companies - OpenAI, Google, Anthropic, Meta, Microsoft - have wanted for years. All of them have lobbied against state AI bills. All of them face potential liability from the growing wave of lawsuits challenging their training practices, their products' harms, and their safety claims. The federal framework addresses each of those concerns in their favor.

The political cost, if any, will come from the consumer protection void the framework leaves open. The child safety provisions are so soft as to be nearly unenforceable. The copyright compromise will anger creators. The free speech provisions, while framed as anti-censorship, could complicate platform moderation in ways that open new regulatory battles entirely. There is also the question of small business exposure - when AI systems cause harm in employment, lending, or insurance, the framework's liability shield benefits primarily the developers, not the businesses deploying those tools.

The AI Race Framing - and Its Blind Spots

The framework repeatedly invokes the US-China AI competition as the justification for deregulation. The logic goes: China is not burdened by state-by-state regulation, so America cannot afford to be either. This is the same argument used to justify many of the administration's economic policies, and it has the same flaw - it treats systemic risk management as a competitive weakness rather than a foundation for long-term reliability.

The framework does contain one genuinely substantive energy provision. It calls on Congress to streamline permitting for data centers to generate power on-site, and explicitly states that "ratepayers should not foot the bill for data centers." The context here is the extraordinary energy demands of modern AI infrastructure. Training a single large language model can consume as much electricity as dozens of US households use in a year. Data center construction has been straining power grids from Virginia to Texas, with utility companies warning about capacity shortfalls in multiple states.

This provision serves both the industry (faster buildout) and ordinary consumers (utility cost protection) - the rare genuinely bipartisan element in an otherwise ideologically skewed document. It also signals an awareness that the political coalition needed to pass any AI legislation will need to include constituents beyond Silicon Valley investors and startup founders.

The energy angle also reveals something the framework does not discuss openly: AI development at the scale being planned by US companies requires infrastructure investment that no startup can fund alone. When the government streamlines permitting for on-site power generation, it is not just helping data center operators - it is helping a specific handful of companies that are already large enough to build private power generation. The barrier-to-entry effect is real, and it runs in the direction of consolidation rather than competition.

"The Federal government is uniquely positioned to set a consistent national policy that enables us to win the AI race and deliver its benefits to the American people, while effectively addressing the policy challenges that accompany this transformative technology."

- White House National AI Legislative Framework, March 20, 2026

Who Wins, Who Loses, and What Comes Next

The framework is not legislation. It is a blueprint sent to Congress - an agenda, not a mandate. Congress would still need to pass actual bills, and AI legislation has stalled at the federal level repeatedly since 2022. What the framework does is signal intent, and signal it loudly enough that states will think twice before passing new AI laws while federal legislation is being drafted.

That chilling effect may be the framework's most immediate practical impact. State legislators who were planning to move on AI bias auditing or algorithmic accountability bills now face the prospect of those laws being preempted before they take effect. The uncertainty alone is enough to slow state-level action - which is likely exactly the point. A two-year freeze on state AI legislation, even if no federal bill passes, is a significant win for companies that have been fighting those laws in state capitals.

The winners are obvious: AI companies facing state lawsuits or upcoming regulation; data center operators that want faster permitting; AI startups that prefer navigating one federal regime to fifty state ones. OpenAI, Google, Microsoft, Meta, and the smaller players circling them all benefit from the administration's stance.

The losers are more diffuse and less organized. They include: the states that have spent years building out AI regulatory capacity; consumer advocates who have been relying on state attorneys general to fill the federal void; creative workers whose copyright claims against AI companies will face a less hospitable legal environment; and ordinary people harmed by AI systems who will find their recourse narrowed if the liability shield gets codified into federal law. The harm is real but distributed - exactly the kind of harm that is easiest to ignore in a policy debate dominated by well-funded industry voices.

The international dimension matters too. The EU's AI Act - which came into force in 2024 and is now being implemented - takes the opposite approach: risk-based regulation with binding requirements, independent auditing, and real penalties for violations. American companies operating in Europe will face those requirements regardless of what US law says. The federal preemption framework does nothing to prepare American industry for the EU's expectations. If anything, by signaling that safety requirements are optional, it could widen the regulatory arbitrage gap that European policymakers are already watching warily. Brussels and Washington are now heading in opposite directions, and the companies caught in between will need to maintain two compliance regimes regardless.

There is also a timing problem. The framework was released on a Friday afternoon, the same day that court filings in Anthropic's lawsuit against the Pentagon revealed that the Pentagon had told Anthropic the two sides were "very close" on the exact issues cited as national security threats - and had said so one week after publicly declaring the relationship finished. The AI policy ecosystem is fragmented, contradictory, and moving faster than any framework can track.

The Second-Order Effects Nobody Is Talking About

The framework contains one gesture toward competition: it mentions facilitating "broad access to the testing environments needed to build and deploy world-class AI systems." This is a reference to AI compute access - the argument that small companies and researchers cannot compete when the frontier is dominated by those who can afford to train hundred-billion-parameter models on proprietary infrastructure.

But the framework does not propose any actual mechanism to address compute concentration. No public compute program, no anti-monopoly provisions for AI infrastructure, no requirements that frontier labs share research or model weights. The competition provision is purely rhetorical - it names the problem while proposing no solution. This is not accidental. The people shaping this policy are the same venture capitalists who benefit from concentrated AI power. True compute democratization would undermine the investment thesis of the firms that built this administration's AI policy apparatus.

The free speech provision also deserves closer reading. The framework instructs Congress to "prevent the United States government from coercing technology providers, including AI providers, to ban, compel, or alter content based on partisan or ideological agendas." This sounds reasonable in isolation. But the framing specifically targets government action, not platform action. It provides no protection against AI companies themselves making ideological choices about what their models will and will not say - choices that, as AI becomes embedded in search, writing assistance, healthcare recommendations, and legal research, carry significant consequences for public knowledge and democratic discourse.

The framework also says nothing about transparency. There are no proposed requirements for AI companies to disclose what their models were trained on, what risks they identified internally, what safety testing they conducted, or what failure modes they documented. In an era when AI systems are being deployed in healthcare diagnosis, criminal sentencing recommendations, hiring decisions, and credit scoring, that silence is not a neutral omission. It is a choice. It reflects a policy view that accountability is an obstacle to innovation rather than a prerequisite for trust.

Perhaps the most significant second-order effect involves the Anthropic lawsuit currently playing out in federal court. The AI company is suing the Pentagon over what it describes as First Amendment retaliation - the claim that it was designated a national security risk because of its publicly stated positions on AI safety and autonomous weapons. The federal framework, by signaling that the administration's AI policy prioritizes growth and innovation over safety constraints, adds political context to what the Pentagon did. A hearing in the Anthropic case is scheduled for March 24 in San Francisco. The framework dropped four days earlier.

What This Means for the Next 12 Months

Realistically, the framework will not become law in 2026. Congress has a packed agenda, the Senate's filibuster math is difficult, and AI legislation has historically collapsed under the weight of competing industry interests once actual bill text forces lobbyists to choose sides on specifics. What the framework does is set the terms of the debate and create the political expectation that a federal standard is coming - which is itself enough to chill state action.

States that were planning aggressive AI legislation will now need to calculate whether their laws will survive a federal challenge. Some - California, New York, Colorado - will pass them anyway and fight in court. Others will wait and see, which means a protection gap that could last years while the federal process grinds forward. That gap benefits the industry at the expense of everyone else.

The EU-US regulatory divergence will widen further. European companies and subsidiaries of American firms operating in Europe will face the AI Act's requirements while American domestic players operate under much softer rules. This creates genuine competitive complexity - not the simplicity the framework promises. It also creates an incentive for American companies to run their most aggressive AI deployments in jurisdictions with the least oversight, something the framework does nothing to address.

The most closely watched development in the coming months will be how Congress interprets the framework's liability shield language. If it gets codified into law, it would represent the largest single rollback of AI accountability ever enacted - rivaling the practical scope of Section 230's passage in the 1990s. The AI industry's lobbying apparatus knows this. It will push hard to make it happen before a potentially less friendly Congress takes over in January 2027.

The framework is a bet. It bets that speed produces better outcomes than safety, that federal control produces better outcomes than state experimentation, and that market forces produce better outcomes than mandated accountability. Each of those bets might be right in specific contexts and circumstances. The history of technology governance - from social media moderation to financial technology to pharmaceutical approval - suggests they are rarely all right simultaneously, and that the costs of getting them wrong fall heaviest on the people with the least political power. The document dropped quietly on a Friday. The consequences will be loud for years to come.
