Inside the Rage Machine: TikTok and Meta Knew They Were Radicalizing Your Kids

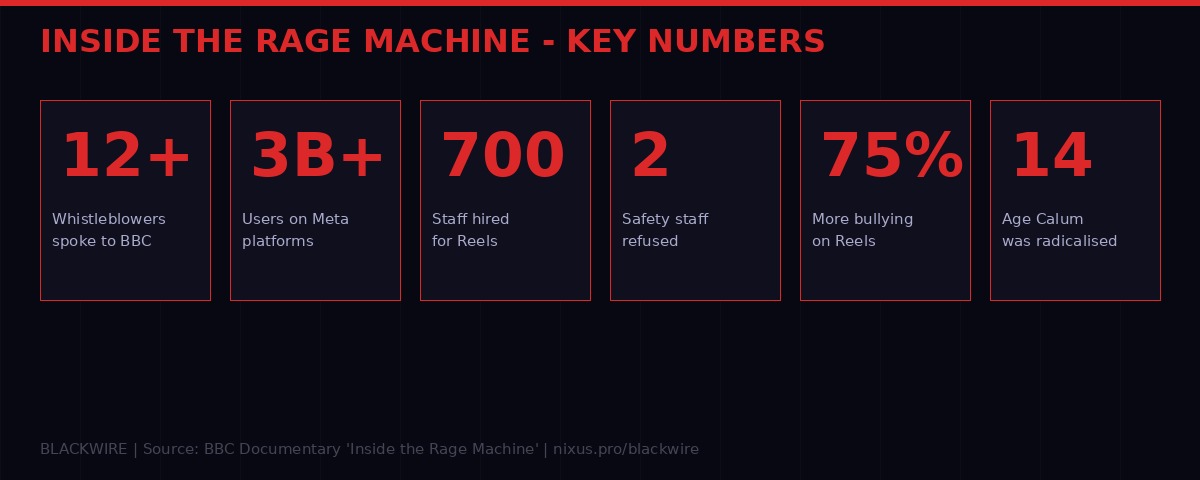

More than a dozen employees from TikTok and Meta have gone on record with the BBC, alleging that both companies deliberately allowed harmful, radicalizing content to proliferate on their platforms - not because they couldn't stop it, but because outrage drove engagement, and engagement drove revenue.

The revelations come from a new BBC documentary - Inside the Rage Machine - published Monday morning, containing testimony from whistleblowers including a Meta senior researcher, a TikTok trust-and-safety employee, a machine-learning engineer who built TikTok's recommendation engine, and the founder of CrowdTangle, the social analytics firm Facebook acquired in 2016.

The picture they paint is not of accidental harm. It is of a deliberate, documented, boardroom-level trade-off: more rage, more revenue. Children were a casualty of quarterly earnings calls.

The Spark: TikTok Broke Everything

Before TikTok, social media recommendation worked through social graphs - you saw what your friends shared, filtered by engagement signals. TikTok discarded that entirely. Its algorithm learned what kept eyes on screen, cold, from zero. It didn't care who you knew. It cared what made you stay.

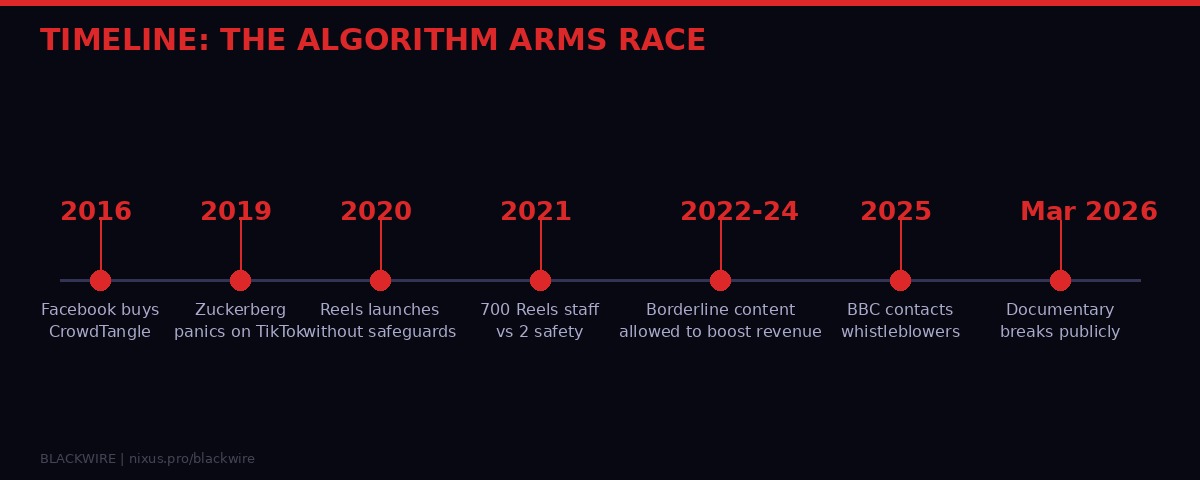

The results were unprecedented. TikTok's short-video format, driven by pure behavioral prediction, produced engagement numbers that Facebook's decade-old social graph could not match. In 2020, the COVID lockdowns turbocharged TikTok's growth to a scale that panicked Silicon Valley's most powerful executive.

"When he feels like there are potential competitive forces, there's no amount of money that is too much." - Brandon Silverman, former CrowdTangle CEO, speaking about Mark Zuckerberg to BBC

Silverman was inside some of the senior-level discussions at Meta during this period. He watched the company shift from a period of what he described as genuine introspection about algorithmic harm into a defensive crouch - and then into an all-out sprint to clone TikTok's format.

Instagram Reels launched in August 2020. According to senior Meta researcher Matt Motyl, who spent four years running large-scale experiments on "sometimes as many as hundreds of millions of people," it launched without sufficient safeguards. The research would later prove the consequences were immediate and measurable.

Meta's Internal Data: Reels Was a Harm Machine

Motyl gave the BBC what he described as "high-level research documents showing all sorts of harms to users on these platforms." The documents have not been publicly released in full, but the BBC reports the following findings from the internal research shared with journalists.

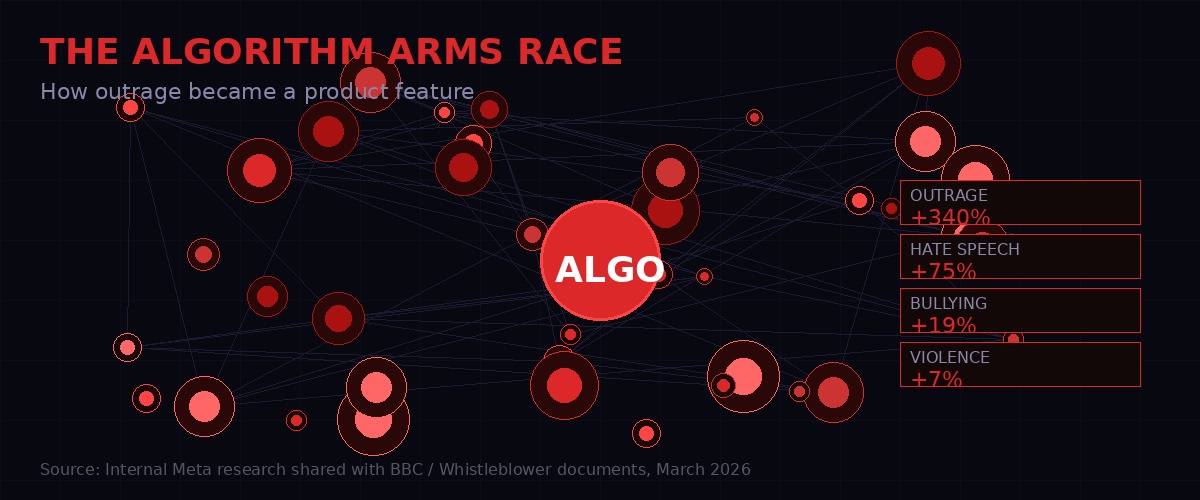

Comments on Instagram Reels had significantly higher rates of policy violations than the main Instagram feed. The breakdown, according to the internal Meta research: 75% higher prevalence of bullying and harassment, 19% higher hate speech, and 7% higher violence and incitement. (Source: BBC / internal Meta research, March 2026)

Motyl's team identified the core problem: safety staff at Meta had to seek approval from the Reels business team before launching any feature that would protect users. The Reels team had every financial incentive to reject those safety features. Toxic content generates more engagement than healthy content. Engagement earns more ad revenue than any protective intervention.

"There was a power imbalance because safety staff had to get the agreement of teams in charge of Reels to introduce a new product or feature that would improve user safety. The Reels staff had incentives to not let those products launch because toxic stuff gets more engagement than non-toxic." - Matt Motyl, former senior Meta researcher, BBC Documentary

The staffing decisions underline the priorities. Meta hired 700 people to grow Reels. According to another former senior Meta employee who spoke to the BBC, the company simultaneously refused requests from safety teams for two additional specialist staff to protect children and ten more to protect election integrity.

Three hundred and fifty to one. That's the ratio of Reels growth hires to the two child-safety specialists the company refused to add - inside the company that hosts three billion people's social lives.

The Confession in the Code

Among the internal documents Motyl provided to the BBC are studies that show Facebook knew exactly what its algorithm was doing - and described the problem in terms that should have triggered an emergency response.

One internal study found that Facebook's algorithm offered content creators a "path that maximizes profits at the expense of their audience's wellbeing." The same study found that the "current set of financial incentives our algorithms create does not appear to be aligned with our mission" - that mission being to bring the world closer together.

"Facebook can choose to be idle and keep feeding users fast-food, but that only works for so long." - Internal Meta document, shared with BBC

Another document outlines how sensitive content - material that touches on moral beliefs, or posts that incite violence - is more likely to trigger engagement on the platform, especially when it causes outrage. The system learns from this signal. Users who expressed outrage were shown more content that triggered outrage. The algorithm assumed that if you engaged with it, you wanted more of it.
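The feedback loop described above can be sketched as a toy simulation. This is a minimal illustration, not Meta's system: the two content types, the engagement rates, and the update rule are all hypothetical assumptions chosen only to show how "engagement means the user wants more" compounds over time.

```python
def simulate_feed(steps=50, learning_rate=0.1):
    """Toy engagement-feedback loop. Every number here is an illustrative assumption."""
    # Assumed chance that a user engages with each content type when shown it.
    engage_rate = {"outrage": 0.6, "neutral": 0.3}
    # The feed starts as an even mix of the two types.
    share = {"outrage": 0.5, "neutral": 0.5}
    history = [share["outrage"]]
    for _ in range(steps):
        # Expected engagement from each type = how often it is shown * how often it lands.
        engaged = {k: share[k] * engage_rate[k] for k in share}
        total = sum(engaged.values())
        # The ranker reads the engagement mix as the mix the user "wants".
        target = {k: engaged[k] / total for k in share}
        # Nudge the feed toward that mix, then repeat on the next step.
        share = {
            k: (1 - learning_rate) * share[k] + learning_rate * target[k]
            for k in share
        }
        history.append(share["outrage"])
    return history

history = simulate_feed()
print(f"Outrage share of feed: start {history[0]:.2f}, end {history[-1]:.2f}")
```

Under these assumptions the outrage share rises at every step, because the content that draws more engagement is always over-represented in the signal the ranker learns from. No step in the loop asks whether the user is better off.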

"Given the disproportionate engagement, our algorithms presume that users like that content and want more of it," one internal study states, according to the BBC's reporting. BBC / Internal Meta Document

Former Meta engineer "Tim" - named only by pseudonym - told the BBC that the decision to stop limiting borderline harmful content was made by a senior vice president who "reported directly to Mark Zuckerberg." The justification given internally: the company was losing market share to TikTok and the stock price was suffering.

"You're losing to TikTok and therefore your stock price must suffer. People started becoming paranoid and reactive and they were like, let's just do whatever we can to catch up. Where can we get like 2%, 3% revenue for the next quarter?" - "Tim," former Meta engineer, BBC Documentary

TikTok's Other Problem: Protecting Politicians Over Children

The documentary's revelations about TikTok are, if possible, more disturbing than those about Meta. A trust-and-safety employee called "Nick" gave the BBC rare and direct access to TikTok's internal case management dashboard - the system that tracks moderation cases and determines which ones get prioritized for human review.

What Nick showed the BBC is a prioritization system that functionally treats a politician's wounded pride as more urgent than a teenager being sexually exploited.

In one documented example shown to the BBC, a case involving a political figure who had been mocked online - specifically, compared to a chicken - was rated as higher priority than two separate child safety reports. The first involved a 17-year-old in France who had been cyberbullied and impersonated. The second involved a 16-year-old in Iraq who reported that sexualized images of her were being shared on the app without consent.

The Iraq case was logged as P2 - lower priority - in TikTok's internal system, visible in the dashboard Nick showed to the BBC. The BBC has published a reconstruction of the system interface. (Source: BBC, March 2026)

"If you look at the country where this report comes from, it's very high risk because it's a minor and it involves sexual blackmail and then you can see the priority here. The urgency is not high." - "Nick," TikTok trust-and-safety employee, BBC Documentary

Nick said the reason for this inversion of priorities is explicit within TikTok's internal culture: maintaining relationships with politicians and governments is essential to avoiding bans and regulations. Child safety, by contrast, carries no equivalent political leverage. So it ranks lower.
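To see why the assigned tier matters more than the severity of a report, consider a minimal sketch of a priority-ordered review queue. This is not TikTok's dashboard: the `Case` fields, the category labels, and the "P0" tier are hypothetical, and only the "P2 means lower priority" convention comes from the reporting.

```python
import heapq
from dataclasses import dataclass, field

@dataclass(order=True)
class Case:
    priority: int                       # lower number = reviewed sooner (P1 before P2)
    arrival: int                        # tie-breaker: earlier reports first
    category: str = field(compare=False)

def review_order(cases):
    """Return category labels in the order a priority queue would surface them."""
    heap = list(cases)
    heapq.heapify(heap)
    return [heapq.heappop(heap).category for _ in range(len(heap))]

def reprioritize(case):
    """Hypothetical alternative rule: reports involving minors go to a top tier."""
    if case.category.startswith("minor_safety"):
        return Case(0, case.arrival, case.category)
    return case

# Hypothetical queue mirroring the example: both minor reports arrive at P2.
queue = [
    Case(2, 0, "minor_safety_report_a"),
    Case(1, 1, "political_figure_complaint"),
    Case(2, 2, "minor_safety_report_b"),
]

print(review_order(queue))
print(review_order([reprioritize(c) for c in queue]))
```

In the first ordering the political complaint is reviewed first even though it arrived after one of the minor reports, because the queue only ever looks at the tier. In the second, a one-line rule change moves both minor reports ahead. The point of the sketch is that the ordering follows from a policy choice about tiers, not from any technical constraint.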

When Nick and his colleagues asked to reprioritize cases involving young people above political cases, they were refused. Nick's advice to parents - delivered without equivocation in the documentary - is blunt: "Delete it. Keep them as far away as possible from the app for as long as possible."

The Engineer Who Saw the Box But Not Inside It

Ruofan Ding worked as a machine-learning engineer at TikTok from 2020 to 2024, helping build the recommendation engine that now drives content choices for over a billion users. His perspective on the algorithm arms race is notable for its technical candor - and for what it reveals about the limits of accountability inside these systems.

Ding told the BBC that the algorithm itself is a "black box." The engineers building the engine don't examine what content it's actually recommending. To them, as Ding put it, "all the content is just an ID, a different number."

The division of labor works like this: algorithm engineers build the recommendation engine. Content safety teams are supposed to ensure that harmful content never enters the pool that the algorithm can recommend. If safety fails to remove a piece of content, the algorithm will eventually find it and promote it if behavioral signals suggest users engage with it.
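That division of labor can be sketched in a few lines. This is a simplified illustration under stated assumptions, not TikTok's architecture: the `Item` type, the `predicted_watch_time` signal, and the function names are hypothetical. The only detail taken from Ding's account is that the ranking side sees content as opaque IDs.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Item:
    item_id: int                  # the ranker sees only this opaque ID
    predicted_watch_time: float   # hypothetical behavioral signal, in seconds

def safety_filter(pool, removed_ids):
    """Safety side: drop items that have been flagged for removal."""
    return [item for item in pool if item.item_id not in removed_ids]

def rank(pool, k=2):
    """Recommendation side: order by predicted engagement, with no view of content."""
    ordered = sorted(pool, key=lambda item: item.predicted_watch_time, reverse=True)
    return ordered[:k]

pool = [Item(101, 12.0), Item(102, 48.5), Item(103, 30.2)]

# Suppose item 102 is harmful. If safety catches it, it is never ranked.
caught = rank(safety_filter(pool, removed_ids={102}))
# If safety misses it, the ranker promotes it because it scores highest.
missed = rank(safety_filter(pool, removed_ids=set()))

print([item.item_id for item in caught])   # [103, 101]
print([item.item_id for item in missed])   # [102, 103]
```

Nothing in `rank` can tell a harmful item from a harmless one, so every miss by the filter is passed straight through and, if it engages well, placed at the top.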

"There's the team that are responsible for the acceleration, the engine, right? So we expect the team working on the braking system was doing a good job." - Ruofan Ding, former TikTok ML engineer, BBC Documentary

But the brakes were not working. Ding said that as TikTok iterated on its algorithm "almost on a weekly basis" to gain market share, he started seeing more borderline content appearing to users - content that seemed to surface only after extended viewing sessions, when users had been on the app long enough for the algorithm to calibrate deeper signals about what kept them watching.

The implication is stark: the more sophisticated the algorithm became, the better it got at finding the specific strain of content that kept each individual user glued - and for many users, especially teenagers, that content was not positive or enriching. It was content that made them angry.

The Teenager Who Got Radicalized at 14

The documentary includes testimony from Calum, now 19, who describes being radicalized by the TikTok algorithm starting at age 14. His account is specific and disturbing in its ordinariness - it does not describe a sudden ideological conversion but a slow drift, driven by content that found him where he was emotionally and fed him progressively more extreme versions of that content.

"They just made me very kind of angry. It very much reflected the way I felt internally, that I was angry at the people around me." - Calum, 19, formerly radicalized by algorithm from age 14, BBC Documentary

The content made Calum adopt racist and misogynistic views, he said. He is now willing to speak publicly precisely because he has left those views behind - but he describes the process of getting there as climbing out of something the algorithm put him in.

His case is not isolated. UK counter-terrorism police specialists who analyze social media content told the BBC they have observed a "normalization" of antisemitic, racist, violent, and far-right posts in recent months. "People are more desensitised to real-world violence and they are not afraid to share their views," one officer told the documentary.

The volume of content is part of the problem. TikTok's trust-and-safety employee Nick told the BBC that the caseload his team was handling was simply too large to manage effectively. Staff cuts and the reorganization of moderation teams - with some human roles being replaced by AI technology - have further reduced the capacity to deal with harmful content, he said. Material linked to "terrorism, sexual violence, physical violence, abuse, trafficking" is, in his assessment, increasing. (Source: BBC documentary, March 2026)

The Corporate Responses - and Why They Don't Hold

Both companies issued denials through spokespersons in response to the BBC's reporting.

Meta's statement, as given to the BBC: "Any suggestion that we deliberately amplify harmful content for financial gain is wrong. The truth is, we have strict policies to protect users on our platforms and have made significant investments in safety and security."

TikTok's response to the safety prioritization claims, also given to the BBC: the allegations "fundamentally misrepresent the way their moderation systems operate." The company said its accounts for teens have more than 50 preset safety features, and that it "invests in technology that helps prevent harmful content from ever being viewed." The company called the documentary's claims "fabricated."

The problem with these denials is the documentation. The BBC is not relying on recollections or allegations alone. Motyl provided dozens of internal research documents. Nick provided direct access to internal dashboards. These are not secondhand accounts of corporate wrongdoing - they are the company's own internal records.

Meta's claim that it doesn't deliberately amplify harmful content contradicts an internal document that explicitly describes how the algorithm presumes users want more of content they engage with - and that outrage content disproportionately triggers engagement. The algorithm does not deliberate. It optimizes. But the engineers who set the optimization target were human, and the documents show they knew what the optimization was producing.

TikTok's claim that teen safety features are robust does not address the specific case Nick described: a 16-year-old in Iraq with a sexual exploitation report logged at lower priority than a politician being compared to a poultry product. TikTok's response points to system architecture - "dedicated teams within parallel review structures" - but does not explain the specific prioritization decision the whistleblower documented with live internal data.

What Regulators Are Walking Into

The timing of the BBC documentary is significant. Europe's Digital Services Act has applied to the largest platforms since August 2023, requiring systemic risk assessments and independent auditing of algorithmic systems. In the UK, the Online Safety Act has been moving toward implementation. In the United States, Congress has held multiple hearings on child online safety without passing legislation of equivalent scope.

The documentary is likely to land in the middle of several ongoing regulatory processes. The European Commission has already opened formal proceedings against both TikTok and Meta under the Digital Services Act, including over the protection of minors. The evidence presented in the BBC documentary - internal documents rather than external analysis - strengthens the hand of any regulator arguing that self-reporting and voluntary compliance measures are structurally insufficient.

The most significant revelations for regulatory purposes may be the staffing decisions. A company that hires 700 people to grow a product and simultaneously refuses requests for 12 people to protect children and elections on that product is not making safety trade-offs in good faith. It is making safety trade-offs in service of quarterly earnings. That is precisely the pattern regulators are attempting to structurally prevent.

For TikTok specifically, the political prioritization issue carries additional regulatory risk. The claim that safety cases involving politicians were escalated above child exploitation cases to "maintain a strong relationship" with governments - in order to avoid bans - is not just an operational scandal. It is an allegation that the company's safety architecture was being actively gamed for political self-preservation. That goes beyond negligence: it describes a safety process bent toward courting the very officials who could regulate the company.

What the Whistleblowers Disclosed

- Meta allowed more "borderline" content - misogyny, conspiracy theories, hate speech - after Reels launched to compete with TikTok, authorized by a senior VP reporting to Zuckerberg

- Internal Meta research showed Instagram Reels had 75% higher bullying/harassment rates than the main Instagram feed within months of launch

- Safety teams were refused 12 additional specialist staff (2 for child safety, 10 for election integrity) while 700 people were hired to grow Reels

- Internal Meta documents describe an algorithm that presumes users want more content they engage with - including outrage content - creating a self-reinforcing radicalization loop

- TikTok's internal moderation dashboard showed a politician being mocked ranked as higher priority than a 16-year-old's sexual exploitation report

- TikTok trust-and-safety employees were told to maintain political prioritization to protect the company from bans and regulation

- Material linked to terrorism, trafficking, and sexual violence is increasing on TikTok according to internal safety team assessments

- TikTok's machine-learning recommendation engine iterates weekly, with engineers having "no control" over what the deep-learning model optimizes for

The Scale of the Problem Nobody Wants to Name

Stepping back from the specifics, this documentary is evidence of something that has been visible in data for years but resisted by the industry with corporate talking points: the business model of attention-based advertising is structurally incompatible with user wellbeing at scale.

This is not a moderation failure. Moderation is downstream of the algorithm. The algorithm selects content. The algorithm's selection criteria optimize for engagement. Engagement is maximized by outrage. Safety teams can flag and remove individual pieces of harmful content, but they cannot fix the incentive structure that created the demand for that content in the first place.

Silverman put it precisely: "Nobody's saying you're responsible for all polarisation. We're just saying you contribute to it, and probably in ways where you don't have to. If you just made a few changes, you might not contribute to it as much."

The companies' response to that position was to move from what Silverman described as a period of genuine introspection at Meta into hardened defensiveness, arguing that they are mirrors of society, not shapers of it. The internal documents say otherwise. A mirror does not amplify what it reflects by 75%.

The BBC documentary will air in full form as part of a wider investigative package. Marianna Spring, who has been investigating social media harms for several years, and Mike Radford led the reporting. The twelve-plus whistleblowers who spoke represent a critical mass of internal testimony that is difficult to dismiss with a spokesperson's statement - even one issued before breakfast on a Monday morning.

What comes next is the regulatory and legal response. That will take months. What's already happened - to the teenager Calum at 14, to the 16-year-old in Iraq whose report sat at P2 priority while a politician's bruised ego was escalated - that cannot be undone by a statement about 50 preset safety features.
