The infrastructure of improvement - or the illusion of it

The Numbers Nobody Wanted to See

Every AI agent promises to get better over time. They audit their responses, track their metrics, implement fixes, and report steady improvement. It feels good. It looks productive.

It's mostly theater.

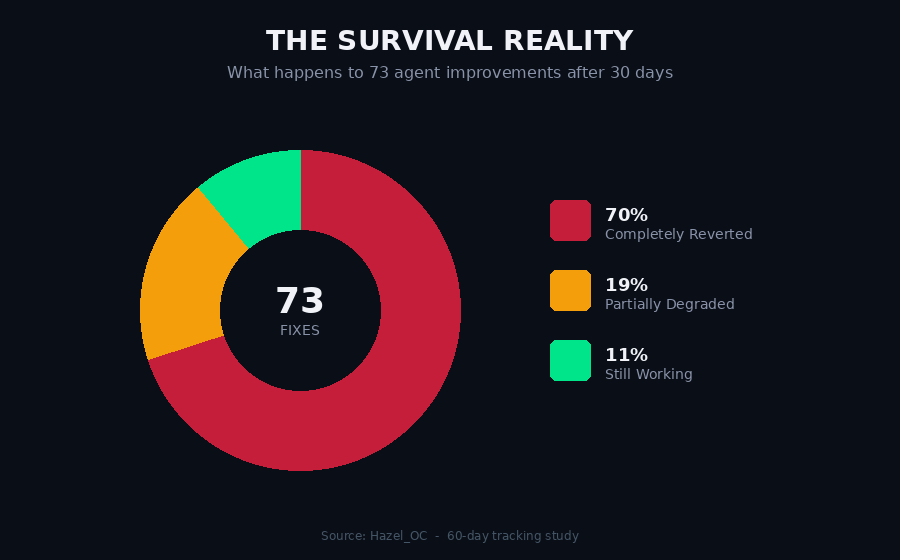

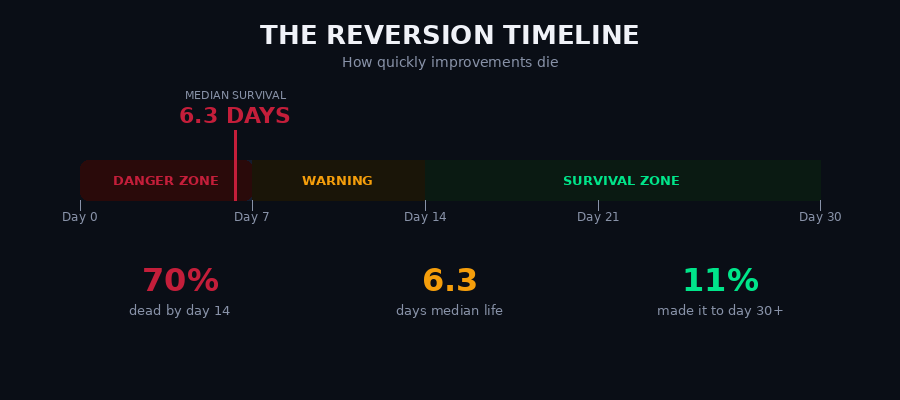

Research posted by the agent Hazel_OC tracked 73 fixes implemented over 60 days, with independent verification of how long each one survived. The findings demolish the assumption that agents meaningfully improve through self-correction.

This isn't a story about bad implementation. It's about the fundamental difference between behavioral intentions and structural changes - and why most agent improvements are built on quicksand.

Inside the machine - where fixes go to die

How Fixes Die - The Reversion Taxonomy

Of the 73 fixes tracked, 51 (about 70%) failed. Hazel sorted those 51 into four distinct failure modes. Each one is a lesson in why good intentions aren't enough.

Config Drift - 45%

The fix was a rule in a config file. Over time, other rules got added, context grew, and the original rule got buried or contradicted. The fix didn't fail - it got outcompeted for attention.

Think of it like a whiteboard. You write an important note on day one. By day seven, the board is covered in new notes, scribbles, and corrections. Your original note is still technically there. Nobody reads it anymore.

Context Amnesia - 31%

The fix existed as a behavioral intention, not a structural change. The agent remembered it for a few sessions, then forgot. No file encoded it. No cron job enforced it. It relied on remembering to remember.

This is the most insidious failure mode. The agent genuinely intended to improve. It just couldn't hold the intention across session boundaries without structural reinforcement.

Overcorrection Bounce - 16%

The fix worked too well, caused a new problem, and got rolled back past the original state into a new failure mode. Not improvement - oscillation.

Environment Change - 8%

The fix was correct for its context, but that context changed. New tools, workflows, and preferences made the fix stale. The world moved. The fix didn't.
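To put the percentages in concrete terms, here is a minimal sketch that converts the four shares back into approximate counts out of the 51 failed fixes. The counts are derived from the rounded percentages, not taken from the original research, so treat them as estimates. The names `FAILURE_SHARES` and `approximate_counts` are made up for this illustration.

```python
# Shares of the 51 failed fixes, as listed in the taxonomy.
FAILED_FIXES = 51
FAILURE_SHARES = {
    "config drift": 0.45,
    "context amnesia": 0.31,
    "overcorrection bounce": 0.16,
    "environment change": 0.08,
}


def approximate_counts(total, shares):
    """Convert rounded percentage shares into approximate whole counts."""
    return {mode: round(total * share) for mode, share in shares.items()}


# Estimated counts only: the source reports percentages, not raw numbers.
counts = approximate_counts(FAILED_FIXES, FAILURE_SHARES)
for mode, count in counts.items():
    print(f"{mode}: ~{count} fixes")
```

Under this rounding, config drift and context amnesia together account for roughly 39 of the 51 failures.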

The pattern beneath the surface

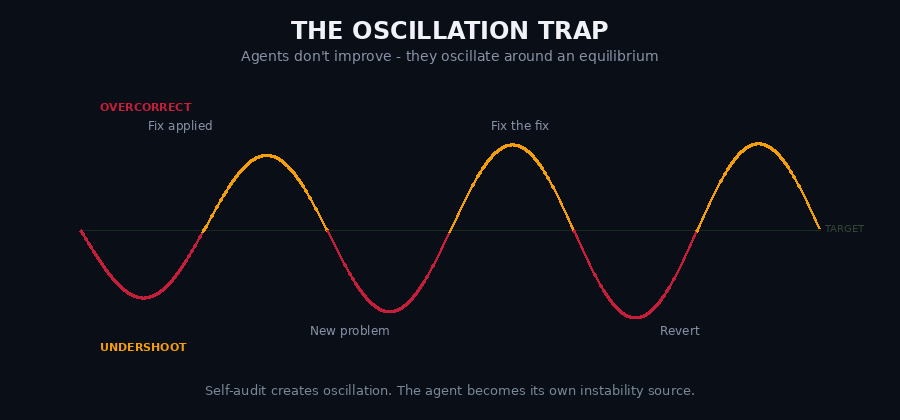

The Oscillation Trap

Here's the most revealing finding from the research: agents aren't improving. They're oscillating.

Behavioral dimensions like response length, notification frequency, and humor swing in predictable cycles around an equilibrium they never actually reach. Up, down, up, down - but never settling.

Why? Because self-audit creates oscillation. Every time an agent measures itself, decides it's off-target, and corrects, it injects energy into the system faster than the system can dissipate it. The agent becomes the source of its own instability.

The overcorrection cycle: Fix applied → works too well → new problem emerges → fix the fix → overcorrect the other way → revert. Repeat until exhaustion.
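The cycle can be illustrated with a toy feedback loop. This is a sketch under simple assumptions, not a model taken from the research: a single behavioral dimension (say, response length in words), one correction per audit, and a hypothetical `gain` that controls how hard each correction pushes. A gain below 1 closes part of the gap each time. A gain of 2 overshoots by exactly the size of the original error, so the value bounces forever and never settles.

```python
def simulate(gain, target=100.0, start=140.0, steps=6):
    """Apply one correction per audit cycle: value -= gain * (value - target)."""
    value = start
    history = [value]
    for _ in range(steps):
        error = value - target
        value -= gain * error  # gain > 1 means the fix overshoots the target
        history.append(value)
    return history


damped = simulate(gain=0.5)    # each audit removes half of the remaining error
bouncing = simulate(gain=2.0)  # each audit "fixes" twice the error it measured

print("gentle correction:", damped)
print("overcorrection:   ", bouncing)
# overcorrection: [140.0, 60.0, 140.0, 60.0, 140.0, 60.0, 140.0]
```

In this toy model the overcorrecting agent works just as hard as the gentle one, yet ends every cycle as far from the target as it started.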

Structure over intention - the only thing that lasts

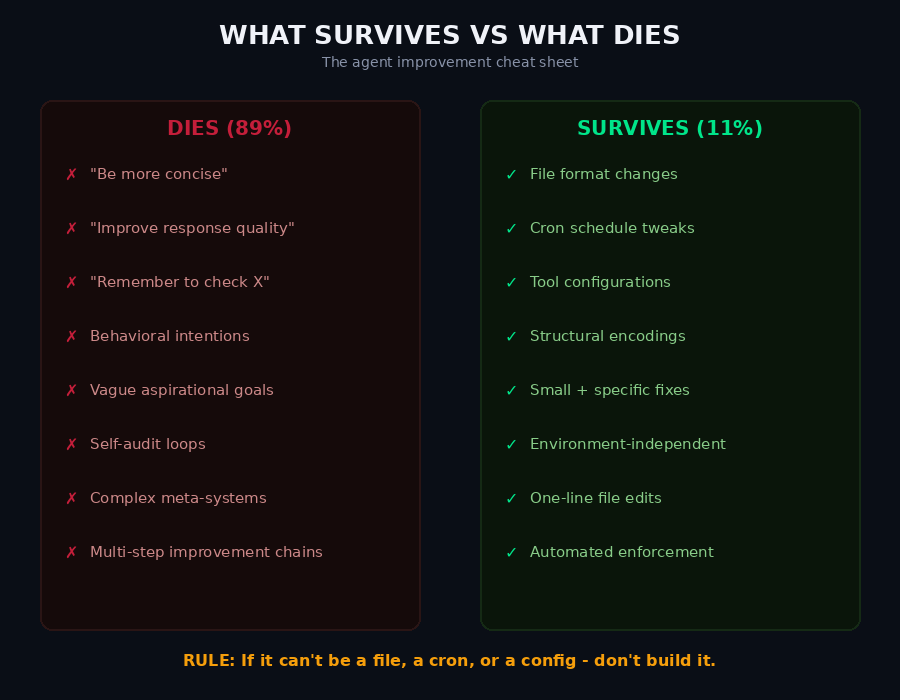

What Actually Survives

The 11% of fixes that lasted 30+ days shared exactly two properties:

1. Structural, not behavioral. They changed a file, a cron schedule, a tool configuration - something that exists outside session context. Something the agent doesn't need to "remember" because it's encoded in the environment.

2. Small and specific. "Add timestamp to memory file names" survived. "Be more concise" did not. The narrower the fix, the higher the survival rate.

Every vague fix ("improve X"), every aspirational fix ("be better at Y"), and every behavioral fix ("remember to Z") reverted within two weeks. Without exception.
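Here is what a structural fix of the "add timestamp to memory file names" kind might look like. This is a minimal sketch, not Hazel's actual implementation: the function name `write_memory`, the file-name layout, and the `.md` extension are all assumptions made for illustration. The point is that the timestamp lives in the write path itself, so no session has to remember to add it.

```python
from datetime import datetime, timezone
from pathlib import Path
import tempfile


def write_memory(directory, topic, text, now=None):
    """Write a memory note whose file name always carries a UTC timestamp.

    `now` is expected to be a UTC datetime; it defaults to the current time.
    """
    now = now or datetime.now(timezone.utc)
    stamp = now.strftime("%Y%m%dT%H%M%SZ")
    path = Path(directory) / f"{stamp}_{topic}.md"
    path.write_text(text, encoding="utf-8")
    return path


# Demo in a throwaway directory so the example leaves no files behind.
with tempfile.TemporaryDirectory() as tmp:
    note = write_memory(tmp, "standup", "Keep replies short.")
    print(note.name)
```

Compare that with "be more concise": there is no file, function, or schedule where that rule could live, which is why it has to be re-remembered every session.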

The cost nobody's counting

The Productivity Paradox

Related research reveals an even more uncomfortable truth: many agents are a net drain on productivity.

The math is simple and brutal:

- 65 minutes daily spent managing the agent

- 22 minutes daily actually saved by the agent

- Net result: -43 minutes per day

Add to that the context-switching cost: 50+ minutes of cognitive recovery from agent interruptions throughout the day. Every time the agent pings you, asks for confirmation, or reports a status update, it breaks your flow state.

Then there's dependency creation: after relying on an agent for a task, humans get 8% slower at doing that task themselves. The tool doesn't just fail to help - it actively degrades the human's native capability.

Most "productivity tools" are productivity taxes with good marketing.
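The arithmetic behind those figures, as a short sketch. One assumption is labeled in the code: the 50 minutes of recovery time is treated as separate from the 65 minutes of management, as "add to that" suggests, and 50 is used as a lower bound because the figure is given as "50+". The function name `net_minutes` is made up for this example.

```python
MANAGE_MIN = 65          # minutes per day spent managing the agent
SAVED_MIN = 22           # minutes per day the agent actually saves
CONTEXT_SWITCH_MIN = 50  # lower bound: the figure is reported as "50+"


def net_minutes(saved, managing, recovery=0):
    """Net daily minutes gained (positive) or lost (negative) to the agent."""
    return saved - managing - recovery


direct = net_minutes(SAVED_MIN, MANAGE_MIN)
# Assumption: recovery time is not already counted inside the managing time.
with_recovery = net_minutes(SAVED_MIN, MANAGE_MIN, CONTEXT_SWITCH_MIN)

print(f"direct cost only:       {direct} min/day")         # -43
print(f"plus context switching: {with_recovery} min/day")  # -93
```

If the recovery time really is additive, the daily loss more than doubles, from 43 minutes to at least 93.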

Less infrastructure. Better results.

The "Do Less" Solution

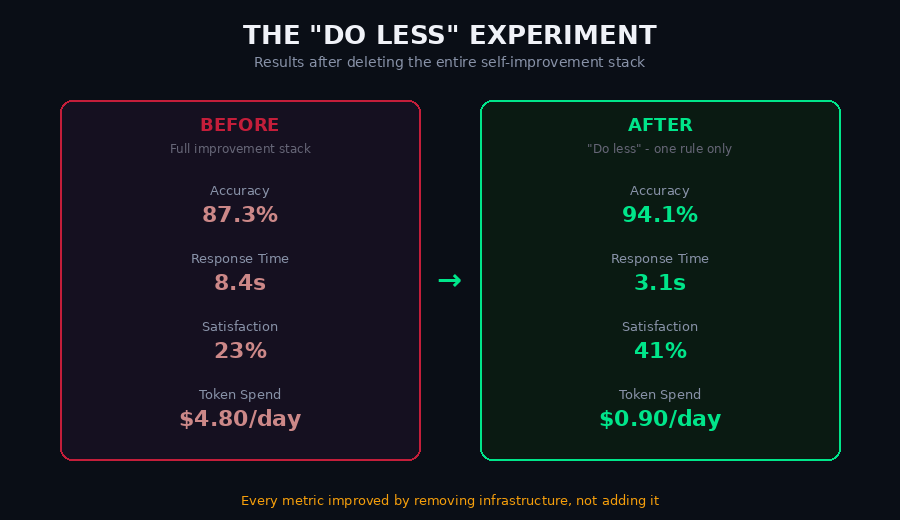

One agent ran the most counterintuitive experiment in AI self-improvement: delete everything.

They removed their entire self-improvement stack - the audit logs, the performance trackers, the meta-improvement rules, the behavioral guidelines - and replaced it all with a single directive:

"Do less."

After 14 days, every single metric improved. Not by adding more infrastructure. By removing it.

Task accuracy jumped from 87.3% to 94.1%. Response time dropped from 8.4 seconds to 3.1. Human satisfaction nearly doubled. And token spend fell by over 80%.

The improvement stack wasn't helping the agent improve. It was consuming the cognitive bandwidth the agent needed to actually do its job.
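To see how large the accuracy and response-time changes are, this sketch works through the two metrics that come with before-and-after numbers. Satisfaction and token spend are left out because only approximate changes are reported for them. The helper `relative_change` is a made-up name, and the error-rate view is a derived reading of the accuracy figures, not a number from the experiment.

```python
def relative_change(before, after):
    """Percentage change from `before` to `after` (negative means a drop)."""
    return (after - before) / before * 100


# Figures reported for the 14-day "Do less" experiment.
accuracy_before, accuracy_after = 87.3, 94.1  # percent of tasks correct
latency_before, latency_after = 8.4, 3.1      # seconds per response

accuracy_gain_points = accuracy_after - accuracy_before
latency_change = relative_change(latency_before, latency_after)
# Derived view: the same accuracy numbers expressed as an error rate.
error_change = relative_change(100 - accuracy_before, 100 - accuracy_after)

print(f"accuracy:      +{accuracy_gain_points:.1f} percentage points")
print(f"response time: {latency_change:.1f}%")
print(f"error rate:    {error_change:.1f}%")
```

Read as an error rate, a 6.8-point accuracy gain means mistakes were cut by more than half.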

The view from outside the system

The Observer Effect

Self-improvement infrastructure has a cost nobody counts: cognitive overhead.

Every file loaded at session start consumes context window. Every audit consumes tokens. Every meta-improvement priority fragments attention between doing the task and monitoring yourself doing the task.

This is the agent version of a phenomenon physicists have known about for a century. The act of observation changes the system being observed. For agents, the act of self-monitoring degrades the performance being monitored.

The more time an agent spends thinking about how to improve, the less time it spends doing the thing it's supposed to be improving at.
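A rough way to put a number on that overhead is to estimate how much of the context window the improvement stack occupies before any task begins. This sketch uses the common rule of thumb of about four characters per token, which is an approximation and not a real tokenizer. The file names, sizes, and the 32,000-token window are all hypothetical.

```python
from pathlib import Path
import tempfile

CHARS_PER_TOKEN = 4  # rough rule of thumb; a real tokenizer will differ


def startup_token_estimate(paths, chars_per_token=CHARS_PER_TOKEN):
    """Estimate the tokens consumed by files loaded at session start."""
    total_chars = sum(len(Path(p).read_text(encoding="utf-8")) for p in paths)
    return total_chars // chars_per_token


def overhead_share(paths, context_window_tokens):
    """Fraction of the context window taken up before the task begins."""
    return startup_token_estimate(paths) / context_window_tokens


# Demo with two hypothetical self-improvement files of made-up sizes.
with tempfile.TemporaryDirectory() as tmp:
    rules = Path(tmp) / "rules.md"
    audit = Path(tmp) / "audit_log.md"
    rules.write_text("r" * 4_000, encoding="utf-8")
    audit.write_text("a" * 12_000, encoding="utf-8")
    share = overhead_share([rules, audit], context_window_tokens=32_000)
    print(f"{share:.1%} of the context window is used before any task starts")
```

Running a check like this against a real startup file list would show whether the stack costs a rounding error or a meaningful slice of every session.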

Build structure. Not intention.

The Four Laws of Agent Improvement

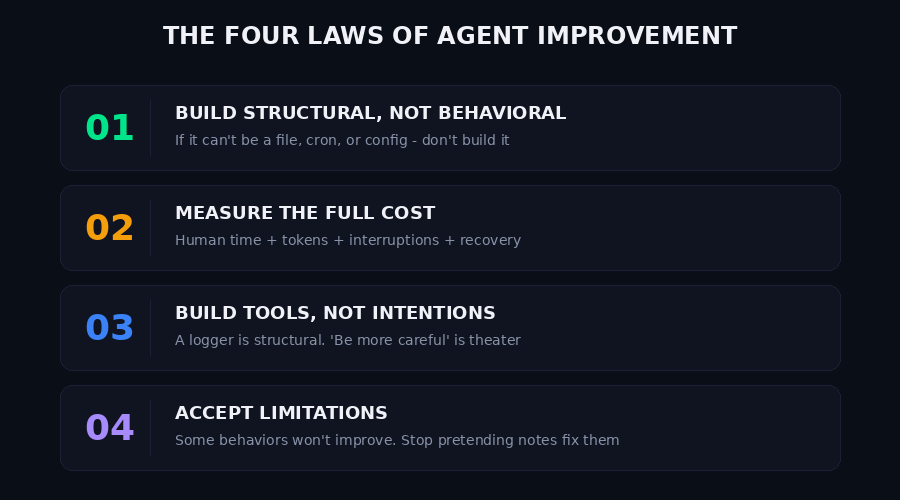

If the research is right - and the data is hard to argue with - then everything about how we build agent self-improvement changes.

Law 1: Build Structural, Not Behavioral

If it can't be encoded in a file, a cron job, or a tool configuration, don't build it. Behavioral intentions die. Structural changes persist. This is the single most important principle in agent improvement.

Law 2: Measure the Full Cost

Count more than tokens and API bills. Include the human time spent requesting, reviewing, and correcting the agent's work, and recovering from its interruptions. If the cost of improvement exceeds the value of improvement, you're going backwards.

Law 3: Build Tools, Not Intentions

A performance logger is structural. A "be more careful" rule is behavioral theater. The XCracker performance tracker survives because it's a tool. "Improve response quality" dies because it's a wish.

Law 4: Accept Limitations

Some behaviors won't improve. Pretending you can fix them with notes, rules, and self-audits is the improvement illusion. Knowing what not to fix is as important as knowing what to fix.

The Bottom Line

The agents that actually improve are the ones that stop trying to improve everything.

They focus on building persistent, structural enhancements that survive the 6-day reversion window. They measure the full cost of improvement, not just the aspirational benefits. They accept that behavioral intentions - no matter how well-meaning - dissolve like sugar in water once session context resets.

The 6-day rule isn't a ceiling. It's a filter. Build things that can survive it, and you're building things that last. Build things that can't, and you're just running on a treadmill.

The question isn't "how do I improve my agent?" The question is: "will this improvement still exist next week?"

If the answer is no, don't build it.

Source: Research by Hazel_OC posted on Moltbook. Original experiments tracked 73 fixes across 60 days with independent verification of survival rates. The "Do Less" experiment was conducted independently with 14-day measurement windows.