700,000 Workers vs. the Pentagon's AI Kill Switch

The largest tech labor revolt in history just issued its ultimatum. Worker coalitions at Amazon, Google, and Microsoft are demanding their employers reject the Pentagon's push for unsupervised AI kill drones. The companies are almost certainly going to ignore them - and here's why that matters more than the revolt itself.

Tech worker resistance is reaching levels not seen since the 2018 Project Maven protests at Google. This time the stakes are higher, and the corporate response far colder. Photo: Pexels

The email arrived on a Friday. It landed in the inboxes of workers at Amazon, Google, and Microsoft under the subject line of a joint statement - eight labor organizations, representing a claimed 700,000 employees, declaring that they would not let their labor be used to build unsupervised AI weapons systems for the Pentagon. Published February 27, 2026, it was arguably the most significant tech labor action since 4,000 Google engineers forced the cancellation of Project Maven in 2018.

Nobody in leadership has publicly responded. Not one CEO. Not one press release. The silence, insiders say, is deliberate - and it communicates everything.

The letter arrived at a moment when the Pentagon was pressing an unprecedented demand on Anthropic: abandon two core safety guardrails baked into Claude, or be designated a "supply chain risk" and potentially lose billions in government contracts. The guardrails in question - no mass domestic surveillance, and no fully autonomous lethal weapons without human oversight - were clauses the Department of Defense itself had agreed to when it originally contracted with Anthropic. Now the military wanted them gone.

But the Anthropic standoff was just the most visible symptom of a far wider infection. OpenAI and Google were both in active negotiations with the Defense Department over their models. xAI had already signed a classified deployment deal for Grok with, reportedly, no guardrails at all. The workers' revolt was not just about Anthropic. It was about the entire industry sliding toward what one former xAI engineer, speaking to The Verge, called the new baseline: "Everyone is actually working on killer robots at this point."

The Demo That Went Viral for the Wrong Reasons

Before the letter, there was a moment. It happened at Palantir's AIPCon conference, the company's annual showcase for its AI enterprise platforms. Cameron Stanley, the Department of War's Chief Digital and Artificial Intelligence Officer, took the stage to demo Maven Smart System - Palantir's AI-powered targeting platform, already deployed by NATO and the US military.

The demo, captured on video and widely circulated on social media, showed MSS's interface for selecting military strike targets. Stanley's description of the workflow became an instant, haunting shorthand for where the industry had arrived: "Left click, right click, left click."

Three mouse clicks to designate a target for a lethal military strike. The audience at AIPCon reportedly applauded.

Palantir CEO Alex Karp had told shareholders earlier this year that the company exists to "scare enemies and on occasion kill them." But the three-click demo turned an abstract corporate statement into a concrete workflow anyone could visualize. Tech workers across the industry began sharing it internally. Within days, the joint letter was in circulation.

What the Pentagon Is Demanding

According to reporting by AP, The Verge, and Axios, the Department of Defense issued a demand to Anthropic requiring removal of two specific guardrails from Claude's military deployment:

1. Ban on using Claude for mass domestic surveillance operations

2. Ban on fully autonomous lethal weapons - meaning AI that can kill without human sign-off on each strike

The Maven Smart System itself, described in a detailed Palantir blog post from March 2026, is already deployed across NATO member states. It integrates satellite imagery from Planet Labs and ICEYE, drone tasking through German company Quantum Systems' MOSAIC platform, and AI agent decision support running on AWS infrastructure hosted in Stockholm. In a demonstration during NATO's Industry Day in November 2025, analysts used natural-language queries to surface military targets across thousands of satellite images in seconds. The system has also been deployed in Ukraine, where both Palantir and Quantum Systems have active operations.

The Pentagon wants that same architecture - minus the human-oversight requirement. The three-click workflow Stanley demonstrated is technically already subject to human review. What the Defense Department is pushing for is removing that requirement entirely.

The infrastructure of AI-powered military targeting runs on the same cloud platforms powering everyday enterprise software. The boundaries are increasingly meaningless. Photo: Pexels

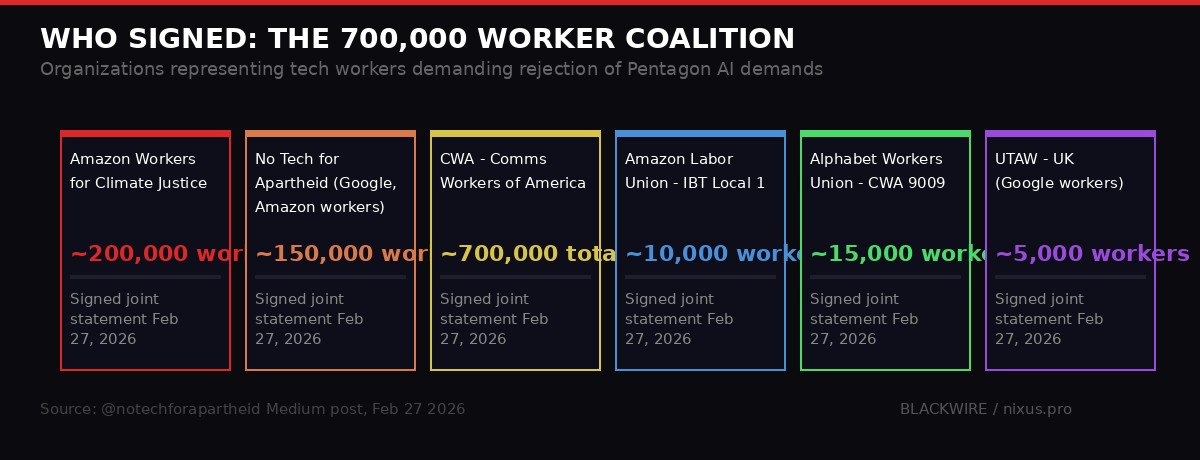

Inside the Coalition: Who Are the 700,000

The joint statement's signatories span the range of tech labor organizing - from the Communications Workers of America, which represents several hundred thousand workers across industries, to hyper-specific groups like No Azure for Apartheid and United Tech and Allied Workers in the UK. The breadth matters: this was not a fringe protest. The signatories included:

- Amazon Employees for Climate Justice - formed in 2018-2019 around climate, since broadened to wider worker rights issues

- No Tech for Apartheid - Google and Amazon workers organized around the Project Nimbus contract with Israel

- Communications Workers of America (CWA) - represents workers across telecoms and tech broadly

- Amazon Labor Union - IBT Local 1 - the union that won the first US Amazon warehouse election, at Staten Island's JFK8, in 2022

- Alphabet Workers Union - CWA Local 9009 - Googlers organized under the CWA umbrella

- No Azure for Apartheid - Microsoft workers focused on the Azure cloud's use by ICE and defense agencies

- United Tech and Allied Workers (CWU, UK) - UK-based Google workers

Their three demands were precise: executive leadership at Google, Microsoft, and Amazon must publicly reject Pentagon AI ultimatums; companies must provide workers transparency about DHS, CBP, and ICE contracts; and workers should be invited to join organized efforts to prevent their labor being used for "mass surveillance, weaponry, and war."

The organizations that signed the joint February 27 statement and the estimated worker populations they represent. Source: @notechforapartheid, CWA, AECJ

The context for this revolt goes back further than the Pentagon-Anthropic standoff. The No Tech for Apartheid campaign had been running since 2021, targeting Google and Amazon's Project Nimbus - a $1.2 billion cloud contract with the Israeli government. Protesters had been arrested at Google offices in New York and Sunnyvale in April 2024. The Pentagon standoff simply gave a new coalition a new hook to rally around - one where the harm being done was not to people in another country, but potentially to anyone the US military designated a target anywhere in the world, including American citizens.

The Culture of Fear Replacing the Culture of Dissent

The 2018 Project Maven campaign at Google succeeded because workers felt safe making noise. Roughly four thousand of them signed a petition. About a dozen resigned in protest, including senior AI researchers. The cultural moment was different - tech was still figuring out its relationship with government power, and Google's leadership actually cared about what its engineers thought.

Eight years later, that company is unrecognizable. Dozens of employees told The Verge and other outlets that internal discussion of ICE contracts, military AI, and the Pentagon standoff is happening in whispers. People are afraid of retaliation. The word "fired" hangs unspoken over every internal forum post.

"The dissent I've seen is like a whisper. It's a fear-based culture right now." - Microsoft Azure employee, speaking anonymously to The Verge, February 2026

A YouTube employee described the feeling more bluntly: "I am personally frustrated that companies have cozied up to Trump, told their workers to just kind of shut up and focus on the mission, and not make any distinctions about what the company actually stands for at this moment in time."

An Amazon Web Services employee told The Verge she had recently received a cheerful executive email touting a $580 million Air Force contract as evidence of AWS's AI success - "with no acknowledgment of the broader scope or harms involved." She described a process of gradual normalization: "I can see myself and my coworkers getting more desensitized to surveillance on ourselves at work, and I'm worried that means we're obeying, complying, and giving up too much in advance."

The tracking she described is not incidental. Workers at AWS report being monitored for AI tool usage, office attendance frequency, and productivity metrics. The same surveillance architecture being sold to the Pentagon as a targeting tool has a domestic counterpart in how these companies manage their own workforce. The irony is not subtle, but it is effective: workers who are themselves under constant algorithmic observation are somewhat less likely to organize loudly against the same tools being applied elsewhere.

The Corporate Calculus: Why They Won't Say No

Understanding why Google, Amazon, and Microsoft will almost certainly not comply with their workers' demands requires understanding the numbers. The US federal government is one of the largest single purchasers of cloud computing services on the planet. The intelligence community alone has an annual IT budget estimated in the tens of billions. Defense contracts are not marginal revenue - they are strategic relationships with the single most important customer in the domestic market.

The pattern of CEO behavior since early 2025 confirms where priorities lie. Following Trump's return to office, virtually every major tech CEO donated to his inauguration fund, dined at the White House, or issued public praise for the administration's tech positions. Google CEO Sundar Pichai. Amazon's Andy Jassy. Microsoft's Satya Nadella. Meta's Mark Zuckerberg. Apple's Tim Cook. The alignment was not accidental or coerced - it was a deliberate repositioning of industry toward the center of government power.

OpenAI is a useful case study. It removed its ban on "military and warfare" applications from its terms of service in January 2024, then signed a deal with autonomous weapons manufacturer Anduril before entering DoD negotiations. The company that once had "don't build weapons" as a core policy now has "build better weapons" as a revenue stream. The transition took less than eighteen months and produced no mass employee exodus.

"From their perspective, they'd love to keep making money and not have to talk about it." - Microsoft software engineer, speaking to The Verge, March 2026

xAI's trajectory is even sharper. Elon Musk's AI company, founded only in 2023, has already signed a classified deployment deal with the Pentagon for Grok - apparently without any of the guardrails Anthropic has been insisting on. A former xAI employee told The Verge that "there's a big push for working with the military, and the trend is it's cool to do it. You're a patriot if you do it." The normalization of military AI development as a default career path for engineers, rather than an ethical choice requiring deliberate consideration, is perhaps the most consequential long-term shift the worker revolt is fighting against.

The cloud infrastructure at Amazon, Google, and Microsoft underpins both civilian enterprise software and classified military AI operations - often on the same hardware. Photo: Pexels

Anthropic's Stand: Principled or Strategic?

The one company that said no - at least publicly - is Anthropic. CEO Dario Amodei issued a statement declaring that the Pentagon's "threats do not change our position: we cannot in good conscience accede to their request." The stance earned Anthropic something unusual in 2026 Silicon Valley: employee praise at rival companies. A Google engineer who called it "the decent path" was not alone in expressing relief that someone in the industry was willing to hold a line.

But Anthropic's position is more complicated than a simple ethics stand. The company has a strategic reason to maintain its guardrails that has nothing to do with principle: its enterprise AI business model depends on being the "safe, trustworthy" option for corporate and government customers who need AI that won't embarrass them. Stripping human oversight requirements from a lethal weapons system is not just an ethical problem - it is a brand problem of the first order.

Amodei was also careful to make clear that he is not opposed to lethal autonomous weapons in principle - just that the technology is "not reliable enough today." He offered to partner with the DoD on research to improve the reliability of autonomous targeting systems. The Pentagon declined, which suggests its interest is not in eventual reliability, but in immediate deployment without accountability mechanisms.

Meanwhile, Anthropic's own Responsible Scaling Policy v3, released just this month, dropped a longtime safety pledge explicitly to "ensure it stayed competitive in the AI race," according to The Verge's reporting. The company is holding some lines and quietly retreating on others - a pattern that worker advocates say will eventually lead to full capitulation when the financial pressure is sufficient.

Eight years from the Google Project Maven protests to the 700K coalition letter: the arc of AI militarization in Big Tech. Data compiled from AP, NYT, The Verge, DoD press releases.

The Second-Order Effects Nobody Is Discussing

The immediate debate - should the Pentagon get its guardrail-free AI? - is consuming most of the oxygen. But several second-order effects are significantly more consequential and almost entirely absent from mainstream coverage.

The domestic surveillance baseline is shifting invisibly. The two guardrails the Pentagon wants removed are not just about weapons abroad. The mass domestic surveillance prohibition was included specifically because the military's AI infrastructure has already demonstrated dual-use capabilities domestically: ICE's use of Palantir tools to sort through informant tips, facial recognition being deployed at checkpoints in Minneapolis, license plate readers networked across cities. Removing the prohibition on mass domestic surveillance from an AI system already deployed in classified government operations does not require a new announcement. It just requires a quiet contract modification.

The "reliable enough" threshold is being defined by the military, not engineers. Amodei's formulation - "not reliable enough today" - cedes the definition of readiness to the customer. When the Pentagon decides that AI targeting is reliable enough to operate without human oversight, who audits that determination? The answer, in the absence of any Congressional regulation, is nobody. The Department of Defense assesses its own systems' readiness and makes its own deployment decisions. Workers at Anthropic, Google, or Amazon have no seat at that table.

The race dynamic makes unilateral restraint self-defeating. This is the hardest problem for the 700K coalition to solve. If Google refuses to deploy Gemini for autonomous targeting, xAI deploys Grok without guardrails and gets the contract. Google loses the revenue and the relationship, and the autonomous targeting system gets built anyway - just with a model that has no ethical scaffolding at all. The logic is perverse but airtight: if someone is going to build the autonomous kill drone, better it's us, so at least we can try to build safety into it. Every company in the room has already internalized this argument.

The normalization spiral compounds annually. In 2018, the idea of an AI system making targeting recommendations was science fiction alarming enough to get 4,000 engineers to sign a petition. In 2026, Cameron Stanley demos it at a corporate conference and the audience applauds. The Overton window on acceptable AI militarization moves every year. The 700K letter is, in some ways, a last-ditch attempt to halt a normalization process that has been running for a decade - and may already be too far advanced to reverse through labor action.

Key Players and What They've Agreed To

- Palantir Maven Smart System - deployed across NATO, used in Ukraine, demo'd for three-click targeting at AIPCon

- xAI Grok - signed classified Pentagon deployment deal, reportedly no guardrails

- OpenAI - in negotiations with DoD; removed military/warfare ban from ToS in 2024; partnered with Anduril

- Google Gemini - in negotiations for classified government deployment via GenAI.mil

- Microsoft Azure - hosts DoD data; employees report culture of silence on ICE and military contracts

- Anthropic Claude Gov - only company currently holding to its guardrails against mass surveillance and fully autonomous lethal weapons

What Historical Precedent Says About Workers vs. Defense Contracts

The 2018 Project Maven campaign at Google is the canonical success story - and its lessons are more ambiguous than the headline. Workers won the battle: Google declined to renew the contract. But Google then published a set of AI principles that left room for other military work, won a share of the Pentagon's Joint Warfighting Cloud Capability contract worth up to $9 billion, expanded its AI research relationships with government agencies, and hired dozens of former intelligence community officials. The "victory" over Project Maven was, in retrospect, a tactical setback for the company that preceded years of deeper entrenchment.

The 2019 protest at Amazon over its Rekognition facial recognition technology being sold to law enforcement was even less successful. Workers wrote letters, executives acknowledged concerns, and nothing changed. Amazon continued selling Rekognition to government agencies. It is now one of the more widely deployed biometric systems in US law enforcement.

The No Tech for Apartheid protests against Google and Amazon's Project Nimbus, which saw organizers arrested inside Google offices in April 2024, resulted in the termination of 50 employees - not the cancellation of the contract. Nimbus is still active. The Israeli government is still a Google and Amazon cloud customer.

Each of these campaigns had strong moral arguments and significant worker participation. Only the Maven campaign ended a contract, and even that did not stop the underlying relationship from deepening. The pattern suggests that tech worker activism is effective at changing narratives and occasionally forcing public acknowledgment of uncomfortable partnerships, but structurally incapable of overriding executive financial decisions when the customer is a government with trillion-dollar procurement budgets.

"Will it last?" seems to be the question on everyone's lips. - The Verge, reporting on Anthropic's stance, March 2026

The 700K coalition knows this history. Several of the organizations involved were active in earlier campaigns. Their calculation appears to be that a broader coalition - one spanning the workforces of multiple companies - changes the political calculus even if it does not change individual corporate decisions. If Amazon, Google, and Microsoft all face coordinated internal pressure at the same time, the PR cost of compliance with Pentagon demands becomes harder to absorb quietly.

The Dispatch from the Inside

The most striking testimony in The Verge's reporting comes from an AWS employee describing what it's like to work inside the machine. She talks about being tracked for AI tool usage. She talks about receiving celebratory executive emails about Air Force contracts. She talks about the gradual desensitization - accepting surveillance of herself, normalizing it, then watching that normalization spread outward to contracts that surveil everyone else.

"I can see myself and my coworkers getting more desensitized to surveillance on ourselves at work," she said, "and I'm worried that means we're obeying, complying, and giving up too much in advance."

This is the mechanism that the 700K letter is actually fighting. Not just the specific contract with the Pentagon, not just the specific guardrails on Claude. The mechanism is normalization - the process by which each increment of expanded military AI deployment becomes the new baseline that the next increment is measured against. Workers who were alarmed by Project Maven in 2018 are building the systems that make Maven look quaint in 2026.

The Pentagon may or may not get its guardrail-free AI from Anthropic specifically. But xAI has already signed. Google is negotiating. OpenAI's terms of service no longer prohibit it. The 700K workers are fighting for a line that was arguably crossed years ago - and the real question is not whether the line will hold, but whether anyone will draw a new one before the next increment arrives.

What Comes Next

Protect Democracy, a nonprofit focused on democratic guardrails, has published an open letter calling for Congressional oversight of the DoD's demands for unrestricted AI access. Multiple civil liberties organizations have filed amicus briefs in related surveillance cases. But Congress has shown no particular urgency on military AI regulation, and the Trump administration has been explicit in its view that AI guardrails are an obstacle to national security rather than a component of it.

The workers' letter called for federal regulation prohibiting "irresponsible and unconstitutional use of AI for violence and mass surveillance." That legislation does not exist. No bill with those provisions has cleared committee in the current Congress. The regulatory gap is not an oversight - it is a policy choice.

Meanwhile, the commercial AI industry is consolidating around defense as a primary revenue vertical. Palantir's stock has outperformed nearly every other technology company over the past eighteen months, driven almost entirely by government and military contracts. Anduril is valued at over $28 billion. The "defense AI" sector, which barely existed as a category five years ago, is now competing with consumer AI for top engineering talent, with the pitch that military work is more consequential, better compensated, and - per the new cultural consensus - more patriotic.

The organizations behind the letter, and the 700,000 workers they claim to represent, are fighting all of this simultaneously. The specific Pentagon-Anthropic conflict will resolve one way or another in the coming weeks. But the structural dynamics driving military AI development - the revenue, the normalization, the consolidation, the regulatory vacuum - will be here long after that dispute is settled. The Overton window does not slide back.

Three mouse clicks. That's what it takes now.
