RAMageddon: How AI Is Killing Gaming One Memory Chip at a Time

Data centers are projected to consume 70% of global RAM production in 2026. The gaming industry - which rode a pandemic-era boom unlike anything the market had ever seen - is now absorbing the full weight of AI's insatiable appetite for memory. The damage has already begun, and it is accelerating.

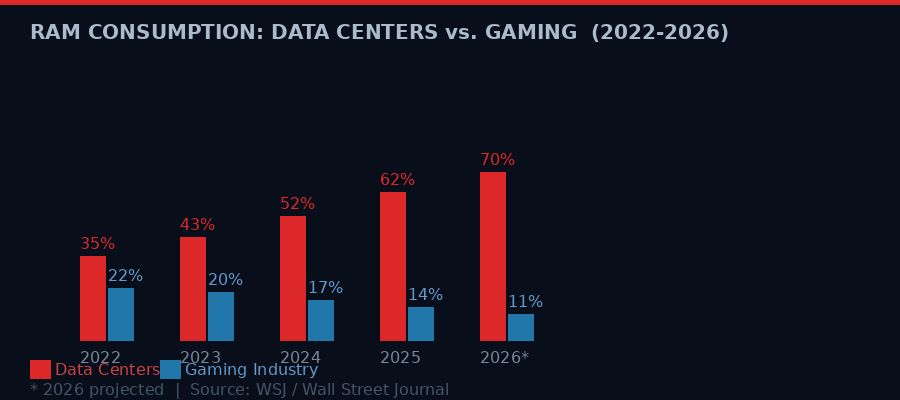

RAM consumption projections: data centers versus gaming industry, 2022-2026. Source: Wall Street Journal, WIRED analysis.

Six years ago, gaming was the industry that could do no wrong. Animal Crossing: New Horizons sold 13.4 million units in its first six weeks. Global gaming revenue spiked 23 percent in 2020 alone. The PlayStation 5 launched to unprecedented demand. Steam Deck preorders sold out in hours. Job postings in game development rose 40 percent during the pandemic years. It looked unstoppable.

Today, Valve has discontinued the Steam Deck LCD. Sony has reportedly delayed the PS6 to 2028 or later. Nintendo is suing the US government over tariffs threatening the Switch 2. Xbox is described by its own creator as being in "palliative care." Game studios are shedding workers at rates not seen since the post-pandemic correction, this time accelerated by AI automation.

The cause of all of it is one word: RAM.

The random-access memory chip - the humble, unflashy component that every electronic device needs to function - has become the single most constrained resource in the global technology economy. And the entity consuming most of it is not the gaming industry, or smartphones, or even data centers in general. It is specifically the AI boom, and the insatiable appetite of neural network inference clusters that never stop running.

According to reporting by The Wall Street Journal, data centers - AI-specific and otherwise - are expected to consume approximately 70 percent of global RAM production in 2026. The gaming industry, once a reliable anchor of semiconductor demand, has been crowded almost entirely off the field. This is RAMageddon - and it is not a future problem. It is happening right now.

The Memory Chip Nobody Talks About

When most people hear about chip shortages, they think of the 2021 crisis: new cars sitting on lots without CPUs, PlayStation 5 consoles selling for triple retail price on eBay. That crisis was acute, driven by pandemic supply chain disruptions. It resolved, roughly, within two years.

The 2026 RAM shortage is different in a fundamental way. It is structural, not cyclical. There is no pandemic to recover from and no supply chain to unsnarl. The demand for AI memory is not spiking and receding - it is compounding each year, tied directly to the number of AI model parameters, the number of inference queries, and the scale of model training that companies are willing to fund.

RAM - technically DRAM, or Dynamic Random-Access Memory - is the component that holds data actively being used by a processor. Unlike long-term storage (hard drives, SSDs), RAM is volatile: it loses everything the moment you cut power. It is fast, expensive to manufacture, and physically constrained by the limits of DRAM cell architecture.

AI systems need enormous amounts of it. A single modern large language model can require hundreds of gigabytes of VRAM and system RAM just to run inference, let alone train. When you multiply that by thousands of GPUs running around the clock across dozens of hyperscale facilities, the numbers become staggering.
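To make the scale concrete, here is a rough back-of-the-envelope sketch. It assumes that the memory needed just to hold a model's weights is approximately the parameter count multiplied by the bytes stored per parameter. The model sizes below are hypothetical round numbers chosen for illustration, not figures from the reporting, and the estimate ignores activations and caches, which add to the total.

```python
def weights_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate memory (GB, with 1 GB = 1e9 bytes) to hold model weights only."""
    return num_params * bytes_per_param / 1e9


# Hypothetical model sizes at 16-bit precision (2 bytes per parameter).
# These are illustrative assumptions, not measurements of any real model.
EXAMPLES = {
    "7 billion parameters": (7e9, 2),
    "70 billion parameters": (70e9, 2),
    "400 billion parameters": (400e9, 2),
}

if __name__ == "__main__":
    for label, (params, nbytes) in EXAMPLES.items():
        gb = weights_memory_gb(params, nbytes)
        print(f"{label}: roughly {gb:.0f} GB for weights alone")
```

Even under these simplified assumptions, a model in the tens of billions of parameters already exceeds the memory of any single consumer graphics card, which is why inference is spread across many accelerators.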

According to data cited by Bloomberg, the US now accounts for more than half of all "hyperscale facilities" - data centers built specifically for AI workloads, many of which represent multibillion-dollar investments. The number of US data centers has doubled since 2022, according to the Lincoln Institute, and electricity costs in communities near these facilities have risen up to 267 percent above where they were five years ago.

The memory suppliers - Samsung, SK Hynix, Micron - cannot build fabs fast enough to satisfy both AI demand and the rest of the consumer electronics market simultaneously. So prices rise, allocation tightens, and the customers with the deepest pockets win the chips. In 2026, those customers are Amazon Web Services, Microsoft Azure, Google Cloud, and a constellation of AI startups spending hundreds of millions on inference infrastructure.

The gaming industry is not in that league. Not even close.

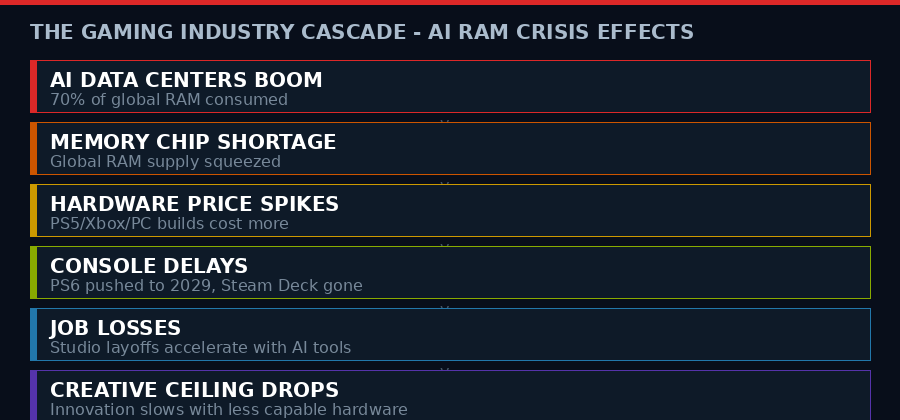

The cascade of consequences from the AI RAM shortage hitting gaming, from memory supply constraints to creative ceiling collapse.

A Pandemic Boom Turned Ruin

To understand how severe the reversal is, you need to remember what the gaming industry looked like in 2020 and 2021. It was not merely doing well - it was experiencing a structural upgrade in its cultural position.

Gaming became, for the first time in its history, the dominant entertainment medium for tens of millions of people who would not previously have called themselves gamers. Parents played Animal Crossing with their kids. Twitch and YouTube gaming channels gained viewership that rivaled traditional television. Major publishers were flush with cash. Microsoft spent $68.7 billion to acquire Activision Blizzard. Sony put $1.45 billion into Epic Games. Job postings in game development rose 40 percent according to Bain & Company research. The industry looked set to permanently expand its footprint.

Then the AI buildout began its exponential phase.

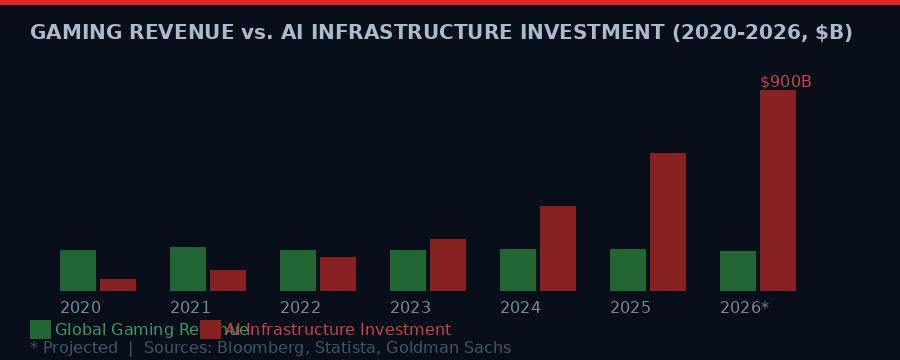

The decline has been slow enough to miss in any given quarter but unmistakable over the arc of four years. Global gaming revenue - which hit roughly $196 billion in 2021 - has barely moved since, despite inflation and growing user bases in emerging markets. The raw dollar figure masks a more troubling story: the industry's ability to innovate hardware and drive consumer upgrade cycles has been directly hampered by the memory shortage driving up component costs.

"Gaming is the only mass media entertainment where the creative ceiling is limited by consumer hardware. So, if consumers can't afford or access higher grade tech like sufficient RAM, the innovation will slow down." - Gene Park, video game critic, Washington Post (via WIRED)

This is the key second-order effect that most analysts miss. Gaming is not suffering merely because consoles cost more. It is suffering because the creative ambitions of game developers are bottlenecked by what average consumers can run. If a PS5 costs $100 more than it did two years ago because memory prices are up, fewer players upgrade, the installed base grows more slowly, and developers cannot justify building for the highest-capability hardware. The creative ceiling drops.

The creative ceiling of the medium - the kinds of worlds that can be simulated, the fidelity of assets, the complexity of AI-driven NPC behavior - is directly determined by the RAM that consumer hardware can afford. When RAM gets expensive, gaming gets worse. Not metaphorically. Measurably.

Hardware Graveyard: Steam Deck, PS6, Xbox

The hardware casualties are mounting in a way that would have seemed impossible in 2021.

In December 2025, Valve discontinued the Steam Deck LCD 256GB model - its entry-level handheld console, first released less than four years earlier. This was, as WIRED noted in its coverage of the broader crisis, "the first discontinuation of a major console before the launch of a worthy upgrade." Valve's successor device - a Steam Machine roughly six times more powerful than the Steam Deck - has an unconfirmed release window and unknown price. The current Steam Deck market has been left to wither.

Sony's situation is grimmer. According to Bloomberg, the company has "yet to confirm or deny" that its PS6, originally slated for late 2027, has been delayed to 2028 or beyond. Given the RAM cost pressures affecting every component of a next-gen console, the silence is itself telling. Sony's hardware division cannot commit to a margin-viable price point for a next-generation machine when the memory market remains this hostile.

The Xbox situation drew high-profile attention in March when Seamus Blackley - who created the original Xbox console, launched in 2001 - described the platform as being in "distress" and characterized the February 2026 move of Asha Sharma from AI executive to CEO of Microsoft Gaming as placing the product in "palliative care." Microsoft has been openly pivoting toward AI and cloud gaming, which requires far less consumer-grade RAM but also represents a fundamental retreat from the hardware ambitions that defined Xbox's identity for 25 years.

The Xbox paradox: Microsoft's shift to cloud gaming is rational - it removes the company's dependence on expensive consumer hardware. But cloud gaming requires exactly the kind of data center RAM that is currently being consumed at record rates by AI workloads. Microsoft is both a victim and a driver of the same shortage.

Nintendo navigated the tariff crisis around the Switch 2 launch in 2025 but has not ruled out further price hikes, and has filed suit against the US government over the tariff regime. Nintendo's relative resilience comes from its strategy of targeting lower-performance, lower-RAM hardware specifications - the company's engineering philosophy of "doing more with less" has ironically become a survival advantage in a world where memory is rationed.

Global gaming revenue has flatlined as AI infrastructure investment has grown exponentially, competing for the same memory chips. Sources: Bloomberg, Statista, Goldman Sachs projections.

Job Losses: A Double Blow

The RAM shortage is only one half of gaming's current crisis. The other half is AI itself - not as a consumer of chips, but as a replacement for human labor within game studios.

The mass layoffs in the gaming industry began in earnest in late 2023, and by 2026 the cumulative toll is severe. Studios that expanded aggressively during the pandemic hiring boom have shed thousands of positions - artists, writers, QA testers, level designers, technical directors. The proximate causes vary: canceled projects, disappointing launches, corporate restructurings. But the underlying current running through most of the cuts is AI automation eating the roles that were most easily quantified and contracted.

Art generation tools have made it possible to produce concept art, environmental textures, and promotional imagery at a fraction of the headcount required two years ago. AI-assisted coding tools have compressed development cycles in ways that reduce the demand for junior and mid-level engineers. Voice acting, animation blending, and NPC scripting are all increasingly handled by machine-generated content at studios under financial pressure to cut costs.

The anti-AI backlash among gamers has been intense and organized. Online communities have formed specifically to boycott games produced with AI-generated assets. Some studios have been forced to clarify their AI usage policies in response to consumer pressure. The irony is cutting: the same AI systems consuming the RAM that is pricing gamers out of new hardware are also consuming the jobs of the people who make the games those gamers play.

"There's a dark cloud over the industry right now. AI's proliferation in the gaming industry is already accelerating job loss and cheapening the work of developers at studios now scrutinized by anti-AI gamers." - WIRED, March 2026

The industry whose job postings rose 40 percent during the pandemic years has not merely stopped growing. In many segments, its workforce is actively contracting. The industry that once competed with Silicon Valley for software engineers is now offering fewer positions, lower pay, and shorter tenure than it did five years ago.

DLSS 5, Generative Pixels, and the AI Colonization of Games

Perhaps no development more clearly illustrates the tension between AI and gaming than the controversy over Nvidia's DLSS 5 - and the CEO's combative response to the backlash.

DLSS (Deep Learning Super Sampling) is Nvidia's AI-powered upscaling technology, which uses neural networks to generate high-resolution frames from lower-resolution inputs. Previous versions were widely praised for delivering performance gains with minimal visual quality tradeoffs. DLSS 5, however, takes a more radical approach: it uses generative AI to reconstruct not just resolution but actual scene geometry, textures, and temporal elements that were never rendered in the first place.

Gamers immediately pushed back. The concern is philosophical as much as technical: if the GPU is essentially hallucinating parts of the game frame rather than rendering them, is the player experiencing the game as the developers intended? Are visual bugs in AI-generated frames a GPU failure or a game bug? Who is responsible for the output?

Jensen Huang, Nvidia's CEO, dismissed these concerns at a recent industry event. According to Tom's Hardware, Huang told critics they were "completely wrong," arguing that DLSS 5 "fuses controllability of the geometry and textures and everything about the game with generative AI" and that developers can "fine-tune the generative AI."

The response was poorly received. Huang's dismissiveness - treating legitimate technical and artistic concerns as simple ignorance - reflects how Nvidia thinks about gaming: as a secondary use case for hardware primarily designed for AI workloads. The RTX 50-series GPUs that support DLSS 5 are priced at levels that make them inaccessible to most consumers, their architecture optimized for AI tensor operations first and real-time gaming rasterization second.

This is the slow colonization of gaming by AI infrastructure: the chips, the software stack, the pricing, the design priorities - all of it now flows from AI first. Gaming receives whatever is left over, wrapped in marketing language about how AI makes it better. The gamers who do not accept this framing are told, by one of the most powerful men in the semiconductor industry, that they are "completely wrong."

The Electricity Tax Nobody Voted For

The RAM shortage has a less discussed cousin: the electricity price shock in communities near major AI data center clusters.

Hyperscale data centers consume extraordinary amounts of power. A single large facility can draw 100 megawatts or more - comparable to a small city. When dozens of these facilities concentrate in a geographic region, as has happened in northern Virginia, central Texas, the Pacific Northwest, and parts of Ireland and the Netherlands, the local grid cannot absorb the load without significant infrastructure upgrades. Those upgrades are typically paid for through rate increases distributed across all customers in the region.
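The "small city" comparison can be checked with simple arithmetic. The sketch below converts a constant power draw into annual energy and divides by a per-household figure. The household consumption value is an assumed round number (roughly in line with commonly cited US averages) and the constant full-load draw is a simplification; neither comes from the reporting.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_mwh(power_mw: float, utilization: float = 1.0) -> float:
    """Energy used in a year (MWh) by a facility drawing power_mw at the given utilization."""
    return power_mw * utilization * HOURS_PER_YEAR


def equivalent_households(energy_mwh: float, household_mwh_per_year: float) -> float:
    """Number of households whose annual usage adds up to energy_mwh."""
    return energy_mwh / household_mwh_per_year


if __name__ == "__main__":
    facility_mw = 100.0              # the 100 MW figure from the text
    assumed_household_mwh = 10.5     # ASSUMPTION: illustrative annual usage per household

    energy = annual_energy_mwh(facility_mw)
    homes = equivalent_households(energy, assumed_household_mwh)
    print(f"Annual energy: {energy:,.0f} MWh")
    print(f"Equivalent households (under the assumption above): {homes:,.0f}")
```

Under these assumptions, one facility uses as much electricity as tens of thousands of homes, which is consistent with the comparison to a small city.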

Bloomberg's analysis found electricity costs in communities near AI data center hubs rising up to 267 percent above their five-year baselines. For households already struggling with post-pandemic cost pressures, this is a regressive tax levied without any democratic process. Residents who have no connection to AI development, who gain nothing from the productivity gains it supposedly delivers, are subsidizing the infrastructure that runs the systems.

This is also a gaming issue. Running a gaming PC for extended sessions is an energy-intensive activity. When base electricity rates double or triple, the cost of gaming as a leisure activity rises with them. This disproportionately affects lower-income households - exactly the consumers gaming companies depend on for high-volume game sales in the $30-60 range.
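A rough sketch of what that means for a single household follows. The PC power draw, daily play time, and baseline rate are hypothetical values chosen only for illustration. The one number taken from the text is the 267 percent increase, which, read as "267 percent above baseline", corresponds to multiplying the baseline rate by 3.67.

```python
def rate_after_increase(base_rate: float, percent_above: float) -> float:
    """Rate after rising `percent_above` percent over the baseline."""
    return base_rate * (1 + percent_above / 100)


def monthly_gaming_cost(watts: float, hours_per_day: float,
                        rate_per_kwh: float, days: int = 30) -> float:
    """Electricity cost of running a PC at `watts` for `hours_per_day` over `days`."""
    kwh = watts / 1000 * hours_per_day * days
    return kwh * rate_per_kwh


if __name__ == "__main__":
    # ASSUMPTIONS (illustrative only, not from the reporting):
    pc_watts = 400.0        # gaming PC under load
    hours = 3.0             # play time per day
    base_rate = 0.15        # dollars per kWh before the increase

    new_rate = rate_after_increase(base_rate, 267)  # worst case cited in the text
    before = monthly_gaming_cost(pc_watts, hours, base_rate)
    after = monthly_gaming_cost(pc_watts, hours, new_rate)
    print(f"Monthly cost before: ${before:.2f}")
    print(f"Monthly cost after:  ${after:.2f}")
```

The absolute amounts depend entirely on the assumed inputs, but the ratio does not: at the upper end of the cited range, the same gaming habit costs 3.67 times as much to power.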

Meta's VR Obituary: A Canary in the Coal Mine

On March 17, 2026, Meta announced that it would shut down the VR version of Horizon Worlds on June 15. The announcement confirmed what the industry had quietly acknowledged for months: the metaverse, as Mark Zuckerberg originally envisioned it, is dead.

Meta spent an estimated $36 billion on Reality Labs between 2021 and 2024. It shut down three VR game studios. It discontinued its metaverse product for enterprise work. It stopped producing new content for its VR fitness platform Supernatural. And now it is pivoting Horizon Worlds to become a "mobile-first" platform competing with Roblox and Fortnite - platforms that require vastly less hardware to run and reach vastly larger audiences.

The VR metaverse dream was, at its core, a hardware dream. It required high-performance headsets with powerful processors and generous RAM to deliver immersive experiences. In a world where RAM is being rationed to AI data centers, building a consumer product that demands premium memory-intensive hardware at accessible price points is nearly impossible.

Meta's pivot reveals a broader strategic logic taking hold across the industry: if you cannot win the hardware fight, abandon the territory. Go mobile. Go cloud. Go to the platforms that run on thin clients and leave the heavy computing to server farms - which are, of course, the very facilities consuming all the RAM.

VR gaming will not disappear. But the mass-market virtual reality future that Zuckerberg bet $36 billion on has been indefinitely deferred, and the RAM shortage is a non-trivial part of why.

COBOL and the Ghost in the Machine

There is a counterintuitive force keeping older game hardware running longer than it should: the same chip shortages that are hurting gaming are also slowing the upgrade cycles that typically make legacy systems obsolete.

WIRED's recent profile of COBOL - the programming language, now more than 60 years old, that still runs roughly 95 percent of ATM transactions, most major airline reservation systems, and enormous swaths of government infrastructure - identified a structural parallel. Technologies that were supposed to be replaced "any year now" have instead become permanently embedded because the resources required to replace them never materialized.

The same dynamic is playing out in gaming. Developers and publishers who would normally push consumers toward new hardware every four to six years are instead supporting older platforms longer, because the addressable market on older hardware is now massive relative to the tiny installed base of people who have upgraded to the latest generation.

This is not healthy stability - it is stagnation dressed as continuity. The creative ambitions of games released for PS5-era hardware in 2026 are being constrained not by artistic limits but by the economics of a fragmented market where memory-intensive new hardware remains too expensive for most consumers to justify.

What Comes Next: Rationing, Routing, and Revolt

The RAM shortage is not going away in 2026. DRAM fab construction takes years. Samsung's P3 fab in Pyeongtaek, SK Hynix's M15X in Cheongju, and Micron's facilities in Boise and overseas all represent multi-year capital deployments that will eventually add supply. But the demand trajectory for AI inference is also not slowing. Each new model generation tends to demand more memory overall. Every new use case - AI-generated video, real-time AI agents, multimodal systems - adds more persistent memory demand to the global pool.

The most likely near-term outcomes for gaming are all forms of managed decline:

Cloud gaming capture: Microsoft, Sony, and Nvidia are all pushing cloud streaming services where the heavy computation runs on their server farms and consumers receive video streams on thin clients. This partially decouples gaming from consumer RAM requirements but permanently shifts the power dynamic toward platform owners and away from developers and players.

Mobile consolidation: The highest-growth segment of global gaming is mobile, which runs on ARM chips with modest RAM requirements. Meta's pivot, Nintendo's handheld dominance, and Valve's aborted Steam Machine strategy all point toward mobile and hybrid-mobile as the realistic frontier for gaming growth.

AI-native game design: Studios will increasingly build games designed from the ground up to use AI-generated content - environments, dialogue, quests, characters - reducing the human labor required and partially offsetting the hardware cost pressure with lower development costs. Gamers who oppose AI content will form a niche, not a majority.

Geographic divergence: Gaming markets in regions without major AI data center concentration - much of Southeast Asia, South Asia, Latin America - will face less severe electricity and RAM cost pressure than US and European markets. The global gaming audience will increasingly concentrate in these regions, forcing publishers to design for lower hardware specs as a default.

"The rapid rise of artificial intelligence has upended every corner of the tech industry. Nearly a third of adults and most teens in the US use AI on a daily basis. Data centers have doubled in the US since 2022, raising electricity costs up to 267 percent more than they were five years ago for households near those warehouses." - WIRED, March 2026 (citing Pew Research, Bloomberg)

None of these trajectories restores what gaming lost. The pandemic-era boom created real expectations: that gaming would remain a hardware-driven, creativity-forward, developer-empowered medium. Those expectations are being systematically unwound, not because gaming failed on its own terms, but because it is competing for resources against the largest capital deployment in the history of consumer technology.

AI did not set out to destroy gaming. It did not need to. It simply needed resources - memory, electricity, engineering talent - and in a market economy, it had more money to bid for those resources than an entertainment industry of roughly $196 billion could match.

The gamers calling it RAMageddon are not being dramatic. They are naming something real. The question now is not whether gaming survives the AI era - some version of it will - but whether what survives is still recognizable as the medium that built the largest entertainment industry on earth.
