Data Centers in Orbit: Why Big Tech is Racing to Move AI Compute into Space

Jeff Bezos wants to put 51,912 satellites into low Earth orbit. Not for internet access - for AI compute. Blue Origin filed that application with the FCC this month, seeking permission to deploy what would be one of the largest satellite constellations ever built, each unit solar-powered, each designed to crunch the kind of arithmetic that makes large language models run.

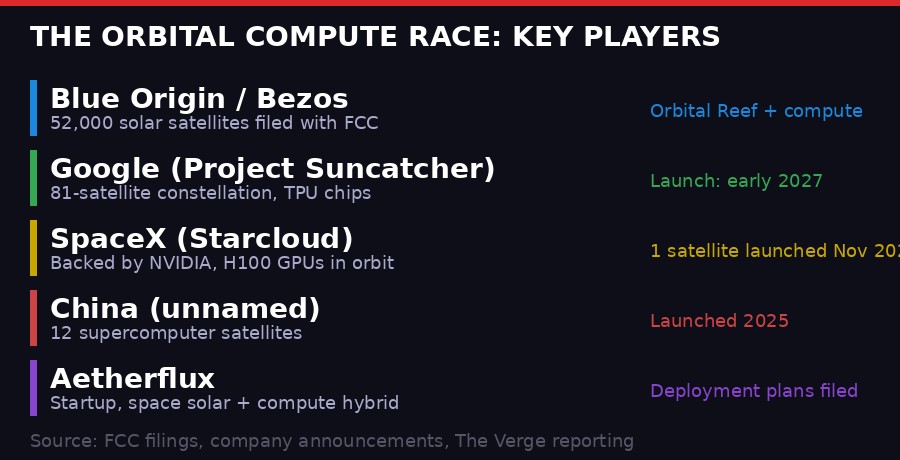

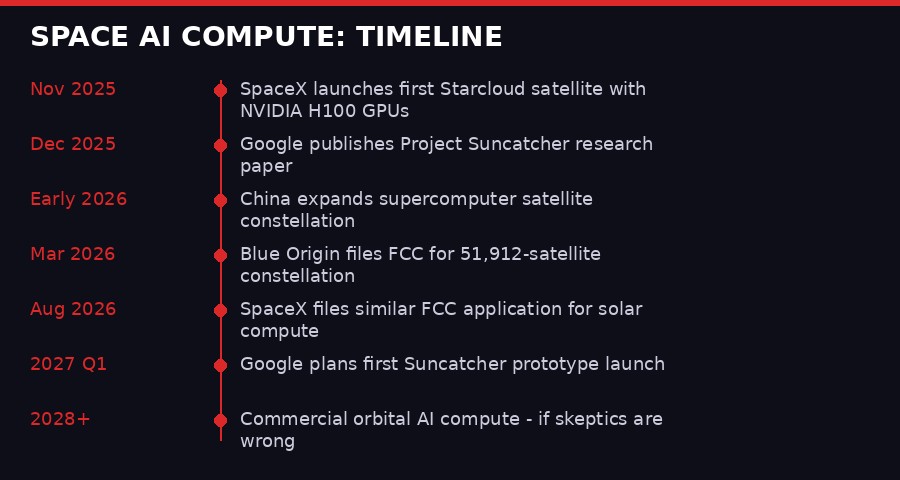

He's not alone. Google has a research moonshot called Project Suncatcher - clusters of TPU-equipped satellites flying in tight formation, connected by laser links instead of fiber. Starcloud, an NVIDIA-backed startup, already put a satellite carrying an NVIDIA H100 GPU into orbit on a SpaceX rocket in November 2025. China is ahead of all of them in one sense: it quietly launched a dozen supercomputer satellites in 2025 that can process data while orbiting.

The pitch sounds compelling on paper. Data centers are running into hard physical limits: land, water, electricity, community opposition, heat. Space offers abundant solar power, cooling that consumes no water, and no local permitting board that can say no. The AI boom needs compute at a scale the grid may not be able to support. So why not look up?

Because the skeptics have a point. And understanding both sides of this argument tells you something important about where AI infrastructure is actually going - and how desperate the compute race has become.

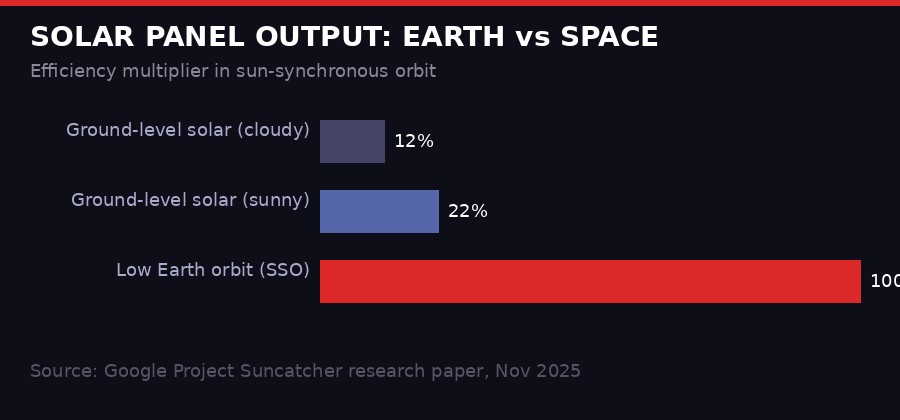

Solar panels in sun-synchronous low Earth orbit produce up to 8x more power than ground-level equivalents, with near-continuous exposure. This is the core physics argument for orbital AI compute. Source: Google Project Suncatcher, Nov 2025.

The Physics That Makes This Sound Reasonable

The fundamental case for orbital data centers comes down to sunlight. A solar panel sitting on Earth deals with atmosphere, weather, seasons, and a day/night cycle. In a dawn-dusk sun-synchronous orbit at around 600-1,000 kilometers altitude, a satellite circles the planet nearly pole to pole while riding above the line between day and night. Earth's equatorial bulge slowly turns the orbital plane to keep pace with the sun, so the satellite stays in near-constant sunlight. According to Google's November 2025 Project Suncatcher paper, published on the Google Research blog, a panel in such an orbit can be up to 8x more productive than one on the ground.
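The sun-synchronous trick can be checked with textbook orbital mechanics. The sketch below is a minimal example that assumes a circular orbit and keeps only the J2 (equatorial bulge) term of Earth's gravity. It computes the orbital period and the inclination at which the bulge makes the orbit plane precess once per year, in step with the sun. The constants are standard values. The 650 km example altitude is an assumption chosen from within the 600-1,000 km range above.

```python
import math

MU_EARTH = 3.986004418e14   # Earth's gravitational parameter, m^3/s^2
R_EARTH = 6_378_137.0       # Earth's equatorial radius, m
J2 = 1.08262668e-3          # Earth's oblateness (J2) coefficient, dimensionless
# Precession rate needed to track the sun: one full turn per tropical year, in rad/s.
SUN_RATE = 2 * math.pi / (365.2422 * 86_400)


def orbital_period_s(altitude_m: float) -> float:
    """Period of a circular orbit at the given altitude (Kepler's third law)."""
    a = R_EARTH + altitude_m
    return 2 * math.pi * math.sqrt(a**3 / MU_EARTH)


def sun_synchronous_inclination_deg(altitude_m: float) -> float:
    """Inclination at which J2 nodal precession matches the sun's apparent motion.

    Uses the circular-orbit J2 precession rate
        dOmega/dt = -1.5 * J2 * (R/a)^2 * n * cos(i)
    and solves dOmega/dt = SUN_RATE for i.
    """
    a = R_EARTH + altitude_m
    n = math.sqrt(MU_EARTH / a**3)  # mean motion, rad/s
    cos_i = -SUN_RATE / (1.5 * J2 * (R_EARTH / a) ** 2 * n)
    return math.degrees(math.acos(cos_i))


if __name__ == "__main__":
    for km in (600, 650, 1000):
        h = km * 1000.0
        print(f"{km} km: period {orbital_period_s(h) / 60:.1f} min, "
              f"SSO inclination {sun_synchronous_inclination_deg(h):.2f} deg")
```

At 650 km this gives a period of roughly 98 minutes and an inclination of about 98 degrees, slightly past polar. That is why these orbits run nearly pole to pole. The "dawn-dusk" part is a separate choice: placing the orbit plane over the day/night boundary at launch.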

For AI training runs, which can sustain near-100% GPU utilization for weeks or months, continuous power matters enormously. Terrestrial data centers need enormous battery backup or grid reliability guarantees. In the right orbit, you need far less of either. The sun just keeps shining on the panels.
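How much orbit beats the ground depends heavily on assumptions. The sketch below compares average irradiance per square meter of panel. The solar constant (about 1,361 W/m² above the atmosphere) is a measured value. The other inputs are hypothetical and are not figures from Google's paper: the sunlit fraction, the assumption that the orbital panel stays pointed at the sun, and the ground capacity factor (average output relative to the 1,000 W/m² rating condition).

```python
SOLAR_CONSTANT_W_M2 = 1361.0    # irradiance above the atmosphere at 1 AU
RATED_IRRADIANCE_W_M2 = 1000.0  # standard test condition used to rate panels
HOURS_PER_YEAR = 8766.0         # 365.25 days


def orbit_average_w_m2(sunlit_fraction: float) -> float:
    """Average irradiance on a sun-pointing panel in orbit (hypothetical sunlit fraction)."""
    return SOLAR_CONSTANT_W_M2 * sunlit_fraction


def ground_average_w_m2(capacity_factor: float) -> float:
    """Average irradiance implied by a ground capacity factor (hypothetical input)."""
    return RATED_IRRADIANCE_W_M2 * capacity_factor


def space_to_ground_ratio(sunlit_fraction: float, capacity_factor: float) -> float:
    """How many times more energy the same panel collects in orbit than on the ground."""
    return orbit_average_w_m2(sunlit_fraction) / ground_average_w_m2(capacity_factor)


def annual_kwh_per_m2(average_w_m2: float) -> float:
    """Convert an average irradiance into yearly energy per square meter."""
    return average_w_m2 * HOURS_PER_YEAR / 1000.0


if __name__ == "__main__":
    for cf in (0.12, 0.17, 0.25):  # hypothetical ground capacity factors
        print(f"ground CF {cf:.0%}: orbit/ground = {space_to_ground_ratio(0.99, cf):.1f}x")
```

Take a hypothetical 99% sunlit fraction. With a 17% ground capacity factor, the ratio lands a little under 8x. A sunnier 25% site brings it down to about 5.4x. Most of the advantage comes from the denominator: nights, clouds, and low sun angles keep a ground panel well below its rating most of the time.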

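Then there's the cooling problem. AI chips generate staggering amounts of heat. Modern GPU clusters require liquid cooling loops, cooling towers, and significant water consumption - data centers are increasingly competing with agriculture and municipalities for freshwater access. In the vacuum of space there is no air or water to carry heat away, so it has to be radiated: coolant loops carry heat from the chips to radiator panels, which shed it into space as infrared light. No water consumption, no cooling towers. The radiators have to be large, but the heat rejection itself is passive, with thermodynamics working in your favor instead of against you.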
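Radiative cooling follows the Stefan-Boltzmann law, which makes the size of the problem easy to estimate. This minimal sketch computes the radiator area needed to reject a given heat load. The emissivity, radiator temperature, and single-sided geometry are assumptions. The sketch also ignores absorbed sunlight and infrared from Earth, both of which would make the required area larger.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W/(m^2 K^4)


def radiator_area_m2(heat_w: float, radiator_temp_k: float,
                     emissivity: float = 0.9, sink_temp_k: float = 3.0,
                     sides: int = 1) -> float:
    """Area needed to radiate heat_w watts to a cold sink.

    Net flux per radiating face = emissivity * SIGMA * (T_rad^4 - T_sink^4).
    Ignores incoming sunlight and Earth IR (both would increase the area).
    """
    if radiator_temp_k <= sink_temp_k:
        raise ValueError("radiator must be hotter than the sink")
    flux_w_m2 = emissivity * SIGMA * (radiator_temp_k**4 - sink_temp_k**4)
    return heat_w / (flux_w_m2 * sides)


if __name__ == "__main__":
    # Hypothetical 1 MW heat load with radiators held near room temperature.
    print(f"{radiator_area_m2(1e6, 300.0):.0f} m^2 single-sided")
    print(f"{radiator_area_m2(1e6, 300.0, sides=2):.0f} m^2 two-sided")
```

Under these assumptions, a hypothetical 1 MW load at 300 K needs on the order of 2,400 m² of single-sided radiator, or roughly half that if both faces radiate. Running radiators hotter shrinks them quickly, because flux scales with the fourth power of temperature. The chips, however, cap how hot the coolant can get.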

"In space, they theorize, the sun's unlimited rays could provide endless amounts of energy to power your latest AI-generated video. But it's not likely to be that easy." - The Verge analysis of orbital data center proposals, March 2026

The land and water pressures on ground-based data centers are real and intensifying. In 2025 alone, six proposals for AI data centers needing multiple gigawatts of power were announced - a scale that was only rumored possible in 2024. Communities in Ireland, Virginia, Texas, and Singapore are pushing back against data center expansion due to power draw, water use, and the fact that these facilities provide almost no jobs relative to their resource consumption. Virginia has become so saturated with data centers that state regulators have imposed moratoriums on new construction in some counties.

Against that backdrop, the appeal of a location where you face zero community opposition, zero permit fights, and effectively free cooling is obvious. The question is whether the engineering challenges can actually be solved.

What Blue Origin Filed and Why It Matters

Blue Origin's FCC application, filed in early March 2026, describes a constellation of 51,912 solar-powered satellites designed to handle AI computing workloads. This is not a Starlink-style internet access play. The filing is explicitly about AI compute offloading - moving processing tasks from terrestrial data centers to orbital platforms powered by solar energy.

The scale of the proposal is staggering. Starlink, currently the largest active satellite constellation, operates more than 8,000 satellites. Blue Origin's filing envisions roughly six times that number, all in coordinated orbits, all functioning as distributed compute nodes. The application is still pending FCC review, and many experts expect significant modifications before any approval.

Blue Origin is betting that by the time such a constellation could be launched - a project measured in years, not months - the economics of rocket launches will have dropped enough to make it viable. Jeff Bezos has said publicly that making access to space cheap enough is the prerequisite for everything else he wants to do in orbit. The New Glenn rocket, which had its first successful flight in early 2025, is designed to dramatically reduce launch costs compared to United Launch Alliance's offerings.

The Bezos angle here is also strategic. Amazon Web Services (AWS) is the dominant player in cloud computing, but it faces intense competitive pressure from Microsoft Azure (with its deep OpenAI integration) and Google Cloud. Blue Origin is a separate company from Amazon. Still, if orbital compute infrastructure becomes viable and a Bezos-owned company controls significant capacity, AWS could gain a differentiation that no terrestrial competitor can easily copy. Land in Virginia is scarce. Orbital slots are theoretically abundant - if you can get there cheaply enough.

SpaceX filed a similar FCC application, also in March 2026, though details have not yet been made public as of this writing. The Starcloud venture, which NVIDIA backed and which launched its first H100-equipped satellite in November 2025 aboard a SpaceX Falcon 9, is seen as a proof-of-concept for the broader model. Musk's vertical integration - rockets, satellites, and now compute - mirrors Bezos's logic exactly.

The orbital AI compute race now involves four major players across two continents, with a range of approaches from focused compute constellations to hybrid solar-compute platforms. Sources: FCC filings, company announcements, The Verge, Uptime Institute.

Google's Project Suncatcher: The Most Technically Detailed Plan

Of all the orbital compute proposals, Google's Project Suncatcher is the most technically documented. The company published a research paper in November 2025 describing the system design in precise engineering terms, which allows for actual evaluation rather than press release analysis.

The proposed architecture consists of 81 satellites flying in sun-synchronous low Earth orbit, traveling together in a tight cluster roughly one kilometer in radius. Each satellite carries Google TPU chips - the custom AI accelerators Google uses in its data centers. The satellites are connected to their neighbors by free-space optical links (essentially lasers firing through vacuum) rather than by radio frequency communications.

The key engineering insight is that by flying the satellites in extremely close formation, Google can run its laser links at very short range, where the receiver captures far more of the transmitted beam. The company's early lab tests achieved 800 Gbps in each direction (1.6 Tbps total) using a single transceiver pair. At data center scale, the target is tens of terabits per second between satellites - comparable to the fiber connections that link GPU clusters in terrestrial facilities.
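Short range matters because a laser beam spreads as it travels. The sketch below models an ideal Gaussian beam, a standard textbook idealization rather than Google's link design, and computes the fraction of transmitted power that lands in a receiving aperture. The 1,550 nm wavelength, 4 cm beam waist, and 5 cm aperture radius are hypothetical values chosen for illustration. Pointing error and optics losses are ignored.

```python
import math


def beam_radius_m(waist_m: float, wavelength_m: float, distance_m: float) -> float:
    """1/e^2 radius of a Gaussian beam at distance_m from its waist."""
    rayleigh_range_m = math.pi * waist_m**2 / wavelength_m
    return waist_m * math.sqrt(1.0 + (distance_m / rayleigh_range_m) ** 2)


def captured_fraction(waist_m: float, wavelength_m: float,
                      distance_m: float, aperture_radius_m: float) -> float:
    """Fraction of a centered Gaussian beam's power inside a circular aperture."""
    w = beam_radius_m(waist_m, wavelength_m, distance_m)
    return 1.0 - math.exp(-2.0 * aperture_radius_m**2 / w**2)


if __name__ == "__main__":
    WAVELENGTH = 1550e-9  # hypothetical telecom-band laser, m
    WAIST = 0.04          # hypothetical transmit beam waist radius, m
    APERTURE = 0.05       # hypothetical receive aperture radius, m
    for d in (100.0, 200.0, 10_000.0, 1_000_000.0):
        frac = captured_fraction(WAIST, WAVELENGTH, d, APERTURE)
        print(f"{d:>11,.0f} m: {frac:.2e} of transmitted power captured")
```

With these assumed values, a receiver 100-200 meters away catches most of the beam. One 1,000 km away catches only a few hundred-thousandths of it. Far from the waist, captured power falls off with the square of distance. That surplus at short range is what lets a tight formation push link rates toward fiber-like numbers.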

According to Google's research blog, the team has worked through the orbital dynamics using a JAX-based differentiable physics model that accounts for Earth's non-spherical gravitational field and atmospheric drag. The math is genuinely complex. Satellites flying 100-200 meters apart must hold precise formation while constantly correcting for Earth's uneven gravity field and for atmospheric drag, both of which push neighboring satellites along slightly different paths.
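A standard starting point for close formations like this is the Clohessy-Wiltshire (Hill) equations. They describe one satellite's motion relative to a neighbor on a circular orbit, well before any full differentiable model is needed. The sketch below uses their closed-form solution. It leaves out J2 and drag, which are exactly the effects Google's model is described as adding. It shows two things: a carefully chosen initial velocity keeps a neighbor on a closed loop, and a velocity error of just 1 mm/s makes it drift by meters every orbit. The 650 km altitude and 100 m offset are hypothetical values.

```python
import math

MU_EARTH = 3.986004418e14  # Earth's gravitational parameter, m^3/s^2
R_EARTH = 6_378_137.0      # Earth's equatorial radius, m


def mean_motion(altitude_m: float) -> float:
    """Angular rate of the circular reference orbit, rad/s."""
    a = R_EARTH + altitude_m
    return math.sqrt(MU_EARTH / a**3)


def cw_position(t, n, x0, y0, z0, vx0, vy0, vz0):
    """Closed-form Clohessy-Wiltshire solution.

    x: radial (m), y: along-track (m), z: cross-track (m), relative to a
    reference point on a circular orbit with mean motion n (rad/s).
    """
    nt = n * t
    s, c = math.sin(nt), math.cos(nt)
    x = (4 - 3 * c) * x0 + (s / n) * vx0 + (2 / n) * (1 - c) * vy0
    y = (6 * (s - nt) * x0 + y0 - (2 / n) * (1 - c) * vx0
         + ((4 * s - 3 * nt) / n) * vy0)
    z = c * z0 + (s / n) * vz0
    return x, y, z


if __name__ == "__main__":
    n = mean_motion(650e3)       # hypothetical 650 km altitude
    period = 2 * math.pi / n
    x0 = 100.0                   # neighbor starts 100 m above the reference point
    vy_bounded = -2 * n * x0     # along-track speed that cancels secular drift
    for label, vy0 in (("bounded", vy_bounded), ("+1 mm/s error", vy_bounded + 1e-3)):
        x, y, z = cw_position(period, n, x0, 0.0, 0.0, 0.0, vy0, 0.0)
        print(f"{label}: after one orbit x={x:.2f} m, y={y:.2f} m")
```

The secular along-track term works out to -3 × (velocity error) × (orbital period). With a roughly 98-minute orbit, a 1 mm/s error therefore accumulates about 18 m of drift per orbit, a large fraction of a 100-200 m spacing. Formation-keeping thrusters exist to cancel exactly this kind of slow, compounding error.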

"Project Suncatcher envisions compact constellations of solar-powered satellites, carrying Google TPUs and connected by free-space optical links. This approach would have tremendous potential for scale, and also minimizes impact on terrestrial resources." - Google Research blog, Project Suncatcher announcement, November 2025

Google plans a prototype launch of two satellites in early 2027. If that goes well, the full 81-satellite constellation could follow. The company explicitly compared the ambition of this project to its early quantum computing program - a "moonshot" where the engineering challenges are real but the physics permits a path forward.

The radiation environment is another documented challenge. High-energy cosmic rays and solar protons damage silicon chips. Military and scientific satellites have long used radiation-hardened processors that are orders of magnitude slower than commercial GPUs. Google plans to use the same commercial TPUs it runs in its data centers, which are much more powerful and much cheaper than radiation-hardened parts. It would accept a certain rate of radiation-induced errors and use software error correction to compensate. Whether that tradeoff holds at AI training scale is something the prototype will test.
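Google's specific error-correction scheme isn't described here. As a generic illustration only, the sketch below shows one classic software technique, triple modular redundancy. The same computation runs three times and a bitwise majority vote picks the result, so a single radiation-induced bit flip in one copy is outvoted. The simulated bit flip and the tiny dot-product workload are hypothetical. Real systems often prefer cheaper schemes, such as detecting a mismatch between two copies and recomputing.

```python
import struct


def to_bits(value: float) -> int:
    """Raw 64-bit pattern of an IEEE 754 double."""
    return struct.unpack("<Q", struct.pack("<d", value))[0]


def flip_bit(value: float, bit: int) -> float:
    """Simulate a single-event upset by flipping one bit of a double."""
    return struct.unpack("<d", struct.pack("<Q", to_bits(value) ^ (1 << bit)))[0]


def majority_vote(a: float, b: float, c: float) -> float:
    """Return the value at least two copies agree on, bit for bit."""
    ba, bb, bc = to_bits(a), to_bits(b), to_bits(c)
    if ba == bb or ba == bc:
        return a
    if bb == bc:
        return b
    raise RuntimeError("no two copies agree; recompute")


def dot(xs, ys):
    """A stand-in workload: the dot product of two vectors."""
    return sum(x * y for x, y in zip(xs, ys))


if __name__ == "__main__":
    xs, ys = [0.5, 1.5, -2.0], [4.0, 2.0, 1.0]
    good = dot(xs, ys)
    copies = [good, flip_bit(good, 62), good]  # one copy hit by a simulated upset
    print(copies, "->", majority_vote(*copies))
```

The price is triple compute for the protected work. The lower the error rate the chips actually show in orbit, the cheaper the correction scheme can be, and the more of the commercial chips' advantage survives.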

The Skeptics Have Tenure

The people most skeptical of orbital data centers are not tech commentators or competitors. They're orbital mechanics specialists and space physicists who have been studying the environment these satellites will operate in for decades.

Jonathan McDowell, an astrophysicist at the Harvard-Smithsonian Center for Astrophysics who has tracked every object launched into space since the late 1980s, described the concept to The Verge as often starting from "space is cool, let's do something in space" rather than a genuine need to be in orbit. His concern about Google's tightly-clustered constellation is specific: "If a thruster gets stuck, stuck on, or fails, and now you've got a rogue one in among all the others in this cluster of 81," he explained. A single thruster failure in an 81-satellite formation flying 100-200 meters apart could cause a cascading collision sequence.

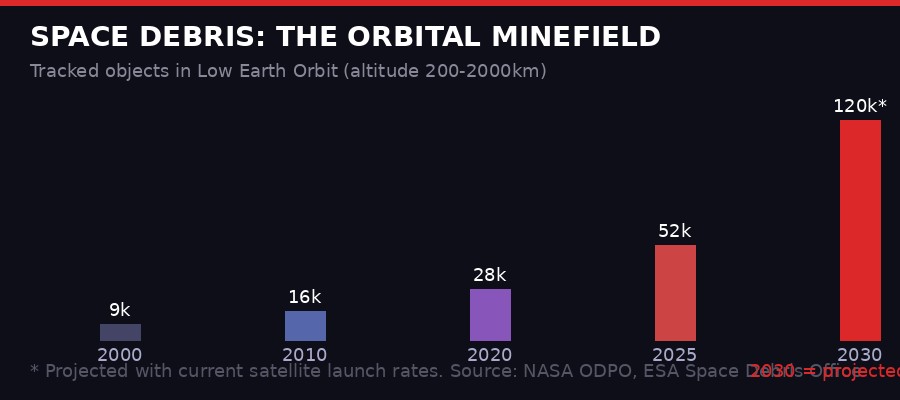

Mojtaba Akhavan-Tafti, associate research scientist of space sciences and engineering at the University of Michigan, raised a different concern: space debris. The sun-synchronous orbits Google has chosen for Suncatcher are among the most congested in low Earth orbit. Akhavan-Tafti told The Verge that the approximately 8,300 Starlink satellites made over 140,000 collision avoidance maneuvers in just the first half of 2025. An 81-satellite cluster flying in tight formation would likely need to maneuver as a unit - 81 satellites simultaneously performing collision avoidance - or risk breaking formation entirely.
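Maneuvering as a unit has a fuel cost that follows from the Tsiolkovsky rocket equation. Every avoidance maneuver spends propellant, and a cluster that must move together spends it on every member at once. The satellite mass, maneuver size, and specific impulse (Isp, a measure of thruster efficiency) below are hypothetical values for illustration.

```python
import math

G0 = 9.80665  # standard gravity, m/s^2


def propellant_kg(wet_mass_kg: float, delta_v_mps: float, isp_s: float) -> float:
    """Propellant burned for a maneuver of delta_v_mps (Tsiolkovsky rocket equation)."""
    exhaust_velocity = isp_s * G0
    return wet_mass_kg * (1.0 - math.exp(-delta_v_mps / exhaust_velocity))


def cluster_propellant_kg(n_sats: int, wet_mass_kg: float,
                          delta_v_mps: float, isp_s: float) -> float:
    """Every satellite in a rigid formation has to make the same maneuver."""
    return n_sats * propellant_kg(wet_mass_kg, delta_v_mps, isp_s)


if __name__ == "__main__":
    # Hypothetical: 1,000 kg satellites, 0.1 m/s dodge, electric thrusters at 1,500 s Isp.
    per_sat = propellant_kg(1_000.0, 0.1, 1_500.0)
    cluster = cluster_propellant_kg(81, 1_000.0, 0.1, 1_500.0)
    print(f"per satellite: {per_sat * 1e3:.1f} g, whole cluster: {cluster:.2f} kg per dodge")
```

Under these assumptions each dodge costs only about 7 g per satellite. But the cluster pays it 81 times over, and pays again with every new conjunction, so more traffic means steadily more fuel.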

Tracked objects in low Earth orbit have grown roughly 5x since 2000, with commercial satellite deployments accelerating the trend. Adding compute constellations of tens of thousands of satellites raises serious collision risk questions. Sources: NASA ODPO, ESA Space Debris Office.

Then there's the latency problem. Terrestrial AI training runs require GPUs to communicate with each other in microseconds. Inside a tight orbital cluster, light crosses 100-200 meters in well under a microsecond, so the laser links themselves add little propagation delay. The bigger constraints are inter-satellite bandwidth and the link to the ground. For tasks where the satellites are passing processed results down to Earth - and then users send new requests back up - the round-trip latency can be 20-30 milliseconds per exchange, compared to sub-millisecond for on-site GPU clusters. That's fine for inference (generating a response to a query). It's problematic for training (iteratively adjusting billions of parameters with fast feedback loops).
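Propagation delay is simple enough to compute directly. This sketch uses only the speed of light and spherical-Earth geometry. The 650 km altitude and the elevation angles are hypothetical. Real round trips add routing, queuing, and processing time on top, which helps explain end-to-end figures like 20-30 ms.

```python
import math

C_M_S = 299_792_458.0     # speed of light in vacuum, m/s
R_EARTH_M = 6_371_000.0   # mean Earth radius, m


def slant_range_m(altitude_m: float, elevation_deg: float) -> float:
    """Distance from a ground station to a satellite seen at the given elevation."""
    eps = math.radians(elevation_deg)
    r = R_EARTH_M + altitude_m
    return math.sqrt(r**2 - (R_EARTH_M * math.cos(eps)) ** 2) - R_EARTH_M * math.sin(eps)


def round_trip_s(distance_m: float) -> float:
    """Light-travel time there and back over distance_m."""
    return 2.0 * distance_m / C_M_S


if __name__ == "__main__":
    print(f"200 m intra-cluster hop, one way: {200 / C_M_S * 1e6:.2f} us")
    for elev in (90.0, 30.0, 10.0):
        d = slant_range_m(650e3, elev)
        print(f"ground link at {elev:.0f} deg elevation: {d / 1e3:,.0f} km, "
              f"round trip {round_trip_s(d) * 1e3:.1f} ms")
```

Under these assumptions, the ground round trip is roughly 4 ms with the satellite straight overhead and about 14 ms near the horizon. A 200 m hop inside the cluster takes under a microsecond.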

The cost calculation is also contentious. Launch costs have fallen dramatically - SpaceX's Starlink deployment drove per-kilogram costs to around $1,500-2,000 to low Earth orbit. But TPU and GPU hardware still costs tens of thousands of dollars per unit. Deploying, say, 81 satellites each containing the equivalent of a rack of AI hardware means three things. You fabricate space-qualified versions of commercial chips (much more expensive). You launch them (still not cheap at scale). And you accept that when they fail - and they will fail - you cannot service them. Terrestrial data centers replace failed servers for a few hundred dollars and a technician's time. Orbital replacements cost millions.
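The launch side of the ledger is straightforward multiplication. In this sketch, the $1,500-2,000 per kilogram range comes from the paragraph above. The 1,000 kg satellite mass and the hardware cost per satellite are hypothetical placeholders, not figures from any filing.

```python
def launch_cost_usd(n_sats: int, kg_per_sat: float, usd_per_kg: float) -> float:
    """Cost to lift n_sats satellites of a given mass at a given price per kg."""
    return n_sats * kg_per_sat * usd_per_kg


def replacement_cost_usd(hardware_usd: float, kg_per_sat: float, usd_per_kg: float) -> float:
    """A failed satellite can't be repaired: replacing it means new hardware plus a new launch."""
    return hardware_usd + kg_per_sat * usd_per_kg


if __name__ == "__main__":
    KG_PER_SAT = 1_000.0        # hypothetical satellite mass
    HARDWARE_USD = 5_000_000.0  # hypothetical build cost per satellite
    for price in (1_500.0, 2_000.0):
        fleet = launch_cost_usd(81, KG_PER_SAT, price)
        one = replacement_cost_usd(HARDWARE_USD, KG_PER_SAT, price)
        print(f"${price:,.0f}/kg: 81 sats = ${fleet / 1e6:,.1f}M to launch, "
              f"one replacement = ${one / 1e6:,.1f}M")
```

With these placeholders, launching the 81-satellite cluster costs roughly $120-160 million before any hardware is built. Every failed unit means a full rebuild and relaunch rather than a swap.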

"As the number of spacecraft increases, you have to dodge more often, so you have to use more fuel. It's not a free lunch." - Jonathan McDowell, Harvard-Smithsonian Center for Astrophysics, via The Verge

China's Silent Lead

While American billionaires generate press releases and FCC filings, China has already launched orbital compute infrastructure - quietly and without announcing target satellite counts to any regulatory body that would publish them.

In 2025, China deployed a constellation of a dozen supercomputer satellites capable of processing data in orbit. The details are limited because Chinese aerospace programs don't file with the FCC and don't publish Google-style research papers in English. What is known comes from state media announcements and tracking by organizations like McDowell's own catalog and the European Space Agency's debris monitoring network.

The Chinese approach appears to differ from the American one in a key way. Rather than building compute-first satellites designed for AI training, China's orbital supercomputers seem designed for data processing - running inference and analysis on satellite sensor data without beaming raw data to ground stations first. The latency of downlinking terabytes of imagery from reconnaissance satellites is a real military and scientific problem. Processing it in orbit and downlinking only results (coordinates, classifications, alerts) is a genuine efficiency gain.
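The downlink argument is easy to quantify. The sketch below estimates transfer time and ground-station passes for raw data versus processed results. The 1 TB image volume, 1 Gbps link rate, 10-minute pass length, and 10 MB result size are hypothetical figures, not published specifications of any Chinese system.

```python
import math


def downlink_seconds(n_bytes: float, link_bps: float) -> float:
    """Time to move n_bytes over a link of link_bps bits per second."""
    return n_bytes * 8 / link_bps


def passes_needed(n_bytes: float, link_bps: float, pass_seconds: float) -> int:
    """Ground-station passes needed, assuming the link runs at full rate each pass."""
    return math.ceil(downlink_seconds(n_bytes, link_bps) / pass_seconds)


if __name__ == "__main__":
    LINK_BPS = 1e9   # hypothetical 1 Gbps downlink
    PASS_S = 600.0   # hypothetical 10-minute ground-station pass
    for label, size in (("raw imagery, 1 TB", 1e12), ("processed results, 10 MB", 1e7)):
        t = downlink_seconds(size, LINK_BPS)
        print(f"{label}: {t:,.2f} s, {passes_needed(size, LINK_BPS, PASS_S)} pass(es)")
```

Under these assumptions, the raw data needs over two hours of link time spread across 14 passes. The processed results fit in a fraction of a second of a single pass.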

This distinction matters. China may be solving a real, bounded problem that existing satellite technology can address. The American proposals - particularly Blue Origin's 52,000-satellite constellation - are aimed at a much larger and less-defined opportunity: general-purpose AI compute infrastructure that can serve commercial cloud workloads.

Europe has noticed. The European Space Policy Institute published a report calling orbital data centers "the next rapidly emerging opportunity" and urging European governments and space agencies to develop policy frameworks before American and Chinese companies establish dominant positions. The EU's approach to AI regulation - restrictive, rights-focused - is likely to conflict with military-adjacent orbital compute programs in ways Brussels is only starting to think through.

The orbital AI compute race has accelerated from concept to FCC filings in under 18 months. Google, SpaceX, and Blue Origin are all moving simultaneously toward commercial deployments, with China already operational at small scale.

What the Grid Crisis Is Actually Driving

To understand why these proposals are gaining traction now, you have to understand what's happening to electricity grids. The AI buildout has created a demand surge that utility planners did not anticipate and cannot easily accommodate.

In Virginia's data center corridor - Northern Virginia hosts more data center capacity than anywhere else on Earth - Dominion Energy has told prospective customers that new large load connections may not be available until 2030 or later. Ireland became a data center hub partly due to favorable EU regulation and renewable energy, and data centers there now consume more than a fifth of the country's metered electricity. Texas grid operators have raised concerns about multi-gigawatt AI data center proposals straining a grid already stressed by population growth and extreme heat events.

The grid constraint is not just a permitting problem. In many regions, there is literally not enough generation capacity planned or funded to serve the AI compute buildout that is currently being announced. Utility-scale solar and wind projects take 3-5 years from permitting to operation. New nuclear takes a decade. The AI labs and cloud providers want compute now, not in 2032.

This is what makes the orbital pitch land differently than it would have five years ago. In 2019, proposing to solve the data center power problem by putting GPUs in space would have sounded like science fiction. In 2026, when utilities are telling hyperscalers they cannot connect new campuses for four years, "build it in orbit" sounds like a radical but not obviously insane alternative.

The key question is timeline. Google's prototype launch is in 2027. Full constellation deployment, assuming the prototype works, is at minimum 2029-2030. Blue Origin's 52,000-satellite constellation, if approved and funded, would take years of production and launch cadence to deploy. The AI compute crisis is happening now. Orbital solutions are years away from any commercial scale.
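Launch cadence alone sets a floor on how long a constellation takes to deploy. In this sketch, the 51,912 satellite count comes from Blue Origin's filing as described above. The satellites per launch and launches per year are hypothetical, and the estimate ignores production limits and replacement of failed units.

```python
import math


def launches_needed(n_sats: int, sats_per_launch: int) -> int:
    """Number of launches, rounding up for a partially filled final launch."""
    return math.ceil(n_sats / sats_per_launch)


def years_to_deploy(n_sats: int, sats_per_launch: int, launches_per_year: float) -> float:
    """Minimum deployment time set purely by launch cadence."""
    return launches_needed(n_sats, sats_per_launch) / launches_per_year


if __name__ == "__main__":
    N_SATS = 51_912  # Blue Origin's filed constellation size
    for per_launch, per_year in ((50, 25), (50, 50), (100, 100)):  # hypothetical cadences
        years = years_to_deploy(N_SATS, per_launch, per_year)
        print(f"{per_launch} sats/launch, {per_year} launches/yr: {years:.1f} years")
```

The most aggressive of these hypothetical cadences, 100 satellites on each of 100 launches a year, still needs more than five years of continuous flying.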

"The idea is often 'space is cool, let's do something in space' rather than starting from 'we really need to be in space to do this.' Sometimes those align. Sometimes they don't." - Jonathan McDowell, Harvard-Smithsonian Center for Astrophysics

The Second-Order Effects Worth Watching

If even one of these orbital compute programs succeeds at meaningful scale - say, 1,000 satellites each with significant processing capacity - the ripple effects go well beyond AI infrastructure costs.

First, sovereignty. A compute constellation in orbit sits outside national territory in the traditional sense. Under the 1967 Outer Space Treaty, the country that registers a satellite keeps jurisdiction over it, but that framework was written for spacecraft, not for processing other people's data. This has obvious appeal to companies wanting to serve customers across regulatory borders. It also raises genuinely novel legal questions. If a European user's query gets processed on a satellite over international waters (or over Russia, or China), which country's data protection law applies? The answer is currently "nobody knows," which is either a feature or a bug depending on your perspective.

Second, military applications. The line between commercial orbital compute and military surveillance infrastructure is thin. A constellation of satellites with powerful onboard processing capability could, with software updates, shift from running ChatGPT queries to analyzing targeting data. The US military's interest in commercial space infrastructure is well-documented - the commercial imagery industry built on Planet, Maxar, and BlackSky has become integral to Pentagon operations. Orbital compute is a natural extension.

Third, the Kessler cascade risk. The 1978 hypothesis by NASA scientist Donald Kessler proposed that once orbital debris density reaches a certain threshold, collisions generate more debris, which causes more collisions, in a runaway cascade that makes certain orbital altitudes permanently unusable. The low Earth orbit environment is not at that threshold yet, but adding tens of thousands of compute satellites to an already-crowded environment raises the probability of triggering it. Unlike ground-based data center pollution, a Kessler cascade is irreversible on human timescales.
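The threshold idea can be illustrated with a deliberately crude toy model, not Kessler's actual analysis. Atmospheric drag removes fragments at a rate proportional to the population N. Collisions depend on pairs of objects, so they add fragments at a rate proportional to N². Above the crossover N* = 1/(βτ), the quadratic term wins and the population runs away. The lifetime τ and collision coefficient β are hypothetical, and the model ignores launches, altitude bands, and fragment sizes.

```python
def debris_rate(n: float, lifetime_years: float, beta: float) -> float:
    """dN/dt = -N/tau + beta*N^2: drag removal versus collision-generated fragments."""
    return -n / lifetime_years + beta * n * n


def critical_population(lifetime_years: float, beta: float) -> float:
    """Population where removal and fragment generation balance (an unstable point)."""
    return 1.0 / (beta * lifetime_years)


def simulate(n0: float, lifetime_years: float, beta: float,
             years: float, dt: float = 0.01, cap: float = 1e12) -> float:
    """Forward-Euler integration; stops early if the population runs away past cap."""
    n = n0
    for _ in range(int(round(years / dt))):
        n += dt * debris_rate(n, lifetime_years, beta)
        if n > cap:
            break
    return n


if __name__ == "__main__":
    TAU, BETA = 25.0, 1e-6  # hypothetical: 25-year lifetime, collision coefficient per object-year
    n_star = critical_population(TAU, BETA)
    print(f"critical population: {n_star:,.0f}")
    for n0 in (0.75 * n_star, 1.25 * n_star):
        print(f"start {n0:,.0f} -> after 20 years {simulate(n0, TAU, BETA, 20):,.0f}")
```

Starting 25% below the crossover, the toy population shrinks over 20 years. Starting 25% above it, the population grows and keeps accelerating. Real debris models are far more detailed, but the same asymmetry is why adding large constellations worries debris researchers: every added object moves N closer to the crossover.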

Fourth, power projection. Right now, internet infrastructure is largely terrestrial - undersea cables, ground-based data centers, land-based ISP networks. All of it can be physically targeted, interdicted, or regulated. Orbital compute infrastructure is significantly harder to physically attack. That makes it attractive for applications where resilience matters - financial settlement systems, military command and control, intelligence agency operations. The companies filing FCC applications today may find that their best customers in ten years are not consumer AI applications but national security agencies.

The Honest Assessment

Orbital AI data centers will probably work, in some form, eventually. The physics genuinely permits it. Solar power in space is real. Radiative cooling in vacuum is real. Laser communication between satellites is real - it's already used in Starlink's inter-satellite links. None of this is fantasy physics.

What remains genuinely uncertain is whether the economics close, whether the debris environment can absorb tens of thousands more satellites, and whether the latency constraints limit orbital compute to inference applications rather than full training runs.

Google's approach - a focused, technically documented research moonshot with a clear prototype plan and realistic timelines - is the most credible of the current proposals. Blue Origin's 52,000-satellite filing reads more like a land grab for FCC spectrum and orbital slot allocation than an immediate deployment plan. Filing for orbital rights is cheap. Actually building and launching 52,000 satellites is not.

The Starcloud prototype is interesting precisely because it exists. A single satellite with H100 GPUs in orbit, actually running workloads (at whatever efficiency), is worth more than 10,000 press releases. The data from that satellite - radiation effects on commercial GPUs, actual power generation versus projections, thermal management in practice - will tell the engineers things that no simulation can.

The compute crisis that is driving these investments is real and not going away. AI models are getting larger, not smaller. The scaling trend, in which each generation of capability requires roughly 10x the compute of the previous one, has held for five years. Somewhere in the 2028-2032 window, ground-based compute infrastructure will hit hard limits - not just permitting and grid constraints, but the physical limits of how much silicon you can cool with water and power from a stressed grid.

At that point, looking up will stop being a billionaire's moonshot and start being an engineering necessity. The question is who owns the orbital positions, who owns the launch capacity, and who set the technical standards when it was still optional. Those decisions are being made right now, in FCC filings and research papers and VC term sheets, while most of the world is focused on what the AI models can do rather than where the compute to run them is coming from.
