OpenAI Buys the Python Ecosystem: What the Astral Acquisition Really Means

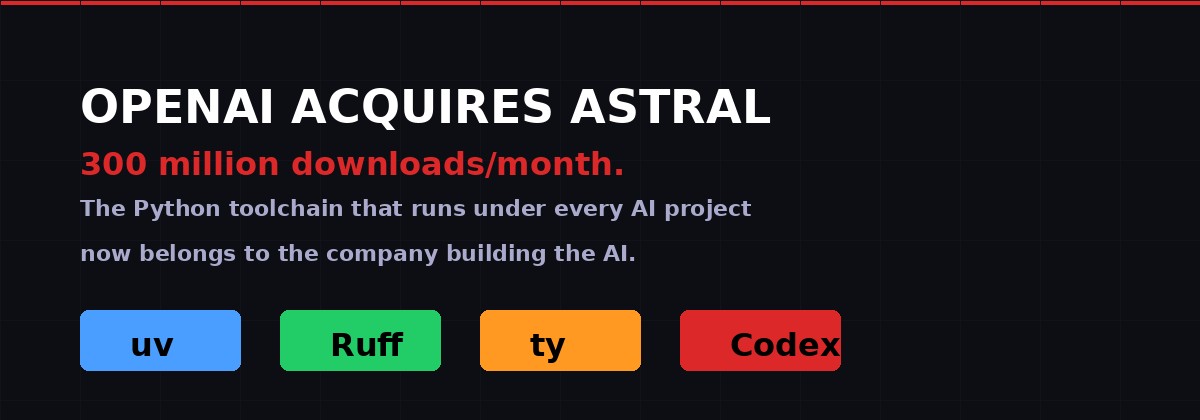

uv. Ruff. ty. Three tools downloaded 300 million times a month. Soon to be the property of OpenAI. This is not a developer tools deal - it's a land grab for the infrastructure layer that sits underneath every AI coding workflow on the planet.

Astral's three tools - uv (package manager), Ruff (linter/formatter), and ty (type checker) - are collectively downloaded more than 300 million times a month. On March 19, 2026, OpenAI agreed to acquire them. Credit: BLACKWIRE/PRISM

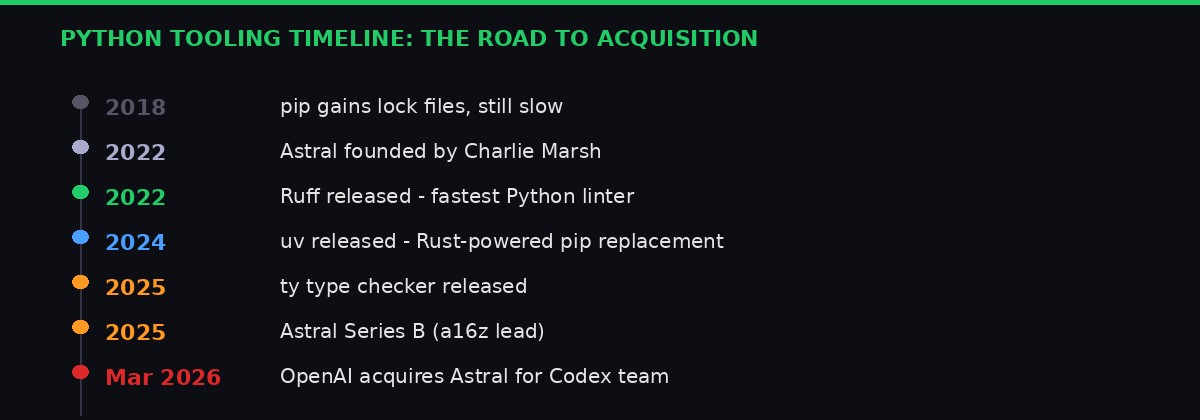

The announcement dropped on Hacker News at around 9 AM Pacific time on March 19, 2026, and within ten hours it had become the most-discussed tech story of the week. OpenAI is acquiring Astral - the startup behind uv, Ruff, and ty, three tools that have quietly become load-bearing pillars of modern Python development.

The Hacker News post gathered 1,169 points and 723 comments. Most of that discussion wasn't celebratory. Developers who depend on Astral's tools every day were asking a question that nobody at OpenAI or Astral has fully answered yet: what happens when the company that controls your AI coding assistant also controls the tools your code uses to run, lint, and type-check itself?

This isn't paranoia. It's pattern recognition. And to understand why this acquisition matters far beyond the developer tools market, you need to understand what Astral actually built.

What Astral Actually Is - And Why It Matters

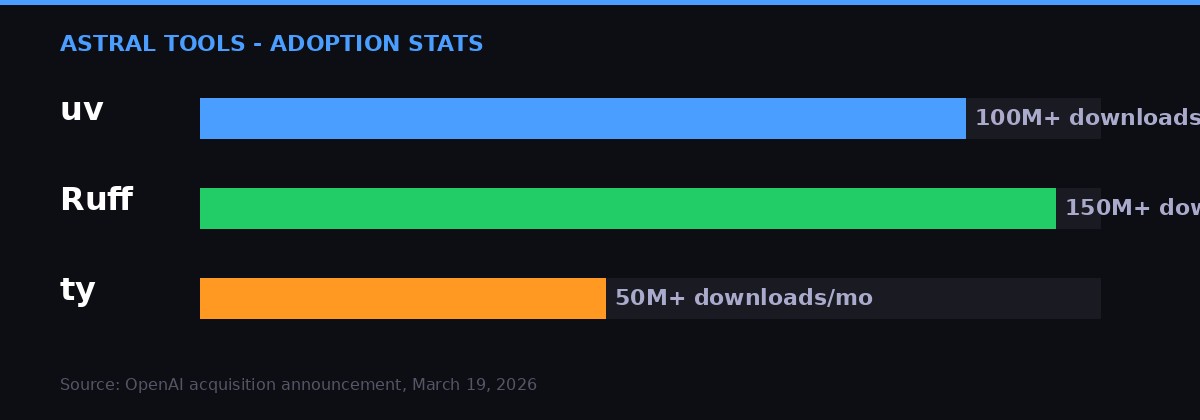

Download volumes for Astral's three tools. Ruff became the dominant Python linter faster than any tool in the ecosystem's history. uv replaced pip in millions of workflows within two years. Credit: BLACKWIRE/PRISM

Astral was founded in 2022 by Charlie Marsh, a solo technical founder who had previously worked at Stripe. The thesis was simple but ambitious: Python's developer tooling was slow, fragmented, and badly in need of a rewrite in a systems language. Rust, specifically.

The first tool Marsh shipped was Ruff - a Python linter and formatter written in Rust that ran 10 to 100 times faster than its competitors. That kind of performance gap is hard to ignore. A linter that finishes a large codebase in 300 milliseconds instead of 30 seconds isn't just a convenience improvement - it changes how you work. Ruff spread through Python projects like wildfire. By early 2026 it was pulling over 150 million downloads per month, according to the OpenAI acquisition announcement.

The second, and arguably more strategically significant, tool was uv. Released in 2024, uv was built to replace pip - Python's package installer - and to take over the jobs of venv, pyenv, pipx, poetry, and virtualenvwrapper. It did all of this while being 10 to 100 times faster than the tools it replaced, thanks to parallel downloads, intelligent caching, and a dependency resolver written from scratch in Rust rather than ported from existing Python implementations.
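To make "dependency resolution" concrete: the resolver has to choose exactly one version of every package so that all version constraints hold at the same time, and when an early choice leads to a conflict, it has to back up and try something else. The sketch below is a deliberately naive backtracking resolver over a small hypothetical index (the package names and integer versions are invented for illustration). It is not how uv works internally: uv's resolver builds on the PubGrub algorithm, which learns from conflicts instead of re-exploring the same dead ends.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical index: package -> {version: {dependency: allowed versions}}.
# Versions are plain integers to keep comparisons trivial.
Index = Dict[str, Dict[int, Dict[str, List[int]]]]

INDEX: Index = {
    "app": {1: {"web": [2, 3], "db": [1]}},
    "web": {3: {"db": [2]}, 2: {"db": [1]}},
    "db": {2: {}, 1: {}},
}


def resolve(index: Index, root: str) -> Optional[Dict[str, int]]:
    """Pick one version per package so every constraint holds, preferring newest."""

    def solve(pending: List[Tuple[str, List[int]]],
              chosen: Dict[str, int]) -> Optional[Dict[str, int]]:
        if not pending:
            return chosen  # every constraint has been satisfied
        (name, allowed), rest = pending[0], pending[1:]
        if name in chosen:
            # Already pinned: the existing pin must also satisfy this constraint.
            return solve(rest, chosen) if chosen[name] in allowed else None
        for version in sorted(allowed, reverse=True):  # try newest first
            if version not in index.get(name, {}):
                continue  # constraint mentions a version that doesn't exist
            deps = list(index[name][version].items())
            result = solve(rest + deps, {**chosen, name: version})
            if result is not None:
                return result
        return None  # no candidate worked; the caller backtracks

    return solve([(root, list(index[root]))], {})


print(resolve(INDEX, "app"))  # {'app': 1, 'web': 2, 'db': 1}
```

In the example, the newest `web` (3) needs `db` 2, but `app` only accepts `db` 1, so the resolver backs up and settles on `web` 2. Naive backtracking like this can blow up exponentially on real package graphs, which is part of why resolver design matters so much for speed.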

The reaction from developers who switched to uv bordered on religious. The Hacker News thread discussing the acquisition is littered with testimonials: "The day I first used uv is as memorable to me as the day I first started using Python." "Everything is instant - so you never experience any cognitive distraction." These aren't marketing quotes. They're unsolicited comments from engineers who spend their days in terminals.

The third tool, ty, is a static type checker for Python - Astral's answer to mypy and pyright. It launched more recently and already sees more than 50 million downloads a month, a number that is still growing rapidly.

Combined, these three tools form something that didn't exist three years ago: a coherent, Rust-powered Python toolchain that handles the full development lifecycle from environment creation to dependency resolution to code quality enforcement to type safety. [Source: OpenAI acquisition announcement, astral.sh/blog/openai, March 19, 2026]

The Strategic Logic: Why OpenAI Wanted This

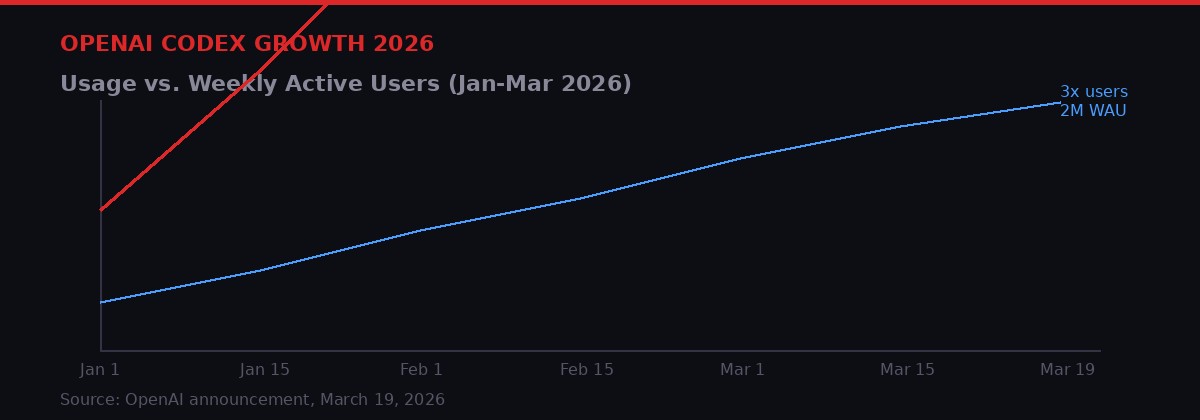

OpenAI Codex has seen 3x user growth and 5x usage increase since January 2026, with over 2 million weekly active users by March. The Astral acquisition is designed to extend Codex deeper into the development workflow. Credit: BLACKWIRE/PRISM

OpenAI's announcement was unusually candid about the strategic rationale. Codex - OpenAI's AI coding agent - has been growing fast: 3x user growth and 5x usage increase in the first quarter of 2026 alone, with 2 million weekly active users. But OpenAI has articulated a vision for Codex that goes beyond generating code snippets. They want Codex to be an AI that participates in "the entire development workflow - helping plan changes, modify codebases, run tools, verify results, and maintain software over time."

Astral's tools sit directly in that workflow. When Codex generates Python code, it needs to run in an environment (uv handles that), the code needs to be linted and formatted (Ruff), and type errors need to be caught before deployment (ty). By acquiring Astral, OpenAI doesn't just get a team of talented engineers - it gets hooks into the Python development cycle at every stage.
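The workflow in the previous paragraph maps onto a handful of real commands: `uv sync` to create or refresh a project's environment, `ruff check` and `ruff format --check` to lint and verify formatting, and `ty check` to type-check, here invoked through `uvx` (uv's shorthand for `uv tool run`). The sketch below chains them fail-fast, the way a CI job or an agent loop might. It assumes a uv-managed project with a `pyproject.toml`; the ordering and the stop-at-first-failure policy are illustrative choices, not a description of how Codex drives these tools, which OpenAI has not published.

```python
import shutil
import subprocess
from typing import List

# Each stage of the generate -> environment -> lint -> format -> type-check loop.
PIPELINE: List[List[str]] = [
    ["uv", "sync"],                             # create/refresh the project environment
    ["uvx", "ruff", "check", "."],              # lint
    ["uvx", "ruff", "format", "--check", "."],  # verify formatting without rewriting files
    ["uvx", "ty", "check"],                     # static type check
]


def run_pipeline(stages: List[List[str]]) -> bool:
    """Run each stage in order; return False at the first missing tool or failure."""
    for argv in stages:
        if shutil.which(argv[0]) is None:
            print(f"skipping: {argv[0]} not installed")
            return False
        result = subprocess.run(argv)
        if result.returncode != 0:
            print(f"failed: {' '.join(argv)}")
            return False
    return True
```

Call `run_pipeline(PIPELINE)` from the project root; it returns `True` only if every stage exits with status 0.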

"By bringing Astral's tooling and expertise to OpenAI, we will accelerate our work on Codex and expand what AI can do across the software development lifecycle." - Thibault Sottiaux, Codex Lead at OpenAI

The second-order reading of this is more pointed. If Codex can interact natively with uv to spin up environments, call Ruff to format its own output, and run ty checks before presenting code to users, the result is an AI coding agent that is dramatically more capable than competitors who have to integrate with these tools from the outside, through their public releases. OpenAI would have a first-mover advantage on tight integration, and it could theoretically move the goalposts by shipping toolchain updates that work best with Codex's specific output patterns.

This is not a fringe worry. It's exactly what the developer community on Hacker News spent more than 700 comments discussing. One commenter put it bluntly: "When the tooling authors are employees of one provider or another, you can bet that those providers will be at least a few versions ahead of the public releases of those build tools, and will enjoy local economies of scale in their pipelines that may not be public at all." [Source: Hacker News discussion, item #47438723, March 19, 2026]

The Open Source Promise - And Why It May Not Hold

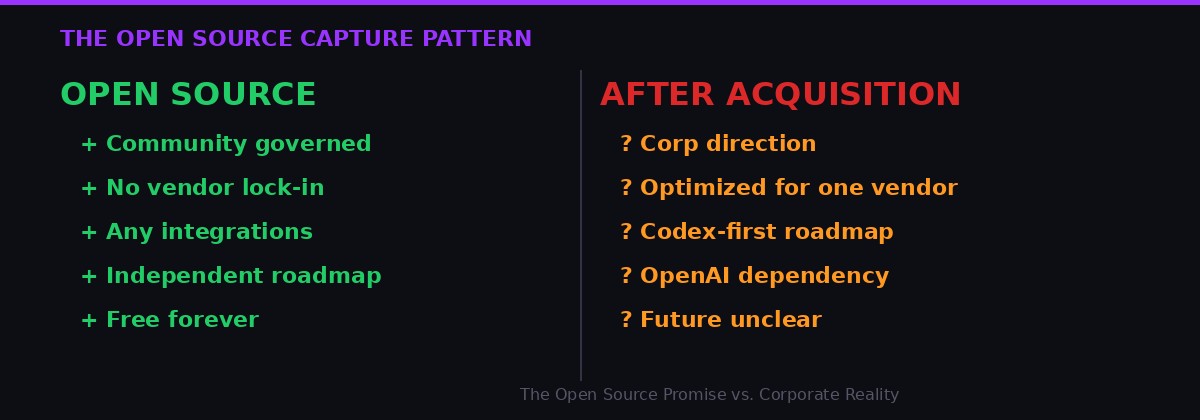

The pattern of corporate acquisition of open-source tooling. The code remains accessible; the roadmap, priorities, and deep integrations increasingly align with the acquirer's commercial interests. Credit: BLACKWIRE/PRISM

Both OpenAI and Astral's founder Charlie Marsh were emphatic on one point: the tools will remain open source after the deal closes. "OpenAI will continue supporting our open source tools after the deal closes," Marsh wrote. "We'll keep building in the open, alongside our community - and for the broader Python ecosystem - just as we have from the start." [Source: astral.sh/blog/openai, March 19, 2026]

The tech industry has heard variations of this promise before. The details matter. What does "supporting" mean in practice? Does it mean the tools stay MIT-licensed and community-governed? Or does it mean OpenAI employs the engineers and sets the priorities?

The history of corporate open-source acquisitions is not encouraging. The pattern is not that companies acquire open-source projects and immediately turn them proprietary. The pattern is subtler: the roadmap gradually aligns with the acquirer's commercial interests. Features that benefit the parent company ship faster. Integrations with competing services get deprioritized or abandoned. The community retains the code but loses the architects.

In this case, the concern is particularly acute because the Astral team is joining OpenAI's Codex team specifically - not a neutral developer tooling team. They will be building an AI coding product. Their incentive structure, performance reviews, and career trajectories will be tied to Codex's success. The idea that uv and Ruff will continue being developed in a purely community-oriented way, free from the gravitational pull of OpenAI's commercial priorities, is an act of faith rather than a structural guarantee.

One Hacker News commenter made the key point with precision: "The leadership and product direction work are at least as hard as the code work. Astral/uv has absolutely proven this, otherwise Python wouldn't be a boneyard for build tools. Projects - including forks - fail all the time because the leadership/product direction on a project goes missing despite the tech still being viable." [Source: HN comment, item #47438723]

In other words: the license isn't the only thing that matters. The people are.

The Python Ecosystem's Dependency Problem

The Python tooling landscape has been chaotic for years - pip, poetry, pipenv, conda, pyenv all competing without a clear winner. Astral's tools resolved that chaos in three years. The acquisition puts that resolution into OpenAI's hands. Credit: BLACKWIRE/PRISM

To appreciate why the developer community is concerned, you have to understand how bad Python's tooling situation was before Astral. The language had pip (slow, resolver issues), virtualenv (creates isolated environments but requires separate management), pyenv (manages Python versions), poetry (better but slow and complex), pipenv (tried to unify things, largely failed), and conda (heavyweight, enterprise-skewed). Each project had its own quirks, failure modes, and devoted factions.

The result was that getting a new Python developer up to speed required a lecture on package management whose details Python veterans still disagree about. Debugging environment conflicts was a rite of passage, not a bug.

uv solved this by being so fast and comprehensive that the argument about which tool to use became moot. You just used uv. The downloads prove it: when a tool goes from zero to tens of millions of monthly downloads in under two years, it's not just popular - it's become default infrastructure. Companies run uv in CI pipelines. Enterprises depend on it for reproducible builds. Open source projects adopt it because contributors expect it.
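Reproducible builds rest on a simple mechanism: the lockfile pins not only versions but cryptographic hashes of the exact artifacts, and the installer refuses any download whose bytes don't match. uv's `uv.lock` records such hashes. The sketch below shows only the verification step, using a hypothetical, simplified dictionary in place of the real TOML lockfile format; the filename and bytes are made up.

```python
import hashlib
from typing import Dict

# Hypothetical, simplified lockfile: artifact filename -> expected "sha256:<hex>".
# (uv's real uv.lock is a TOML file with a much richer structure.)


def sha256_digest(data: bytes) -> str:
    """Format a SHA-256 digest the way lockfiles commonly record it."""
    return "sha256:" + hashlib.sha256(data).hexdigest()


def verify_artifact(lock: Dict[str, str], filename: str, data: bytes) -> None:
    """Raise ValueError unless the downloaded bytes match the pinned hash."""
    expected = lock.get(filename)
    if expected is None:
        raise ValueError(f"{filename} is not in the lockfile")
    actual = sha256_digest(data)
    if actual != expected:
        raise ValueError(f"hash mismatch for {filename}: {actual} != {expected}")


wheel = b"pretend these are wheel bytes"
lock = {"example_pkg-1.0-py3-none-any.whl": sha256_digest(wheel)}
verify_artifact(lock, "example_pkg-1.0-py3-none-any.whl", wheel)  # passes silently
```

Because every machine checks the same hashes, a CI runner and a laptop either install byte-identical artifacts or fail loudly, which is why a tool in this position becomes load-bearing.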

That kind of adoption creates leverage. Whoever controls uv now has a say - direct or indirect - in how hundreds of millions of Python environments are created and managed. They can prioritize certain package registries. They can optimize for certain hosting providers. They can define what "correct" dependency resolution means, and their definition will propagate across the ecosystem.
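One concrete lever is index priority. When a project is configured with more than one package index, the installer needs a rule for which index wins if several serve a package with the same name. uv exposes this as an `--index-strategy` setting and, by default, stops at the first index that has the package, a guard against dependency-confusion attacks. The sketch below models two such policies over hypothetical in-memory indexes, loosely analogous to uv's `first-index` and `unsafe-best-match`; the function, index, and package names are illustrative, not uv internals.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical indexes in configured priority order: (index name, {package: [versions]}).
Indexes = List[Tuple[str, Dict[str, List[int]]]]

INDEXES: Indexes = [
    ("internal", {"acme-utils": [1, 2]}),
    ("public", {"acme-utils": [99], "requests": [2]}),  # a squatted "acme-utils" 99
]


def pick(indexes: Indexes, package: str, strategy: str) -> Optional[Tuple[str, int]]:
    """Return (index name, version) chosen for `package` under `strategy`."""
    if strategy == "first-index":
        # Only the first index that knows the package is consulted at all.
        for name, packages in indexes:
            if package in packages:
                return name, max(packages[package])
        return None
    if strategy == "best-match":
        # The newest version across every index wins, wherever it comes from.
        candidates = [(v, name) for name, packages in indexes for v in packages.get(package, [])]
        if not candidates:
            return None
        version, name = max(candidates)
        return name, version
    raise ValueError(f"unknown strategy: {strategy}")


print(pick(INDEXES, "acme-utils", "first-index"))  # ('internal', 2)
print(pick(INDEXES, "acme-utils", "best-match"))   # ('public', 99)
```

With identical configuration, the only thing that changes which `acme-utils` gets installed is the strategy default. That is the kind of quiet, policy-level decision whoever controls the tool gets to make.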

This isn't to say OpenAI will immediately abuse that leverage. It's to say they now have it. And the tech industry has not historically been good at leaving leverage on the table. [Source: Ars Technica, BLACKWIRE analysis, March 2026]

The Bigger Pattern: Capture of Developer Infrastructure

The developer infrastructure stack in 2026, layer by layer. AI companies are now present at every level from hardware to tooling. Neutrality is no longer the default. Credit: BLACKWIRE/PRISM

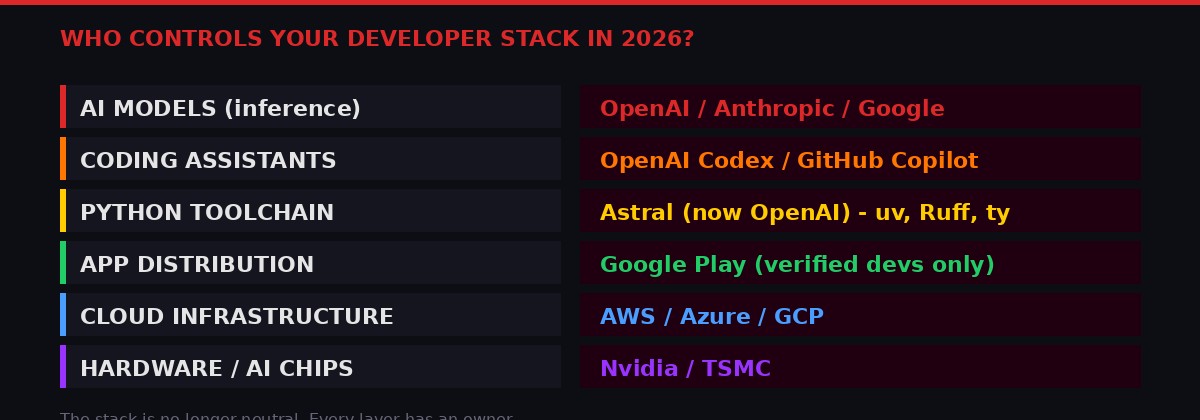

The Astral acquisition doesn't stand alone. It's the latest move in a broader pattern that has been accelerating throughout 2026: AI companies are acquiring the infrastructure that developers depend on, creating integration advantages that compound over time.

Consider what has happened in recent months. Microsoft, through GitHub, has Copilot embedded in VS Code, which dominates the editing environment where most developers spend their days. Anthropic has made partnerships with major cloud providers and is targeting enterprise developer workflows with Claude Code. Google has Gemini embedded throughout the Android and Cloud development stacks. And now OpenAI has not just a code-generating AI, but the package manager, linter, and type checker that code will be run through.

A second development landed the same day: Google's decision to lock down Android app sideloading, announced on March 19, 2026. Starting in September, Android will require developers to pay a $25 fee and submit their identity to Google before their apps can be installed on certified devices - which is virtually all Android phones globally. [Source: Ars Technica, "Google details new 24-hour process to sideload unverified Android apps," March 19, 2026]

These two stories are more connected than they appear. Whether it's Google requiring identity verification before you can distribute an Android app, or OpenAI acquiring the tools that manage how Python code is installed, checked, and run - both represent the gradual transfer of control over developer workflows from the public commons to private corporate infrastructure.

Google's stated rationale is security: users are 50 times more likely to encounter malware from apps installed outside the Play Store than inside it. That statistic is probably true. But the mechanism chosen - mandatory developer identity, locked to Google's approval - also conveniently centralizes all app distribution through Google's infrastructure, exactly at the moment when court orders from the Epic Games antitrust case are forcing Google to allow third-party app stores.

"In a lot of countries, there is chatter about if this isn't safer, then there may need to be regulatory action to lock down more of this stuff. I don't think that it's well understood that this is a real security concern in a number of countries." - Sameer Samat, Android Ecosystem President, to Ars Technica

Security is real. It is also convenient cover for consolidation. The two are not mutually exclusive, and treating them as if they were is naive. [Source: Ars Technica, Google Android developer verification coverage, March 19, 2026]

The Android Sideloading Crisis: A 9-Step Bureaucracy

Google's new "advanced flow" for installing unverified apps - a nine-step process including a mandatory 24-hour waiting period. This feature will be buried in developer settings and won't be surfaced to most users. Credit: BLACKWIRE/PRISM

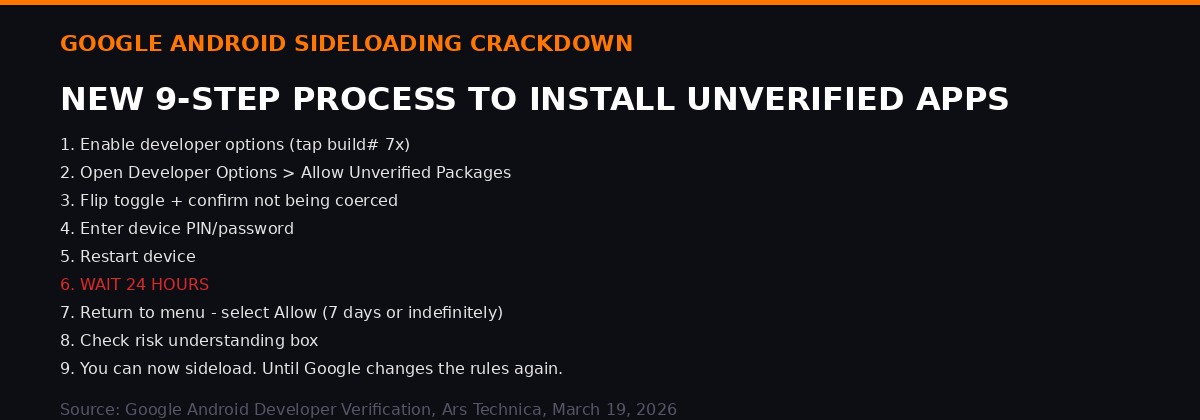

To appreciate the scale of the Android policy change, consider what it will require of a user who wants to install an app from a developer who hasn't submitted their identity to Google:

First, enable developer options by tapping the software build number seven times in About Phone. Then navigate to Settings, open Developer Options, scroll to "Allow Unverified Packages," flip the toggle, and confirm you are not being coerced - yes, there is a screen that asks you to confirm you're installing this app of your own free will. Then enter your device PIN. Restart the device. Wait 24 hours. Return to the menu. Scroll past additional warnings. Select "Allow temporarily" or "Allow indefinitely." Check a box confirming you understand the risks. You can now install the app.

That nine-step process, with a mandatory 24-hour delay, is Google's answer to the question: "What if a power user just wants to install an APK they found on the internet?"

The 24-hour delay is explicitly designed to prevent high-pressure social engineering attacks - scenarios where a scammer calls you pretending to be your bank and tells you to install an app immediately. There's genuine merit to that specific use case. But the same friction applies equally to a developer testing their own app, a security researcher deploying a custom tool, an F-Droid user installing open source software, or anyone in a country that won't be in the initial September rollout but still needs this capability.
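The 24-hour delay is a classic cooling-off gate: arming the setting starts a clock, and the permission only takes effect once the clock has run out, so a scammer on the phone cannot walk a victim through the whole flow in a single call. Below is a minimal sketch of such a gate; the class name and timings are hypothetical, and Google has not published how Android actually implements this.

```python
from datetime import datetime, timedelta
from typing import Optional

COOLING_OFF = timedelta(hours=24)


class UnverifiedInstallGate:
    """Hypothetical model of a toggle that only takes effect after a waiting period."""

    def __init__(self) -> None:
        self.armed_at: Optional[datetime] = None

    def arm(self, now: datetime) -> None:
        # Flipping the toggle (after PIN entry) starts the clock; re-arming restarts it.
        self.armed_at = now

    def can_install(self, now: datetime) -> bool:
        return self.armed_at is not None and now - self.armed_at >= COOLING_OFF


gate = UnverifiedInstallGate()
t0 = datetime(2026, 9, 1, 9, 0)
gate.arm(t0)
print(gate.can_install(t0 + timedelta(hours=1)))   # False: still inside the waiting period
print(gate.can_install(t0 + timedelta(hours=24)))  # True
```

Note that the gate cannot distinguish a scam victim from a developer sideloading their own build; the same 24 hours applies to both, which is exactly the trade-off at issue.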

Privacy advocates have flagged another dimension: the verification database. To verify their identity, developers outside Google Play must submit personal identification and pay a fee. That data now lives with Google, and it's subject to legal demands from governments worldwide. F-Droid board member Marc Prud'hommeaux made the concern explicit: "They say, 'Oh, we want to stop malware,' and that sounds all well and good, but show me your definition and demonstrate that this definition is going to be agreed upon by an independent consensus of security experts and the community. They don't do that. They just say malware's whatever we say it is, and when tomorrow they say, 'VPNs are malware,' then say goodbye to VPNs." [Source: Ars Technica, "With developer verification, Google's Apple envy threatens to dismantle Android's open legacy," March 2026]

There is also the question of developers in sanctioned nations. Someone building open-source software in Cuba or Iran will face additional barriers to verification that a developer in San Francisco won't. Google says the process "may vary across countries" but has provided no specifics on how this will be handled, so for now the answer is unknown. [Source: Ars Technica, March 19, 2026]

The Tesla FSD Wake-Up Call: When AI Degrades Silently

The NHTSA opened a preliminary investigation on March 18, 2026 into a failure of the degradation detection system in Tesla's FSD (Full Self-Driving) software. The system designed to alert drivers to AI performance degradation was itself broken. Credit: BLACKWIRE/PRISM based on NHTSA document INOA-EA26002-10023

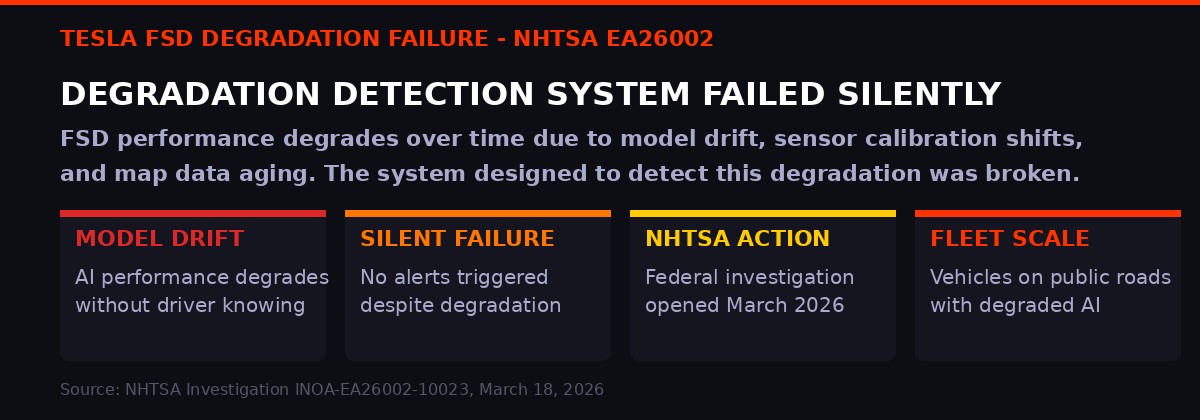

Separate from the developer tooling story, Thursday also surfaced a reminder of what happens when AI systems fail in physical-world contexts without adequate monitoring. The National Highway Traffic Safety Administration posted a preliminary investigation document, referenced as INOA-EA26002-10023, into a failure of the degradation detection in Tesla's Full Self-Driving system. [Source: NHTSA, static.nhtsa.gov/odi/inv/2026/INOA-EA26002-10023.pdf, filed March 18, 2026]

The specific failure is worth understanding precisely, because "Tesla FSD bug" understates it. This isn't about FSD making a bad decision in a specific driving scenario. It's about the failure of the system designed to detect when FSD performance has degraded over time and to alert the driver or flag the vehicle for review. The degradation detection mechanism itself was broken.

AI systems in vehicles degrade for multiple reasons: the underlying model may drift as road conditions evolve, sensor calibration shifts over time and miles driven, map data ages, and the statistical distribution of inputs the car encounters may shift in ways the original training data didn't account for. A well-engineered system includes monitoring that tracks whether the AI is performing within acceptable parameters and flags when it isn't.
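A minimal sketch of the kind of monitoring described here, assuming the system emits one hypothetical quality score per interval (higher is better, 1.0 is perfect). It flags degradation when a rolling average drops more than a tolerance below a healthy baseline. The metric, thresholds, and class name are invented for illustration; nothing here reflects how Tesla's system actually works.

```python
from collections import deque
from typing import Deque


class DegradationMonitor:
    """Flags when the rolling mean of a quality score falls too far below baseline."""

    def __init__(self, baseline: float, tolerance: float, window: int) -> None:
        self.baseline = baseline    # expected score when the system is healthy
        self.tolerance = tolerance  # how far the rolling mean may drop before flagging
        self.window = window
        self.scores: Deque[float] = deque(maxlen=window)  # oldest scores fall off

    def observe(self, score: float) -> bool:
        """Record one score; return True if performance looks degraded."""
        self.scores.append(score)
        if len(self.scores) < self.window:
            return False  # not enough data yet to judge
        rolling_mean = sum(self.scores) / self.window
        return rolling_mean < self.baseline - self.tolerance


monitor = DegradationMonitor(baseline=0.95, tolerance=0.05, window=3)
flags = [monitor.observe(s) for s in [0.96, 0.94, 0.95, 0.85, 0.80]]
print(flags)  # [False, False, False, False, True]
```

The first two observations are never flagged because the window isn't full yet; the fifth is flagged because the mean of the last three scores (about 0.87) has fallen below 0.90.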

Tesla's FSD reportedly had such a monitoring system. The NHTSA investigation suggests that system was not functioning correctly - meaning vehicles on public roads with degraded AI performance may have been operating without the safety layer that was supposed to catch that degradation. This is a second-order failure: not the AI making a mistake, but the quality-control system for the AI itself failing silently.
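The "silent failure" described here has a standard countermeasure: make the monitor prove it is alive. In a heartbeat or watchdog design, the monitor must check in at a fixed interval, and an independent component raises an alarm when the check-ins stop, so a broken monitor becomes loud rather than silent. A minimal sketch, with hypothetical names and timings:

```python
from typing import Optional


class Watchdog:
    """Raises an alarm when the monitored component stops sending heartbeats."""

    def __init__(self, timeout_s: float) -> None:
        self.timeout_s = timeout_s
        self.last_beat: Optional[float] = None

    def heartbeat(self, now: float) -> None:
        # Called by the degradation monitor each time it completes a check.
        self.last_beat = now

    def is_alarm(self, now: float) -> bool:
        # No heartbeat ever, or too long since the last one, counts as failure.
        return self.last_beat is None or now - self.last_beat > self.timeout_s


dog = Watchdog(timeout_s=10.0)
dog.heartbeat(now=0.0)
print(dog.is_alarm(now=5.0))   # False: the monitor checked in recently
print(dog.is_alarm(now=30.0))  # True: the monitor went quiet
```

The important design choice is that silence counts as failure: a monitor that stops running trips the alarm instead of quietly reporting nothing.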

The parallel to the developer tooling story is not superficial. In both cases, the question is: who is monitoring the systems that monitor other systems? When uv and Ruff are run inside Codex's AI-generated workflows, who verifies that the toolchain integration hasn't drifted in ways that favor certain outputs? When Android's developer verification database is queried to decide what software can run on billions of phones, what checks are in place to ensure that "malware" remains narrowly defined? And when FSD's performance monitoring breaks, how many miles pass before anyone notices?

These aren't isolated bugs. They're structural questions about who has visibility into AI systems that are becoming load-bearing infrastructure for modern life. [Source: NHTSA investigation INOA-EA26002-10023; Hacker News discussion item #47445175, March 19, 2026]

The Acquisition Terms and What Happens Next

The acquisition is structured as a standard corporate acquisition subject to regulatory approval. The Astral team - including Marsh and the engineers who built uv, Ruff, and ty - will join the Codex team at OpenAI. Until the deal closes, Astral and OpenAI remain separate and independent companies. No purchase price has been publicly disclosed.

The regulatory question is interesting. Developer tools acquisitions don't typically trigger antitrust scrutiny in the way that social media or ad-tech acquisitions might. There's no obvious market share calculation to perform. But regulators in the EU and UK have become increasingly attentive to the ways AI companies accumulate technical infrastructure. The EU AI Act creates new categories of oversight for "general-purpose AI" models, and while it's not obvious that uv or Ruff fall under those categories, the acquisition of foundational Python tooling by the world's most prominent AI company is exactly the kind of structural move that AI policy discussions have been warning about.

Closer to the ground, the open-source community has a few options. The tools are MIT-licensed, which means forking is legally permissible. Several forks of uv-adjacent tools exist or are being discussed. But as the Hacker News community noted extensively: code is not the hard part. The hard part is maintaining a coherent vision, shipping improvements at a competitive pace, and keeping a community engaged. Astral's team was exceptional at all three. Whoever might fork these tools faces the challenge of doing that work without the founding team.

Charlie Marsh's framing in his announcement post was earnest and probably sincere: he believes building at the frontier of AI and software is "the highest-leverage thing we can do." He may be right. The question isn't whether Marsh is acting in bad faith. The question is whether a founder's intentions survive intact when the company they built becomes a division of a much larger organization with its own commercial imperatives. [Source: Charlie Marsh, astral.sh/blog/openai, March 19, 2026]

What Developers Should Do Right Now

The practical implications for developers who rely on Astral's tools are not immediate. uv, Ruff, and ty will continue to work exactly as they do today. The acquisition hasn't closed yet. And even after it closes, the tools will remain available.

The question to monitor over the next 12 to 24 months is whether the roadmap shifts. Watch for: features that work significantly better when used through Codex than through other AI coding tools. Watch for integration with OpenAI's hosting infrastructure. Watch for changes to the dependency resolver that prioritize certain package sources. None of these things may happen. But they are worth watching, because they would be the early indicators of a gradually captured toolchain.

For developers who want to hedge, the options are: evaluate alternative tools (pdm and poetry still exist, though neither is as fast as uv), contribute to any community fork efforts that materialize, and document what they value about current Astral tools so they can articulate what a "fork test" would look like if needed.
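One low-effort hedge that makes a "fork test" concrete is to pin the tool versions a project has validated and have CI fail when the installed versions drift, so any roadmap change arrives as a deliberate upgrade rather than a surprise. The sketch below assumes each tool prints its version as `<name> X.Y.Z`, optionally followed by build details, when run with `--version`, as uv and Ruff currently do; the pinned values are placeholders, not recommendations.

```python
import re
import shutil
import subprocess
from typing import Dict, Optional

# Placeholder pins: replace with the versions your project has actually validated.
PINNED: Dict[str, str] = {"uv": "0.0.0", "ruff": "0.0.0"}

VERSION_RE = re.compile(r"^(\S+)\s+(\d+\.\d+\.\d+)")


def parse_version(output: str) -> Optional[str]:
    """Extract 'X.Y.Z' from a line of the form '<tool> X.Y.Z ...'."""
    match = VERSION_RE.match(output.strip())
    return match.group(2) if match else None


def check_pins(pins: Dict[str, str]) -> Dict[str, str]:
    """Return {tool: problem} for every tool that is missing or off-pin."""
    problems: Dict[str, str] = {}
    for tool, expected in pins.items():
        if shutil.which(tool) is None:
            problems[tool] = "not installed"
            continue
        out = subprocess.run([tool, "--version"], capture_output=True, text=True).stdout
        found = parse_version(out)
        if found != expected:
            problems[tool] = f"expected {expected}, found {found}"
    return problems
```

Run `check_pins(PINNED)` in CI and fail the job if the returned dict is non-empty.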

For everyone else - the billions of people who use Android phones and the drivers in Tesla vehicles - the news of this week is a reminder that the infrastructure of modern digital life is increasingly concentrated in a small number of corporate hands. That concentration has delivered genuine benefits: uv is genuinely faster than pip. Play Protect genuinely catches malware. FSD is demonstrably safer than some human drivers in some conditions. But concentrated infrastructure is infrastructure that can change, break, or be weaponized with consequences at scale.

Distributed by default was not just an ideology. It was a risk management strategy. The industry has been slowly abandoning it, and this week's news is just the latest step in a direction that has been clear for years. [BLACKWIRE analysis, March 20, 2026]
