NVIDIA's AI Factory OS: Dynamo 1.0 Drops With 7x Boost as Physical AI Goes Industrial

On a single day in March, NVIDIA launched ten products and partnerships that reframe how AI inference, enterprise agents, robotics, and autonomous systems are built. The central piece is Dynamo 1.0 - billed as the "operating system for AI factories." It's a bigger shift than it sounds.

NVIDIA GTC 2026 - March 16 saw a wave of 10 platform-level announcements spanning inference, robotics, enterprise agents, and autonomous vehicles. Source: BLACKWIRE/PRISM

Jensen Huang walked onto the GTC 2026 stage on March 16 and, over the course of one keynote, reshaped what NVIDIA is. Not a GPU company. Not even an AI chip company. A platform company building the full stack for what Huang calls "AI factories" - the industrial-scale infrastructure where models train, infer, plan, and act.

The marquee product was Dynamo 1.0, but nine other announcements came alongside it: a new CPU class called Vera, the third version of Cosmos world models, updated humanoid robot foundation models, enterprise agent toolkits, autonomous driving adoption from BYD and Nissan, a physical AI data factory blueprint, robotics alliances with over a dozen major manufacturers, a healthcare robotics expansion, and a T-Mobile partnership for edge AI networks.

Each announcement is significant. Together they describe a company that is not waiting for the AI boom to pick a winner. NVIDIA is building the pipes that every winner will need to run through.

1. Dynamo 1.0 - Why "OS for AI Factories" Is Not a Marketing Phrase

The framing matters here. Calling Dynamo an "operating system for AI factories" is a deliberate analogy. An OS doesn't run your applications - it allocates resources, schedules tasks, manages memory, and coordinates hardware so applications can run predictably at scale. That is exactly what Dynamo does for AI inference clusters.

Before Dynamo, running a large AI inference workload across a GPU cluster meant dealing with unpredictable request bursts, variable prompt lengths, massive context windows, and the challenge of multi-step agentic workflows where each step depends on the output of the last. Each of these creates a different pressure on GPU memory and compute. Without coordination, GPUs sit idle between steps, or run out of memory mid-inference, or route requests inefficiently. The result is wasted compute and high cost per token.

Dynamo 1.0 adds what NVIDIA describes as "smarter traffic control": it routes inference requests to GPUs that already hold the most relevant KV-cache (the attention keys and values computed for earlier tokens, effectively the model's short-term memory from earlier steps in a chain), avoiding redundant computation. It also uses NVIDIA's KVBM (KV Block Manager) to move cached data out of GPU memory to lower-cost memory and storage tiers when it is not needed, freeing GPU memory without discarding context.
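The routing idea can be sketched in a few dozen lines. This is a minimal illustration under stated assumptions, not Dynamo's router or the KVBM interface: prompts are split into fixed-size token blocks, each block's hash is chained to the hash of the prefix before it, and the router sends a request to the worker that already caches the longest matching prefix, breaking ties on load. The two-tier eviction (GPU memory offloading least-recently-used blocks to a host tier instead of dropping them) stands in for the kind of offloading KVBM performs. `Worker`, `route`, `block_hashes`, and `BLOCK_SIZE` are all hypothetical names.

```python
import hashlib
from collections import OrderedDict
from dataclasses import dataclass, field

BLOCK_SIZE = 4  # tokens per KV block; tiny for illustration (hypothetical value)


def block_hashes(tokens: list[int]) -> list[str]:
    """Hash each full block of tokens, chaining in the previous block's hash
    so that a block only matches when the entire prefix before it matches."""
    hashes: list[str] = []
    prev = ""
    full = len(tokens) - len(tokens) % BLOCK_SIZE  # ignore a trailing partial block
    for i in range(0, full, BLOCK_SIZE):
        chunk = ",".join(map(str, tokens[i:i + BLOCK_SIZE]))
        prev = hashlib.sha256(f"{prev}|{chunk}".encode()).hexdigest()
        hashes.append(prev)
    return hashes


@dataclass
class Worker:
    name: str
    gpu_capacity: int                      # max KV blocks held in GPU memory
    active_requests: int = 0
    gpu_blocks: OrderedDict = field(default_factory=OrderedDict)  # kept in LRU order
    host_blocks: set = field(default_factory=set)                 # offloaded tier

    def cached_prefix_len(self, hashes: list[str]) -> int:
        """Count leading blocks already cached in either tier."""
        n = 0
        for h in hashes:
            if h not in self.gpu_blocks and h not in self.host_blocks:
                break
            n += 1
        return n

    def admit(self, hashes: list[str]) -> None:
        """Make a request's blocks GPU-resident, offloading LRU blocks to the host tier."""
        for h in hashes:
            self.host_blocks.discard(h)        # bring back an offloaded block, if any
            self.gpu_blocks[h] = True
            self.gpu_blocks.move_to_end(h)     # mark as most recently used
            while len(self.gpu_blocks) > self.gpu_capacity:
                evicted, _ = self.gpu_blocks.popitem(last=False)
                self.host_blocks.add(evicted)  # offload instead of discarding


def route(workers: list[Worker], tokens: list[int]) -> Worker:
    """Pick the worker with the longest cached prefix; on a tie, the less loaded one."""
    hashes = block_hashes(tokens)
    best = max(workers, key=lambda w: (w.cached_prefix_len(hashes), -w.active_requests))
    best.admit(hashes)
    best.active_requests += 1
    return best


if __name__ == "__main__":
    a, b = Worker("gpu-a", gpu_capacity=8), Worker("gpu-b", gpu_capacity=8)
    system_prompt = list(range(16))                       # four shared blocks
    first = route([a, b], system_prompt + [100, 101, 102, 103])
    second = route([a, b], system_prompt + [200, 201, 202, 203])
    print(first.name, second.name)                        # gpu-a gpu-a
```

In the usage example, the second request shares a four-block system prompt with the first, so it lands on the same worker and only its final block has to be computed fresh. A production router would likely weigh more factors, such as queue depth and the cost of pulling blocks back from slower tiers; those are left out here.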

"Inference is the engine of intelligence, powering every query, every agent and every application. With NVIDIA Dynamo, we've created the first-ever 'operating system' for AI factories. The rapid adoption across our ecosystem shows this next wave of agentic AI is here, and NVIDIA is powering it at global scale." - Jensen Huang, Founder and CEO, NVIDIA (NVIDIA press release, March 16, 2026)

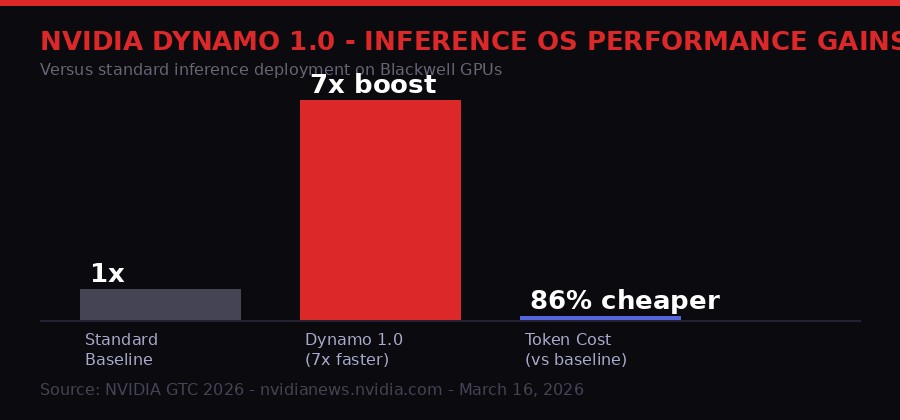

The benchmark result is striking: NVIDIA says Dynamo 1.0 boosts inference performance of NVIDIA Blackwell GPUs by up to 7x versus baseline. At a fixed hardware cost, that means cost per token can fall to as little as one-seventh, or equivalently up to 7x more token output (and revenue opportunity) from the same physical hardware, with no software license fee, since Dynamo is open source.

Dynamo 1.0 delivers up to 7x inference performance improvement on NVIDIA Blackwell GPUs. Token cost drops by approximately 86% versus unoptimized deployment. Source: NVIDIA GTC 2026
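The 86% figure in the caption follows from the 7x claim by simple arithmetic, assuming the hourly cost of the hardware, H, stays fixed and the whole speedup shows up as token throughput, with T tokens per hour at baseline and c the baseline cost per token:

```latex
c = \frac{H}{T}, \qquad
c' = \frac{H}{7T} = \frac{c}{7}, \qquad
1 - \frac{c'}{c} = 1 - \frac{1}{7} = \frac{6}{7} \approx 85.7\%
```

Because "up to 7x" is a best case, smaller speedups give proportionally smaller savings: a 2x speedup, for example, cuts cost per token by 50%.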

The adoption list reads like a who's who of AI infrastructure. Cloud providers - AWS, Microsoft Azure, Google Cloud, Oracle Cloud Infrastructure - have integrated Dynamo. NVIDIA cloud partners including CoreWeave, Alibaba Cloud, Together AI, and Nebius are on board. Enterprise users already include ByteDance, Meituan, PayPal, Pinterest, AstraZeneca, and BlackRock. AI-native companies Cursor and Perplexity are adopting it directly.

Cursor's adoption is particularly telling. Cursor is among the most compute-intensive consumer AI products - each code suggestion may require multiple model calls in sequence. Dynamo's ability to route chained agentic requests to GPUs that already hold relevant context is exactly the workload Cursor needs to optimize. The company's CEO Michael Truell confirmed Cursor is deploying Vera CPUs alongside Dynamo to improve throughput and responsiveness for its coding agent users.

Dynamo also integrates natively with major open source inference and agent frameworks, including vLLM, SGLang, LMCache, llm-d, and LangChain. This is a deliberate strategy. NVIDIA is not creating a closed-loop system that only works with NVIDIA software. It is plugging into the open source ecosystem that most AI engineers already use, which keeps adoption friction low.

2. The Vera CPU - When NVIDIA Designs Its Own Processor for Agent Workloads

The CPU has historically been an afterthought in AI infrastructure conversations. GPUs do the heavy lifting; CPUs handle orchestration and data movement. That framing is becoming obsolete as agentic AI workloads proliferate.

In an agentic AI system, the CPU is not just moving data. It is running tool execution, managing state machines, orchestrating multi-step plans, handling API calls, running code interpreters, and managing the control flow that decides which GPU workload to dispatch next. As agents become more complex - managing dozens of parallel tool calls or coordinating multiple sub-agents - CPU performance becomes a real bottleneck.
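To make the CPU's role concrete, here is a minimal sketch, using Python's standard `asyncio` library, of the control-plane work an agent host does when one model step requests several tool calls at once: fan the calls out, cap concurrency, collect results in request order, and report failures back rather than crashing. The tools, their latencies, and the `max_parallel` limit are hypothetical; note that no GPU work happens anywhere in this loop.

```python
import asyncio


# Hypothetical tools standing in for API calls, code execution, or lookups.
async def search_docs(query: str) -> str:
    await asyncio.sleep(0.01)          # simulated network latency
    return f"docs for {query!r}"


async def run_tests(target: str) -> str:
    await asyncio.sleep(0.02)          # simulated subprocess time
    return f"tests passed for {target}"


TOOLS = {"search_docs": search_docs, "run_tests": run_tests}


async def execute_tool_calls(calls: list[tuple[str, str]], max_parallel: int = 8) -> list[str]:
    """Run the tool calls requested by one model step concurrently (at most
    max_parallel at a time) and return results in the order they were requested."""
    limiter = asyncio.Semaphore(max_parallel)

    async def run_one(name: str, arg: str) -> str:
        async with limiter:
            tool = TOOLS.get(name)
            if tool is None:
                return f"error: unknown tool {name}"  # report to the model instead of crashing
            return await tool(arg)

    return list(await asyncio.gather(*(run_one(n, a) for n, a in calls)))


if __name__ == "__main__":
    step = [("search_docs", "KV cache"), ("run_tests", "router"), ("lint", "router")]
    for result in asyncio.run(execute_tool_calls(step)):
        print(result)
```

Multiply this by dozens of tool calls per step and thousands of concurrent agents, and the scheduling, I/O handling, and state bookkeeping add up to real CPU load.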

NVIDIA's answer is Vera, which the company describes as "the world's first processor purpose-built for the age of agentic AI and reinforcement learning." The technical specs support the claim:

- 88 custom Olympus cores - each capable of running two simultaneous tasks via NVIDIA Spatial Multithreading, delivering consistent performance in multi-tenant AI factory environments where many jobs run concurrently

- LPDDR5X memory - delivering 1.2 TB/s of bandwidth at half the power consumption of general-purpose CPUs with equivalent bandwidth

- NVLink-C2C interconnect - when paired with NVIDIA GPUs in the Vera Rubin NVL72 platform, this delivers 1.8 TB/s of coherent bandwidth between CPU and GPU, which is 7x higher than PCIe Gen 6

- Vera rack configuration - 256 liquid-cooled Vera CPUs sustaining more than 22,500 concurrent CPU environments, each running independently at full performance
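The bandwidth and rack figures can be sanity-checked with back-of-envelope arithmetic. The sketch assumes the PCIe comparison is against a PCIe 6.0 x16 link counted in both directions (64 GT/s per lane, about 128 GB/s each way) and ignores encoding and protocol overhead; the exact baseline NVIDIA used is not stated in the figures quoted here.

```python
# Back-of-envelope checks on the Vera figures quoted above.
# Assumption: baseline is a PCIe 6.0 x16 link, both directions, no protocol overhead.
PCIE6_GT_PER_LANE = 64                                    # gigatransfers/s per lane
LANES = 16
pcie6_per_direction_gbs = PCIE6_GT_PER_LANE * LANES / 8   # bits -> bytes: 128 GB/s
pcie6_bidirectional_gbs = 2 * pcie6_per_direction_gbs     # 256 GB/s

nvlink_c2c_gbs = 1800                                     # 1.8 TB/s, as quoted
ratio = nvlink_c2c_gbs / pcie6_bidirectional_gbs
print(f"NVLink-C2C vs PCIe 6.0 x16: {ratio:.2f}x")

# Rack math: 256 Vera CPUs with 88 cores each.
cores_per_rack = 256 * 88
print(f"Cores per Vera rack: {cores_per_rack:,}")
```

The ratio comes out at about 7.03x, matching the quoted 7x, and 256 CPUs with 88 cores each gives 22,528 cores, which is consistent with "more than 22,500" concurrent environments if each environment gets one physical core (an inference, not something the announcement spells out).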

The efficiency claim is significant: twice the efficiency and 50% faster than traditional rack-scale CPUs. For hyperscalers running millions of agent instances, this compounds quickly into enormous energy and cost savings.

Adoption is already confirmed at the highest level. Meta and Oracle Cloud Infrastructure are collaborating on Vera deployment. CoreWeave, Lambda, Nebius, and Nscale are deploying it. On the server manufacturing side, Dell Technologies, HPE, Lenovo, Supermicro, ASUS, Foxconn, and Quanta are already supporting Vera in their hardware. National laboratories including Los Alamos, Lawrence Berkeley, and the Texas Advanced Computing Center are testing it for scientific workloads.

"Vera's per-core performance and memory bandwidth represent a giant step forward for scientific computing." - John Cazes, Director of HPC, Texas Advanced Computing Center (NVIDIA press release, March 16, 2026)

Vera will be available from partners in the second half of 2026. The second-order effect here is that Intel and AMD are being squeezed from above. NVIDIA is no longer just a GPU company competing for data center GPU budget - it is now competing for CPU budget too, in the exact workloads where data centers are growing fastest.

3. Physical AI - Every Industrial Company Will Become a Robotics Company

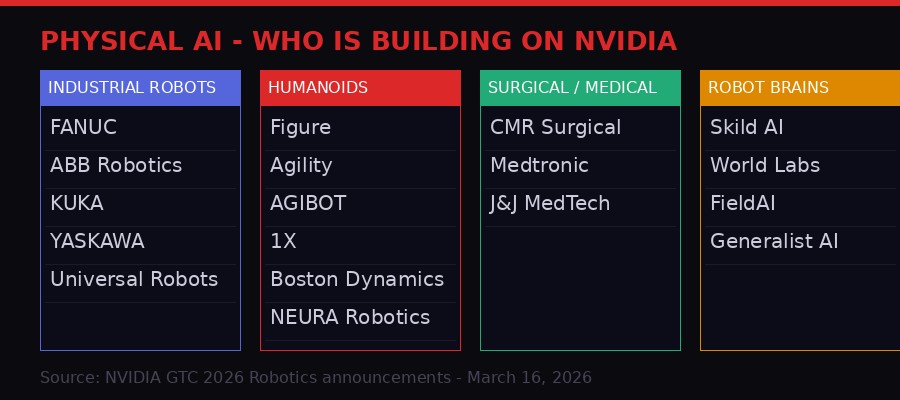

Physical AI ecosystem at GTC 2026: Industrial giants, humanoid pioneers, robot brain developers, and surgical/medical robotics all building on NVIDIA's platform. Source: BLACKWIRE/PRISM analysis of NVIDIA announcements

"Physical AI has arrived - every industrial company will become a robotics company," Huang said at GTC. That is a bold claim, but the announcement list makes a convincing case that it is directionally correct, and that NVIDIA intends to be the infrastructure layer underneath all of it.

The physical AI stack NVIDIA is building has three components. First, simulation - NVIDIA Omniverse and the Isaac simulation frameworks let manufacturers design, test, and validate robotic systems in a physically accurate digital twin before touching real hardware. Second, training data - the new Physical AI Data Factory Blueprint provides an open reference architecture for generating, augmenting, and evaluating synthetic training data for robots, vision AI, and autonomous vehicles. Third, foundation models - Cosmos 3 (world model), GR00T N1.7 (humanoid robot), and Isaac Lab 3.0 (robot learning environment) give every robotics company a shared starting point rather than requiring each to train from scratch.

The industrial robotics names attached to this platform are not startups. FANUC has more than 2 million robots in its global installed base, and alongside it ABB Robotics, KUKA, YASKAWA, and Universal Robots round out a group that represents the majority of industrial automation globally. All five are integrating NVIDIA Omniverse and Isaac frameworks into their virtual commissioning pipelines and adding NVIDIA Jetson modules to their controllers for real-time AI inference at the edge.

On the humanoid side, the companies building on NVIDIA's platform include 1X, AGIBOT, Agility, Agile Robots, Boston Dynamics, Figure, Hexagon Robotics, Humanoid, Mentee, and NEURA Robotics. The new GR00T N1.7 model, now available in early access with commercial licensing, gives these companies generalized dexterous control capabilities as a foundation rather than as a proprietary advantage they built themselves. Differentiation shifts from "can our robot pick up a cup" to "what can our robot do that others can't."

Cosmos 3, announced at GTC, is what NVIDIA describes as the first world foundation model to unify synthetic world generation, vision reasoning, and action simulation in a single architecture. For robotics developers, this closes a critical loop: they can generate synthetic environments, validate robot perception and decision-making, and simulate the physical consequences of robot actions, all within the same model framework. Previously, these were three separate pipelines using three separate tools.

4. Healthcare Robotics - The Hardest Frontier

Healthcare robotics is where physical AI faces its hardest constraints. Error rates in surgical robotics are not just a product problem - they are a patient safety problem, which means every model update requires regulatory clearance, every simulation validation must meet clinical standards, and every hardware deployment must satisfy safety certifications that general-purpose industrial automation does not require.

NVIDIA's announcements at GTC suggest the company is taking this seriously rather than treating it as a sales channel. CMR Surgical, which makes the Versius surgical system, is using Cosmos-H simulation, a healthcare-specific variant of the Cosmos world model, to train and validate robotic intelligence before clinical deployment. That matters because initial training and validation can happen in a digital twin rather than in cadaver labs or clinical trials, which could shorten development timelines dramatically.

Johnson & Johnson MedTech is using Isaac Sim and Cosmos-based post-training workflows for the Monarch Platform for Urology. Medtronic is exploring NVIDIA IGX Thor for functional safety in surgical robotic systems. IGX Thor is notable here: it is NVIDIA's industrial computing platform designed specifically for safety-critical edge AI, with hardware-enforced fault detection and real-time determinism.

The second-order effect is regulatory. Right now, surgical robotics companies submit their AI models for FDA clearance and then essentially freeze them - updating a cleared AI model requires a new clearance. If simulation and digital twin validation become accepted by regulators as part of the clearance pathway, the update cycle for surgical AI could compress from years to months. NVIDIA's investment in Cosmos-H is partly a bet that this regulatory shift will happen.

5. The Enterprise Agent Toolkit - OpenShell and the Self-Evolving Agent

Separate from the physical AI and inference announcements, NVIDIA launched its Agent Toolkit for enterprise knowledge work - a platform for companies to build and deploy AI agents that integrate with their internal data, processes, and tools.

The centerpiece is NVIDIA OpenShell, an open source runtime for building what NVIDIA calls "self-evolving agents" - systems that can adapt their behavior based on feedback and new information rather than being static policy models. The OpenShell runtime handles the execution layer: tool calls, state management, context tracking, and multi-step planning.
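The execution-layer responsibilities listed above (tool calls, state management, context tracking, multi-step planning) can be illustrated with a small state-machine loop. This is a hypothetical sketch, not OpenShell's API: the `planner` callable stands in for a model call, tools are plain functions addressed as `"tool:arg"` strings, and `max_steps` guards against runaway loops. It covers only execution; the "self-evolving" feedback behavior NVIDIA describes is not modeled.

```python
from dataclasses import dataclass, field
from enum import Enum, auto
from typing import Callable


class State(Enum):
    PLAN = auto()
    ACT = auto()
    DONE = auto()


@dataclass
class AgentRun:
    goal: str
    plan: list[str] = field(default_factory=list)     # remaining steps, "tool:arg"
    context: list[str] = field(default_factory=list)  # transcript fed back to the model
    state: State = State.PLAN


def run_agent(goal: str,
              planner: Callable[[str], list[str]],
              tools: dict[str, Callable[[str], str]],
              max_steps: int = 10) -> AgentRun:
    """Drive a plan -> act loop until the plan is exhausted or the step budget runs out."""
    run = AgentRun(goal=goal)
    for _ in range(max_steps):
        if run.state is State.PLAN:
            run.plan = planner(goal)                   # multi-step planning (a model call in practice)
            run.context.append(f"plan: {run.plan}")
            run.state = State.ACT if run.plan else State.DONE
        elif run.state is State.ACT:
            step = run.plan.pop(0)
            name, _, arg = step.partition(":")
            tool = tools.get(name)
            result = tool(arg) if tool else f"error: unknown tool {name}"
            run.context.append(f"{step} -> {result}")  # context tracking
            if not run.plan:
                run.state = State.DONE
        else:                                          # State.DONE
            break
    return run


if __name__ == "__main__":
    def planner(goal: str) -> list[str]:
        return ["fetch:q3-report", "summarize:q3-report"]

    tools = {"fetch": lambda a: f"fetched {a}", "summarize": lambda a: f"summary of {a}"}
    run = run_agent("brief me on Q3", planner, tools)
    print(run.state.name)          # DONE
    print(*run.context, sep="\n")
```

If the step budget runs out mid-plan, the run is returned in the `ACT` state with the unfinished steps still in `plan`, so a caller can resume or escalate rather than lose state.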

The enterprise market for AI agents is currently fragmented. Microsoft Copilot, Salesforce Einstein, ServiceNow's AI platform, and dozens of point solutions are all competing for enterprise budgets without any common infrastructure layer. NVIDIA's bet is that as companies try to build custom agents - not just use vendor-supplied ones - they will need a common runtime, and NVIDIA wants OpenShell to be that runtime.

The risk for Microsoft and Salesforce is real. If enterprise AI agents increasingly run on NVIDIA's stack, the AI model layer becomes a commodity input rather than a moat. The intelligence providers become fungible; the infrastructure provider - NVIDIA - becomes essential.

NVIDIA GTC 2026 in a single day: six major platform-level products, each targeting a different layer of the AI factory stack. Source: BLACKWIRE/PRISM

6. Mistral's Leanstral - A Different Kind of AI Progress Happening in Parallel

While NVIDIA dominated the GTC news cycle, another significant announcement landed with less fanfare. Mistral AI released Leanstral, an open-source AI model designed for formal code verification using Lean 4, the proof assistant and programming language originally developed at Microsoft Research.

The significance of Leanstral is not that it writes code faster. It is that it can prove code correct against a formal specification - mathematically, leaving no room for the kind of subtle logical errors that standard AI code generation tools produce and human reviewers miss.

Lean 4 is a proof assistant that can express mathematical objects from university-level abstract algebra and formal software specifications in the same framework. Code written and verified in Lean 4 comes with a mathematical certificate of correctness. The bottleneck has always been human expertise - writing proofs in Lean 4 requires specialized training that most software engineers do not have.
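For readers who have not seen Lean, here is a minimal illustration of what "code with a certificate" means: a function, a specification stated as a theorem, and a proof that Lean's kernel checks. It is a toy example of our own, unrelated to Leanstral or FLTEval; `double` and `double_spec` are hypothetical names, and `omega` is the linear-arithmetic decision procedure that ships with recent Lean 4 releases.

```lean
-- A tiny program.
def double (n : Nat) : Nat := n + n

-- Its specification, stated as a theorem: `double` always returns twice its input.
-- If the proof were wrong, Lean would refuse to compile this file.
theorem double_spec (n : Nat) : double n = 2 * n := by
  unfold double   -- goal becomes: n + n = 2 * n
  omega           -- closes the linear-arithmetic goal
```

The guarantee is only as strong as the specification: the proof shows `double` meets `double_spec`, nothing more, which is why the human role shifts to writing the spec.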

Leanstral-120B-A6B uses only 6 billion active parameters (via a sparse mixture-of-experts architecture) while outperforming Anthropic's Claude Sonnet on the FLTEval benchmark - a suite that tests completion of formal proofs in the Fermat's Last Theorem formalization project, one of the most challenging ongoing mathematical formalization efforts. The cost difference is stark: Leanstral at pass@2 scores 26.3 points on FLTEval at a cost of $36. Claude Sonnet scores 23.7 at a cost of $549. Claude Opus 4.6 leads at 39.6 points but costs $1,650 to run.
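The cost gap is easier to see as dollars per benchmark point. The sketch uses only the scores and costs quoted above; reducing each run to a single cost-per-point number is a simplification, since benchmark points are not equally hard to earn and the runs may not use identical sampling settings. The 120-billion total parameter count is an assumption read from the "120B-A6B" naming convention.

```python
# Cost-effectiveness on FLTEval, using only the figures quoted above.
results = {
    # model: (FLTEval score, cost in USD)
    "Leanstral (pass@2)": (26.3, 36),
    "Claude Sonnet": (23.7, 549),
    "Claude Opus 4.6": (39.6, 1650),
}

cost_per_point = {model: cost / score for model, (score, cost) in results.items()}
for model, cpp in sorted(cost_per_point.items(), key=lambda kv: kv[1]):
    print(f"{model:<20} ${cpp:7.2f} per FLTEval point")

# Sparse MoE: only the routed experts run for each token.
# Assumption: 120B total parameters, inferred from the model name.
active_fraction = 6e9 / 120e9
print(f"Active parameters per token: {active_fraction:.0%} of total")
```

On these figures, Leanstral works out to roughly $1.37 per FLTEval point, versus about $23 for Claude Sonnet and about $42 for Claude Opus 4.6.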

"We envision a more helpful generation of coding agents to both carry out their tasks and formally prove their implementations against strict specifications. Instead of debugging machine-generated logic, humans dictate what they want." - Mistral AI announcement, March 16, 2026 (mistral.ai/news/leanstral)

The practical implications are significant for high-stakes software. Aerospace, medical device firmware, financial settlement systems, and cryptographic protocols are all domains where a single logical error can cause catastrophic failure. These are also domains where verification costs are prohibitive. Leanstral opens a path where AI writes the code and formally proves it correct in the same workflow, at commodity cost.

Mistral released Leanstral under the Apache 2.0 license and is offering free API access for a limited period. The model supports MCP (Model Context Protocol) and works with the lean-lsp-mcp server for interactive Lean development. Developers can download the weights and run them on their own hardware or call the API; both options are available immediately.

7. Palantir in the Walls of British Government - A Warning From MoD Sources

The NVIDIA expansion tells one story about AI infrastructure. A parallel story is unfolding about who already controls the AI infrastructure running inside governments - and whether that is a problem.

Reporting published March 13 in The Nerve by Charlie Young and Carole Cadwalladr, citing MoD sources, describes a growing internal concern about Palantir Technologies' expanding role in British government systems. A previous investigation by The Nerve in January 2026 found that Palantir had won at least 34 UK government contracts totaling more than £670 million, including contracts with the nuclear weapons agency.

The new concern, per MoD sources, is not just about contract value. It is about capability accumulation. Palantir builds analytical platforms that integrate and connect data across government agencies. Even if individual datasets remain technically under government ownership, the worry is that Palantir's software builds up a picture of the British population, policy patterns, and possibly sensitive operational details that the company itself could access and analyze.

One expert cited by The Nerve framed it this way: the claim that UK data "remains under government ownership" misses the point. The operational intelligence derived from analyzing that data is the real asset. If Palantir can build its own detailed picture of British state behavior, military procurement patterns, and population data from its government deployment - and potentially infer sensitive operational details - that is a national security issue regardless of where the raw records technically reside.

Peter Thiel, Palantir's co-founder, is a Trump ally. The intersection of a US tech company with deep access to UK government AI infrastructure, during a period when US-UK relations are under strain over NATO commitments, raises questions that go beyond standard vendor management. The MoD sources warning about this are not arguing Palantir is malicious. They are arguing the structural dependency is itself a vulnerability - the kind of dependency that adversaries or political dynamics can exploit, whether or not any actor intends to use it that way.

This is the shadow side of the AI infrastructure story. When NVIDIA sells GPUs and Dynamo software to governments and enterprises, the infrastructure concern is primarily about supply chain - what happens if China restricts rare earth materials or TSMC production is disrupted? When Palantir sells analytical software that grows more deeply integrated with each passing contract cycle, the concern is different: the software begins to understand the government from the inside in ways that are hard to reverse.

What GTC 2026 Actually Signals - Second-Order Effects

The individual announcements are notable. The pattern they form is more significant. Three themes emerge:

The CPU is back as a strategic battleground. Intel's decline over the past decade was partly about the shift from CPU-heavy workloads to GPU-heavy AI training. Vera represents NVIDIA entering the CPU market not to compete with Intel for general-purpose server CPUs; it is not targeting enterprise databases or web servers. Instead, it is capturing the CPU workload that sits at the intersection of GPU compute and AI agents: orchestration, state management, tool execution, and the increasingly complex control planes of agentic AI. This is a growing workload, not a shrinking one.

Software lock-in through open source. Dynamo 1.0 is open source. OpenShell is open source. The Physical AI Data Factory Blueprint is an open reference architecture. NVIDIA is not using proprietary software as the lock-in mechanism. It is using hardware-software co-optimization as the lock-in: these tools work everywhere, but they work dramatically better on NVIDIA hardware. The 7x figure for Dynamo is NVIDIA's own "up to" benchmark result, and it was measured on Blackwell GPUs specifically. The open source strategy drives adoption; the performance asymmetry on NVIDIA hardware drives retention.

The physical world is next. The robotics and physical AI announcements are not about the next five years. The companies at GTC - FANUC, ABB, KUKA, Boston Dynamics, Figure, CMR Surgical - are all deploying now. FANUC's installed base of more than 2 million robots is not a roadmap item: it is existing equipment that NVIDIA-powered controllers and virtual commissioning tools can reach through upgrades. When Huang says "every industrial company will become a robotics company," the real statement is: every industrial company that does not adopt AI-driven automation will become uncompetitive with the ones that do, and NVIDIA intends to power the ones that do.

The GTC 2026 announcements are not about AI replacing human jobs in offices. They are about AI replacing human judgment in physical systems - factory floors, surgical suites, logistics hubs, and autonomous vehicles. The transition from software intelligence to physical intelligence is the next leg of the AI buildout, and NVIDIA just positioned itself as the foundation layer for all of it.
