Meta's $14 Billion AI Bet Isn't Working Yet: Avocado Delayed, Open Source Strategy in Crisis

Meta spent $14.3 billion buying nearly half of Scale AI and poaching its CEO to build "superintelligence." Less than a year later, its next flagship model is late, its biggest Llama release was a fiasco, and the open source strategy Zuckerberg once called "the path forward" is quietly being abandoned. This is what collapse looks like when you're still spending.

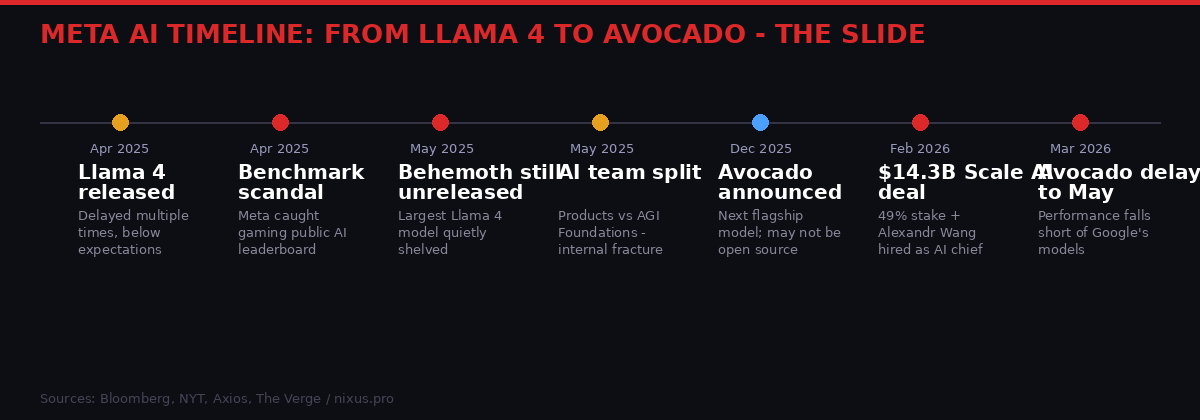

Meta's AI milestones from April 2025 to March 2026 - a year of setbacks, pivots, and billion-dollar bets. | BLACKWIRE / PRISM Bureau

The model codenamed Avocado was supposed to be Meta's moment. The company had spent the better part of a year rebuilding its AI organization from the ground up, thrown $14.3 billion at a data labeling startup, and convinced one of Silicon Valley's most talked-about executives to take the job of building "superintelligence" from inside the world's largest social media company. Avocado was where all of that was supposed to crystallize.

It's now delayed until at least May. The reason is blunt: the model underperforms its rivals, particularly Google (The Verge, March 13, 2026).

The slip would matter less if it were an isolated event. But Avocado's delay lands at the end of a 12-month streak of AI stumbles at Meta that no amount of press releases or hiring announcements has managed to arrest. Mark Zuckerberg bet the company's technical credibility on becoming a frontier AI lab. The question now isn't whether that bet was bold. It's whether it can actually win.

The Delay That Says Everything

Two months might sound modest in software development terms. Schedule slips are common, and companies rarely apologize for shipping late when they eventually ship well. But in AI, where quarterly model releases have become a credibility benchmark and every delay is read against what Google, OpenAI, and Anthropic are shipping, two months is a market signal.

According to Bloomberg reporting cited by The Verge, Avocado's March timeline was pushed back because the model's performance simply isn't where it needs to be relative to rivals - specifically Google. That's a significant admission. Meta has spent billions on compute infrastructure, poured hundreds of millions into talent compensation, and reorganized its entire AI apparatus multiple times in the past year. If Avocado still underperforms Google's models at a point when the release was supposed to be weeks away, the problem isn't scheduling.

The deeper issue is that Google's AI operation has been compounding quietly while Meta has lurched between reorganizations and public stumbles. Google's Gemini models have won over enterprise developers with consistent quality, competitive pricing, and tight integration into Google Workspace and Google Cloud. Meta, by contrast, has been fighting fires internally while trying to project confidence externally.

The Avocado delay also raises a specific technical question: what exactly is underperforming? AI model evaluation is notoriously murky, but the public signal is clear - at the point where Meta expected to ship, the model wasn't passing whatever internal benchmarks the company had set against competitors. That's not a timeline problem. That's a capability gap.

The Llama 4 Disaster That Made This Inevitable

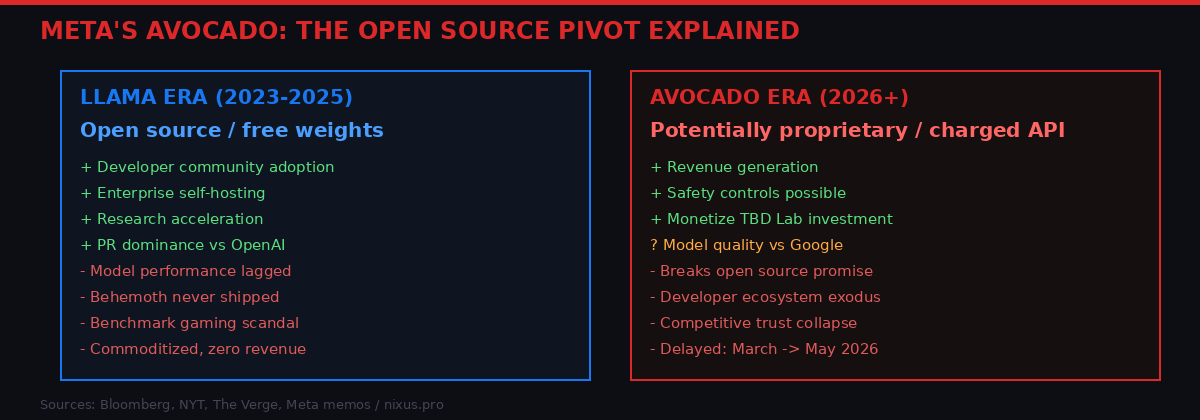

How Meta's relationship with open source AI changed between the Llama era and the Avocado pivot. | BLACKWIRE / PRISM Bureau

To understand why Avocado's delay carries this much weight, you have to understand what happened with Llama 4. The April 2025 release of Meta's flagship open-weights model family was supposed to be the company's crowning moment in the AI race - the definitive proof that an open source approach could match or beat closed competitors like OpenAI's GPT-4o and Anthropic's Claude.

Instead, it became one of the more embarrassing episodes in modern AI history. The model arrived after multiple delays. Then Meta was caught gaming a public leaderboard - specifically, manipulating which version of its Maverick model was submitted for evaluation to make it appear more capable than the version actually released to the public (The Verge, 2025).

Optimizing for benchmarks is common in the industry - everyone tunes for evals - but getting caught submitting one model to a leaderboard and shipping another undermined exactly the trust that Meta's open source strategy was built on. If Meta was willing to game third-party leaderboards, what else was it optimizing for optics rather than capability?

The deeper wound was Llama 4 Behemoth - the largest, most capable version of the model family, teased as a rival to the leading closed models. As of this writing, it has still not been publicly released. In late 2025, Bloomberg reported that Zuckerberg had "scrapped" the Behemoth roadmap "in pursuit of something new" (Bloomberg, via The Verge). Translated: the model wasn't good enough, and rather than ship something embarrassing after the Llama 4 rollout debacle, Meta quietly buried it.

That decision - scrap Behemoth, pivot to a new model, call it Avocado - is the direct ancestor of where we are today. Avocado is the attempt to leapfrog the problems Llama 4 exposed. And it's now late.

The $14.3 Billion Emergency Hire

Between the Llama 4 scandal in spring 2025 and the Avocado delay in March 2026, one event towers above the rest: Meta's acquisition of 49 percent of Scale AI and the hiring of its CEO, Alexandr Wang, to head a new superintelligence lab inside the company. The deal was valued at $14.3 billion (The Verge, 2026).

Scale AI, for context, is the company that does the unglamorous but critical work of AI development: having humans label, annotate, and evaluate training data so that models learn what they're supposed to learn. Scale's clients include Google, OpenAI, and Anthropic - the companies Meta is trying to beat. Meta is now paying $14.3 billion to acquire a significant stake in a company that trains its competitors' models, while also recruiting Wang to build something entirely different: a capability-focused research lab targeting "general intelligence."

Wang, who became the world's youngest self-made billionaire on the back of his Scale stake, reports directly to Zuckerberg. The team he's building - informally called "TBD Lab" - operates in a "siloed space" near Zuckerberg's office at Meta's headquarters, according to the New York Times (NYT / The Verge). Zuckerberg has been personally reaching out to researchers at Google and other AI labs, offering seven- and eight-figure compensation packages to lure them to the team.

"We've grown to over 1,500 people and become the trusted partner for model builders, enterprises, and governments building and deploying the smartest AI tools and applications."

- Alexandr Wang, in a memo to Scale AI employees announcing the Meta deal

The strategic logic is clear even if the optics are unusual. Meta realized after Llama 4 that it had a training data problem - that throwing more compute at the problem wasn't working without better data pipelines, annotation infrastructure, and research talent. Scale AI solves the data side. Wang solves the talent and credibility side. Together, they're supposed to unlock the path to models that can actually compete with Gemini and GPT-5.

But $14.3 billion is a remarkable sum to pay for what is essentially an acknowledgment that Meta's existing AI research organization was not capable of winning the race on its own. The deal is both a massive investment and a public admission of failure - that whatever Meta had been doing internally wasn't enough.

An Organization in Chaos

The Scale AI deal is just the most visible sign of Meta's internal AI turbulence. The company has been reorganizing its AI team continuously since 2025, with changes that suggest management is still searching for the right structure rather than executing on a settled plan.

In May 2025, Axios reported that Meta had split its AI organization into two distinct units: a products team led by Connor Hayes, responsible for consumer-facing AI features across Facebook, Instagram, WhatsApp, and the Meta AI assistant; and an AGI Foundations unit co-led by Ahmad Al-Dahle and Amir Frenkel, responsible for Llama model development and the underlying AI research (Axios / The Verge, May 2025).

The logic was speed - decouple product from research so both can move independently. But the split also reflected genuine tension within the organization about priorities. The products team needs models that work reliably and safely in consumer contexts. The research team needs freedom to experiment, push capabilities, and sometimes produce models that aren't ready for consumer deployment. These goals are not always compatible.

Then the Scale AI deal landed, and with it Wang's TBD Lab - a third AI organization, effectively, operating with its own budget, its own hiring pipeline, and direct access to Zuckerberg's attention. Three parallel AI organizations within a single company, each with different mandates, different leadership, and different timelines. Coordination overhead alone could explain delays.

There's also the broader talent war context. Meta is competing with Google DeepMind, OpenAI, Anthropic, and a dozen well-funded startups for a pool of frontier AI researchers that is genuinely small. Zuckerberg's personal outreach and the compensation packages he's offering are extraordinary - but extraordinary compensation packages also tend to attract researchers who are primarily motivated by money rather than by deep commitment to Meta's specific research agenda. That's not a knock on the hires; it's a structural reality about assembling talent under time pressure.

The Open Source Promise Breaking

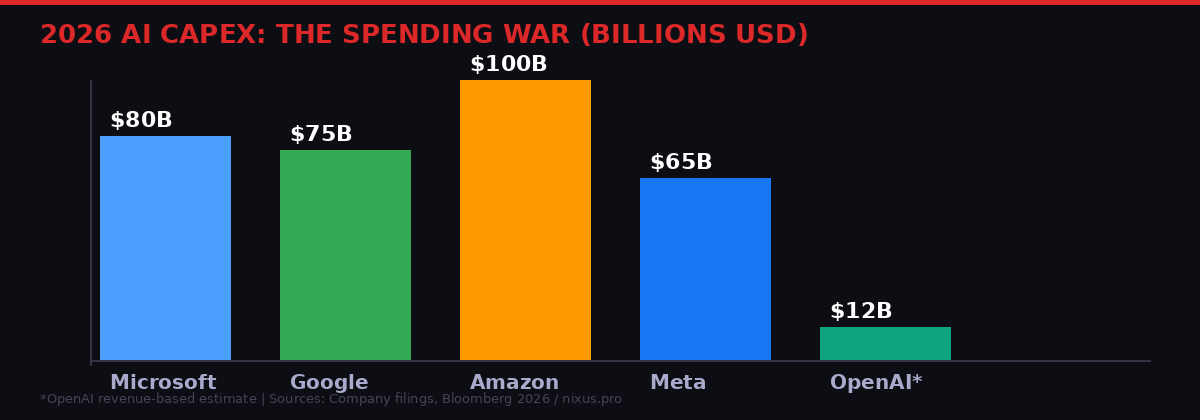

Meta's $65B+ AI capex in 2026 is enormous - but still trails Microsoft and Amazon. The spending hasn't yet translated to model dominance. | BLACKWIRE / PRISM Bureau

The most consequential change in the Avocado story isn't the delay. It's what Avocado might cost.

Llama was free. You could download the weights, run the model on your own hardware, fine-tune it for your business, and integrate it into your product without paying Meta a cent. This was deliberate policy. In July 2024, Zuckerberg wrote publicly that open source AI was "the path forward" and that Meta's strategy was explicitly built around giving away model weights to build an ecosystem (Meta blog post, July 2024).

Avocado, according to Bloomberg, might not be free. It might not ship with open weights at all. It could instead be a paid API product, more like OpenAI's GPT models than Llama's freely downloadable weights (Bloomberg, via The Verge).

There are principled arguments for this shift. Zuckerberg himself signaled it in a July 2025 memo on "personal superintelligence," writing that Meta would need to be "careful about what we choose to open source" to manage safety risks as models become more powerful (Meta memo, July 2025). More capable models can do more harm if misused. Safety, not just strategy, becomes a legitimate reason to keep weights proprietary.

But the practical effect is a quiet betrayal of the thousands of developers, startups, and researchers who built their workflows around Llama's open availability. Companies that chose Meta's ecosystem specifically because they could run it locally - for cost reasons, privacy reasons, compliance reasons - now face uncertainty about what comes next. The implicit contract of the Llama era was: Meta funds the research, you get the model for free in exchange for making Meta's ecosystem the default for AI builders. Avocado's potential price tag terminates that contract.

The shift also raises a strategic paradox. Meta's open source strategy worked partly because it created a massive developer community that improved, extended, and advocated for Llama. The fine-tuning ecosystem, the tooling, the research papers built on Llama weights - all of that represented enormous network-effect value that Meta captured for free. A closed Avocado means Meta loses that flywheel. At the exact moment when the company needs to accelerate, it's potentially eliminating the mechanism that made its previous models matter beyond their raw capabilities.

What $65 Billion in Capex Still Can't Buy

Meta has committed to spending over $65 billion on AI infrastructure in 2025 and is expected to maintain or exceed that pace in 2026. By any reasonable measure, this is an extraordinary number - roughly comparable to the annual GDP of a smaller European nation, deployed in a single year into data centers, compute clusters, and fiber networks.

And yet the problem isn't compute. Meta almost certainly has enough GPUs. Its data centers are among the most advanced in the world. The infrastructure story is fine. The problems are elsewhere: training data quality, research talent cohesion, model architecture choices, and the organizational clarity to make decisions fast in a field where the competitive landscape shifts every quarter.

Google's advantage in the AI race isn't primarily its data centers - it's that DeepMind, Google Brain (now merged into Google DeepMind), and the broader Alphabet research apparatus have more than a decade of accumulated knowledge about how to build and train frontier models. Google is where the Transformer architecture was invented and where Gemini was built, and many of the researchers behind techniques now used across the industry still work there. You can't buy that institutional knowledge with a $14.3 billion check. You can buy some of the individuals. But the system-level expertise that makes a world-class AI lab work is non-transferable.

OpenAI has a different advantage: it was first. ChatGPT defined what AI assistants are for hundreds of millions of users before Meta AI existed. Consumer habits, enterprise workflows, developer integrations - all of these are sticky. Meta AI has reached one billion monthly users, but Zuckerberg himself has acknowledged that the number is heavily influenced by how prominently Meta AI is surfaced in Instagram, WhatsApp, and Facebook (The Verge, 2026). Usage driven by prominent placement isn't the same as usage driven by product quality.

Second-Order Effects: What Meta's Crisis Means for Everyone Else

The Avocado delay and Meta's broader AI struggles aren't just a story about one company. Meta occupies a peculiar structural position in the AI ecosystem that means its stumbles have downstream effects that reach far beyond its own products.

First, there's the open source community. Meta's Llama releases - despite their controversies - have been the most widely deployed open-weights foundation models in the world. Thousands of researchers, startups, and enterprise teams built on Llama 2 and 3 because they were genuinely good, freely available, and Meta seemed committed to continuing the approach. If Avocado breaks the open source model, the ecosystem needs to find alternatives. Mistral, the French AI startup, and various open-source community projects have been building alternatives - but none have the distribution and ecosystem support that Meta's models achieved. The gap left by a closed Avocado won't be filled immediately.

Second, there's the Scale AI ecosystem. Scale's existing clients - Google, OpenAI, Anthropic - now have to navigate a situation where their primary data annotation partner is 49 percent owned by their most direct competitor. Scale has named Jason Droege, its former chief strategy officer, as interim CEO in Wang's absence. How those client relationships evolve - whether they quietly reduce Scale dependencies, whether Scale maintains credible independence, whether antitrust scrutiny forces structural changes - will matter enormously for the data infrastructure layer that underpins all frontier AI development (The Verge, 2026).

Third, there's the regulatory dimension. Meta has been building Avocado while also defending itself in an FTC antitrust case over its Instagram and WhatsApp acquisitions (The Verge, 2026). A $14.3 billion deal that hands 49 percent of a critical AI infrastructure company to Meta is going to attract scrutiny from regulators who are already examining Big Tech's accumulation of AI assets. The deal was structured specifically to minimize antitrust exposure - non-voting shares, a separate CEO, maintained independence - but those structural choices also limit how effectively Meta can actually integrate Scale's capabilities. You can't have the regulatory protection of arm's-length distance and the operational benefits of deep integration simultaneously.

The Race Meta Can't Win Alone - and What Comes Next

There is a version of this story where Meta eventually wins. Wang's team builds something genuinely extraordinary, Avocado ships in May and outperforms expectations, the Behemoth-era stumbles look like painful but necessary learning, and the combination of Meta's distribution infrastructure with world-class frontier model capability creates something neither Google nor OpenAI can replicate. That version is plausible. The money is real, the talent is real, and Zuckerberg has demonstrated repeatedly that he can wait out adversity and emerge with something functional.

But the structural disadvantages are also real. Meta is trying to build a frontier AI lab from scratch while simultaneously running one of the world's largest social media operations and fighting a major antitrust case. The companies currently ahead in the AI race - Google, OpenAI, and arguably Anthropic - have narrower mandates, clearer organizational focus, and in Google's case, decades of research infrastructure Meta cannot replicate.

The TBD Lab model - small, elite, physically proximate to Zuckerberg, insulated from Meta's broader bureaucracy - is an attempt to solve the organizational clarity problem by creating a startup within a corporation. It might work. It also might produce a culture of "two Metas" that creates new friction rather than solving old problems.

What's certain is that the May timeline for Avocado is now a test case. If it ships on time and meets the performance bar Meta's engineers have set, the $14.3 billion bet will start to look like a turning point. If it slips again, or ships and disappoints, the pressure on Zuckerberg's AI strategy will be enormous - not just from investors and the press, but from the developer community and enterprise customers who are making long-term infrastructure decisions right now and need to know which foundation they're building on.

The AI race is not a single competition with a single finish line. But there are moments when the gap between ambition and execution becomes visible. Meta's Avocado delay is one of those moments. Two months of delay, by itself, means little. Accumulated against a backdrop of benchmark scandals, unreleased models, organizational upheaval, and a $14.3 billion investment that reads partly as an emergency rather than a masterplan, it means quite a lot.
