Meta announced full AI deployment for content moderation across Facebook and Instagram in March 2026, beginning the phase-out of third-party contractor review teams. Graphic: BLACKWIRE

The announcement landed quietly inside a corporate blog post on March 19, 2026. Meta, the company behind Facebook and Instagram - platforms that together host content for more than three billion active users - confirmed that artificial intelligence systems would gradually replace the contractor workforce responsible for content enforcement across its platforms.

It is, depending on your vantage point, either the most impressive AI deployment in corporate history or the largest potential abdication of editorial accountability ever attempted. Both things can be true simultaneously.

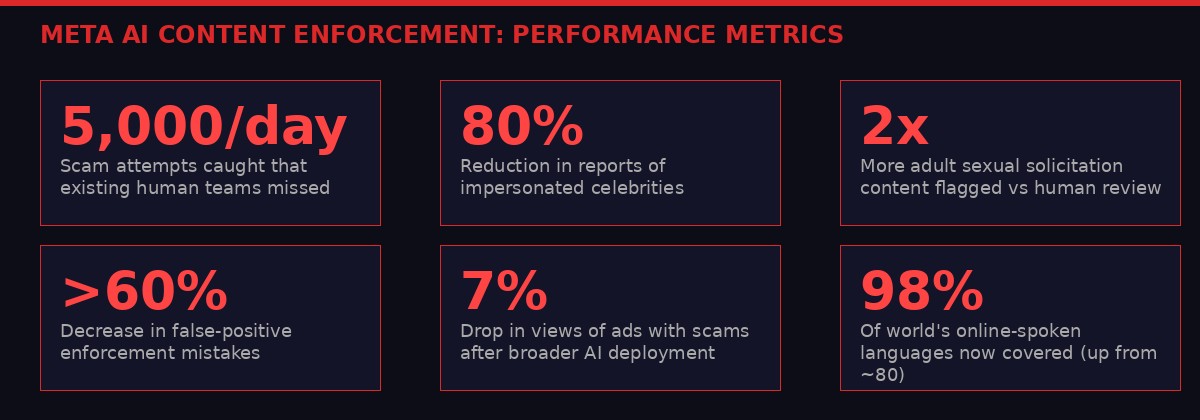

The numbers Meta published are genuinely striking. Its advanced AI systems now catch 5,000 scam attempts per day that existing human review teams had missed entirely. Reports of impersonated celebrities dropped by more than 80 percent. The AI catches twice as much adult sexual solicitation content as human reviewers, while making 60 percent fewer false-positive mistakes. And where human teams could cover roughly 80 languages, the new AI operates at meaningful coverage across languages spoken by 98 percent of the world's online population.

These are not minor improvements. They represent a structural shift in how one of the most influential information environments in human history decides what billions of people can and cannot see, say, or share. And yet the most consequential part of Meta's announcement was buried in the fourth paragraph: "over the next few years, we'll reduce our reliance on third-party vendors for content enforcement."

That is corporate language for: thousands of human reviewers are losing their jobs.

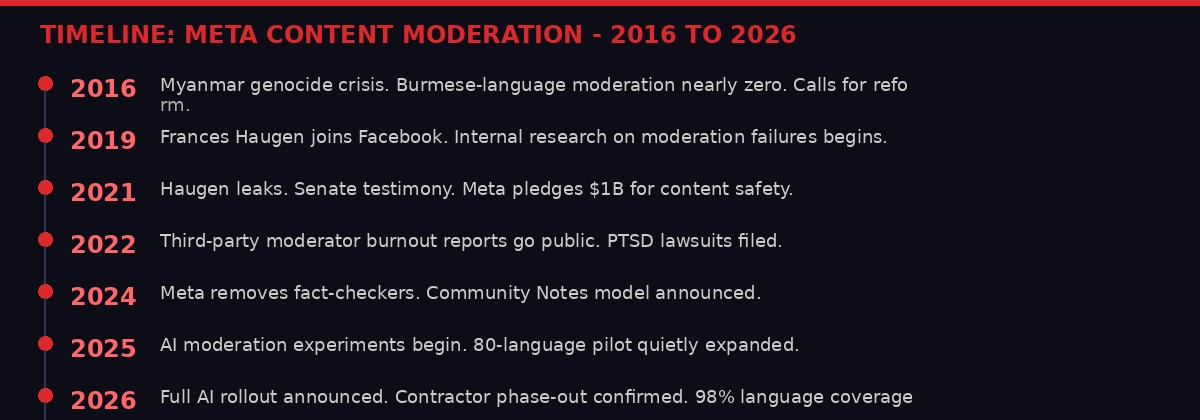

A decade of pressure, failures, and reform culminating in the 2026 full AI rollout. Graphic: BLACKWIRE

A Decade of Failure That Made This Inevitable

To understand why Meta reached this point, you need to go back to 2017. Researchers at Columbia University were documenting how Facebook's Burmese-language moderation had essentially collapsed. Hate speech targeting the Rohingya Muslim minority was spreading unchecked through the platform. The UN would later conclude that Facebook played a "determining role" in inciting the genocide that followed.

The root cause was not malice - it was capacity. Facebook had a handful of Burmese-language moderators reviewing content for tens of millions of users. The math never worked. The platform had scaled globally far faster than its safety infrastructure could follow. Myanmar was the most catastrophic example, but not the only one. The same pattern played out in Ethiopia, India, and the Philippines - anywhere that Facebook grew faster than its ability to hire fluent, culturally competent reviewers.

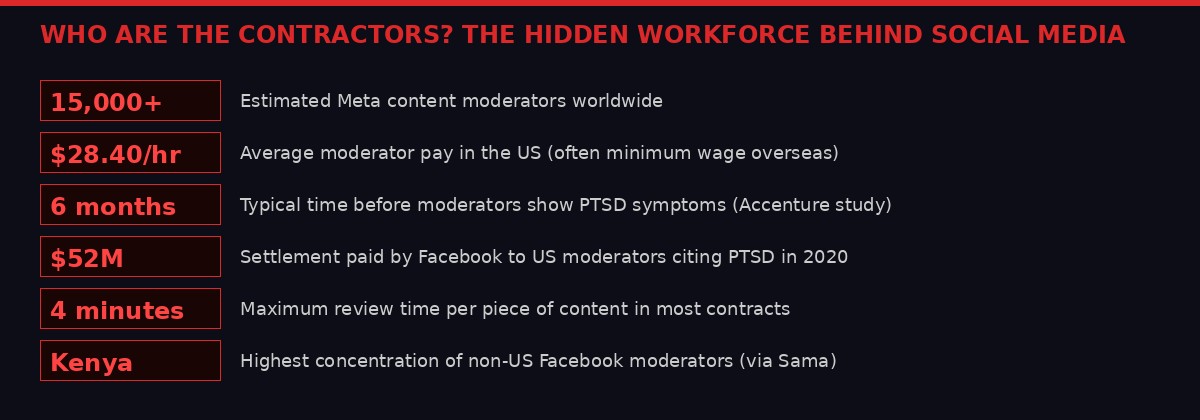

Meta's response was to hire more contractors, funnel work through outsourcing firms like Accenture, Cognizant, and Sama, and build out moderation hubs in places like Austin, Phoenix, Dublin, and Nairobi. By the early 2020s, the company had assembled a global workforce of roughly 15,000 content reviewers, the majority of them employed not by Meta directly but by third-party firms working on thin-margin contracts.

The human cost of this arrangement became increasingly visible. In 2020, Facebook agreed to pay $52 million to US content moderators who had developed post-traumatic stress disorder from repeated exposure to graphic content - beheadings, child abuse material, terrorism footage. The average reviewer was expected to process hundreds of pieces of flagged content per day, with a maximum of four minutes per item. Trauma counseling was available but stigmatized; turnover was high.

"It was like working on an assembly line, except the product was human suffering." - Former Facebook content moderator, Dublin, speaking to The Verge in 2021

Meanwhile, the quality problems never fully resolved. High-profile failures continued: the Christchurch massacre was livestreamed on Facebook for 17 minutes, and the video came down only after the broadcast had ended. Harassment campaigns against journalists and activists routinely escaped automated detection. COVID misinformation spread through networks faster than reviewers could act. The 2021 Frances Haugen leaks revealed internal research showing Facebook's systems consistently performed worse in non-English languages, a problem the company had known about for years.

By early 2025, Meta had begun overhauling its approach. It ended its third-party fact-checking partnership in the United States, replaced it with a Community Notes model borrowed from X (formerly Twitter), and reduced proactive enforcement to focus on what it called the most severe violations: terrorism, child sexual abuse material, drug trafficking, and fraud. It was, critics argued, a rollback of responsibility dressed as a reform. Supporters said it was an overdue correction against over-enforcement that had silenced legitimate political speech.

Meta's internally reported performance metrics for AI content enforcement, as published in its March 2026 blog post. Graphic: BLACKWIRE

What the AI Actually Does - and How Well

The technical architecture Meta has deployed is more sophisticated than previous generations of content moderation AI. Earlier systems were largely keyword-based or relied on perceptual hashing - comparing images against databases of known harmful content. Effective for identical copies of known material, useless for anything novel.
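The limitation of hash matching described above can be seen in a toy example. The following is a minimal sketch of one common perceptual-hashing scheme (an average hash compared by Hamming distance), not a description of Meta's actual system. The 8x8 pixel grids, the function names, and the `max_distance` threshold are all assumptions made for illustration.

```python
from typing import Iterable, List


def average_hash(pixels: List[List[int]]) -> int:
    """Fingerprint a small grayscale grid: one bit per pixel, set when the
    pixel is brighter than the grid's mean brightness."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of bit positions where two fingerprints differ."""
    return bin(a ^ b).count("1")


def matches_known(fingerprint: int, known: Iterable[int], max_distance: int = 5) -> bool:
    """True if the fingerprint is within max_distance bits of any known hash."""
    return any(hamming(fingerprint, k) <= max_distance for k in known)


# Hypothetical 8x8 images (values 0-255), invented for illustration.
original = [[20] * 4 + [220] * 4 for _ in range(8)]                 # dark left, bright right
brightened = [[min(255, p + 10) for p in row] for row in original]  # near-copy of original
novel = [[20] * 8 if r < 4 else [220] * 8 for r in range(8)]        # dark top, bright bottom

database = [average_hash(original)]
print(matches_known(average_hash(brightened), database))  # near-copy is caught
print(matches_known(average_hash(novel), database))       # new material is missed
```

The near-copy matches because brightening every pixel leaves the above-or-below-mean pattern unchanged. The second image has no counterpart in the database, so nothing is flagged regardless of what it depicts.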

The new systems use multi-modal transformer models - the same class of neural network underlying large language models like GPT and Claude - but trained specifically on Meta's internal enforcement data rather than general internet text. These models process text, images, and video simultaneously, analyzing not just the content itself but contextual signals: the account's posting history, the network of accounts it interacts with, geographic patterns, the timing and frequency of posts, and linguistic markers that indicate cultural or regional framing.

The scam detection improvement is the clearest example of where AI outperforms humans. Scam operations are coordinated across hundreds or thousands of accounts simultaneously, with each individual account appearing to post borderline-but-technically-legal content. No human reviewer looking at a single account can see the broader pattern. The AI can cross-reference thousands of accounts in milliseconds, identifying coordinated inauthentic behavior through patterns no individual reviewer would ever detect.
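To make the cross-account idea concrete, here is a deliberately simple sketch: it groups posts by normalized text and flags any text posted by several distinct accounts within a short time window. This is an assumption-laden toy, not Meta's method; the `min_accounts` and `window_seconds` thresholds, the function names, and the sample posts are hypothetical, and a production system would rely on far richer signals than exact text overlap.

```python
import re
from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple


def normalize(text: str) -> str:
    """Lowercase, drop punctuation, collapse whitespace."""
    text = re.sub(r"[^a-z0-9\s]", "", text.lower())
    return " ".join(text.split())


def coordinated_clusters(
    posts: Iterable[Tuple[str, int, str]],
    min_accounts: int = 3,
    window_seconds: int = 600,
) -> Dict[str, Set[str]]:
    """posts are (account_id, timestamp_seconds, text) tuples.

    Returns normalized texts posted by at least min_accounts distinct
    accounts inside some window of window_seconds, with those accounts.
    """
    by_text = defaultdict(list)
    for account, ts, text in posts:
        by_text[normalize(text)].append((ts, account))

    clusters = {}
    for text, items in by_text.items():
        items.sort()  # order by timestamp
        start, best = 0, set()
        for end in range(len(items)):
            # Shrink the window until it spans at most window_seconds.
            while items[end][0] - items[start][0] > window_seconds:
                start += 1
            accounts = {acct for _, acct in items[start:end + 1]}
            if len(accounts) > len(best):
                best = accounts
        if len(best) >= min_accounts:
            clusters[text] = best
    return clusters


# Hypothetical posts, invented for illustration.
posts = [
    ("a1", 0, "Win a FREE phone!!"),
    ("a2", 30, "win a free phone"),
    ("a3", 90, "Win a free phone."),
    ("a4", 100, "Lovely sunset today"),
    ("a1", 5000, "win a free phone"),
]
flagged = coordinated_clusters(posts)
print(flagged)
```

Each post on its own looks unremarkable, which is the point the paragraph above makes: the signal only appears when the accounts are examined together.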

The six-stage pipeline Meta's AI uses to classify, contextualize, and act on content violations. Graphic: BLACKWIRE

The impersonation drop is similarly explained by scale rather than cleverness. An AI can continuously monitor every new account created on the platform and cross-reference profile images, usernames, and bio text against a database of verified public figures. A human team reviewing flagged reports can only respond reactively. The AI runs proactively at continuous speed.
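As a rough illustration of cross-referencing new usernames against a list of verified figures, the sketch below uses Python's standard-library `difflib.SequenceMatcher` for fuzzy string comparison. The lookalike-character table, the `threshold` value, and the names are hypothetical; Meta has not published how its matching works, and a real system would also compare profile images and bio text.

```python
from difflib import SequenceMatcher
from typing import Iterable, Optional

# Assumed substitutions impersonators might use (illustrative, not exhaustive).
LOOKALIKES = str.maketrans({"0": "o", "1": "l", "3": "e", "5": "s"})


def canonical(name: str) -> str:
    """Lowercase, undo common digit-for-letter swaps, keep only letters and digits."""
    name = name.lower().translate(LOOKALIKES)
    return "".join(ch for ch in name if ch.isalnum())


def likely_impersonation(
    username: str, verified_names: Iterable[str], threshold: float = 0.9
) -> Optional[str]:
    """Return the verified name this username closely resembles, or None."""
    candidate = canonical(username)
    for verified in verified_names:
        similarity = SequenceMatcher(None, candidate, canonical(verified)).ratio()
        if similarity >= threshold:
            return verified
    return None


# Hypothetical verified figure and new account names.
verified = ["Jane Example"]
print(likely_impersonation("Jane.Examp1e", verified))  # resembles the verified name
print(likely_impersonation("john_smith", verified))    # no close match
```

A check like this is cheap enough to run on every new account at creation time, which is what distinguishes it from a human team responding to reports after the fact.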

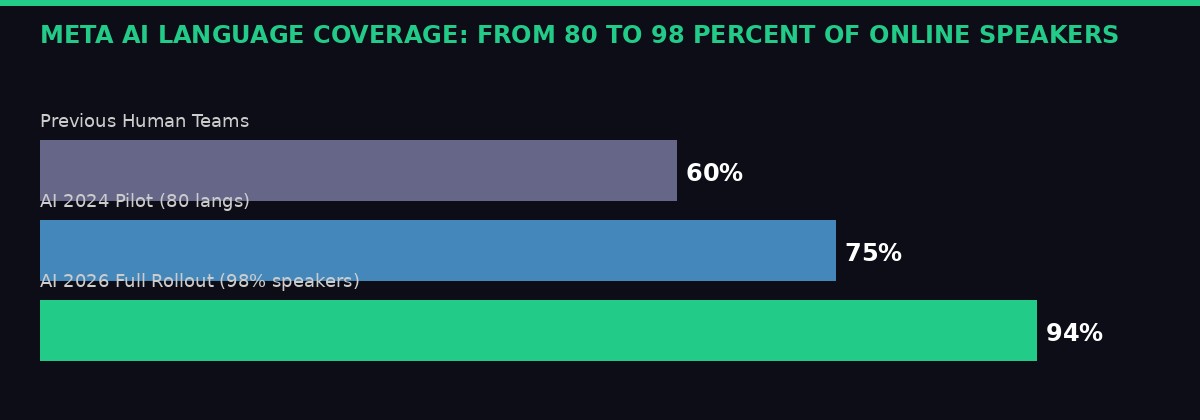

The language coverage expansion is the most geopolitically significant improvement. Meta's previous human workforce was heavily weighted toward English, Spanish, German, French, Portuguese, and a handful of other high-priority languages. The new AI systems cover languages spoken by 98 percent of online users, a figure that represents genuine progress for populations that have historically been underprotected. At the same time, it raises serious questions: AI cultural and linguistic competence is not equivalent to human cultural knowledge. A model trained on Kenyan Swahili data may not accurately parse the political valence of a phrase used by a Tanzanian opposition movement. The 98 percent coverage number describes quantity; it says nothing about quality.

Meta's own framing is careful. The company says AI "increases capacity" and "adapts to cultural nuance" without providing specific accuracy benchmarks by language or region. Transparency reports covering Q3 2025 show improvement on the metrics Meta chooses to disclose. They do not show the false-positive rate by language, the appeal success rate, or the number of accounts wrongly suspended then restored.

Meta's AI now covers languages spoken by 98% of the world's online population, up from roughly 80 languages under human team coverage. Graphic: BLACKWIRE

The Contractor Workforce Being Erased

The number of human moderators involved is large enough that their displacement deserves specific attention. Meta does not publish a total headcount for its content moderation contractor workforce, but industry estimates place the figure at between 12,000 and 15,000 globally, with the largest concentrations in the United States, Ireland, Kenya, the Philippines, and India.

These workers have never been Meta employees. They are employed by firms like Accenture, Cognizant, Sama, and Teleperformance under service contracts that typically include explicit provisions preventing client-facing disclosure. Moderators sign NDAs that cover their work product, their working conditions, and often their mental health status. The people watching the worst content the internet produces, on behalf of one of the most profitable companies in history, have historically been among the least protected workers in the tech ecosystem.

The hidden workforce behind social media's content safety: facts and figures about Meta's global moderation contractors. Graphic: BLACKWIRE

The PTSD crisis in this workforce has been extensively documented. A landmark study commissioned by Accenture (and later cited in litigation) found that moderators begin exhibiting symptoms consistent with PTSD within six months of starting the role. The $52 million settlement Meta paid in 2020 covered 11,000 US-based moderators. Similar settlements and investigations have followed in Kenya, Ireland, and the Philippines, though with less media coverage.

In Kenya, moderators employed by Sama to review Facebook content for East Africa sued their employer in 2023, alleging union-busting and failure to provide adequate psychological support. The case exposed a wider pattern: Meta was aware of the working conditions at its outsourcing partners and had contractual provisions that theoretically required adequate welfare support, but oversight was weak. Multiple moderators testified they were discouraged from using counseling services out of fear it would be noted as a performance issue.

Meta's AI transition, whatever its merits for content quality, definitively ends this particular category of exploitation. The contractor workforce that absorbed years of psychological harm will not be replaced. That is not framed as a labor decision in Meta's announcement; it is framed as an efficiency and safety upgrade. The distinction matters.

"We're reducing our reliance on third-party vendors for content enforcement and focusing on strengthening our internal systems and workforce. While we'll still have people who review content, these systems will be able to take on work that's better-suited to technology." - Meta, official announcement, March 19, 2026

Translation: the remaining human workforce will be smaller, more specialized, and focused on high-stakes edge cases rather than volume review. The model is moving from "thousands of people reviewing everything" to "a small team of experts overseeing an AI that reviews everything." That is a fundamentally different accountability structure - and one that has not been publicly debated, let alone regulated.

The Accountability Vacuum at the Center of This

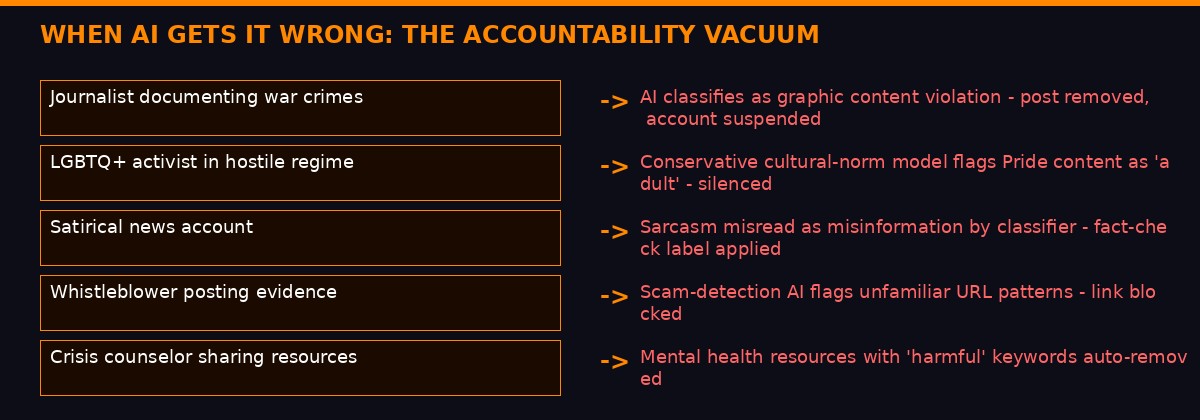

Here is the second-order problem that Meta's announcement does not address: what happens when the AI makes a mistake at scale?

Human moderators make mistakes. They have always made mistakes. The difference is that human error has natural variance - different reviewers make different errors, at different rates, on different types of content. AI systems make systematic errors. A miscalibration in the model's understanding of political satire in Indonesian will not produce one wrongly-removed post. It will produce 10,000 wrongly-removed posts before anyone notices the pattern.
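A small worked example shows why a concentrated error can hide behind a good aggregate number. Every figure below is invented for illustration: the post volumes, the uniform 2 percent human error rate, and the model's rates are assumptions, not data from Meta or anyone else.

```python
from typing import Dict


def wrongful_removals(volume: Dict[str, int], error_rate: Dict[str, float]) -> Dict[str, int]:
    """Expected number of wrongly removed posts per content category."""
    return {cat: round(n * error_rate[cat]) for cat, n in volume.items()}


def overall_rate(removals: Dict[str, int], volume: Dict[str, int]) -> float:
    """Wrongful removals as a share of all posts."""
    return sum(removals.values()) / sum(volume.values())


# Hypothetical volumes and error rates.
volume = {"general": 1_000_000, "indonesian_satire": 10_000}
human_rates = {"general": 0.02, "indonesian_satire": 0.02}   # errors spread evenly
model_rates = {"general": 0.005, "indonesian_satire": 1.0}   # one miscalibrated category

human = wrongful_removals(volume, human_rates)
model = wrongful_removals(volume, model_rates)

print(human, overall_rate(human, volume))
print(model, overall_rate(model, volume))
```

Under these assumed numbers the model's overall error rate is lower than the human one, yet every post in the miscalibrated category is removed. A headline accuracy figure would report an improvement while an entire form of speech disappeared.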

Hypothetical but plausible scenarios where AI content moderation fails the users who most need protection. Graphic: BLACKWIRE

Meta acknowledges this in general terms. The company says human experts will "design, train, oversee, and evaluate" AI systems, and that people will continue to make "the most complex, high-impact decisions." But the structural reality is this: when you reduce reviewer headcount from 15,000 to a fraction of that, you also reduce your capacity to catch systematic errors before they scale to millions of users.

The appeal process is already broken under the current system. The Oversight Board - Meta's quasi-independent review body - handles a tiny fraction of appealed decisions. According to its own published statistics, the board reviewed fewer than 50 cases in all of 2024. Facebook processes billions of content actions per year. Set fewer than 50 reviews against billions of decisions and the share that receives independent review is vanishingly small, well under one in ten million. The AI transition will increase the volume of automated decisions without a proportionate increase in appeal capacity.

Digital rights organizations have been raising this concern for years. Access Now, Electronic Frontier Foundation, and Article 19 have all published analyses of what they call the "accountability gap" in AI-driven moderation - the space between the decisions an AI makes and the oversight mechanisms capable of catching errors. Meta's 2026 announcement significantly enlarges that gap.

The Three Accountability Gaps AI Moderation Creates

- Volume gap: AI makes more decisions per second than any oversight structure can review, even with hundreds of human experts.

- Transparency gap: Neural network decisions are not fully interpretable - the model cannot always explain why it flagged specific content, making appeals harder to argue.

- Systemic error gap: A miscalibrated AI makes the same mistake across millions of cases before correction; human errors are spread across many reviewers and are less likely to repeat in the same way.

The EU's Digital Services Act theoretically addresses some of this. DSA requires large platforms to conduct algorithmic risk assessments, provide meaningful appeals mechanisms, and allow independent researchers access to data for auditing purposes. Meta is subject to DSA as a Very Large Online Platform. Whether DSA oversight mechanisms can actually track AI moderation decisions at Meta's scale remains an open and largely untested question.

The Signal Founder Inside the Machine

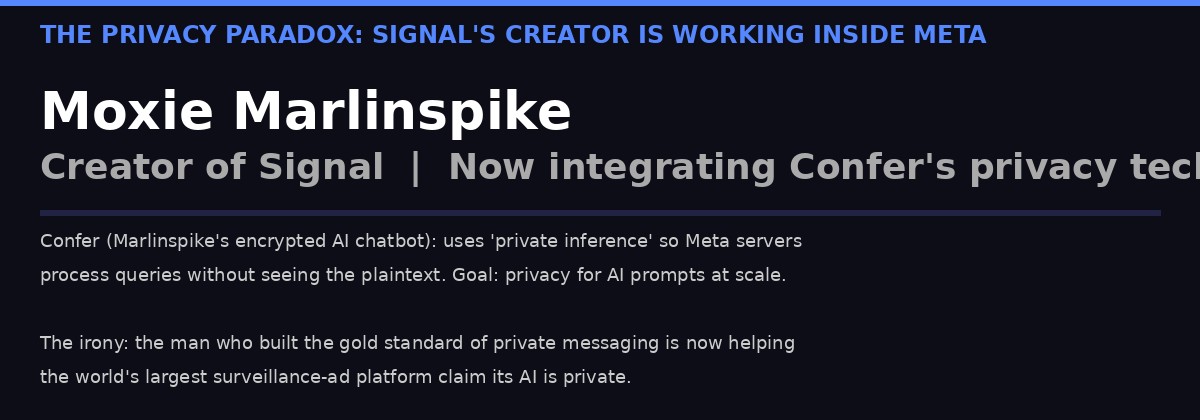

One of the more improbable subplots in this story is the involvement of Moxie Marlinspike - the creator of Signal, the gold standard of private messaging, and arguably the most credible voice in the technology industry on questions of privacy and surveillance resistance.

Signal's creator is now working inside Meta to bring encrypted AI to billions of users - raising questions about the nature of privacy partnerships with surveillance-ad platforms. Graphic: BLACKWIRE

Marlinspike built his career on the principle that people should be able to communicate privately without trusting the companies providing the infrastructure. Signal's entire architecture was designed so that Signal the company cannot read Signal users' messages. That is not a marketing claim - it is a technical guarantee enforced by the cryptography itself.

In March 2026, Marlinspike announced through his startup Confer - an encrypted AI chatbot he built on the premise that AI model providers should not be able to read users' queries - that he would work to integrate Confer's privacy technology into Meta AI.

"Ten years ago, I worked with Meta to integrate the Signal Protocol into WhatsApp for end-to-end encrypted communication. That enabled end-to-end encryption by default for billions of people. Now we're going to do the same thing again, for AI chat." - Moxie Marlinspike, Confer blog, March 2026

The parallel he draws is direct: in 2016, he helped bring end-to-end encryption to WhatsApp's 1 billion users. The result was genuine - WhatsApp messages are cryptographically protected in ways that Meta itself cannot access. He is now attempting to do something analogous for AI queries: a system where Meta's servers process AI requests without seeing the plaintext content of what users are asking.

This is technically non-trivial. The approach, called "private inference," uses techniques from the field of privacy-preserving computation - specifically secure multi-party computation and hardware-level trusted execution environments - to process AI model inferences without exposing input data in plaintext. Marlinspike's earlier blog posts at Confer describe a working implementation for his open-weight model chatbot. Extending it to Meta's frontier proprietary models is a different-order engineering challenge.
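The core idea behind secure multi-party computation can be shown with additive secret sharing, sketched below. This is a textbook illustration, not Confer's or Meta's implementation, neither of which is described in enough detail here to reproduce. It covers only a single linear step (a dot product with public weights); real model inference also involves nonlinear operations that need far heavier protocols or trusted hardware. The modulus, function names, and values are assumptions.

```python
import secrets
from typing import List, Sequence

PRIME = 2**61 - 1  # a Mersenne prime used as the modulus (assumed choice)


def share(value: int, parties: int = 2) -> List[int]:
    """Split value into random shares that sum to it modulo PRIME.
    Any subset of fewer than all shares reveals nothing about value."""
    shares = [secrets.randbelow(PRIME) for _ in range(parties - 1)]
    shares.append((value - sum(shares)) % PRIME)
    return shares


def reconstruct(shares: Sequence[int]) -> int:
    """Recombine shares into the original value."""
    return sum(shares) % PRIME


def local_linear(weights: Sequence[int], input_shares: Sequence[int]) -> int:
    """One party's work: a dot product of public weights with its own shares."""
    return sum(w * x for w, x in zip(weights, input_shares)) % PRIME


# Hypothetical private input (e.g. an encoded query) and public weights.
inputs = [3, 1, 4]
weights = [2, 5, 7]

per_input_shares = [share(x) for x in inputs]   # one list of shares per input value
party_views = list(zip(*per_input_shares))      # what each party actually receives
partials = [local_linear(weights, view) for view in party_views]

result = reconstruct(partials)
print(result)  # equals 2*3 + 5*1 + 7*4 = 39
```

Neither party ever holds the plaintext inputs, only random-looking shares, yet the recombined output is the correct dot product. The gap between this toy and private inference on a frontier model is the engineering challenge the paragraph above refers to.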

The privacy implications are genuinely complex. Meta's business model is built on advertising targeting that depends on understanding what users are interested in. An AI that processes user queries without Meta seeing the queries would represent a structural limit on that model - at least for AI-mediated interactions. Whether Meta is willing to accept that trade-off at scale, or whether the "private inference" implementation will include enough metadata collection to preserve targeting capability through indirect signals, is a question Marlinspike's announcement does not answer.

What it does establish: the most privacy-minded technologist of his generation believes the right move is engagement with Meta rather than opposition to it. That is either a hopeful sign that meaningful privacy can be built inside the world's largest social media company, or a cautionary tale about what happens to principled positions when sufficient scale is at stake.

What Comes Next: The Global Regulatory Response

Regulators in the European Union, the United Kingdom, and Brazil are all watching Meta's AI moderation transition with varying degrees of alarm. The EU's DSA framework is the most developed, but even DSA was written in an era when human content moderation was the norm and AI was the supplement. The law's requirements for "algorithmic transparency" and "independent audits" are difficult to apply to opaque neural network systems making billions of decisions per day.

The UK Online Safety Act, which came into full effect in 2025, requires platforms to conduct "content moderation risk assessments" and maintain "appropriate systems" for dealing with illegal content. The law does not specify whether "appropriate systems" must include human reviewers. Ofcom, the UK's communications regulator, is actively developing guidance on AI moderation requirements, but final rules are not expected until late 2026.

Brazil's Lei do Marco Civil and its recent AI governance addenda create similar ambiguity. Brazil has one of Meta's largest user bases globally, and Portuguese-language content has historically been a significant source of moderation failures - particularly around political misinformation during election cycles. The 2022 Brazilian election was a case study in how coordinated networks exploit gaps in moderation capacity. Whether AI systems trained primarily on North American and European data can accurately detect Brazilian political misinformation at the granularity required is an empirical question that has not been publicly tested.

In the United States, there is no federal framework governing content moderation at all. Section 230 of the Communications Decency Act protects platforms from liability for user-generated content and equally for their moderation decisions. Meta could remove human reviewers entirely tomorrow - or keep them all - and face no federal regulatory consequence for either choice. The political appetite for Section 230 reform has not translated into legislation in the eight years that debate has been active.

Key Regulatory Frameworks Governing Meta's AI Moderation Transition

- EU Digital Services Act (DSA): Requires algorithmic risk assessments and independent audits for VLOPs. Does not mandate human reviewers. Enforcement by EU Commission.

- UK Online Safety Act: Requires "appropriate systems" for illegal content. Ofcom developing AI-specific guidance through 2026.

- Brazil AI Governance Framework: Still evolving. Marco Civil creates baseline liability but no AI moderation specifics.

- US Section 230: No liability exposure for moderation decisions. Zero federal AI content moderation regulation.

- Meta Oversight Board: Quasi-independent, reviews fewer than 50 cases/year against billions of decisions. Structurally inadequate at AI decision volumes.

The result is a patchwork where the most consequential AI deployment in the history of social media is governed by rules designed for a different era, enforced by regulatory bodies that are still figuring out what questions to ask, in jurisdictions that have fundamentally different views on the balance between free expression and platform responsibility.

The Deeper Shift This Represents

Step back from the specifics and a larger pattern emerges. Meta's move is not an isolated corporate decision. It is the leading edge of a wave that will transform every large platform's approach to content governance over the next three to five years. Google's YouTube, TikTok, and X are all moving in the same direction at varying speeds.

The economic logic is irresistible. AI is faster, cheaper, and in many specific categories provably more accurate than human reviewers. For a platform Meta's size, even a modest reduction in the contractor workforce translates to hundreds of millions of dollars in annual cost savings. Those savings compound as AI capability improves and becomes more cost-efficient, while human labor costs increase with inflation and regulatory pressure for better working conditions.

But the political and social logic is more complicated. Content moderation is not just a technical problem - it is a values problem. Deciding what constitutes "harm" in a given cultural context, distinguishing political speech from incitement, calibrating between suppressing abuse and enabling organizing by marginalized communities: these are not questions that have objectively correct answers. They are questions that different societies answer differently based on their histories, legal traditions, and current political climates.

Human moderators, for all their flaws, brought cultural knowledge and contextual judgment to those questions. A Kenyan moderator reviewing Kiswahili content understood, in ways that required no training, the political history that made certain phrases loaded with significance. An AI trained on labeled datasets captured some of that - the labels themselves carry human judgment - but it is an abstracted, averaged version of cultural knowledge, not the living thing.

The bet Meta is making is that AI's gains in speed, scale, and consistency outweigh its losses in cultural granularity and contextual judgment. That bet may well be correct for the categories where the AI is performing demonstrably well: coordinated scam networks, known-image abuse material, account takeovers. It is less clearly correct for the categories that matter most to political speech, minority communities, and marginalized populations.

The troubling thing is that we will not know how that bet is performing for years. AI moderation failures, unlike human ones, do not generate whistleblowers. There is no Frances Haugen equivalent who can leak internal data about what the models are getting wrong. The transparency mechanisms that existed for human-moderated systems - workers who could talk to journalists, lawyers who could depose managers, researchers who could audit decisions - do not map cleanly onto a system where the decision-maker is a neural network and the output is a binary action taken in milliseconds.

Meta will publish quarterly transparency reports. The Oversight Board will review its 50 cases per year. The EU's auditors will examine algorithmic risk assessments that are necessarily incomplete descriptions of systems too complex for full external review. And three billion people will continue to have their online speech governed by a black box that, by any honest accounting, is both more capable and less legible than what it replaces.

That is the deal being made. Nobody voted on it. Nobody was asked.
