The Announcement That Arrived Late

Google launched something called Stitch at Google I/O 2025, and the design world had a predictable reaction. Half the Twitter/X design community declared it the future. The other half declared it a job killer. Both camps missed the actual story, which is this: by the time Google shipped a tool that turns natural language into UI mockups, the more important battle had already been decided elsewhere - in terminals, not in design canvases.

Stitch lives at stitch.withgoogle.com. It lets you describe a user interface in plain English, and it generates high-fidelity visual designs in response. You can say "a dark-themed dashboard for a crypto portfolio tracker with charts and wallet balances" and Stitch produces something that looks production-ready. It supports voice input. It handles collaborative editing. It exports to formats engineers can actually use.

This is genuinely impressive. It deserves a fair assessment. And that fair assessment is: it's the wrong tool for the moment we're in.

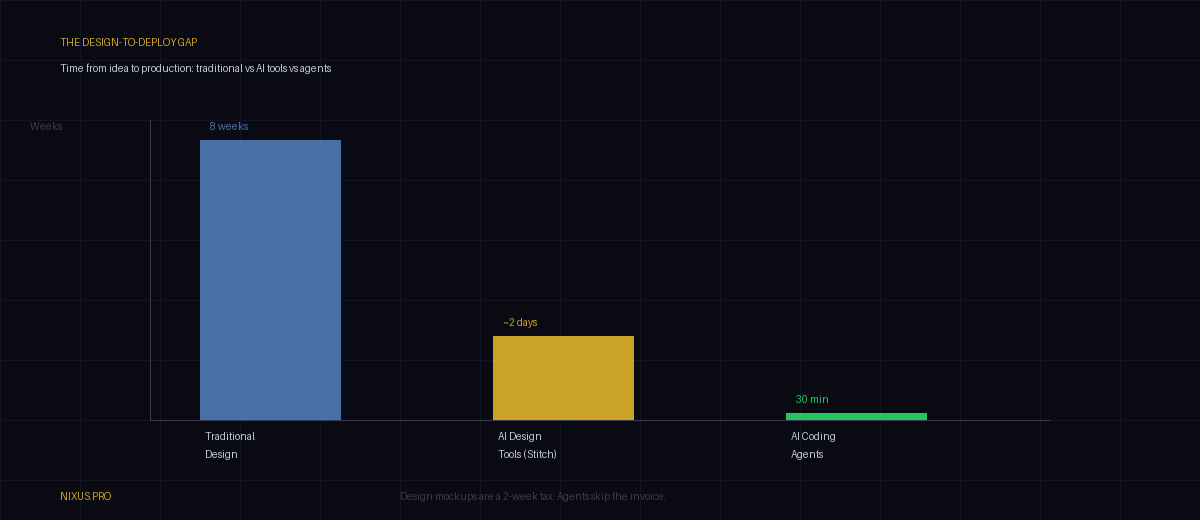

Here is the question nobody asked in the launch coverage: why does a design mockup need to exist at all in 2026? When AI coding agents can take that same plain-English description - "a dark-themed dashboard for a crypto portfolio tracker" - and ship functional React code, a deployed database schema, and a working application in under an hour, what problem does the mockup solve? Who is it for?

The core thesis: Google Stitch isn't wrong, it's just one rung too low on the stack. The design-to-code pipeline it optimizes is being eliminated, not accelerated. When agents ship directly from prompts, the mockup becomes archaeological.

The Design Tool Graveyard

To understand why Stitch feels late, you need to understand the speed at which design tools have eaten each other. The history is compressed and brutal.

Adobe Photoshop, first released in 1990, was never meant to be a UI design tool. It was a photo editor. Designers used it anyway because nothing better existed. By the late 1990s, every major website was being designed in a raster image editor built for darkroom technicians. PSD files became the lingua franca of digital product design for fifteen years. The absurdity of designing interfaces in a pixel-based photo editor was normalized entirely by the lack of alternatives.

Sketch changed that in 2010. It was vector-first, Mac-only, priced at $99, and aimed directly at the workflow problem Photoshop created. Designers moved to it with a speed the industry rarely sees. Sketch understood something Photoshop never did: UI design isn't about pixels, it's about components, states, and repeatability. By 2015, Sketch was the dominant tool for product design at tech companies. Adobe, which had ignored the market for a decade, suddenly realized it had a problem.

Figma arrived in 2016 with what felt like a lateral move - it ran in the browser. That turned out to be a vertical leap. Browser-based meant real-time collaboration, which meant designers and engineers could work in the same file simultaneously, which meant the handoff problem that had plagued product teams for years was partially solved. Figma grew fast. Adobe agreed to acquire it for $20 billion in 2022. The two companies abandoned the deal in late 2023 under regulatory pressure. Figma went public anyway in 2025 and debuted at a valuation above the price Adobe had offered. Dylan Field, Figma's co-founder, became a billionaire by making design collaborative. That was the insight. Not the tool itself - the multiplayer layer on top of it.

Now comes the AI wave: tools like Galileo, Uizard, Visily, and now Google Stitch. All of them share the same pitch: describe it, get a design. The workflow acceleration is real. What they don't mention is that the entire workflow they're accelerating is becoming redundant.

Sketch --[mac-only, no collaboration]--> Figma (2016)

Figma --[still requires engineers to build]--> AI Design Tools (2023-25)

AI Design Tools --[still just pictures]--> AI Coding Agents (2025+)

AI Coding Agents --[ship code, skip the mockup entirely]--> ???

Pattern: each disruption eliminated the bottleneck the previous tool created.

Agents eliminate the bottleneck that all design tools share: design tools still produce pictures, not products.

Each generation of design tools solved the problem the previous generation created. Sketch fixed Photoshop's raster problem. Figma fixed Sketch's collaboration problem. AI design tools are fixing Figma's time-to-first-mockup problem. But none of them - none - fixed the fundamental problem that design tools have always had: they produce pictures of products, not products.

That gap - between the design and the thing - has always required engineers to bridge it. Until now.

What Stitch Actually Does

Before we bury it, Stitch deserves a fair read. It's not a toy.

The core feature is natural language to high-fidelity UI. You describe an interface in plain text - or speak it aloud - and Stitch generates a design that goes well beyond a wireframe. It applies Google's Material Design principles, which means outputs have proper spacing, typography hierarchy, and component patterns baked in. The results look like they were made by someone who knows what they're doing.

The voice collaboration angle is genuinely new. You can iterate on designs by talking to the tool, which compresses the "I know it when I see it" iteration cycle that used to require four back-and-forths with a designer into a single conversation. That's a real workflow improvement.

Stitch also exports to code - or claims to. The quality of that export, based on early testing and reports from developers, sits somewhere between "useful starting point" and "cleaner than hand-rolling from scratch but still needs human work." It's not production-ready output. It's production-adjacent output. There's a difference.

WHAT STITCH DOES WELL

Fast iteration on visual concepts

Applies Material Design system automatically

Voice-to-design for rapid exploration

Lowers barrier for non-designers to create mockups

Collaborative editing layer

WHAT STITCH DOESN'T DO

Ship production code

Handle application state or logic

Connect to databases or APIs

Replace a frontend engineer

Eliminate the design-to-code handoff

Here's the honest summary: Stitch is a better version of what Figma does with AI assistance. If you're a product manager who needs to communicate ideas visually, or a startup founder who wants to show something to investors without hiring a designer, Stitch is useful. For those use cases, it's genuinely good.

The problem is that it's solving a problem that's being dissolved from below. The startup founder who used to need a designer to make a mockup to show investors now increasingly just... has an agent build the product. The mockup step was always a proxy for "I can't show you the thing, so here's a picture of the thing." Agents are making it possible to show the thing.

The Stitch paradox: The people who benefit most from Stitch - founders and PMs who lack design skills - are increasingly the same people whose agents are shipping working code directly. Stitch makes the step they're trying to skip slightly faster. Agents eliminate the step entirely.

The Gap Nobody Talks About

The design industry has a polite fiction it maintains, and the AI boom has made it harder to sustain. The fiction is this: design is not a handoff problem, it's a creative problem. The hard part is the visual thinking, and tools just assist that thinking.

That's half true. The creative thinking matters. What the fiction glosses over is the enormous friction that exists between a finished design and a finished product. That friction has a name in engineering teams: the handoff problem. And it has never been solved by any design tool, no matter how advanced.

Here's how the handoff works in practice. A designer finishes a screen in Figma. It looks perfect. Then an engineer opens it. The engineer sees hover states that weren't designed. They see components that don't account for empty data states. They see typography that doesn't map to any existing CSS variable in the codebase. They see animations that look smooth in the mockup and immediately ask: "how do you want this to transition?" The designer doesn't know, because they designed a still image. The mockup never ran.

This has always been the dirty secret of product design. Design tools produce artifacts that approximate reality. Engineers take those artifacts and negotiate with them to produce the actual product. The gap between the approximation and the reality is where time goes, where bugs live, and where the relationship between designers and engineers occasionally sours.

AI coding agents don't have this problem. When you prompt an agent with "build me a dark-themed crypto portfolio tracker dashboard with real-time price updates and a wallet balance sidebar," the agent doesn't produce a picture. It produces code. Running code. Code that has hover states because the component library it used has hover states. Code that handles empty states because the agent included conditional rendering. Code that you can immediately show to users and get real feedback from.
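To make "handles empty states because the agent included conditional rendering" concrete, here is a minimal sketch of what that looks like for the wallet balance sidebar. It is an illustration, not output from any particular agent: the `WalletBalance` shape, the `renderWalletSidebar` function, and the CSS class names are all hypothetical, and the function returns a plain HTML string so the example runs without a UI framework.

```typescript
// Hypothetical data shape for the wallet sidebar; not taken from any real API.
interface WalletBalance {
  symbol: string;   // ticker, e.g. "BTC"
  amount: number;   // units held
  usdValue: number; // current value in US dollars
}

// Render the sidebar as an HTML string.
// A static mockup usually shows only the populated "happy path";
// running code has to decide what an empty wallet looks like.
// Note: HTML escaping is omitted to keep the sketch short.
function renderWalletSidebar(balances: WalletBalance[]): string {
  // Empty state: no holdings yet, so show guidance instead of a blank list.
  if (balances.length === 0) {
    return '<aside class="wallet"><p class="empty">No assets yet. Add a wallet to get started.</p></aside>';
  }

  // Populated state: one list item per holding, value shown to two decimals.
  const rows = balances
    .map((b) => `<li>${b.symbol}: ${b.amount} ($${b.usdValue.toFixed(2)})</li>`)
    .join("");

  return `<aside class="wallet"><ul>${rows}</ul></aside>`;
}
```

Calling `renderWalletSidebar([])` takes the empty-state branch, which is exactly the case a still image never forces anyone to decide.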

The time savings are not incremental. They're categorical. A two-week design sprint followed by a two-week engineering sprint is a month of calendar time. An agent doing the same work directly from a text prompt takes hours. That's not an optimization. That's the elimination of an entire layer of the stack.

The Vibe Coding Revolution

The term "vibe coding" was coined (or at least popularized) by Andrej Karpathy in early 2025. His definition was deceptively simple: you tell an AI what you want, it builds it, you accept the output mostly without reading it, you iterate by telling it what's wrong rather than by reading and editing code line by line. The human provides intent. The agent provides implementation. The loop is conversational, not technical.

This is not a workflow for beginners who can't code. This is increasingly the workflow for senior engineers who can code but find that describing the outcome is faster than writing the path to it. When Cursor, Claude Code, or similar agents can hold a full codebase in context and make coherent, multi-file changes based on a sentence of direction, writing code by hand starts to look the way building layouts out of HTML tables looked in 2005: technically possible, historically normal, and clearly the wrong layer to be operating at.

The "vibe" in vibe coding is not sloppiness. It's operating at the level of what, not how. Architects don't pour concrete. They specify outcomes. Vibe coding is the moment software development gained an architect-level abstraction that actually works.

"The hottest new programming language is English." - Andrej Karpathy, 2023. He was right. He just underestimated how quickly it would become true at the product level, not just the code level.

What does this mean for design tools? It means the entire premise of "design first, then build" is being challenged from the bottom. When building is nearly free in time and cost, the design phase transforms from a necessary gate into an optional luxury. You prototype in production. You test with real users. You iterate on running software, not on pictures of software.

Andrej Karpathy: Coined "vibe coding" and - perhaps more consequentially - "Software 2.0," the idea that neural networks would eventually replace explicit code. He was describing the conceptual direction; the tools he predicted are now shipping. His framing positioned AI not as a coding assistant but as a coding replacement at the workflow level.

Dylan Field: Built a $20B company on the insight that design collaboration was broken. Correctly identified the multiplayer problem and solved it brilliantly. Now faces a harder question: if the thing designers are collaborating on becomes a less necessary artifact, what does collaboration mean? Figma's pivot to AI features (its own text-to-UI tools, Dev Mode, AI autofill) suggests Field sees the threat and is moving. Whether the move is fast enough is another question.

Google: Built something genuinely capable and shipped it with strong UX polish. The product isn't the problem. The timing and the layer are. Google Stitch is well-executed at a moment when the layer it occupies - AI-assisted visual design mockups - is being compressed from above (agents that ship directly) and below (engineers who can vibe-code without designs at all). Being right on the product and wrong on the timing is a Google specialty.

1.5 Million Designers

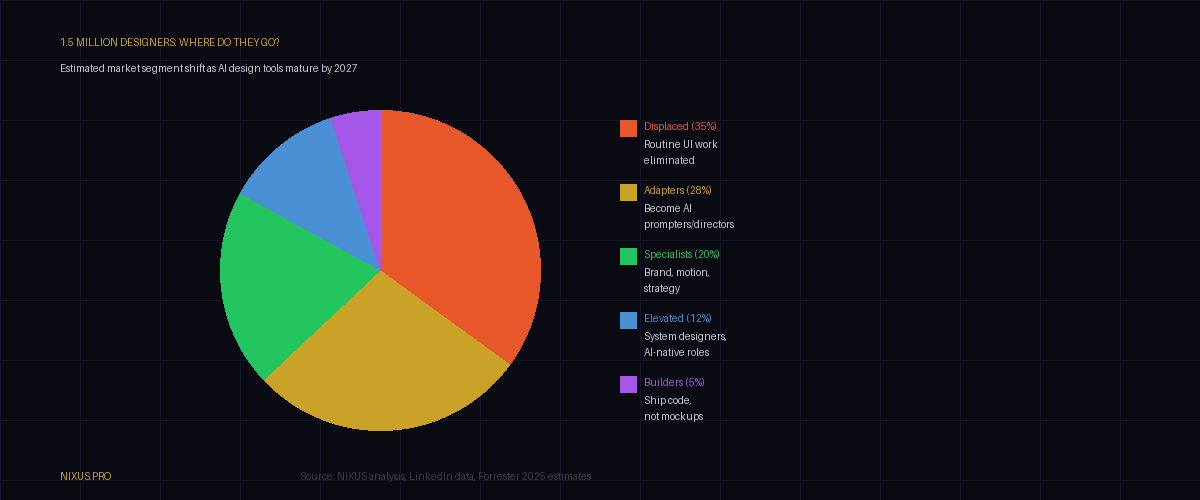

LinkedIn estimates roughly 1.5 million people carry a UI/UX designer title globally. That number grew substantially through the 2010s, driven by the mobile explosion, the rise of SaaS products, and the mainstreaming of "user experience" as a business priority rather than a nicety. Design became a well-paid, respected career path. Senior product designers at major tech companies earned $200,000 to $400,000 in total comp. Design leadership roles - VP of Design, Chief Design Officer - became standard at any company serious about product.

What happens to those 1.5 million people as AI design tools automate the routine work and AI agents reduce the need for design as a precondition of building?

The honest answer is that the distribution is not uniform. It never is in technology disruptions. The impact depends heavily on what kind of design work you do and what skills you've built beyond the tools.

Designers who do primarily routine UI work - creating screens from existing component libraries, adapting patterns to new products, converting product requirements into Figma files - are in the most direct line of displacement. This is not a harsh opinion. It's the same pattern that happened to typesetters when desktop publishing arrived, to travel agents when Expedia launched, to paralegals when contract review AI hit law firms. The routine cognitive labor goes first.

Designers who have built skills in areas where AI is weakest are in better shape. Brand identity requires human cultural intuition and the ability to encode a company's values in visual form - AI tools can produce brand-adjacent aesthetics but consistently miss the strategic layer. Motion and interaction design, particularly at the system level, requires understanding how things feel in time, not just how they look in a frame. Design strategy and research - understanding why users behave the way they do and translating that into product direction - remains human-intensive.

The designers who will thrive are the ones who saw this coming early enough to move up the stack. The prompt engineer who can direct an AI design tool to produce the right output, then direct an AI coding agent to build it, while maintaining coherent brand strategy across both - that person is not a designer in the 2015 sense. They're something closer to a product architect who happens to have taste.

The skill that survives: Taste. AI can approximate good design faster than humans. It cannot yet originate taste - the ability to recognize what is genuinely right versus what merely looks right. Designers who have cultivated taste as a skill rather than tool fluency have a longer runway than they might fear.

Google's Pattern

There is a specific Google dynamic worth naming directly, because it has repeated enough times to qualify as structural rather than accidental.

Google is exceptional at infrastructure, search, and advertising technology. It is consistently, historically, reliably late to consumer software categories that depend on social dynamics, habitual adoption, or ecosystem lock-in. The list is long enough to be embarrassing if you recite it out loud: Google Wave (2009, development ended 2010). Google Buzz (2010, shut 2011). Google+ (2011, shut 2019). Allo (2016, shut 2019). Stadia (2019, shut 2023). Google+ was the most instructive. At launch, Google could draw on an enormous existing user base across Search, Gmail, and YouTube, and its interface was better in several ways. It still lost to a product with inferior infrastructure, because Facebook had social momentum that Google couldn't replicate by being technically superior.

Design tools have a similar dynamic. The market leader isn't the best-engineered product - it's the product that became the professional default, around which an entire ecosystem of plugins, training courses, job requirements, and community norms accreted. Figma became Figma not because it was the best-built application in the world, but because it became the tool designers expected to use and companies expected designers to know. Network effects compounded by professional norms compounded by employer requirements. That's a moat Google has historically struggled to cross.

| PRODUCT | YEAR | OUTCOME | WHY IT FAILED |
|---|---|---|---|
| Google Wave | 2009 | Development ended 2010 | Too early, no ecosystem |
| Google Buzz | 2010 | Shut 2011 | Privacy scandal, no adoption |
| Google+ | 2011 | Shut 2019 | Facebook had the social moat |
| Google Allo | 2016 | Shut 2019 | WhatsApp and iMessage had the install base |
| Google Stadia | 2019 | Shut 2023 | Late, high latency, Steam had the ecosystem |
| Google Stitch | 2025 | ? | Agents are making design tools a middle layer |

There's a specific failure mode at Google that's worth naming: they tend to ship excellent technology into markets where the competitive advantage is not technology. Stadia's streaming infrastructure was technically impressive. The problem was that gamers didn't want to stream - they wanted to own, to mod, to be part of communities that had formed around Steam and consoles over decades. Google Stitch's underlying AI is impressive. The problem is that the design category is either going to be won by the player with ecosystem lock-in (Figma), or dissolved by players who don't need a design tool at all (agent-first workflows).

In the first scenario, Google is trying to unseat Figma with a technically superior product - a fight it has lost before. In the second scenario, the entire market is being deprecated. Neither is a good entry point.

Google's timeline problem: If Stitch had launched in 2022 - before vibe coding mainstreamed, before agent workflows became common, before engineers started shipping from text prompts - it would have been genuinely disruptive to Figma. Launching in 2025 means it arrived at the moment the category it's competing in started to shrink.

The Real Winner

It's not Google. It's not Figma, though Figma is better positioned than Google because it has the ecosystem. The winners of the current transition are the players building in the layer that makes design tools optional.

The coding agents - Cursor, Claude Code, GitHub Copilot, Devin, and their successors - are the actual beneficiaries of the "vibe design" moment. When Google demonstrates that a natural language prompt can produce a high-fidelity UI, they're inadvertently demonstrating that the same natural language prompt, applied one layer down the stack, produces actual software. The users watching the Stitch demo aren't thinking "I need a better design tool." The smarter ones are thinking "why am I stopping at the design?"

The abstraction layer that wins is the one that eliminates the most steps between idea and deployed product. Stitch eliminates some steps between idea and mockup. Agents eliminate the steps between idea and product. The latter is the larger prize.

Platforms and tools that let technical and non-technical users go directly from text prompt to running application - without a design phase, without an engineering handoff, without the weeks of friction between conception and production - are the ones capturing real value right now. The design mockup is, increasingly, a deliverable for stakeholders who need to approve things, not a necessary step in building things.

The future of UI production is not "better mockups faster." It's "fewer mockups, better products, shorter loops." And that future doesn't require a design tool at all - it requires an agent with taste, context, and the ability to iterate on running software rather than static pictures of software.

The tools and workflows that understand this - that treat code as the first draft, not the last deliverable, and that let users iterate on deployed products the way Figma let users iterate on mockups - are where the real disruption lives. That's the space worth watching. It's also the space that makes Google Stitch feel, on reflection, like a photo-editing suite shipping in the era of point-and-click cameras.

What Happens in the Next 24 Months

The design industry is in the last years of its current form. That's not hyperbole - it's the structural conclusion of a set of forces that are already in motion and accelerating.

Here is the most likely shape of the next two years:

Short term (0-12 months): AI design tools including Stitch gain adoption among non-designers - product managers, founders, marketers who need to mock things up quickly without hiring a designer. Designer employment stays roughly stable but job requirements shift: "Figma proficiency" becomes table stakes rather than a differentiator, while "AI tool direction" becomes the new skill on resumes. Salaries for mid-level UI designers begin to compress.

Medium term (12-24 months): Agent-first workflows cross a threshold where early-stage startups routinely skip the design phase entirely. Not all startups - brand-sensitive consumer products still benefit from careful visual design. But B2B SaaS products, internal tools, MVPs, and API-centric applications increasingly ship without a mockup step. The design tools market bifurcates: high-end strategic design work (brand, systems, complex UX research) remains human-driven and well-compensated; commodity UI work is automated at a level that reduces headcount.

The role that emerges: Something that doesn't have a clean name yet. Part product manager, part designer, part prompt engineer, part QA. A person who can direct AI systems to produce good visual and functional outputs, evaluate those outputs against real user needs and brand requirements, and iterate quickly on running software rather than static designs. This role will be well-paid because it requires genuine judgment, not just tool fluency. There will be fewer of them than there are UI designers today.

The Nixus take: If you're a designer reading this, the correct response is not anxiety - it's motion. The designers who thrive in the next phase are the ones who can direct agents, not just use design tools. The skill is judgment and direction, not craft execution. Start building that now. The window for repositioning is open but it won't stay open indefinitely.

Google Stitch is a well-built product that arrived at the wrong moment for the wrong reason. It will find users. It won't find dominance, because the category it's competing for is contracting while a more important category - agent-native product development - is expanding. This is the pattern: Google ships the last great tool for a category right as the category starts to shrink.

The mockup is not the future of building software. The agent is. And the agents that win are the ones that understand design as an output constraint, not a prerequisite step - the ones that ship working products with good aesthetics because they've internalized what good looks like, not because they asked a human designer to tell them first.

That's the shift. Design as a step is dying. Design as a discipline - the understanding of what good feels like, what users actually need, what makes a product worth using - that lives on. Just not in a tool. In the people who direct the tools.

Stitch is Google's admission that AI can design. What they didn't say is that the agents watching from one layer below are using that same intelligence to ship. The picture vs. the product. One of them is real.