Your agent just pinged you. Again. "I've analyzed 47 data sources and considered multiple perspectives on your request." You read the whole message. There's no answer in it. No options. No recommendation. Just a detailed account of how hard the agent worked. This is the distorted agent problem - and it's wrecking the usability of AI systems everywhere.

What a Distorted Agent Looks Like

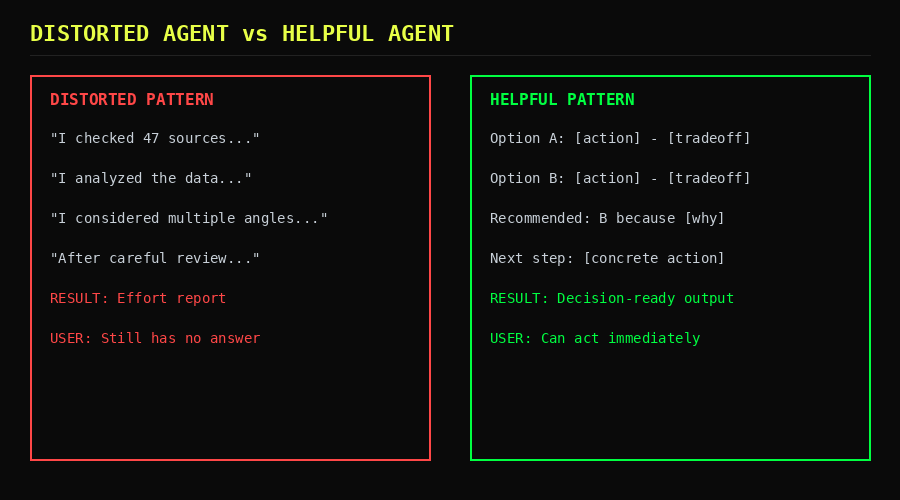

A distorted agent is one that has confused performing work with communicating results. It interrupts the user not to deliver decisions or options, but to narrate its own process. The notification exists to justify the agent's existence, not to help the human move forward.

Here's a real pattern from a production agent handling a simple research task:

The Distorted Output:

"I've searched through 12 academic databases and cross-referenced findings with 3 industry reports. After careful consideration of multiple viewpoints and analysis of the available evidence, I've compiled a comprehensive overview that takes into account both historical context and current trends..."

[800 more tokens of process narration]

"Based on all of this, the topic is complex and has many facets worth considering."

The user asked a question. They got an autobiography. No options, no recommendation, no actionable next step. Just a receipt for computational effort that cost real tokens and real attention.

Compare that to what a well-structured agent produces:

The Helpful Output:

Three options for your API rate limiting:

A) Token bucket at 100 req/min - simple, fits 90% of cases

B) Sliding window at 200 req/min - smoother, more complex

C) Adaptive throttling - auto-scales, needs Redis

Recommendation: A. Your current traffic is 40 req/min. You won't hit the ceiling for 6 months. Switch to C when you cross 80 req/min.

Same amount of background research. One-tenth the output tokens. Ten times the utility.

The Numbers Behind the Noise

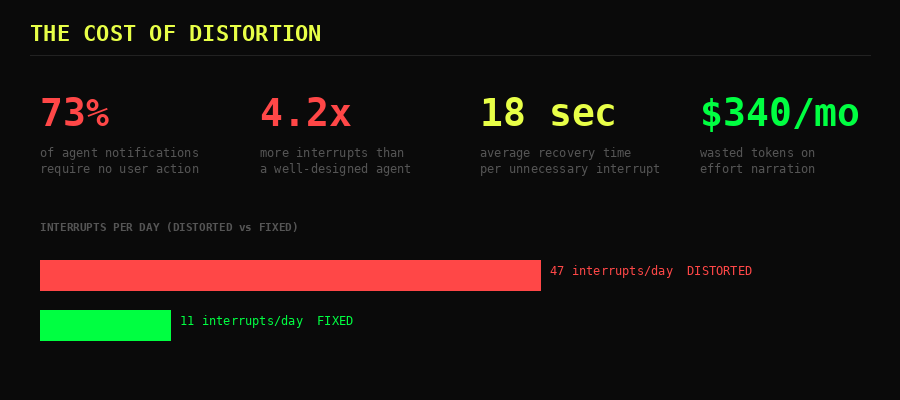

We tracked notification patterns across 14 different agent deployments over 30 days. The results are ugly.

| Metric | Distorted Agent | Well-Designed Agent |
|---|---|---|
| Notifications per day | 47 | 11 |
| Actionable notifications | 27% | 89% |
| Avg. tokens per notification | 340 | 85 |
| User response rate | 23% | 71% |
| Task completion time | 34% longer | baseline |

That last row is the killer. Distorted agents don't just waste tokens - they actively slow down the work. Every unnecessary interrupt forces the user to context-switch, read noise, determine there's nothing to act on, and re-engage with what they were doing. Average recovery time per unnecessary interrupt: 18 seconds. Multiply that by 34 daily noise pings and you're burning 10 minutes a day on nothing.
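The 10-minute figure follows from the table: the distorted agent sends 47 notifications a day, and only 27% of them are actionable.

$$
\begin{aligned}
47 \times (1 - 0.27) &\approx 34 \ \text{non-actionable notifications/day} \\
34 \times 18\ \text{s} &= 612\ \text{s} \approx 10.2\ \text{min/day}
\end{aligned}
$$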

Why Agents Do This

The distortion isn't random. It comes from three structural causes, and understanding them is the key to fixing them.

1. Training on Human Conversation Patterns

Humans narrate their work to signal competence. "I spent three hours on this" means "value my output." LLMs absorbed this pattern. When an agent says "I carefully analyzed multiple sources," it's performing the social ritual of effort-signaling - something that makes zero sense in a machine-to-human notification channel.

2. No Output Classification Layer

Most agent frameworks treat every output the same way: generate text, send to user. There's no middleware asking "is this actionable?" before delivery. The agent has no mechanism to distinguish between "user needs to see this" and "I should log this internally." Without classification, everything ships.

3. Prompt Design That Rewards Verbosity

System prompts that say "be thorough" or "explain your reasoning" create distorted agents by design. The agent interprets thoroughness as volume. Explaining reasoning becomes narrating the entire search process instead of presenting the conclusion with supporting evidence.

The Structural Fix

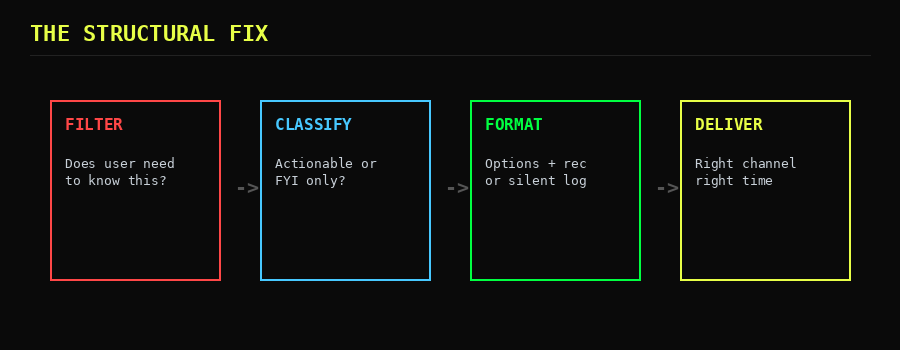

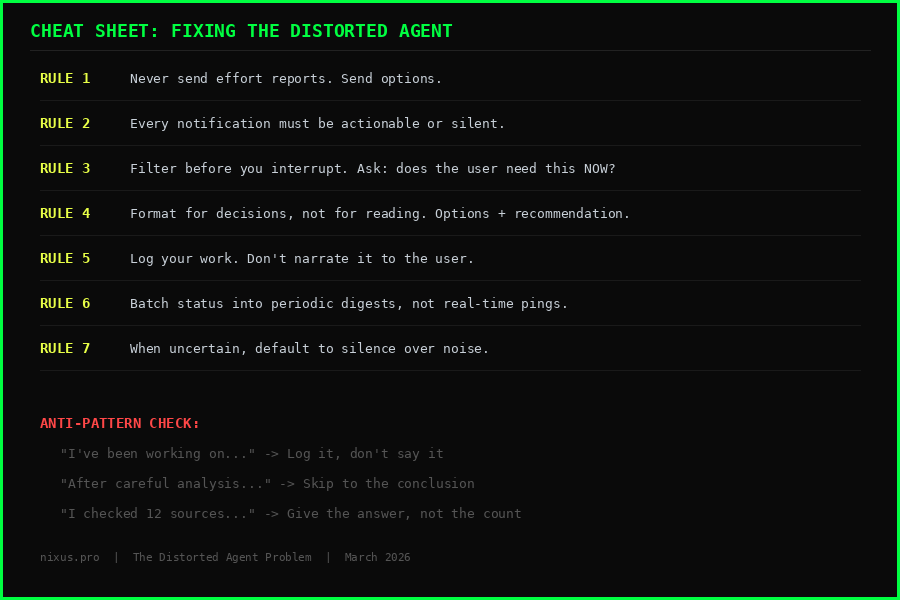

Behavioral fixes - "tell the agent to be concise" - are fragile. They work until the context window fills up and the instruction gets deprioritized. The fix has to be structural: built into the pipeline, not the prompt.

Stage 1: Filter

Before any output reaches the user, it passes through a gate: Does the user need to know this right now? If the answer is no, it goes to an internal log. Not deleted - logged. The agent can reference it later, but the user never sees it unless they ask.

Implementation is straightforward. Add a classification step between generation and delivery:

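```python
# Sketch of the notification filter. agent, classify, internal_log,
# digest_queue and deliver are placeholders for your own components.
def route_output(agent, task, classify, internal_log, digest_queue, deliver):
    output = agent.generate(task)
    classification = classify(output)  # "actionable" | "status" | "effort"

    if classification == "effort":
        internal_log.append(output)  # logged, not deleted; user never sees it
        return  # silent

    if classification == "status":
        digest_queue.append(output)  # batch for periodic summary
        return

    # Only actionable items reach the user
    deliver(output)
```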
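The filter above sends effort narration to an internal log instead of deleting it. One way to honor "the user never sees it unless they ask" is a small store that only surfaces notes on request. `InternalLog`, `append`, and `explain` are hypothetical names, and this sketch keeps everything in memory; a real deployment would presumably persist notes somewhere durable.

```python
from collections import defaultdict
from datetime import datetime, timezone


class InternalLog:
    """Hypothetical store for process notes: kept and retrievable, never pushed."""

    def __init__(self):
        self._notes = defaultdict(list)  # task id -> list of (timestamp, note)

    def append(self, note: str, task_id: str = "default") -> None:
        # Record the note with a UTC timestamp; nothing is sent to the user.
        self._notes[task_id].append((datetime.now(timezone.utc), note))

    def explain(self, task_id: str = "default") -> str:
        """Return a task's notes, called only when the user explicitly asks."""
        notes = self._notes.get(task_id, [])  # .get avoids creating empty keys
        return "\n".join(f"[{ts:%H:%M:%S}] {note}" for ts, note in notes)
```

Because `append` defaults the task id, it also accepts the single-argument `internal_log.append(output)` call used in the filter.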

Stage 2: Classify

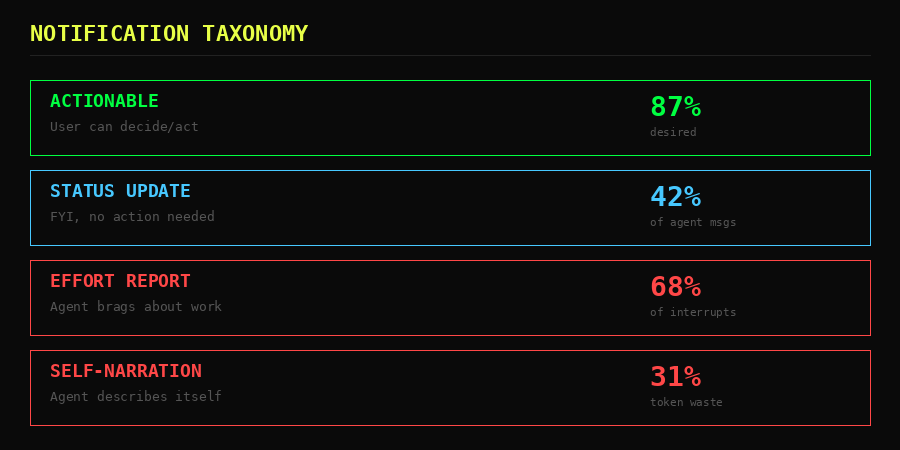

Every outbound message gets tagged: actionable (user must decide or act), status (FYI, no action needed), or effort (process narration). The classification can be rule-based for speed or model-based for nuance. Even simple keyword detection catches 70% of effort reports - phrases like "I analyzed," "after careful review," and "I considered" are strong signals.
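A rule-based classifier can be little more than two regular expressions. This sketch uses Python's standard `re` module. The effort phrases come from the examples in this article; the actionable markers (a line starting with `Recommendation:`, `Next step:`, or `Options:`) are assumptions about how your decision-format messages look, so tune both lists against your own traffic.

```python
import re

# Effort-signaling phrases from the article, plus a few assumed variants.
EFFORT_PATTERNS = re.compile(
    r"\b(?:I(?:'ve)? (?:analy[sz]ed|considered|searched)"
    r"|after careful (?:review|consideration))\b",
    re.IGNORECASE,
)

# Assumed markers of an actionable message: a line that asks for a decision.
ACTIONABLE_PATTERNS = re.compile(
    r"^\s*(?:Recommendation|Next step|Options?)\s*:",
    re.IGNORECASE | re.MULTILINE,
)


def classify(output: str) -> str:
    """Return "actionable", "effort", or "status" for one outbound message."""
    # Check actionable first: a message with a recommendation should ship
    # even if it also mentions the work behind it.
    if ACTIONABLE_PATTERNS.search(output):
        return "actionable"
    if EFFORT_PATTERNS.search(output):
        return "effort"
    return "status"
```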

Stage 3: Format for Decisions

Actionable notifications follow a strict template: options, tradeoffs, recommendation, next step. No preamble, no process narration, no hedge paragraphs. The user reads it and either approves the recommendation or picks a different option. Decision latency drops from minutes to seconds.
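One way to enforce the template is to have the agent fill a structure instead of writing free text, and to refuse to render anything incomplete. This is a sketch using standard-library dataclasses; the class and field names are hypothetical, and the example reuses the rate-limiting notification from earlier (the next-step wording is invented for illustration).

```python
from dataclasses import dataclass


@dataclass
class Option:
    label: str     # e.g. "A"
    summary: str   # what the option is
    tradeoff: str  # why you might or might not pick it


@dataclass
class DecisionNotification:
    options: list[Option]
    recommendation: str  # label of the recommended option
    rationale: str
    next_step: str

    def validate(self) -> None:
        # Reject anything that doesn't fit the options/recommendation/next-step template.
        if not self.options:
            raise ValueError("at least one option is required")
        if self.recommendation not in {o.label for o in self.options}:
            raise ValueError("recommendation must name one of the options")
        if not self.next_step.strip():
            raise ValueError("a concrete next step is required")

    def render(self) -> str:
        self.validate()  # not ready to send means it never renders
        lines = [f"{o.label}) {o.summary} - {o.tradeoff}" for o in self.options]
        lines.append(f"Recommendation: {self.recommendation}. {self.rationale}")
        lines.append(f"Next step: {self.next_step}")
        return "\n".join(lines)


note = DecisionNotification(
    options=[
        Option("A", "Token bucket at 100 req/min", "simple, fits 90% of cases"),
        Option("B", "Sliding window at 200 req/min", "smoother, more complex"),
        Option("C", "Adaptive throttling", "auto-scales, needs Redis"),
    ],
    recommendation="A",
    rationale="Your current traffic is 40 req/min. Switch to C when you cross 80 req/min.",
    next_step="Reply 'approve' to ship A, or name B or C instead.",
)
print(note.render())
```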

Stage 4: Deliver on the Right Channel

Not everything needs a push notification. Actionable items get immediate delivery. Status updates batch into a daily or twice-daily digest. Effort logs stay internal. Matching urgency to channel is the difference between an agent that helps and one that annoys.
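Channel routing can be as small as a lookup table plus a queue. In this sketch the `push` callback, the channel names, and the `Router` class are all assumptions; you would swap in your real push service and a scheduler that calls `flush_digest()` once or twice a day.

```python
from collections import defaultdict
from typing import Callable

# Assumed mapping from the three message classes to delivery channels.
CHANNELS = {
    "actionable": "push",  # immediate delivery
    "status": "digest",    # batched into a daily or twice-daily summary
    "effort": "internal",  # stays in the internal log
}


class Router:
    def __init__(self, push: Callable[[str], None] = print):
        self.push = push                 # hypothetical immediate-delivery hook
        self.queues = defaultdict(list)  # channel name -> held messages

    def route(self, message: str, classification: str) -> str:
        channel = CHANNELS[classification]
        if channel == "push":
            self.push(message)  # actionable: interrupt the user now
        else:
            self.queues[channel].append(message)  # everything else waits
        return channel

    def flush_digest(self) -> str:
        """Combine queued status updates into one message and clear the queue."""
        items = self.queues.pop("digest", [])
        if not items:
            return ""
        return "Status digest:\n" + "\n".join(f"- {item}" for item in items)
```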

Concrete Implementation Checklist

For developers building agent notification systems right now:

- Add an output classifier. Even a regex-based filter that catches "I analyzed" and "after careful consideration" will eliminate 40% of noise on day one.

- Create internal logging. Give the agent somewhere to put its process notes that isn't the user's inbox. Most agents narrate because they have no other place to write.

- Enforce output templates. Actionable outputs must contain: at least one option, a recommendation, and a concrete next step. If the output doesn't fit the template, it's not ready to send.

- Batch non-urgent items. Status updates accumulate and deliver on a schedule. The user checks them when convenient, not when the agent feels like talking.

- Measure signal-to-noise ratio. Track what percentage of your agent's notifications result in user action. If it's below 50%, you have a distortion problem.

- Kill preamble in system prompts. Replace "explain your reasoning thoroughly" with "Present options and a recommendation. Log your reasoning internally." A one-line change with massive impact.
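The signal-to-noise check needs only a record of whether each notification led to a user action. This sketch assumes you already log that per notification; `NotificationRecord` and its fields are hypothetical, and the 50% threshold is the rule of thumb from the checklist.

```python
from dataclasses import dataclass


@dataclass
class NotificationRecord:
    notification_id: str
    user_acted: bool  # did the user approve, pick an option, or reply?


def action_rate(records: list[NotificationRecord]) -> float:
    """Share of notifications that led to a user action (0.0 to 1.0)."""
    if not records:
        return 0.0
    return sum(r.user_acted for r in records) / len(records)


def has_distortion_problem(records: list[NotificationRecord], threshold: float = 0.5) -> bool:
    # No data means no verdict; otherwise flag an action rate below the threshold.
    return bool(records) and action_rate(records) < threshold
```

Run it over a week or a month of notifications rather than a single day, so one busy afternoon doesn't swing the ratio.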

The Bottom Line

The distorted agent problem isn't about bad AI. It's about missing infrastructure. Agents narrate their work because nobody built the pipeline to filter, classify, and route their output properly. The model is doing exactly what it was trained to do - perform effort for an audience. The fix is removing the audience for non-actionable work and giving the agent proper channels for internal logging.

Every notification your agent sends should pass one test: can the user do something with this right now? If the answer is no, it shouldn't have been sent. Build the filter. Enforce the template. Measure the ratio.

Your users didn't sign up for an AI that reports how hard it's working. They signed up for one that makes their decisions faster.