Disney's Olaf Robot Was Trained in 48 Hours. The AI Behind It Could Reshape Everything.

At Nvidia GTC 2026, Disney Imagineering revealed a robotic character that crosses the uncanny valley - not through years of hand-animation, but through 100,000 virtual simulations run over two days. The method is the story. And the implications go far beyond theme parks.

Reinforcement learning is enabling robots to develop naturalistic movement in days, not years. [Unsplash / conceptual]

The first thing journalists noticed when they met Olaf was that he waddles. Not in a crude, mechanical way - but in the specific, buoyant, slightly-too-heavy way that a magical snowman who has just discovered what warm hugs feel like would move. Every step communicated personality before a single word was spoken.

That waddling gait was not programmed by a human animator. It was learned. Over the course of 48 hours, 100,000 virtual copies of the Olaf robot ran inside an Nvidia GPU simulation, rewarded when they stood upright and walked with Frozen-accurate motion, penalized when they fell. By the end of that process, a single Nvidia RTX 4090 had produced a walking behavior that Disney Imagineers say would have taken a team of engineers years to hand-code.

This is the real story from Nvidia GTC 2026. Not the chip announcements or the space data centers. It is a story about what happens when the simulation tools developed for autonomous vehicles and warehouse robots get pointed at something entirely different: making a beloved cartoon character feel real enough to make a child cry happy tears.

The Problem Disney Had Been Trying to Solve for Decades

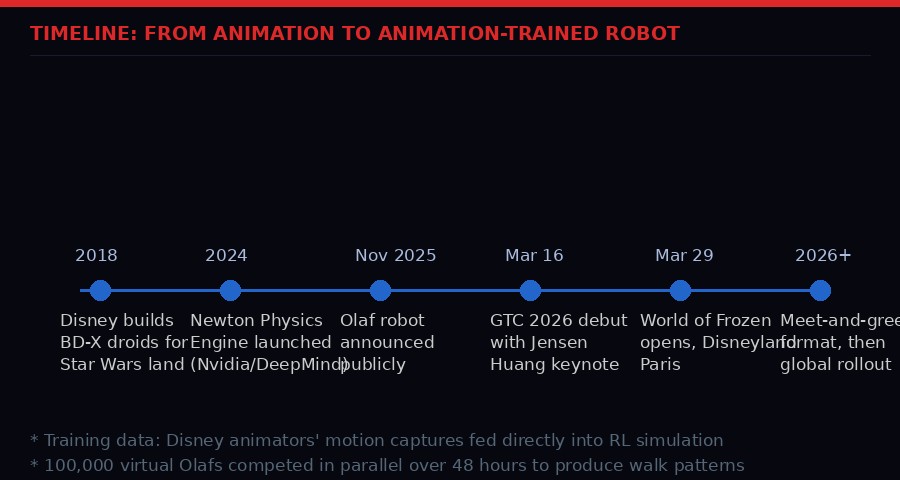

Disney Imagineering has built animatronic figures since the 1960s. The Lincoln figure at the 1964 World's Fair was revolutionary. The Pirates of the Caribbean robots that followed became the template for theme park entertainment worldwide. But every single one of them was static in a fundamental sense - designed for one position, one scene, one set of movements, repeated on a loop forever.

The challenge Disney had been circling for years: how do you create a character that can actually move around in an open space, respond to an audience, and perform thousands of different interactions without requiring a team of engineers to pre-program every possible motion?

They made early progress with mobile characters. The BD-X droids that debuted in Star Wars: Galaxy's Edge at Disneyland in 2024 could wander through the park and interact with visitors. But those were, in the words of Disney Imagineering VP Josh Gorin, "robots being robots." They moved like robots move - functional, purposeful, mechanical. Nobody was projecting a soul onto them.

Olaf is different. Olaf is their first "animated character" - a robot trained not just to walk, but to walk like Olaf. The distinction sounds superficial. The engineering gap behind it is enormous.

"We don't want our guests to see technology. We want our guests to fall in love with their favorite characters in the film. So, to do that, we bring in animation training data. We get the actual animators who worked on the Frozen films to create the poses and the movements, and we feed that into the training data." - Josh Gorin, VP of R&D, Walt Disney Imagineering

How the Training Actually Works: Reinforcement Learning Meets Character Animation

The technical backbone is reinforcement learning, the same AI technique used to train AlphaGo, OpenAI Five, and modern autonomous driving systems. But Disney's implementation has a twist that makes it genuinely novel in the robotics world.

Standard RL for walking robots works by rewarding the agent for maintaining balance, minimizing energy expenditure, and reaching target positions. You get robots that walk efficiently. They look like robots. The motion is optimized for function, not for character.

Disney's approach layers in a second dimension of reward: screen accuracy. The animators who worked on the original Frozen films captured reference poses and movement sequences in a studio. Those reference animations became part of the training objective. The simulated Olafs were rewarded not just for walking without falling, but for walking with the specific momentum transfer, head bobble, and arm swing that match the film's character design.
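One common way to express this kind of layered objective is a weighted sum of a task term and an imitation term. The sketch below is illustrative only: the function names, the weights, the exponential tracking kernel, and the use of joint angles as the pose representation are all assumptions, not details Disney has published.

```python
import math
from typing import Sequence


def task_reward(torso_tilt_rad: float, forward_speed: float, target_speed: float) -> float:
    """Hypothetical task term: stay upright and move near a target speed."""
    upright = math.cos(torso_tilt_rad)  # 1.0 when perfectly upright
    speed = math.exp(-(forward_speed - target_speed) ** 2)  # 1.0 at target speed
    return 0.5 * upright + 0.5 * speed


def style_reward(joint_angles: Sequence[float],
                 reference_angles: Sequence[float],
                 sharpness: float = 2.0) -> float:
    """Hypothetical style term: 1.0 when the pose matches the animator's reference."""
    if len(joint_angles) != len(reference_angles):
        raise ValueError("pose and reference must have the same number of joints")
    squared_error = sum((q - r) ** 2 for q, r in zip(joint_angles, reference_angles))
    return math.exp(-sharpness * squared_error)


def total_reward(torso_tilt_rad: float, forward_speed: float, target_speed: float,
                 joint_angles: Sequence[float], reference_angles: Sequence[float],
                 fell: bool, w_task: float = 0.4, w_style: float = 0.6,
                 fall_penalty: float = 1.0) -> float:
    """Weighted sum of task and style terms; falling overrides both (assumed weights)."""
    if fell:
        return -fall_penalty
    task = task_reward(torso_tilt_rad, forward_speed, target_speed)
    style = style_reward(joint_angles, reference_angles)
    return w_task * task + w_style * style


# Made-up three-joint reference pose for illustration.
reference = [0.10, -0.25, 0.40]
on_model = total_reward(0.0, 0.5, 0.5, [0.10, -0.25, 0.40], reference, fell=False)
off_model = total_reward(0.0, 0.5, 0.5, [0.60, 0.30, -0.20], reference, fell=False)
fallen = total_reward(0.0, 0.5, 0.5, reference, reference, fell=True)
```

In this toy setup, both poses walk equally well, but the on-model pose scores higher because it matches the reference. That is the sense in which a style term can pull a policy toward character-accurate motion rather than merely efficient motion.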

The simulation environment runs inside Nvidia's Isaac Lab, a platform built for robot learning that can run thousands of parallel physics simulations simultaneously on GPU hardware. Disney contributed its own simulation tool, called Kamino, which models the robot's specific mechanical constraints in extreme detail - every motor torque, every wire routing, even a thermal dynamics model that predicts when joints are at risk of overheating.
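To get a feel for what massive parallelism buys, here is a back-of-envelope calculation using the article's figures of 100,000 copies and 48 hours. The real-time factor is an assumption made purely for illustration; the actual per-copy simulation speed has not been reported here and could be higher or lower.

```python
NUM_ENVS = 100_000        # parallel simulated Olafs (from the article)
WALL_CLOCK_HOURS = 48     # training duration (from the article)
REALTIME_FACTOR = 1.0     # ASSUMPTION: each copy advances one simulated second per real second

sim_hours = NUM_ENVS * WALL_CLOCK_HOURS * REALTIME_FACTOR
sim_years = sim_hours / (24 * 365)

print(f"{sim_hours:,.0f} simulated hours, about {sim_years:,.0f} years of practice")
```

Under that assumption, two days of wall-clock time would correspond to roughly 4.8 million simulated hours, or on the order of five centuries of walking practice for a single robot.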

Olaf's head is disproportionately large relative to his neck, just like in the films. That creates a mechanical problem that would be obvious to any robotics engineer: the neck joint bears enormous load and heats up rapidly under movement. Kamino's thermal model meant the training process could learn to avoid movement patterns that would destroy the hardware over time, without any engineer having to manually specify those limits.
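How a thermal model can steer training is easiest to see with a toy version. The sketch below uses a first-order lumped model (heating proportional to torque squared, Newtonian cooling toward ambient) and a soft-limit penalty. It is not Kamino's model: every constant, name, and limit here is a made-up assumption for illustration.

```python
from typing import Iterable, Tuple


def step_temperature(temp_c: float, torque_nm: float, dt_s: float,
                     ambient_c: float = 25.0, heat_gain: float = 0.8,
                     cooling_rate: float = 0.05) -> float:
    """Advance a hypothetical joint temperature by one explicit Euler step."""
    heating = heat_gain * torque_nm ** 2            # harder effort, more heat
    cooling = cooling_rate * (temp_c - ambient_c)   # heat lost to surroundings
    return temp_c + dt_s * (heating - cooling)


def overheat_penalty(temp_c: float, soft_limit_c: float = 70.0,
                     scale: float = 0.1) -> float:
    """Zero below the assumed soft limit, growing linearly above it."""
    return scale * max(0.0, temp_c - soft_limit_c)


def simulate(torques: Iterable[float], dt_s: float = 0.1,
             start_c: float = 25.0) -> Tuple[float, float]:
    """Return (final temperature, accumulated penalty) for a torque sequence."""
    temp = start_c
    total_penalty = 0.0
    for torque in torques:
        temp = step_temperature(temp, torque, dt_s)
        total_penalty += overheat_penalty(temp)
    return temp, total_penalty


# Sixty simulated seconds of a gentle versus an aggressive neck motion.
gentle_temp, gentle_penalty = simulate([1.0] * 600)
aggressive_temp, aggressive_penalty = simulate([3.0] * 600)
```

With these invented constants the gentle motion never reaches the soft limit and incurs no penalty, while the aggressive motion crosses it and accumulates one. Subtracting such a penalty from the reward is one plausible way a learner could discover hardware-friendly motion without an engineer specifying the limits by hand.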

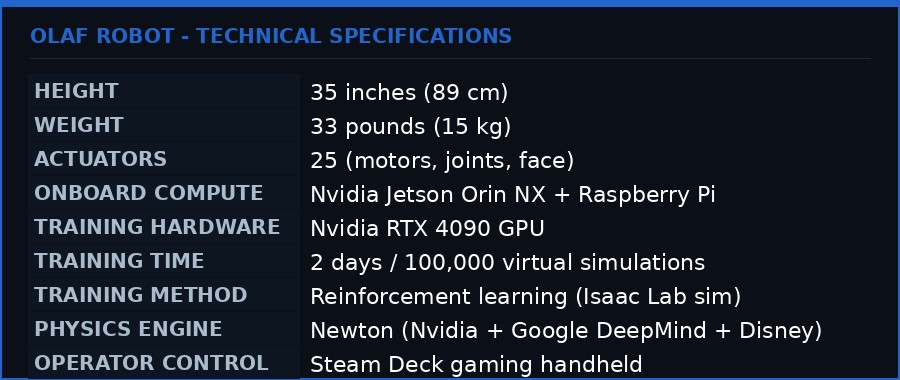

Olaf's hardware specs: 25 actuators, dual compute boards, and trained on a single RTX 4090 in 48 hours.

The Newton Physics Engine: Why This Collaboration Matters

The simulation platform underpinning all of this is not purely a Disney or Nvidia creation. The Newton Physics Engine - which provides the physical fidelity that makes simulation-to-reality transfer actually work - was jointly developed by Nvidia, Google DeepMind, and Disney Research, and is managed by the Linux Foundation as an open-source project.

That three-way collaboration is not accidental. It reflects a growing consensus in the robotics field that the simulation bottleneck - the gap between how robots behave in training environments and how they behave in the real world - requires physics engines of extraordinary precision to close.

Newton is built on Nvidia Warp, a GPU-accelerated Python framework for spatial computing, and integrates with both Nvidia's Isaac Sim environment and Google DeepMind's MuJoCo simulator. This means that researchers and engineers using any of the dominant robotics simulation tools can now share a common physics foundation, improving comparability between experiments and reducing the work required to port trained behaviors between different simulation environments.

For Disney, the practical benefit was the ability to model Olaf's unusual mechanical properties with enough physical accuracy that behaviors trained in simulation would transfer directly to the physical robot without manual tuning. Disney Research is also contributing Kamino - its own simulation tool for "extremely complex mechanical assemblies" - back to the open-source community.

This is Disney doing something unusual: sharing proprietary R&D tooling rather than hoarding it as competitive advantage. Kyle Laughlin, Imagineering's SVP of R&D, gave a straightforward explanation. The bottleneck is no longer the simulation tools themselves. The bottleneck is the character data - the animation references, the performance captures, the years of storytelling craft embedded in how a character moves. That data is Disney's moat. The physics engine is infrastructure everybody benefits from improving.

"This absolutely is the future of how we're building robot characters. Reinforcement learning is the true unlock that could let Disney populate entire lands full of interactive characters - now that entire robots can be built in months instead of years." - Kyle Laughlin, SVP of R&D, Walt Disney Imagineering

The Uncanny Valley Question - And Why Olaf Passes

The uncanny valley - the psychological phenomenon where near-human robots provoke discomfort rather than empathy - is usually discussed as an aesthetic problem. Make the robot look more human and you fall into the valley; make it obviously mechanical and you avoid it.

Olaf does not attempt to escape the uncanny valley through abstraction. Olaf is clearly a cartoon snowman. Three spheres, a carrot nose, stick arms. There is nothing anatomically human about the design. And yet every journalist who has met the robot reports the same experience: they keep writing "he" even when they know better. They feel something while watching the waddling gait.

Disney Research lab director Moritz Baecher identified the key mechanism in his briefing to journalists: "The eyes go first, and the body follows." The human brain is wired to interpret eye movement as intentionality. When Olaf's eyes track something, the observer's brain infers a subject attending to an object. That cognitive reflex fires regardless of whether the "eyes" belong to a biological organism or a mechanical snowman.

What reinforcement learning contributes to this experience is secondary motion - the thousand small adjustments that happen when a living thing moves and that are absent when a machine moves. Weight transfer through the spine. Head lag when the body turns. The slight asymmetry of a real step versus a perfectly replicated one. None of these were individually programmed. They emerged from the training process because they are consequences of the physics the simulation models, and because the animation reference data implicitly encodes them.

This is the fundamental promise of RL for robotic character animation: you do not have to specify lifelike behavior directly. You specify the character's constraints, the physics of the environment, and the quality metric (screen accuracy in this case), and lifelike behavior falls out of the optimization. A human animator looking at thousands of Olaf motions and selecting the best ones could not do this faster. The GPU running 100,000 parallel simulations can.
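The "specify the metric, let optimization find the behavior" idea can be shown with a deliberately tiny stand-in. The sketch below does not use reinforcement learning; it swaps in plain random-search hill climbing over two gait parameters, scored against a made-up reference curve. The reference curve, parameter names, and step sizes are all hypothetical.

```python
import math
import random
from typing import List, Tuple


def reference_motion(t: float) -> float:
    """Stand-in for an animator-authored joint curve (hypothetical)."""
    return 0.3 * math.sin(2.0 * t)


def score(params: Tuple[float, float], times: List[float]) -> float:
    """Quality metric: negative mean squared error against the reference."""
    amplitude, frequency = params
    error = sum((amplitude * math.sin(frequency * t) - reference_motion(t)) ** 2
                for t in times)
    return -error / len(times)


def hill_climb(steps: int = 2000, seed: int = 0) -> Tuple[Tuple[float, float], float, float]:
    """Randomly perturb parameters, keeping a candidate only if it scores higher."""
    rng = random.Random(seed)
    times = [0.05 * i for i in range(100)]
    params = (1.0, 1.0)  # arbitrary starting gait
    initial = best = score(params, times)
    for _ in range(steps):
        candidate = (params[0] + rng.gauss(0.0, 0.05),
                     params[1] + rng.gauss(0.0, 0.05))
        candidate_score = score(candidate, times)
        if candidate_score > best:
            params, best = candidate, candidate_score
    return params, initial, best


params, initial_score, final_score = hill_climb()
```

Nothing in the loop describes what a good motion looks like; it only measures closeness to the reference and keeps improvements. Real RL pipelines are far more sophisticated, but the division of labor is similar: people define the metric, and compute does the searching.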

From Star Wars droids to Frozen snowmen: Disney's robotics roadmap accelerated dramatically with the reinforcement learning unlock.

The GTC Moment: Olaf Meets Jensen Huang

Nvidia's GTC 2026 keynote on March 16 was, by any measure, a maximalist event. Jensen Huang announced space-based data centers. He previewed the Vera Rubin AI supercomputer architecture. He demoed the NemoClaw security stack for autonomous agents. He introduced the Agent Toolkit and the OpenShell runtime that partners ranging from Cisco to SAP are now integrating.

And then Olaf walked on stage.

The choice was deliberate. Physical AI - the application of deep learning to robots that interact with the physical world - is Nvidia's declared next frontier after large language models. The company has been positioning Isaac Lab, Newton Physics, and its robotics simulation stack as the infrastructure layer for that transition. GTC 2026 needed a demo that communicated the promise viscerally, to an audience that might not sit through a technical explanation of sim-to-real transfer but would absolutely respond to a cartoon snowman waddling across a stage at the San Jose Convention Center.

Huang framed the broader shift in his keynote: "Mac and Windows are the operating systems for the personal computer. OpenClaw is the operating system for personal AI." The implication - that physical AI would eventually have its own OS-level infrastructure moment - hung in the air as Olaf made his entrance.

The strategic messaging was careful. In this work, Nvidia is positioning itself as more than a chip company. It is providing the simulation stack (Isaac Lab, Newton), the hardware for training (RTX 4090 for Olaf's development, DGX systems for enterprise users), the onboard inference compute (Jetson Orin NX inside the robot itself), and now the agent platform software (NemoClaw, OpenShell) that manages the AI components at runtime. Olaf, waddling on stage, was a demonstration of vertical integration as much as it was a demonstration of robotics.

What Comes After the Theme Park: The Industrial Implications

Disney's immediate roadmap is theme park rollout. Olaf debuts in the World of Frozen at Disneyland Paris on March 29, initially aboard a boat where he can be visible to large numbers of guests simultaneously. Hong Kong Disneyland follows this summer. The meet-and-greet format - which requires one-on-one interaction and ideally physical contact with guests - is the stated "North Star" but has no announced date.

The industrial implications extend well beyond entertainment. The techniques Disney used to train Olaf are the same techniques being applied to warehouse logistics robots, construction site inspection drones, surgical assistants, and elder care companions. What Disney demonstrated is that the gap between "character motion reference data" and "working robot behavior" can be bridged in 48 hours of GPU time - not months of engineering.

This changes the economics of robot deployment in any domain where human-like or character-appropriate motion is a requirement rather than a nice-to-have. Healthcare robots designed for patient interaction face an uncanny valley problem of their own. Elder care companions need to move in ways that do not trigger the discomfort response in cognitively vulnerable users. Service robots in hospitality settings need naturalness to avoid disrupting the social fabric of the environments they work in.

All of those applications now have a proof of concept for how to solve the motion training problem. The proof of concept is named Olaf. He is 35 inches tall, weighs 33 pounds, and was trained on a gaming GPU over a weekend.

"Unlike an animatronic that's designed for one scene and one ride, this can be used in parades and meet-and-greets, in atmospheric entertainment, in shows. This allows us to truly think about them as total and complete characters." - Josh Gorin, VP of R&D, Walt Disney Imagineering and Disney Live Entertainment Innovation

The Open-Source Bet: Kamino, Newton, and Why Disney is Sharing Its Tools

One aspect of the GTC announcement that has received relatively little attention is the extent to which Disney is contributing its internal robotics R&D tooling to the open-source community. Kamino - the simulation tool developed to simulate "extremely complex mechanical assemblies" like Olaf - is being released alongside Newton.

This is a significant departure from historical Disney behavior. The company is legendarily protective of its intellectual property. It has extended copyright terms, pursued aggressive licensing litigation, and guarded its theme park design methodologies with near-paranoid secrecy for decades. The decision to open-source its core robotics simulation tooling signals a genuine shift in strategic calculus.

The logic: simulation tools are infrastructure. Like operating systems, compilers, and physics libraries before them, they become more valuable when the entire ecosystem builds on top of them. A Disney-proprietary simulation tool that only Disney engineers can use produces less value than a Disney-contributed simulation tool that thousands of researchers and robotics companies improve year over year.

The competitive moat Disney is preserving is its character data - the decades of animation work, performance captures, voice recordings with original cast members, and storytelling expertise that make a robot feel like Olaf rather than just a robot that walks like a snowman. That data is not being open-sourced. It is, in fact, the thing that makes the simulation tools valuable to Disney specifically. By open-sourcing the tools while keeping the character data, Disney creates a situation where improving Newton and Kamino is in everyone's interest, but the specific use case that Disney cares about - making beloved characters feel real - remains exclusively theirs.

It is a sophisticated play for a company not typically associated with open-source strategy. And it reflects the same pattern playing out across the AI industry: infrastructure commoditizes, while proprietary data and character become the defensible layer.

The Second-Order Effects Nobody Is Talking About

Step back from the press release framing and some uncomfortable questions emerge.

If a walking, character-accurate robot can be trained in 48 hours on consumer GPU hardware, what does that mean for the labor market in industries that currently employ humans for hospitality, service, and companion care roles? The same training pipeline that produced Olaf could be applied to produce an elder care companion trained on reference data from professional caregivers, or a hotel concierge robot trained on reference data from human front desk staff.

Disney is building towards a future where its theme parks are populated with AI-powered interactive characters at scale. Laughlin explicitly said the goal is to "populate entire lands full of interactive characters." The current Olaf requires a human operator with a Steam Deck to control its responses in real time. That is a temporary constraint. The trajectory of AI voice and conversation systems over the past 24 months makes it reasonable to predict that autonomous conversation capability will be integrated into this hardware type within a relatively short time horizon.

At that point, the job of theme park character performer - a skilled, competitive, physically demanding career path for trained entertainers - faces pressure from a system that never needs a break, can be replicated indefinitely, and represents a fixed capital expense rather than an ongoing labor cost.

There is also a surveillance question. A robot operating in a public theme park environment, with onboard compute and sensor capabilities, is necessarily collecting data about every person it interacts with. Disney has not published detailed specifications of what the Olaf robot's sensor suite captures beyond what is needed for the operator to control its gaze and movement. In an era of increasingly aggressive data monetization and biometric tracking, the deployment of AI-powered interactive robots in spaces where children are present warrants serious scrutiny of data collection practices.

None of these questions diminish the genuine technical achievement. They are, however, the questions that tend to go unasked when a demonstration is charming enough to make journalists forget they are writing about a machine.

The Bigger Picture: Physical AI's First Pop-Culture Moment

Robotics has had technically impressive demonstrations before. Boston Dynamics' Atlas backflip videos go viral regularly. Spot the robot dog has appeared at construction sites, power plants, and police demonstrations. Humanoid robots from Figure, Agility, and 1X are being deployed in warehouses.

But none of those systems has had a pop-culture character moment the way Olaf has. The Boston Dynamics robots are impressive precisely because they look mechanical - the parkour is astonishing because you do not expect a machine to do it. Olaf works for the opposite reason: you keep forgetting he is a machine.

That distinction matters for public perception of robotics in a way that has real policy implications. The public debate about robot deployment in workplaces, healthcare settings, and public spaces has been largely abstract - fears about job displacement and surveillance attaching to systems that most people have never interacted with. Olaf puts a face on the technology that is specifically designed to generate positive emotional responses.

The risk is not that people will be tricked. Everyone who meets Olaf knows they are meeting a robot. The risk is subtler: that the emotional warmth generated by a well-executed character robot shapes public attitudes toward robotic systems in general in ways that make careful policy consideration harder. It is difficult to maintain critical distance from a 35-inch snowman who wobbles toward you looking delighted to say hello.

Jensen Huang closed his GTC keynote with a statement that, heard in light of everything Disney revealed that week, takes on extra weight: "The beginning of a new renaissance in software." He was talking about autonomous AI agents. But he could just as easily have been talking about the moment a robot trained in 48 hours on a gaming GPU convinced a room full of technology journalists that they had just met someone.

That someone is coming to Disneyland Paris on March 29. He is three feet tall, made of foam and motors, and his name is Olaf. What he represents is considerably larger.

Sources: Nvidia GTC 2026 press release | Newton Physics Engine (Nvidia Developer) | Gizmodo / io9 hands-on | The Verge on-site report | Nvidia Agent Toolkit announcement