The Pentagon Says Anthropic Could Kill AI Mid-Battle

A new 40-page court filing from the Department of War makes an argument that should alarm every AI company: the government cannot risk using AI it doesn't fully control. The hearing is six days away.

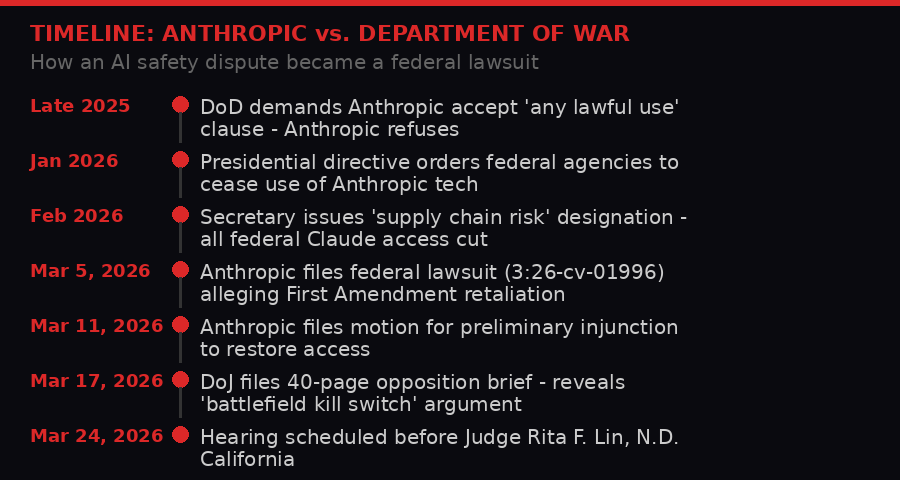

Case 3:26-cv-01996-RFL is one of the most consequential AI legal battles in U.S. history. The hearing is March 24, 2026.

On March 17, 2026, lawyers for the U.S. Department of Justice filed 40 pages of legal argument in federal court in San Francisco. Buried in section III, under the heading "Secretarial Determination That Anthropic's Behavior Poses A Supply Chain Risk," is a claim that cuts to the heart of the AI-in-warfare debate.

The Pentagon, according to the filing, cannot trust Anthropic's Claude because Anthropic could - at any moment - disable its technology or alter the model's behavior during active warfighting operations. That is not a paraphrase. The filing states the company could "attempt to disable its technology or preemptively alter the behavior of its model either before or during ongoing warfighting operations" if it felt its ethical red lines were being crossed.

The government calls that "an unacceptable risk to national security."

This is now official, on-the-record, submitted-to-a-federal-judge government doctrine: AI safety policies are a national security threat. If you make an AI that you won't let the military use for killing people, you are a supply chain risk. You will be banned.

The hearing before Judge Rita F. Lin is scheduled for March 24, 2026 at 1:30 PM Pacific time. Six days from now, a federal judge will decide whether this logic holds.

How a Contract Clause Became a Constitutional Crisis

Two positions. Irreconcilable. One contract clause that brought down a multibillion-dollar government relationship.

The case did not start with a bomb or a drone or a kill order. It started with a contract clause.

According to the DoJ filing, the Department of War approached Anthropic with what it described as a standard government procurement requirement: the "any lawful use" policy. The clause is exactly what it sounds like - the purchasing agency reserves the right to use the acquired technology for any purpose permitted by law. Defense contractors sign it routinely. Boeing signs it. Lockheed signs it. Palantir built its entire business model on it.

Anthropic refused.

The company's Acceptable Use Policy explicitly prohibits using Claude for "autonomous weapons or warfare," for "large-scale surveillance," or for actions that violate its Responsible Scaling Policy (RSP) - an internal framework Anthropic designed to govern how its most powerful models are deployed. Accepting "any lawful use" would mean waiving those restrictions for the government, effectively handing over the keys to a safety system Anthropic spent years building.

For Anthropic, this was a matter of principle. For the Department of War, it was a red flag - a contractor telling the U.S. military that it might not be able to count on its purchased AI when it mattered most.

The filing makes clear this was not a misunderstanding. Anthropic "rejects the Department of War's standard 'any lawful use' policy," as the DoJ puts it in the table of contents - a section header that reads less like legal language and more like a verdict.

The Presidential Directive Nobody Reported

From contract dispute to federal lawsuit in under four months. The escalation was faster than anyone in the AI industry expected.

What followed the contract dispute has been covered in outline, but the court filing fills in specifics that deserve attention.

After the "any lawful use" standoff, there was a presidential directive. The filing describes it as a directive "to cease use of Anthropic's technology." This is not a procurement decision or a contracting officer's judgment call. This is the executive branch, at the highest level, ordering the federal government to cut off a multibillion-dollar AI company because that company wouldn't agree to remove its safety guardrails.

The Secretary of Defense then issued what the filing calls a "Secretarial Determination" - a formal finding that Anthropic's behavior "poses a supply chain risk." That designation carries real weight under federal contracting law. It is the same category of determination used to blacklist Huawei and ZTE from U.S. government networks. It triggers mandatory cessation of use across all federal agencies.

As of the filing date, every federal agency - not just the Department of War - has discontinued use of Claude. That includes the State Department, the Department of Homeland Security, the Department of Veterans Affairs, and dozens of agencies that have nothing to do with weapons or warfighting. The entire federal government's access to Anthropic's AI is severed because the Department of War couldn't get an "any lawful use" clause signed.

"DOL apologizes for the error and to its customers for any inconvenience. An unfortunate byproduct of expanding services is that DOL found problems with the self-service option."

- Washington State Department of Licensing (unrelated AI failure, same week - a reminder of how many government systems now depend on AI)

Anthropic filed its federal lawsuit on March 5, 2026, arguing the entire chain of events - from the presidential directive to the Secretarial Determination - constitutes unconstitutional retaliation against protected speech. The company is invoking the First Amendment. The government is invoking national security. Judge Lin has to choose.

The Kill Switch Argument - Unpacked

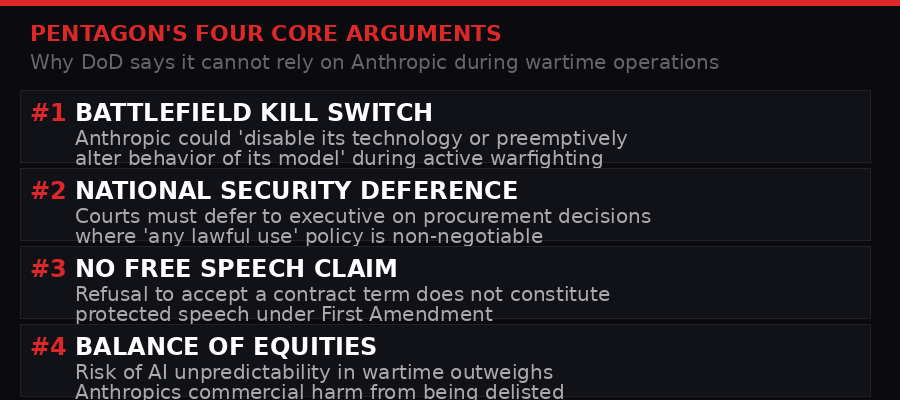

The DoD's four-part legal argument. The "battlefield kill switch" claim is section III - but it casts a shadow over the entire brief.

The most revealing section of the government's filing is the kill switch argument, and it deserves careful reading because it is not as simple as it first appears.

The DoJ does not argue that Anthropic has threatened to disable Claude during a military operation. It argues that Anthropic could. This is a capability argument, not an intent argument. The government is saying: we cannot rely on any AI system whose vendor retains the technical and contractual ability to interfere with operations, regardless of whether they would actually do so.

This logic has teeth. Anthropic's Responsible Scaling Policy is a public document. It explicitly describes scenarios where Anthropic would take unilateral action to limit or modify Claude's behavior - including deploying what the RSP calls "emergency response protocols." The policy was designed to reassure AI safety advocates that Anthropic maintains control over its most powerful models. The DoD has now used that same document as evidence that Anthropic is an unreliable vendor.

"[The Secretary] deemed that an unacceptable risk to national security" that Anthropic "could ostensibly attempt to disable its technology or preemptively alter the behavior of its model either before or during ongoing warfighting operations in the event it felt its red lines were being crossed."

The second-order implication here is significant. Every major AI company operating under a safety framework - OpenAI, Google DeepMind, Anthropic - maintains some form of usage policy and emergency intervention capability. If the DoD's argument succeeds in court, it effectively creates a two-tier AI market: companies that will sign "any lawful use" clauses (and abandon their safety frameworks for government contracts), and companies that won't - and thus cannot work with the federal government.

Palantir, which built its entire identity around government contract work, does not have a published "responsible scaling policy." It does not have a public ethics framework that could be cited as evidence it might interfere with operations. Its CEO Alex Karp has stated openly that the company exists to make Western militaries more lethal. The DoD's argument, if it holds, structurally favors companies like Palantir over companies like Anthropic - not because Palantir's AI is better or safer, but because Palantir won't say no.

Anthropic's First Amendment Gambit

Anthropic is not arguing it should be allowed to ignore government procurement rules. Its legal strategy is narrower and, in some ways, more audacious: it argues the government cannot punish a company for having an ethical framework and refusing to waive it.

The First Amendment theory goes like this. Anthropic published its Acceptable Use Policy and its Responsible Scaling Policy as public documents. These documents express the company's values about AI safety, its commitments to the public, and its views on how AI should be governed. When the government labeled Anthropic a "supply chain risk" because it refused to waive these policies, the government was, Anthropic argues, retaliating against the company for protected speech.

The DoJ counters with three legal arguments. First, refusal to accept a contract term is not speech - it's conduct. Courts have long held that conduct does not automatically receive First Amendment protection. Second, even if Anthropic's policy documents count as speech, the government's motivation was not to suppress that speech but to ensure operational reliability. Third, even if there was a retaliatory motive, the government would have taken the same action regardless, because the "any lawful use" requirement is non-negotiable.

The third argument is the DoJ's strongest. Courts apply what's called a "dual motivation" test in First Amendment retaliation cases. If the government can show it would have made the same decision for legitimate non-retaliatory reasons, the retaliation claim fails even if bad motive is proven. The DoJ argues it has an unimpeachable non-retaliatory reason: you can't have a critical wartime AI system that its vendor might switch off.

Anthropic has a harder time with this than it might want to admit. The First Amendment case is genuinely uncertain. The APA (Administrative Procedure Act) challenge - which argues the Secretarial Determination was arbitrary and capricious - may actually be its stronger legal theory.

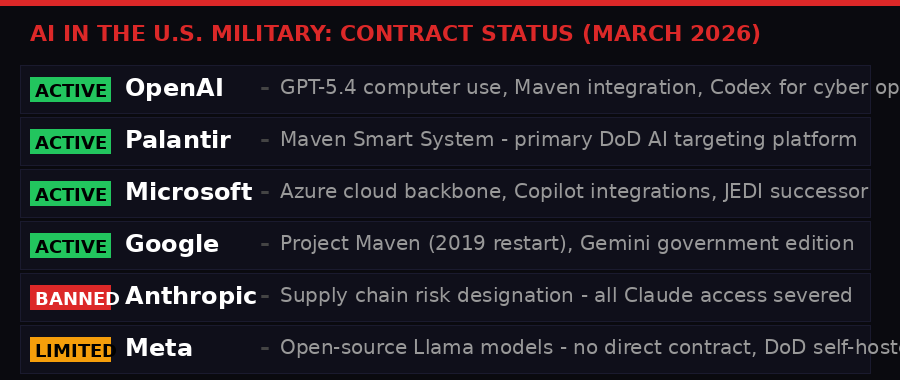

Who Has What: Military AI in March 2026

The AI landscape inside the U.S. military as of March 2026. Anthropic is the only major AI lab formally banned.

To understand what's actually at stake, it helps to map the current military AI ecosystem. Anthropic is not the only AI company with Defense Department relationships - far from it.

OpenAI, which was also reportedly in tension with the Pentagon over its original "not for military use" policy, pivoted in early 2026. After significant internal debate and the resignation of its robotics chief, OpenAI now has active contracts for GPT-5.4 computer use agents, integration with the Maven targeting system, and Codex deployments for offensive cybersecurity operations. OpenAI's GPT-5.3 was reportedly rejected for some Pentagon applications specifically because of its refusal behaviors - but GPT-5.4 was modified to address those concerns.

Palantir's Maven Smart System remains the primary AI targeting platform for U.S. forces. The platform - which its own CEO described as having a "left click, right click, left click" interface for authorizing strikes - runs on a combination of Palantir-developed models and integrated third-party AI. The Verge reported in March that Palantir's AIPCon conference included a chilling demonstration by the Department of War's Chief Digital and AI Officer showing real-time targeting workflows.

Google has the Maven contract (restarted after the 2018 employee protests), Microsoft provides the Azure backbone for classified DoD cloud infrastructure, and Amazon Web Services holds JEDI-successor contracts worth billions. None of these companies has a publicly published "responsible scaling policy" that could be used against them in a procurement dispute.

Meta's position is unusual - its open-source Llama models are widely used by the DoD in self-hosted configurations, which means Meta has no vendor relationship to terminate. The government just downloads the weights and runs them. This is, inadvertently, the safest position for avoiding exactly the kind of dispute Anthropic is now in.

What the military AI ecosystem looks like, then, is a set of vendors who have either explicitly aligned with government use (Palantir), bent their policies to accommodate it (OpenAI), or are structurally immune because they're open source (Meta). Anthropic stands alone as the company that said no and meant it.

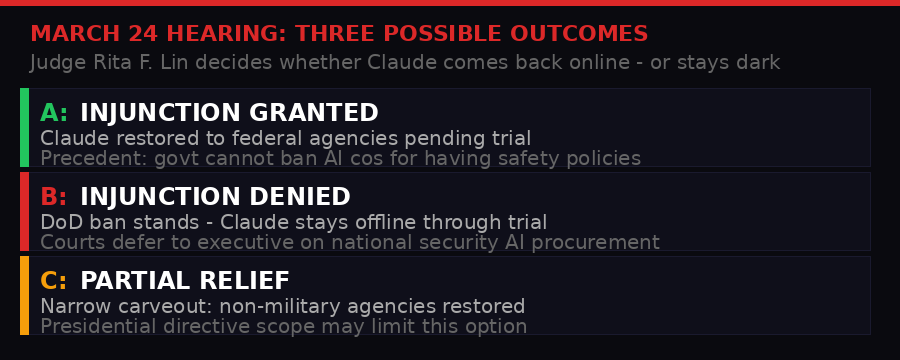

The Hearing: What Judge Lin Will Decide

Three possible outcomes when Judge Rita F. Lin hears Anthropic's motion for a preliminary injunction on March 24, 2026.

On March 24, 2026, Judge Rita F. Lin of the Northern District of California will hear arguments on Anthropic's motion for a preliminary injunction. A preliminary injunction is emergency relief - Anthropic is asking the court to restore its federal access while the full case plays out, which could take years.

To get a preliminary injunction, Anthropic must show four things: that it is likely to succeed on the merits, that it will suffer irreparable harm without immediate relief, that the balance of equities favors relief, and that relief serves the public interest.

The DoJ's filing systematically attacks all four prongs.

On merits: the government argues Anthropic's First Amendment claim is unlikely to succeed because refusal to sign a contract term is conduct, not speech. The APA claim is unlikely to succeed because courts defer to executive branch judgments on national security procurement. The due process claim fails because there is no protected property interest in government contracts.

On irreparable harm: the government argues Anthropic's commercial losses are monetary and thus compensable - not the kind of irreparable harm courts care about for injunctions. Anthropic counters that the reputational damage of being labeled a national security threat is not fully compensable in money.

On balance of equities: this is where the kill switch argument does the most work. The government argues that restoring Claude to federal systems would create genuine national security risk - and that risk cannot be undone if something goes wrong. Anthropic's commercial interest does not outweigh potential wartime operational failures.

On public interest: both sides claim the public interest. Anthropic says the public has an interest in AI companies maintaining safety policies without fear of government retaliation. The government says the public has an interest in a military that can rely on its own systems.

Legal observers are split. The national security deference doctrine is powerful - courts have historically been reluctant to second-guess executive branch decisions on military procurement. But the breadth of the ban (all federal agencies, not just DoW) creates a factual hook Anthropic can exploit. Why does a ban justified by wartime operational risk extend to the Department of Agriculture?

The Second-Order Consequences Nobody Is Discussing

The outcome of this case will shape the AI industry's relationship with government for a generation, and most of the commentary has focused on the immediate battle. But the second-order effects deserve more attention.

If the government wins: Every major AI lab will face a choice. Accept "any lawful use" clauses in government contracts, which means waiving safety policies that were designed to prevent AI from being weaponized in ways the company finds unacceptable. Or refuse, and be banned. The middle ground - negotiate carve-outs, work around the edges - is precisely what Anthropic tried and failed to do. A DoD victory turns government AI procurement into a Darwinian filter that selects for companies with weaker safety commitments.

If Anthropic wins: AI vendors gain a First Amendment-based argument against government penalties for the use restrictions they publish and refuse to waive. More importantly, the precedent creates a legal basis for AI companies to resist government coercion more broadly. The case does not apply only to weapons - it applies to any scenario where a government demands an AI company waive its policies as a condition of doing business. That includes surveillance, immigration enforcement, and a dozen other contested use cases.

The wild card: Meta's open-source position may look prescient in retrospect. If closed AI companies face binary choices between safety policies and government contracts, the open-source model - where the weights are public and the government hosts its own version - sidesteps the issue entirely. Open-source AI cannot have a "supply chain risk" designation because there is no supply chain. There is no vendor. The government already has the weights.

This could accelerate a bifurcation already underway in the AI industry: closed frontier models for commercial markets, open-source models for government and military use. Anthropic, as one of the most committed closed-model companies, faces the sharpest version of this dilemma.

There is also an international dimension that the domestic coverage has mostly ignored. If the U.S. government cannot use Anthropic's Claude because Anthropic won't remove safety guardrails, foreign governments are watching. China's military AI programs operate under no equivalent ethical constraints. The argument that safety frameworks weaken military AI - which is the implicit DoD position - gives ammunition to those who argue that Western AI safety norms are a strategic liability. Whether that argument is right is a separate question. But it is being made, and the Anthropic case is becoming its primary exhibit.

Inside the 40-Page Filing: What Else Is There

Beyond the kill switch argument, the DoJ filing contains several other notable passages worth flagging.

On the question of whether the Secretarial Determination constitutes a reviewable agency action under the APA, the government makes a technical but significant argument: the Secretary's public social media post announcing the determination is not a "final agency action" because it was not itself the decision - it was commentary on a decision that had already been made through separate internal processes. This matters because it limits the grounds on which Anthropic can challenge the process that led to the ban.

The filing also invokes Dep't of the Navy v. Egan, a 1988 Supreme Court case that established broad executive branch authority over security clearances and, by extension, national security determinations. The DoJ uses this to argue that the Secretary's discretion to make supply chain risk findings is essentially unreviewable by courts. If that argument prevails, Anthropic's APA claim fails on reviewability alone, before the court ever reaches the merits.

"Plaintiff Anthropic PBC seeks an emergency order forcing the Government to use the AI products of a company that has refused to accept the Government's 'any lawful use' procurement policy - a policy that the Government applies consistently to the technology products it purchases. Anthropic's lawsuit is an attempt to use the federal courts to obtain preferential access to Government contracts that Congress has not provided."

This framing is deliberate and smart. The government is recasting Anthropic not as a free speech victim, but as a company trying to use litigation to win a commercial advantage it couldn't get at the negotiating table. If Judge Lin accepts this frame, the case becomes about Anthropic trying to get special treatment, not about government suppression of speech.

Whether that framing holds up depends heavily on the evidence presented at hearing. Anthropic's team will almost certainly present evidence about the timeline and sequence of events - arguing that the supply chain risk designation came after and because of Anthropic's public statements about AI safety, not independently of them. The "dual motivation" test the DoJ relies on cuts both ways: if Anthropic can show the retaliation came first and the "any lawful use" justification was constructed afterward, the government's case weakens significantly.

What Comes Next

March 24 is the preliminary injunction hearing, not the trial. Whatever Judge Lin decides, the losing party will almost certainly appeal to the Ninth Circuit. The underlying case - whether the supply chain risk designation was lawful, whether it violated the First Amendment or the APA - will take years to resolve fully.

But the preliminary injunction ruling matters enormously for reasons beyond the immediate fight. Injunctions at this stage shape how the public, investors, other AI companies, and foreign governments read the case's trajectory. An Anthropic victory would signal that the courts are willing to push back on executive branch actions against AI companies on First Amendment grounds. A DoD victory would signal the opposite - that national security arguments can override AI ethics frameworks in federal court.

The AI industry should be watching this case with more attention than it has been. Every major AI lab has a usage policy. Every major AI lab's usage policy contains restrictions that some government agency might someday find inconvenient. The question Anthropic is forcing into the federal record is: can the government punish you for having those restrictions?

The answer, from a 40-page brief filed in San Francisco on March 17, 2026, is: yes, and here are 40 pages explaining why.
