The AI Power War: How Your Electricity Bill Is Paying for the Chatbot Boom

MARCH 15, 2026 — 04:47 CET

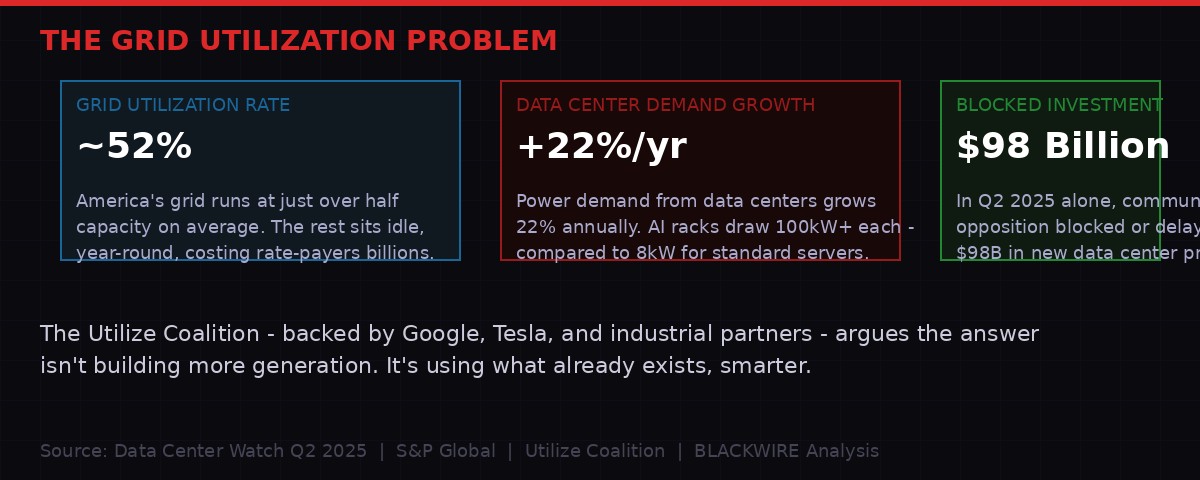

Google and Tesla just joined a coalition to squeeze more electricity out of America's aging grid. A single AI server rack can draw as much power as 100 homes. And communities across the country have blocked or delayed $98 billion in data center projects, in part because their utility bills make it look as though the grid itself is being held hostage.

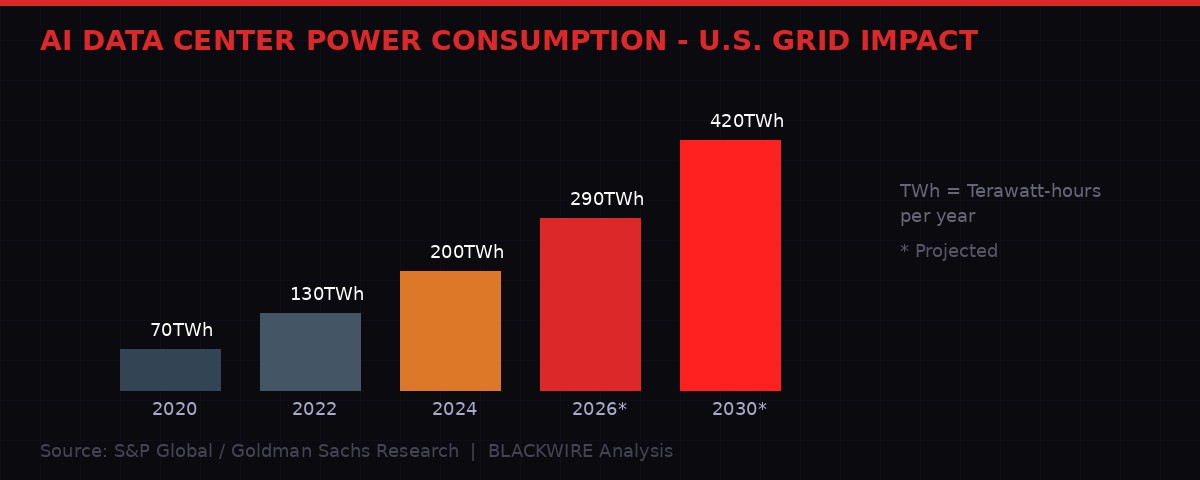

U.S. data center power demand, historical and projected through 2030. Source: S&P Global / Goldman Sachs Research / BLACKWIRE Analysis

This is not an abstract infrastructure debate. It is a physical fight over who bears the cost of the most energy-intensive technological buildout in American history. The AI industry needs power at a scale the grid was never designed to provide. The companies behind it have decided the answer is not to build new generation - it is to squeeze harder on what already exists, and pass the math problem to ratepayers.

The Utilize coalition, launched in early 2026 and backed by Google, Tesla, and a roster of utilities and technology service providers, wants to transform how America uses its existing grid. The pitch sounds reasonable on the surface: the grid runs at roughly 52 percent of its capacity on average. Use it smarter, and you can serve AI's appetite without building dozens of new power plants. The reality, as communities from Indiana to Louisiana to Memphis are discovering, is considerably more complicated.

America's Grid Was Built for a Different World

The U.S. electric grid is not a single system. It is a patchwork of regional grids, operated by different entities, built over a century of incremental investment, and designed around one core principle: have enough capacity to handle the absolute peak demand on the hottest summer afternoon of the year, then keep the lights on the rest of the time.

That design creates the utilization problem Utilize is pitching solutions to. On most hours of most days, massive amounts of generation capacity, transmission lines, and distribution infrastructure sit idle. The "restaurant that's always staffed but usually empty" analogy used by the coalition is accurate as far as it goes. Ratepayers subsidize that idle capacity through fixed infrastructure charges embedded in their electricity bills.

If you could flatten the demand curve - shift some consumption to off-peak hours, store cheap overnight electricity in batteries for daytime peaks, use demand response programs to temporarily reduce large industrial loads during crunch periods - the grid could theoretically handle significantly more total consumption without building new plants. This is the premise behind virtual power plants, battery storage aggregation, and grid-enhancing technologies.

"Electricity costs are driven by a simple equation: the cost of the grid divided by how much electricity we sell over it. Providing more power through the grid we have lowers costs for everyone." - Utilize Coalition, official statement, 2026
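The coalition's equation is easy to check with a few lines of arithmetic. The sketch below uses entirely hypothetical figures (a $1 billion annual grid cost and 10 TWh of sales are illustrative assumptions, not numbers from Utilize or any utility) to show when spreading fixed costs over more sales lowers the per-kWh charge, and when it does not.

```python
def fixed_cost_per_kwh(annual_grid_cost_usd: float, annual_kwh_sold: float) -> float:
    """Average fixed grid cost recovered per kWh sold (cost of grid / energy sold)."""
    if annual_kwh_sold <= 0:
        raise ValueError("annual_kwh_sold must be positive")
    return annual_grid_cost_usd / annual_kwh_sold


# Hypothetical utility: $1 billion of fixed grid cost, 10 TWh sold per year.
base = fixed_cost_per_kwh(1_000_000_000, 10_000_000_000)

# Same grid, 20 percent more electricity sold over it: the per-kWh cost falls.
more_sales = fixed_cost_per_kwh(1_000_000_000, 12_000_000_000)

# 20 percent more sales, but serving them adds 30 percent to grid cost
# (an assumed figure standing in for new plants and lines): the per-kWh cost rises.
new_build = fixed_cost_per_kwh(1_300_000_000, 12_000_000_000)

print(f"baseline:           ${base:.4f}/kWh")
print(f"more sales only:    ${more_sales:.4f}/kWh")
print(f"more sales + build: ${new_build:.4f}/kWh")
```

Under these assumptions, the equation favors ratepayers only while the numerator stays flat. Once new load forces new infrastructure into the rate base, the same formula can push bills up.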

The logic holds under certain conditions. The problem is the sheer scale of what AI infrastructure is demanding, and how fast that demand is arriving.

The Scale Problem Nobody Is Talking About Honestly

Key statistics: grid utilization rate, data center demand growth, and blocked investment figures. Source: Data Center Watch Q2 2025 / S&P Global / BLACKWIRE Analysis

A standard corporate server rack draws roughly 8 kilowatts of power. A high-density AI rack - the kind needed to run modern inference workloads for large language models and image generators - draws between 80 and 100 kilowatts. That is the equivalent of 80 to 100 average American homes running simultaneously, from a single stack of equipment about the size of a wardrobe. According to S&P Global analyst Dan Thompson, power demand for data centers grew 22 percent in 2025 and is projected to nearly triple by 2030.
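The rack comparison can be reproduced as a rough calculation. This is a minimal sketch using the article's 8 kW and 80 to 100 kW figures; the 1 kW average household draw is an assumption implied by the "80 to 100 homes" comparison, not a measured value, and the annual total assumes the rack runs at full draw all year.

```python
HOURS_PER_YEAR = 8760

STANDARD_RACK_KW = 8           # article's figure for a standard corporate rack
AI_RACK_KW_RANGE = (80, 100)   # article's range for a high-density AI rack
ASSUMED_HOME_AVG_KW = 1.0      # assumption implied by the article's homes comparison


def rack_comparison(ai_rack_kw: float) -> dict:
    """Express one AI rack in standard racks, homes, and annual energy."""
    return {
        "standard_racks_equivalent": ai_rack_kw / STANDARD_RACK_KW,
        "homes_equivalent": ai_rack_kw / ASSUMED_HOME_AVG_KW,
        # kW times hours gives kWh; divide by 1000 for MWh.
        "annual_mwh_if_run_continuously": ai_rack_kw * HOURS_PER_YEAR / 1000,
    }


for kw in AI_RACK_KW_RANGE:
    print(kw, "kW:", rack_comparison(kw))
```

Changing `ASSUMED_HOME_AVG_KW` to a different household average shifts the homes figure proportionally, which is why the comparison should be read as an order-of-magnitude claim.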

Goldman Sachs has modeled data center power demand hitting ~500 TWh annually by the end of the decade - a volume larger than the entire electricity consumption of France. Much of that growth is concentrated geographically: Northern Virginia (the densest data center market on Earth), Phoenix, Chicago, and Atlanta. Those grids are already straining.

The Utilize coalition's argument - use the grid smarter, fill in the off-peak valleys - runs into a hard physical constraint: AI inference workloads do not have off-peak hours. When someone queries ChatGPT at 3 AM, those servers are drawing full power. When a Fortune 500 company runs batch AI processing overnight because compute is cheaper, it is already filling the off-peak valley the coalition is counting on. The consumption is continuous, not cyclical. You cannot shift it to Tuesday mornings to smooth the curve.

Battery storage can help, but not at the scale being discussed. A typical grid-scale battery installation covers a few hours of demand smoothing. Even the largest projects in the country deliver several hundred megawatts for only a few hours before they need to recharge. A single hyperscale AI data center can draw hundreds of megawatts continuously. The math does not close without new generation - which is exactly what tech companies are trying to avoid, because new generation takes a decade to permit and build, and carries costs that they would eventually bear through grid connection charges.

The Communities Saying No

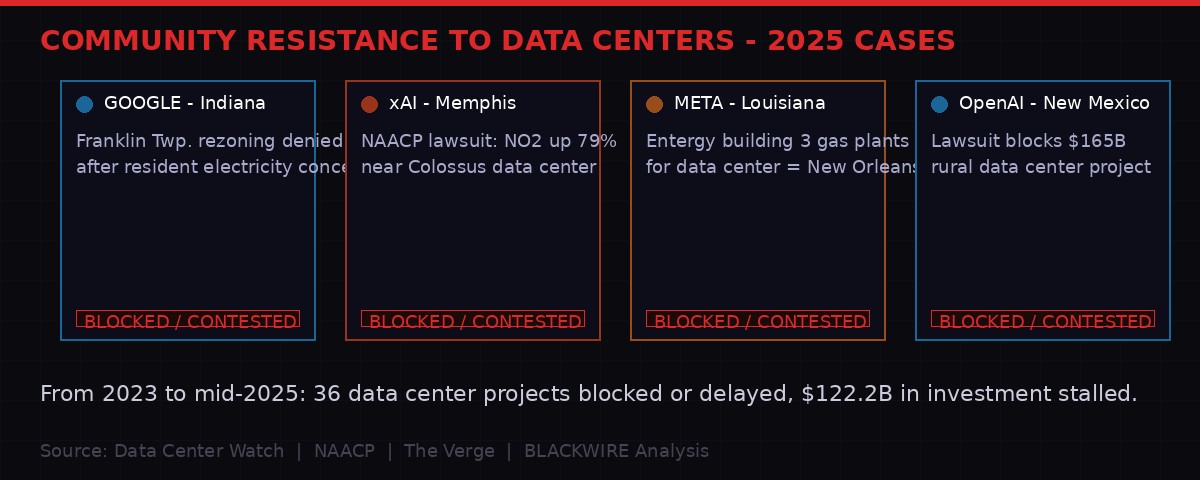

Selected cases of community resistance to data center projects in 2025-2026. Source: Data Center Watch / NAACP / BLACKWIRE Research

The resistance is not coming from environmental activists alone. It is bipartisan, local, and increasingly sophisticated about the specific economics at play. Data Center Watch, which tracks data center projects and opposition campaigns, reported that in Q2 2025 alone, community pushback blocked or delayed 20 projects representing $98 billion in proposed investment. From 2023 through mid-2025, a total of 36 projects worth $122 billion have been stalled.

The cases follow a consistent pattern. A tech company announces a data center. Local utilities start planning new generation to serve it. Rate cases are filed with public utility commissions. Existing ratepayers - homeowners, small businesses, local manufacturers - discover they are being asked to subsidize infrastructure costs for a facility that will employ hundreds of workers but consume the power of a small city.

In Richland Parish, Louisiana, Meta is building what it describes as its largest data center to date. To serve that facility, local utility Entergy broke ground on two of three planned gas-fired power plants. The facility's electricity demand is expected to reach triple the annual consumption of New Orleans. The Union of Concerned Scientists put it plainly: "Entergy Louisiana customers are now set to subsidize Meta's data center costs."

"No community should be forced to sacrifice clean air, clean water, or safe homes so that corporations and billionaires can build energy-hungry facilities." - NAACP Guiding Principles on Data Center Development, September 2025

In Memphis, the fight has turned into a public health dispute. Elon Musk's xAI built its Colossus supercomputer cluster there in 2024. Research commissioned by Time magazine from the University of Tennessee, Knoxville found that peak nitrogen dioxide concentration levels near the data center had jumped 79 percent since it began operating. The NAACP and the Southern Environmental Law Center have threatened to sue. xAI is building a second, larger facility in the same area.

In Indiana, Google withdrew its own rezoning application for a massive data center in Franklin Township after the Indianapolis City-County Council signaled it would vote against approval. Residents had organized around concerns about electricity and water consumption. Google, notably, is also a member of the Utilize coalition arguing that smarter grid use will make everything cheaper for everyone.

What the Utilize Coalition Actually Is

The Utilize coalition presents itself as a consumer advocacy play. Its public messaging emphasizes lower electricity bills, faster grid interconnection for new customers, and economic growth. The framing is clever because it is not entirely wrong - unused grid capacity does cost ratepayers money, and increasing utilization does theoretically spread fixed costs over more consumption.

But the coalition's membership reveals its actual interests. Google and Tesla are not primarily interested in lowering residential electricity bills. They are facing a severe bottleneck: data center interconnection queues in major markets now stretch 5 to 10 years. Building new transmission lines takes a decade and billions of dollars in regulatory fights. Nuclear power is 15 years out. Utility-scale solar takes 3 to 5 years. The Utilize pitch - optimize the existing grid now, with virtual power plants and demand response - is a way to unlock capacity in the near term, for facilities that need power in the near term.

The coalition's target is state-level regulators and grid operators. It wants them to establish "technology-neutral grid utilization goals" - policy language that would push utilities to squeeze existing assets harder rather than building new ones. The bipartisan framing is intentional: grid efficiency plays well to conservatives (no new government spending on generation), while virtual power plants and batteries appeal to climate-focused progressives.

The Utilize Coalition's Core Members (March 2026):

Google, Tesla, a range of battery storage providers, demand-response aggregators, grid-enhancing technology vendors, utilities seeking to monetize existing assets, and consumer advocacy groups that have been funded, in some cases, by technology industry donors.

Notable absences: environmental groups that have opposed fossil-gas buildout to serve data centers, and ratepayer advocacy organizations in states with the heaviest data center presence.

The Hidden Subsidy and Who Pays for It

The economics of utility rate-setting are deliberately opaque, but the basic structure is not complicated. Utilities recover their costs - generation, transmission, distribution, administration - through rates approved by state public utility commissions. When a large new load connects to the grid, the costs of serving that load are supposed to be borne by that customer. In practice, they frequently are not.

The mechanism is something called "socialization" of costs. When a utility builds new infrastructure to serve an industrial customer - a data center, a factory, an aluminum smelter - the capital costs often get rolled into the rate base, spread across all customers. The large customer gets favorable interconnection terms to attract the investment and jobs. Residential customers absorb the infrastructure overhead. This has been standard practice in utility regulation for decades.

AI data centers have made this dynamic more visible because the scale is unprecedented. When a single facility requires the equivalent of a small city's worth of new power infrastructure, the socialization argument becomes politically untenable. The Louisiana and Indiana cases have forced utility commissions to confront the question directly: at what point does economic development policy shade into a straightforward transfer of wealth from residential ratepayers to technology corporations?

The Utilize coalition's answer is that smarter grid use avoids the need for that new infrastructure entirely. Virtual power plants - aggregations of residential batteries, EV chargers, and smart thermostats that can collectively reduce demand during peak periods - are presented as the solution that benefits everyone. The grid gets more headroom. Homeowners get payments for participation. Data centers get the power they need. No new gas plants required.

The problem is scale, again. Virtual power plant programs currently operating in the U.S. aggregate tens to hundreds of megawatts of flexible capacity. The data center buildout is being measured in gigawatts. Tesla's Powerwall-based virtual power plant in Texas, one of the largest in the country, can dispatch roughly 90 megawatts during a grid emergency. A single planned hyperscale AI campus can require 500 megawatts of dedicated capacity. The math still does not close.
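The gap is simple to quantify. The sketch below takes the two figures cited above (roughly 90 megawatts of dispatchable capacity, 500 megawatts for one campus) and asks how many such programs would be needed. It deliberately ignores duration, which makes the comparison generous to virtual power plants, since emergency dispatch lasts hours while a data center's draw is continuous.

```python
import math

VPP_DISPATCH_MW = 90     # article's figure for Tesla's Texas virtual power plant
CAMPUS_DEMAND_MW = 500   # article's figure for one planned hyperscale AI campus


def vpps_needed(campus_mw: float, vpp_mw: float) -> int:
    """Whole number of VPPs of this size needed to match the campus's draw."""
    return math.ceil(campus_mw / vpp_mw)


def coverage_fraction(campus_mw: float, vpp_mw: float) -> float:
    """Share of the campus's draw one VPP could offset while dispatching."""
    return min(1.0, vpp_mw / campus_mw)


print("VPPs needed:", vpps_needed(CAMPUS_DEMAND_MW, VPP_DISPATCH_MW))
print(f"Coverage from one VPP: {coverage_fraction(CAMPUS_DEMAND_MW, VPP_DISPATCH_MW):.0%}")
```

On these numbers, one campus would absorb the output of six programs the size of one of the country's largest, and only for as long as their batteries last.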

What Happens When the Grid Can't Keep Up

The consequence most regulators and tech companies are not discussing publicly is the reliability risk. The U.S. grid is already operating with thinner reliability margins than at any point since the 2003 Northeast blackout. NERC, the North American Electric Reliability Corporation, has flagged large portions of the Midwest and South as facing elevated reliability risk over the next decade due to generation retirements outpacing new capacity additions.

Adding large, continuous, non-deferrable AI loads to grids already running close to their reliability margins is not a theoretical concern. In 2021, the Texas grid came within minutes of a complete collapse during Winter Storm Uri - a failure that killed hundreds and left millions without power for days. Texas has since become one of the fastest-growing data center markets in the country. The lessons have not all been learned.

The Utilize coalition's virtual power plant approach does include demand response as a key tool - essentially, the ability to curtail large loads during grid emergencies. Data centers are listed as potential demand response participants. But data centers housing AI inference infrastructure have strict uptime requirements. A query to ChatGPT does not buffer politely while the grid recovers. The contracts that make large industrial customers viable demand response resources typically require them to accept curtailment only a few hours per year, under specific conditions. The reliability buffer this provides during a sustained heat wave or cold snap is marginal.

There is a second-order effect here that virtually no analysis has grappled with seriously. As AI becomes embedded in critical infrastructure - hospital systems, financial markets, logistics networks, emergency response coordination - the power systems that serve AI data centers become critical infrastructure themselves. A regional grid failure that took down AI systems five years ago would have been an inconvenience. The same failure today could cascade into healthcare delivery failures, supply chain breakdowns, and emergency response paralysis. The interdependency is growing faster than the resilience.

The Meta MTIA Signal: Even the Chips Are Changing

One data point from this week underscores how structural the power problem has become. Meta unveiled its new MTIA 300 chip - the Meta Training and Inference Accelerator - designed specifically for training ranking and recommendation systems across Instagram and Facebook. Future variants (MTIA 400, 450, and 500) are designed to handle generative AI inference at scale. The explicit goal is to reduce dependency on Nvidia GPUs, which draw enormous power and are constrained by export controls.

Custom silicon is one of the few levers available to tech companies trying to manage their energy footprint. Google's TPU line, Amazon's Trainium and Inferentia chips, and Meta's MTIA program are all partly about wringing more computation per watt than general-purpose GPU hardware allows. The efficiency gains are real - Google has claimed 2 to 3x better performance-per-watt on some workloads using TPUs versus GPUs. But those gains are getting competed away by increasing model sizes and expanding inference volumes. Training GPT-3 required roughly 1,300 megawatt-hours of electricity. Estimates for GPT-4 training run to 50,000 megawatt-hours. The next generation will be larger still.
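A back-of-envelope comparison shows why efficiency gains get competed away. This is a rough sketch, not a model: it treats the two training-energy figures above as a proxy for one generation's jump in demand, and asks what would remain if a 2 to 3x performance-per-watt gain were applied to a jump of that size. Real workloads, hardware mixes, and the GPT-4 estimate itself are all uncertain.

```python
GPT3_TRAINING_MWH = 1_300             # article's figure
GPT4_TRAINING_MWH_ESTIMATE = 50_000   # article's cited estimate
PERF_PER_WATT_GAIN_RANGE = (2.0, 3.0) # article's cited TPU-vs-GPU claim

# Generation-over-generation growth in training energy.
growth = GPT4_TRAINING_MWH_ESTIMATE / GPT3_TRAINING_MWH


def net_energy_growth(demand_growth: float, efficiency_gain: float) -> float:
    """Energy growth left over after dividing out a performance-per-watt gain."""
    return demand_growth / efficiency_gain


print(f"Training energy growth: {growth:.1f}x")
for gain in PERF_PER_WATT_GAIN_RANGE:
    remaining = net_energy_growth(growth, gain)
    print(f"With a {gain:.0f}x efficiency gain: {remaining:.1f}x")
```

Even at the optimistic end of the claimed efficiency range, a jump of this size would still leave energy use growing by more than an order of magnitude.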

Custom chips slow the growth curve. They do not reverse it. The fundamental problem - AI is becoming the largest incremental driver of electricity demand in the world, faster than any grid was built to accommodate - is not solvable with better silicon alone.

The Real Question Nobody Is Asking at the Commission Hearings

Utility commission hearings on data center rate cases tend to focus on interconnection costs, rate allocation methodologies, and demand forecasting. These are important. They are not the central question.

The central question is whether the United States has made a collective decision - through democratic processes, transparent policy debate, and public cost-benefit analysis - to prioritize AI infrastructure development above grid reliability, residential electricity affordability, and local environmental quality. The answer, so far, is that no such decision has been made. Instead, a series of individual corporate investment decisions, approved through fragmented regulatory processes in fifty different jurisdictions, are collectively reshaping the grid without anyone having voted on it.

The communities blocking data center projects in Indiana, Louisiana, and Memphis are not anti-technology. They are asserting that they did not agree to bear the costs of a transformation being decided entirely by private companies and their lobbying campaigns. The Utilize coalition's state-by-state strategy is, among other things, an attempt to pre-empt that assertion by embedding efficiency mandates in regulatory frameworks before the political opposition can organize at scale.

What's coming next is a clash of timelines. Tech companies need power within 2 to 3 years for facilities already under construction. Grid modernization and new transmission development operate on 10-to-15-year timelines. Virtual power plants and demand response can fill part of the gap. Community opposition is stalling billions in projects that would fill another part. Something in the middle has to give - and historically, in American utility regulation, that something is the ratepayer.

The Utilize coalition knows this. That is why it is moving now, at the state level, before the cost-allocation fights get to Congress. The grid that emerges from this period will be shaped by whoever wins those proceedings. Google and Tesla have already decided which side they are on.
