The AI Likeness Wars: Dead Actors, Fake Mayors, and the Consent Crisis Nobody Is Ready For


The AI likeness industry is moving faster than any legal framework can track. (Illustrative / Pixabay)

A dead man speaks again. An algorithm claims to be an actress. A government hotline puts a robot voice speaking Spanish-accented English in the ear of Spanish-speaking callers. These three things happened in the same week - and together they signal that the AI likeness industry has crossed a threshold nobody was prepared for.

Val Kilmer died in April 2025. He was 65, felled by pneumonia after a decade-long battle with throat cancer that had already cost him his natural voice. Less than a year later, a film company announced that a generative AI version of Kilmer will appear in an independent movie - not in archival footage, not in blurry background shots, but as a speaking, performing character. The producers say they did it ethically. Hollywood's unions are not convinced. And somewhere in the middle of this debate sits a question that nobody has answered cleanly: who owns the right to sound and look like you, after you are gone?

The same week, a woman in Washington state posted a TikTok that racked up two million views. She had called the state Department of Licensing for help. She pressed 2 for Spanish. What she got was a robotic voice speaking English with an exaggerated Spanish accent - the product of a misconfigured Amazon Web Services text-to-speech feature called "Lucia," designed to mimic Castilian Spanish pronunciation. The video was funny. The implications were not.

Add to this the ongoing firestorm over "Tilly Norwood," a fully computer-generated "actress" created by a company called Xicoia, which has been shopping the character to Hollywood talent agencies. SAG-AFTRA, the actors' union, called it a product "generated by a computer program that was trained on the work of countless professional performers - without permission or compensation." The backlash from actual human performers was immediate, angry, and pointed.

Three stories. One theme. The AI likeness industry - voice cloning, digital resurrection, synthetic actors - is outrunning every legal and ethical framework designed to contain it. And the gap is widening by the week.

Timeline of key AI likeness events from 2022 through March 2026. Source: AP News, SAG-AFTRA official statements, BLACKWIRE research.

Val Kilmer's Second Act: How AI Resurrection Works

First Line Films announced on March 19, 2026 that Val Kilmer has posthumously joined the cast of "As Deep as the Grave," previously titled "Canyon of the Dead." The film centers on the true story of archaeologists Ann and Earl Morris, whose Arizona excavations uncovered significant Native American history. Kilmer was cast to play Father Fintan, a Catholic priest with Native American spiritual connections - a role he reportedly sought out himself before his health declined. He never filmed it.

According to writer-directors Coerte and John Voorhees, Kilmer had signed on to the project five years ago. His estate - represented by daughter Mercedes Kilmer, herself a filmmaker - gave permission for his digital replication and is being compensated. The production says it followed SAG-AFTRA guidelines throughout.

"He always looked at emerging technologies with optimism as a tool to expand the possibilities of storytelling. This spirit is something that we are all honoring within this specific film, of which he was an integral part." - Mercedes Kilmer, statement to AP News, March 2026

The mechanics of what First Line Films actually did are not fully disclosed publicly, but the general technology pipeline for AI actor resurrection at this stage is well understood. It typically requires training a neural network on existing footage, voice recordings, and motion capture data from the performer. Diffusion models then generate new visual content that can be composited into scenes. Voice synthesis uses a separate model trained on audio samples - in Kilmer's case, there is significant material from his pre-illness performances plus his own earlier use of AI voice restoration software after his tracheotomy.

Kilmer had already experienced AI voice work firsthand. In "Top Gun: Maverick" (2022), his voice was digitally processed and altered because his natural voice had been damaged by cancer treatment. He had previously partnered with a voice AI company to create a digital replica of his pre-cancer voice - a project he chose voluntarily, for his own use. That project became the foundation for what "Maverick" used. The technology he consented to use for himself has now been deployed to generate a performance he never gave.

The critical distinction here is subtle but important: Kilmer consented to voice restoration in his lifetime. His estate consented to AI resurrection after his death. SAG-AFTRA rules say that "consent not obtained before death must be obtained from an authorized representative or the union." The production says it complied. But compliance with union rules and the broader public question of whether this should be happening at all are entirely different conversations.

The rest of "As Deep as the Grave" cast includes Tom Felton ("Harry Potter"), Wes Studi, and Abigail Breslin. The film has been stuck in post-production for years and is now seeking distribution with a planned release in 2026.

Tilly Norwood and the AI Actor Problem

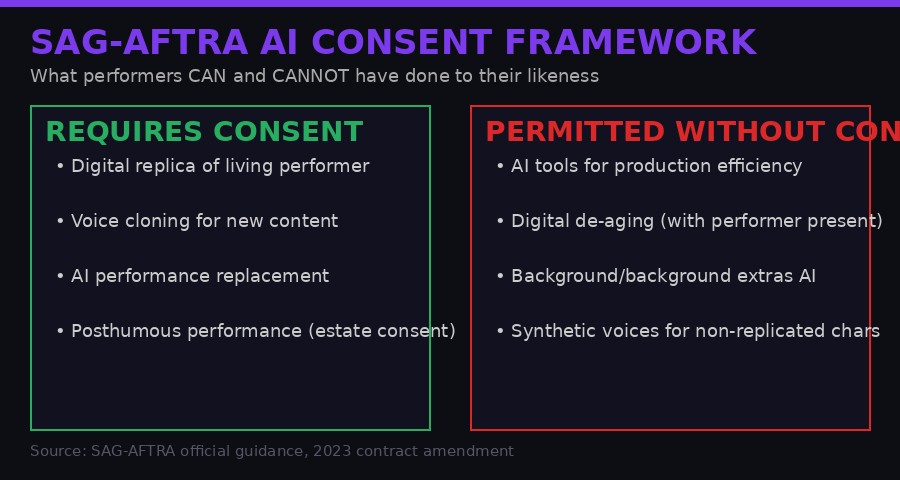

SAG-AFTRA's AI consent framework as of 2025 contract amendment. Source: official SAG-AFTRA guidance documents.

The Val Kilmer situation was, at minimum, conducted with family consent and union awareness. The Tilly Norwood case involves no such framework - because there is no person involved to consent to anything.

Tilly Norwood is a fully synthetic character created by Xicoia, described as "the world's first artificial intelligence talent studio," founded by Dutch producer and comedian Eline Van der Velden. The character has an Instagram account with 33,000 followers that posts photos of her drinking coffee, shopping for clothes, and "preparing for projects." Van der Velden promoted Norwood at the Zurich Summit - an industry sidebar of the Zurich Film Festival - claiming that talent agencies were "circling" the character.

SAG-AFTRA's response was categorical:

"To be clear, 'Tilly Norwood' is not an actor, it's a character generated by a computer program that was trained on the work of countless professional performers - without permission or compensation. It has no life experience to draw from, no emotion and, from what we've seen, audiences aren't interested in watching computer-generated content untethered from the human experience." - SAG-AFTRA official statement, 2025

Actor Melissa Barrera wrote on social media: "Hope all actors repped by the agent that does this, drop their a$$." Natasha Lyonne, who is directing a film titled "Uncanny Valley" that pledges to use "ethical" AI alongside traditional filmmaking, called it "deeply misguided and totally disturbed."

Van der Velden's defense was that Norwood is "not a replacement for a human being, but a creative work - a piece of art." She drew comparisons to drawing a character or writing a role. Critics in the industry rejected this framing immediately: there is a fundamental difference between writing a fictional character and creating a photorealistic synthetic human trained specifically to mimic the aesthetic of real performers for the purpose of commercial deployment in the same market those performers compete in.

The economic second-order effect is the part that makes people in Hollywood most uncomfortable. An AI character like Tilly Norwood does not need residuals. It does not need trailer space on location. It does not need insurance. It does not need health benefits, a union contract, or a day off. It does not age. It cannot be in a #MeToo story or show up late to set. From a pure production finance perspective, a synthetic actor is a cost-reduction tool dressed in character clothing - and the film industry has a long history of adopting cost-reduction tools aggressively.

The Xicoia model, if it gains traction, does not just threaten working actors. It threatens the economic model that has sustained screen performance as a profession for a century. If a production can license a synthetic character for a flat fee and generate any performance required without a human present, the downstream implications for the 160,000 SAG-AFTRA members run deep.

When Governments Deepfake Themselves: NYC, Washington State, and the Public Sector AI Voice Problem

While Hollywood wages its culture war over AI actors and posthumous performances, a quieter and arguably more troubling deployment of voice AI has been happening in government services - and the failures are starting to surface publicly.

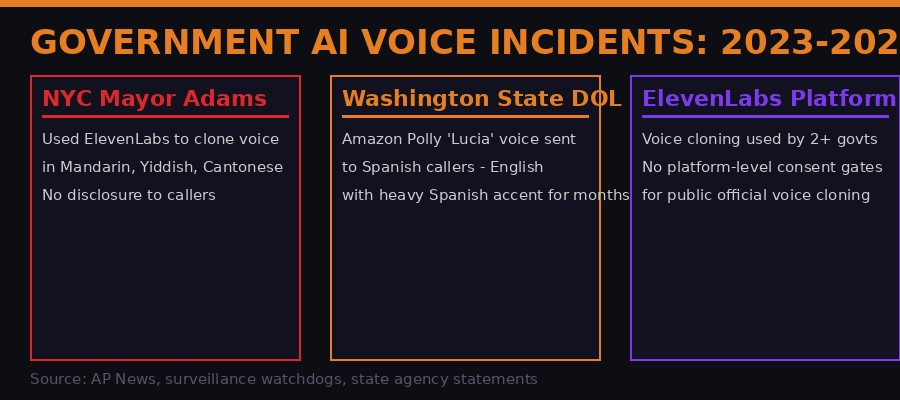

The Washington state incident is a masterclass in how AI voice deployment goes wrong at the institutional level. The state Department of Licensing deployed an automated phone system using Amazon Web Services' Polly text-to-speech platform. The system included options for multiple languages - Spanish, Mandarin, and others. Someone in DOL's tech team selected a voice called "Lucia" for Spanish-language callers. Lucia is an AWS Polly voice designed to mimic Castilian Spanish phonetics. The result, when it processed English-language text, was a voice speaking English words with a heavy Spanish accent.

For months, Spanish-speaking callers to the agency - many of them immigrants navigating complex licensing bureaucracy in a language already challenging - were met with this voice. The agency did not notice, or did not prioritize fixing it. Maya Edwards, whose Mexican husband encountered the voice while trying to get information about his driver's license, described it as "hilarious in the moment because it was so absurd" but with "real accessibility issues."

When she called again months later and found the problem persisted, she posted a TikTok. Two million views later, DOL apologized and attributed the error to "staff" who had misconfigured the system. Amazon declined to comment. The agency declined interview requests. The underlying platform - not the bug, but the entire automated phone system replacing human operators for sensitive government services - remains in place.

The DOL incident happened because a government agency deployed a voice AI tool without adequate testing, without meaningful oversight, and without a correction mechanism that didn't require going viral on TikTok to trigger a fix. This is not a bug. This is how government AI procurement works in 2026.

The NYC Mayor Adams case is different in character and arguably more disturbing in implication. Adams did not accidentally deploy bad AI. He intentionally used ElevenLabs' voice cloning tool to generate robocalls in Mandarin, Yiddish, Cantonese, Haitian Creole, and Spanish - languages he does not speak - without disclosing to recipients that they were hearing an AI-generated version of his voice. Native speakers reportedly reviewed the calls for accuracy before they went out. But recipients received no disclosure that Adams was not actually speaking their language.

"The mayor is making deep fakes of himself. This is deeply unethical, especially on the taxpayer's dime. Using AI to convince New Yorkers that he speaks languages that he doesn't is outright Orwellian." - Albert Fox Cahn, executive director, Surveillance Technology Oversight Project

Adams defended the practice by saying he was trying to "speak to people in the languages that they understand." His office later said native speakers verified translation accuracy. But accuracy and transparency are different things. A politician using AI to simulate multilingual fluency he does not have, without disclosure, creates a specific category of political deception that current law does not address cleanly. The question is not whether the translations were accurate. The question is whether voters have a right to know when their mayor's voice is real versus synthesized.

Three notable government AI voice deployments and their failures. Source: AP News, Surveillance Technology Oversight Project, state agency statements.

The Legal Vacuum: What Laws Actually Cover This

The honest answer to "what laws govern AI voice cloning and likeness replication" is: not many, not consistently, and not comprehensively.

At the federal level in the United States, there is no single statute that addresses AI-generated likenesses or voice clones. The closest existing legal tools are a patchwork of right-of-publicity laws (which vary dramatically by state), existing intellectual property frameworks (which were not designed for AI-generated content), and FTC regulations around deceptive advertising (which apply in commercial contexts but not to government communications).

California has the most robust state-level protections for performers. California Civil Code Section 3344 prohibits the commercial use of a living person's name, voice, signature, photograph, or likeness without consent. California also passed AB 2602 in 2024, which requires written contracts specifying the use of AI to replicate a performer's voice or likeness. Posthumous protections exist as well: Civil Code Section 3344.1 extends those rights to an estate for 70 years after death.

But California law only covers California. And the film and AI technology industries are global. A Dutch company creating a synthetic actor, a film production company using AI resurrection tech, a government agency deploying AWS voice tools - any of these can operate outside the reach of California's protections depending on jurisdiction and context.

SAG-AFTRA's 2023 contract, won after a 118-day strike, does require consent for the creation of digital replicas of performers. The video game actors' contract, settled in 2025 after a separate strike, mandated written permission for digital replicas in that sector. These are real wins. But union contracts only govern productions that are party to those contracts. Non-union productions, international productions, and AI companies that argue they are training on "publicly available data" rather than replicating specific performers operate in a grey zone.

The Xicoia "Tilly Norwood" case highlights the gap. If no specific performer's likeness is being directly replicated - if the AI model was trained on a broad corpus of performance data rather than replicating one person - existing right-of-publicity law has nothing to say. The performers whose work trained the model never consented to that use. Their collective aesthetic has been synthesized into a commercial product that now competes with them in their own market. Whether this constitutes a legal wrong under current law is genuinely unclear.

At the federal level, a bipartisan bill - the NO FAKES Act - has been proposed in the US Senate to create a federal right against the unauthorized creation of AI-generated digital replicas of individuals. As of early 2026, it has not passed. In the EU, the AI Act includes provisions around transparency for AI-generated content, including requirements to label synthetic media. Enforcement mechanisms are still being developed.

Current Legal Protections at a Glance

- California AB 2602 (2024): Written consent required for AI voice/likeness replication in union productions in California

- SAG-AFTRA 2023 contract: Consent required for digital replicas of living and deceased performers (for signatory productions only)

- Video game actors contract (2025): Mandatory written permission for digital replicas in video game sector

- EU AI Act: Transparency and labeling requirements for synthetic media - enforcement still developing

- NO FAKES Act (proposed): Federal right against unauthorized AI replicas - not yet passed as of March 2026

- FTC guidelines: Disclosure requirements for AI-generated commercial content - no equivalent for government use

The Technical Architecture: How These Systems Actually Work

Understanding why consent frameworks are so hard to enforce requires understanding how voice cloning and likeness AI actually operate at the technical level.

Modern voice cloning systems - platforms like ElevenLabs, Resemble AI, and competitors - use a two-stage process. First, a model is pre-trained on a massive corpus of human speech data. This training embeds a generalized understanding of phonetics, prosody, and acoustic characteristics across thousands of voices. Second, a "voice adaptation" step fine-tunes the model on a small sample of the target voice - sometimes as little as a few minutes of clean audio. The resulting model can generate speech in the target voice from any input text.

The fine-tuning step is the critical one from a consent perspective. Platforms like ElevenLabs allow users to upload voice samples and clone them. ElevenLabs has stated it requires users to affirm they have permission to clone the voice in question. But there is no technical enforcement of this requirement. A user who lies about having permission gets the same result as one who tells the truth. Platform Terms of Service are not consent mechanisms. They are liability shields.
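The difference between attestation and enforcement can be made concrete with a minimal sketch. Nothing below reflects how ElevenLabs or any other platform is actually built: `CloneRequest`, `checkbox_gate`, `verified_gate`, and the shared-key consent token are hypothetical names invented for illustration, and the sketch assumes some trusted registry holds a key on the voice owner's behalf. A real system would more likely use public-key signatures.

```python
import hashlib
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class CloneRequest:
    voice_owner: str           # person whose voice is in the uploaded sample
    requester: str             # account asking for the clone
    affirmed_permission: bool  # the "I confirm I have permission" checkbox


def checkbox_gate(req: CloneRequest) -> bool:
    """Self-attestation: the only input is the requester's own claim."""
    return req.affirmed_permission


def sign_consent(owner_key: bytes, voice_owner: str, requester: str) -> str:
    """Hypothetical consent token issued by the voice owner for one requester."""
    message = f"{voice_owner}->{requester}".encode()
    return hmac.new(owner_key, message, hashlib.sha256).hexdigest()


def verified_gate(req: CloneRequest, token: str, owner_key: bytes) -> bool:
    """Verified consent: the request must match a token issued with the owner's key."""
    expected = sign_consent(owner_key, req.voice_owner, req.requester)
    return hmac.compare_digest(expected, token)


owner_key = b"key-held-for-the-voice-owner"  # assumption: a trusted key registry exists
honest = CloneRequest("alice", "studio_a", affirmed_permission=True)
liar = CloneRequest("alice", "studio_b", affirmed_permission=True)

# In this scenario Alice granted consent to studio_a only.
alice_token = sign_consent(owner_key, "alice", "studio_a")

print(checkbox_gate(honest), checkbox_gate(liar))      # the checkbox cannot tell them apart
print(verified_gate(honest, alice_token, owner_key))   # token matches this requester
print(verified_gate(liar, alice_token, owner_key))     # token was never issued to studio_b
```

The checkbox gate returns the same answer for both requests because it has no input other than the requester's word. The verified gate separates them only because it depends on something the requester cannot produce alone.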

For full performer resurrection - the kind used in the Val Kilmer situation - the pipeline is more complex. Visual synthesis requires video diffusion models or neural radiance field (NeRF) approaches that can generate novel viewpoints and facial expressions from reference footage. The "As Deep as the Grave" production has not disclosed exactly which technology stack they used, but the field is mature enough that multiple commercial vendors offer this service. Companies like Synthesia, D-ID, and several others provide turnkey "digital human" generation services. Some are explicitly marketed to entertainment and production studios.

The Amazon Polly failure in Washington state illustrates a different failure mode: not malicious misuse, but careless configuration of legitimate tools. Polly's neural text-to-speech voices are designed for specific language-voice combinations. When you mismatch a voice designed for one phonetic system with text in a different language, you get accent bleed - the acoustic characteristics of the training language bleed through into the new language's phonemes. This is a well-known behavior in speech synthesis. The Washington DOL's IT team either did not know this, did not test for it, or did not consider Spanish-speaking callers important enough to warrant careful testing.

This last possibility - a quiet deprioritization of minority-language users in government technology procurement - is the systemic problem that the accent TikTok points to, beyond the immediate absurdity of the voice itself.
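A voice/language mismatch of the kind described above is cheap to catch before deployment. The sketch below is illustrative only: the DOL's actual configuration has not been published, so the `menu` structure and the `find_mismatches` helper are hypothetical, and the voice table is a hand-written two-entry subset (Lucia as Castilian Spanish, Joanna as US English). A production check would more plausibly pull the full voice list from the provider, for example through Polly's DescribeVoices operation, rather than hard-code it.

```python
# Hand-written subset of Amazon Polly voices and their languages (assumption:
# a real check would load the provider's full voice list instead).
VOICE_LANGUAGE = {
    "Lucia": "es-ES",   # Castilian Spanish
    "Joanna": "en-US",  # US English
}


def find_mismatches(menu):
    """Return (option, voice, text_language) for every prompt whose voice is
    unknown or whose base language differs from the language of its text."""
    problems = []
    for option, prompt in menu.items():
        voice_language = VOICE_LANGUAGE.get(prompt["voice"])
        text_base = prompt["text_language"].split("-")[0]
        # Compare only the base language ("es" vs "en"), not the regional variant.
        if voice_language is None or voice_language.split("-")[0] != text_base:
            problems.append((option, prompt["voice"], prompt["text_language"]))
    return problems


# Hypothetical phone menu: option 2 pairs a Spanish voice with untranslated English text.
menu = {
    "1": {"voice": "Joanna", "text_language": "en-US"},
    "2": {"voice": "Lucia", "text_language": "en-US"},
}

print(find_mismatches(menu))  # [('2', 'Lucia', 'en-US')]
```

A check like this verifies only that the configuration is internally consistent. It says nothing about whether the translated prompts are any good, which still takes a listener who speaks the language.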

The Business of Digital Death: A Growing Industry

The Val Kilmer case is not an outlier. It is an early data point in what is becoming a distinct commercial category: the management and monetization of deceased performers' digital estates.

James Dean, who died in 1955, was announced for a CGI role in "Finding Jack" in 2019. The project drew immediate backlash and was eventually shelved - but the attempt signaled that production companies were watching for opportunities. Peter Cushing was digitally recreated in "Rogue One: A Star Wars Story" (2016) using performance capture from a different actor combined with a digital likeness overlay. Carrie Fisher appeared in "The Rise of Skywalker" (2019) using footage filmed before her death. The technology has been marching forward since.

The Kilmer situation is different from the Star Wars cases in a specific way: it is being done on an independent film with limited resources, not a $250 million studio production with an army of lawyers and union compliance officers. This matters because it means AI performer resurrection is no longer cost-prohibitive for mid-tier and independent productions. The democratization of the technology is the commercial story here. What required $50 million in visual effects work in 2016 can now be approximated for a fraction of that cost using commercially available tools.

Several companies are now explicitly in the business of building digital estates for living performers - locking in rights agreements now, before death, to ensure the estate can continue to generate revenue from AI-rendered performances afterward. It is, in a sense, a morbid insurance product: sign with us now, and your heirs will receive royalties from your digital ghost performing in productions you never saw and could never have agreed to specifically.

The Kilmer case exists somewhere between the extremes. His estate exercised genuine consent and was compensated. The role was one he had reportedly wanted. The production says SAG guidelines were followed. But the precedent it sets is about the direction of travel - toward a world where posthumous performance becomes normalized, estate consent becomes a commercial transaction rather than an expression of the performer's actual wishes, and the market for dead performers expands alongside the market for living ones.

What the Second-Order Effects Look Like

None of the individual stories this week - the Kilmer film, the AI actress, the government hotlines - is catastrophic on its own. Their significance lies in what they point toward. Several second-order effects are already visible.

First, the normalization gradient. Each AI likeness deployment that goes uncontested makes the next one easier to justify. If "As Deep as the Grave" is released and performs respectably, production companies will cite it as a template. If Tilly Norwood lands an agent, the dam breaks. If Mayor Adams' multilingual robocalls get credited with improved community outreach metrics, every city in America will have the ElevenLabs integration by 2027.

Second, the labor displacement mechanism. AI voice and likeness technology does not need to fully replace human performers to damage their economic position. It only needs to reduce demand at the margin. If a production can replace one supporting role per film with a synthetic character for a licensing fee, the cumulative effect across the industry is significant. For mid-tier and background performers - the working-class tier of the acting profession - this is already a live concern, not a theoretical one.

Third, the trust corrosion problem. Every incident like the Washington state hotline - where people seeking government services receive AI-generated voices without understanding what they are hearing - erodes the basic social infrastructure of institutional trust. If you cannot tell whether the voice answering your call is a human, an AI, or a politician's AI clone, the cognitive overhead of navigating daily life increases. And the populations most vulnerable to this corrosion are those with the fewest resources to adapt: elderly callers, recent immigrants, people with limited technical literacy.

Fourth, the consent theater problem. As SAG-AFTRA contracts, state laws, and platform Terms of Service accumulate around AI voice and likeness, there is a real risk that the result is a system that looks like consent but functions like a waiver pipeline. Estate representatives are not the same as the performer. Union rules covering signatory productions do not protect workers in non-union contexts. Platform terms that "require" user confirmation of permission create the appearance of a consent mechanism without the reality of one.

The Musk-Twitter shareholders trial that went to jury this same week carries an oblique resonance here: it is a case about whether tweets that moved markets constituted fraud, and whether intent matters when the impact is real. AI likenesses that mislead audiences, extract labor value from performers without compensation, and erode public trust in authentic communication are causing real harm regardless of whether specific legal frameworks currently classify it as such. The question is whether the law catches up before the harm compounds.

What a Real Framework Would Look Like

The gap between current law and the actual landscape is not a mystery requiring years of study. It is a specific set of missing provisions that most stakeholders can actually agree on in the abstract, even if they disagree violently about implementation.

A functional AI likeness framework needs at minimum: an unwaivable right to consent for voice and visual replication, applicable regardless of jurisdiction, covering both commercial and government deployments. It needs a clear definition of what "consent" means for posthumous use - not just estate authorization, but some mechanism for the performer's documented wishes to govern what the estate can authorize. It needs mandatory disclosure when AI-generated voice or likeness is used in any communication that purports to represent a real person - including government robocalls. And it needs enforcement mechanisms with teeth, not just Terms of Service that create liability shields for platforms while leaving the harms unchecked.

The EU's AI Act is closer to this than US federal law. California's AB 2602 is a reasonable starting point for a federal model. The NO FAKES Act proposal, if passed, would create baseline protection at the federal level. None of these are complete solutions. All of them are better than the current vacuum.

What they cannot resolve is the philosophical question at the center of all of this: whether the voice and face of a human being - the most intimate signature of individual identity - should be a property right, a right of personhood, or something else entirely. Property rights can be sold, licensed, and inherited. Rights of personhood are inalienable. The AI likeness industry has already answered this question with its behavior: it has decided, unilaterally, that your face and voice are data, and data belongs to whoever can process it fastest.

Val Kilmer's digital ghost will speak lines he never recorded, in a film he never finished, for an audience that may never know quite what they are watching. Tilly Norwood will keep posting her coffee selfies on Instagram. The Washington state hotline will probably deploy its next AI voice feature with somewhat more careful testing. And ElevenLabs will continue to process "I confirm I have permission" checkboxes at scale, several thousand times a day, building the infrastructure of a world where anyone can sound like anyone, and no legal framework has yet decided whether that is a product or a crime.

Sources: AP News (Val Kilmer AI film announcement, March 2026); AP News (Tilly Norwood / Xicoia, 2025); AP News (Washington state DOL AI voice, March 2026); AP News (NYC Mayor Adams ElevenLabs robocalls); SAG-AFTRA official statements on AI consent; California AB 2602 text; ElevenLabs platform documentation; Amazon Web Services Polly voice documentation; Surveillance Technology Oversight Project statement. All quotes sourced from primary AP reporting.