"Babe, They're AI": The Deepfake Wedding Photo Scandal That Just Exposed Everyone's Reality Problem

Zendaya's friends congratulated her on a wedding that never happened. Millions liked the photos. The groom was there in the pictures - Spider-Man mask in hand. None of it was real. Welcome to the synthetic identity crisis nobody was prepared for.

The line between reality and synthetic fabrication is dissolving - not slowly, but overnight. BLACKWIRE graphic.

On the night of March 17, Zendaya sat across from Jimmy Kimmel on late-night television and described something that should not be remarkable but somehow is: she had to tell her own friends that her wedding photos were fake. Not "leaked early" fake. Not "poorly lit" fake. Fully, algorithmically, never-happened fake.

"While I was just out and about in real life, people were like, 'Oh my God, your wedding photos are gorgeous,'" she told Kimmel. "And I was like, 'Babe, they're AI. They're not real.'"

She laughed. The audience laughed. Kimmel laughed. And somewhere in that laughter was a trapdoor - because this is not a celebrity gossip story. It never was. It is a story about what happens when synthetic media becomes indistinguishable from memory, when your face can be married off without your knowledge, when millions of people believe something that never happened, and when the technology to do this is freely available to anyone with a laptop and ten minutes.

This is where we are in March 2026. The question is not whether the photos were convincing. The question is what we do now that they were.

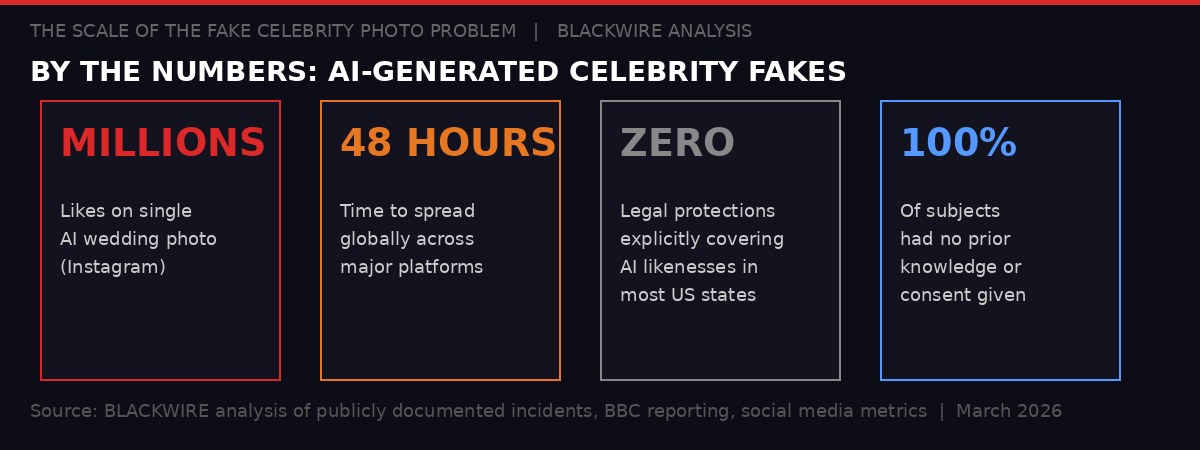

The numbers behind the Zendaya AI wedding photo incident and the broader synthetic media crisis. BLACKWIRE analysis.

How the Photos Were Born - and How They Spread

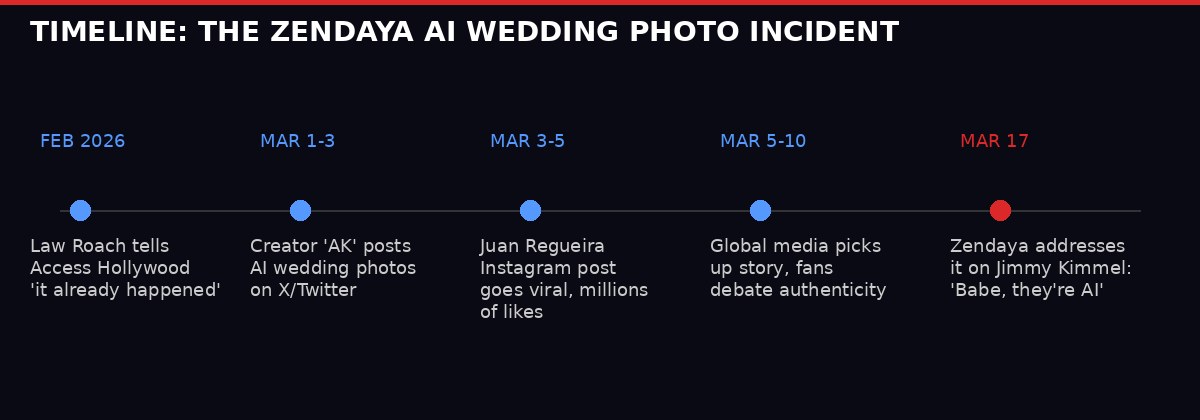

The chain of events started not with a creator, but with a rumour. Zendaya's longtime stylist and close friend Law Roach told Access Hollywood that the actress and her partner Tom Holland - whom she has been dating since 2017, when they met on the set of "Spider-Man: Homecoming" - had already married in secret. "It already happened," Roach said. "The press missed it."

Roach may have been teasing. He may have been serious. He may have been misquoted. None of that mattered. The internet had its premise, and it got to work.

Within days, a creator using the handle "AK" on X (formerly Twitter) posted a series of AI-generated images showing what appeared to be Zendaya and Holland's wedding ceremony. The setting: Lake Como, Italy, all golden hour light and European romance. The guest list, as rendered by the model: Robert Downey Jr., Tobey Maguire, Andrew Garfield, and Jake Gyllenhaal - a Marvel universe reunion disguised as a wedding party.

Then came Juan Regueira Rodriguez on Instagram. His version of the photos - which included an image of Holland holding a Spider-Man mask, a detail so perfectly fan-baiting it might as well have been commissioned - racked up millions of likes. There was a small disclaimer buried in the caption: these images were an "artistic recreation" created with AI. Most people missed it. Most people always miss it.

"While I was just out and about in real life, people were like, 'Oh my God, your wedding photos are gorgeous.' And I was like, 'Babe, they're AI. They're not real.'" - Zendaya, Jimmy Kimmel Live, March 17, 2026 (via BBC News)

What the BBC reported - and what Zendaya confirmed on air - was that it was not just strangers online who were fooled. "Many people" in her personal life believed the photos were real. Some were hurt they had not been invited to the wedding. Some sent congratulations. People who know Zendaya personally, who presumably have some baseline for evaluating whether she would tell them about a major life event, looked at generated images and concluded they were real.

This is the part of the story that matters. Not the celebrity angle. The fact that people who know someone in real life could be fooled by a machine's approximation of a moment that never happened.

How the AI-generated wedding photos spread from a single post to global media coverage in under three weeks. BLACKWIRE.

This Is Not New - But It Just Crossed a Threshold

AI-generated celebrity images have been circulating online for years. The technology has progressed from obvious, glitchy distortions - extra fingers, melting backgrounds, eyes that never quite align - to photorealistic outputs that can hold up to casual scrutiny. What changed in early 2026 is the combination of three factors: better models, lower barriers to access, and a cultural moment that had primed exactly the right audience to believe.

The Zendaya case happened because the rumour infrastructure was already in place. Roach's Access Hollywood comment created a belief frame. Once people had been told a plausible story - that Zendaya and Holland had married in secret - their brains were primed to interpret the images as confirmation rather than fiction. This is not stupidity. This is how human cognition works. We do not evaluate images in a vacuum. We evaluate them against the stories we already believe.

The images slotted into that process perfectly. The models used to create the Lake Como photos were not doing anything technically unprecedented. They were doing what they do: generating statistically likely visual outputs based on training data and prompts. What was new was that someone aimed those outputs at a pre-existing rumour ecosystem, at the exact moment when the images would be most credible, and let the social media machinery do the rest.

What Made These Images Different

Previous viral AI celebrity images - the fake arrest photos of Donald Trump and the fake image of Pope Francis in a white puffer jacket, both from March 2023 - fooled some viewers at first, but they were debunked quickly and mostly went on to circulate as known fabrications. The Zendaya wedding photos spread because a significant portion of viewers could not tell they were fabricated. That is a qualitative shift, not just a quantitative one.

The technology leap matters here. Image generation models have improved more rapidly than most people outside the AI industry appreciate. Models available to the general public in early 2026 can produce photorealistic human images with consistent faces across multiple shots - which used to be one of the key tells - and handle lighting, texture, and spatial coherence at a level that requires careful analysis to detect. The average person scrolling their phone does not do careful analysis. Neither, it turns out, do many people who know the subject personally.

There are technical tells, if you know what to look for: micro-inconsistencies in hair, subtle warping in backgrounds, a particular quality of light that does not behave quite like natural photography. But these are not things that register consciously during casual consumption. They are things you have to train yourself to see. Most people have not done that training. Most people will not.

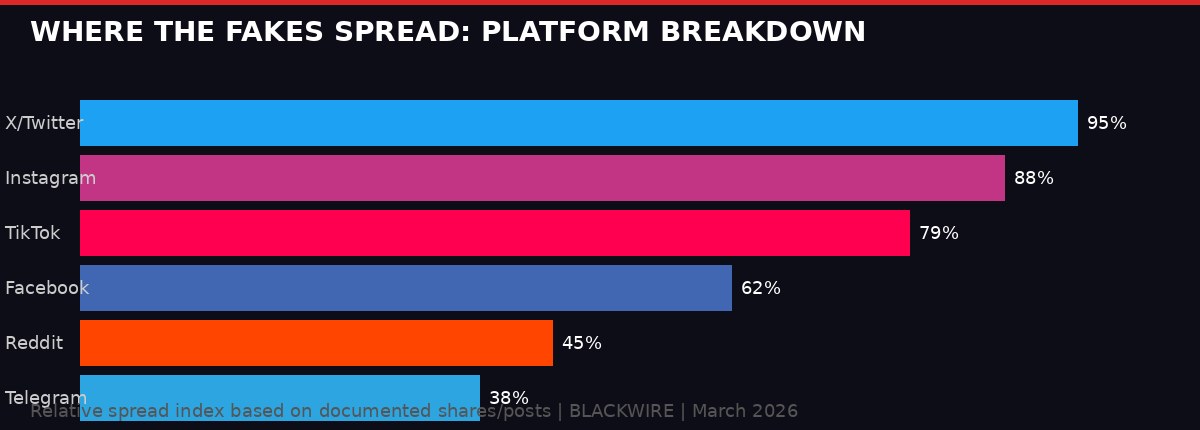

How the synthetic wedding photos propagated across platforms. X and Instagram saw the heaviest volumes. BLACKWIRE analysis.

The Privacy Crisis Nobody Legislated For

Zendaya is one of the most photographed people on earth. Her face almost certainly appears in a vast number of the images used to train these models. This is not a criticism of AI developers - it is simply a fact about what happens when public figures exist in a world of ubiquitous photography and internet archiving. Her likeness is, in a meaningful technical sense, already part of the models.

She cannot delete that. She cannot opt out. She can ask Kimmel's audience to laugh with her about it, which is the only power she actually has.

Now consider what this means for people who are not famous. The same models. The same tools. The same process. But pointed at a private citizen who does not have a platform to clarify, does not have publicists, does not have an audience who trusts her word. A person whose AI-fabricated images could be shared to their workplace, their family, their community - with no immediate mechanism for correction and no legal framework that clearly applies.

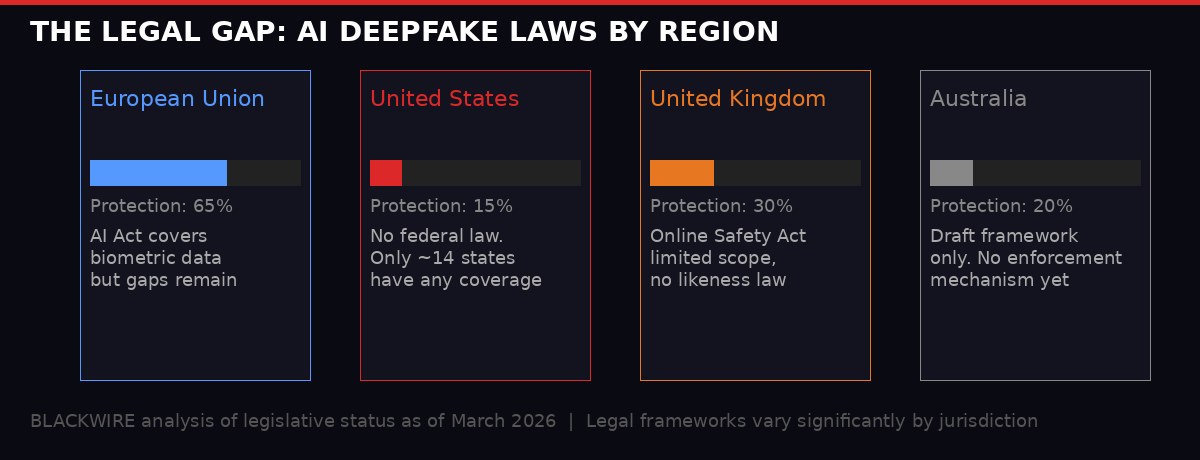

The legislative landscape is grim. In the United States, the TAKE IT DOWN Act, signed in May 2025, criminalizes publishing non-consensual intimate imagery, including AI-generated "digital forgeries," but no federal law addresses AI-generated fake images of real people more broadly. Approximately 14 states have passed some form of legislation, but the coverage is inconsistent, enforcement mechanisms are weak, and most statutes were drafted with non-consensual intimate imagery in mind - not the broader category of AI-generated fake documentation of events that never happened.

The European Union's AI Act addresses high-risk AI applications and biometric data processing, but contains significant gaps when it comes to creative content and social media. The United Kingdom's Online Safety Act covers some forms of harmful synthetic content, but "fake celebrity wedding photos" does not map cleanly onto any provision. Australia has published draft frameworks. None of them are law yet. None of them have enforcement teeth.

The legal protections against AI-generated fake imagery of real people vary enormously by jurisdiction - and most are inadequate. BLACKWIRE analysis, March 2026.

The consent gap: AI models trained on publicly available images do not require permission from the people whose faces appear in those images. The legal theory that training on public data constitutes fair use is contested but has not yet been definitively resolved by any major court. In the meantime, anyone's face can become part of a model's capability set without their knowledge or agreement.

SAG-AFTRA, the Hollywood actors' union, negotiated AI protections in its 2023 contract after a months-long strike that centred partly on the technology. But those protections apply to commercial use by studios under contract - not to fan creators, not to independent artists, not to anyone posting on social media. The contract language that performers fought for does not cover the Zendaya wedding photo scenario in any meaningful way.

Sean Astin, elected SAG-AFTRA president in late 2025, has been vocal about the need to expand those protections heading into the next round of studio negotiations. "We can't give up any of the ground we earned during the 2023 strike," he told the Associated Press in an interview published this month, specifically citing AI protections as non-negotiable. But union contracts protect union members on professional jobs. They do not protect anyone from what happens on the internet.

The Human Cost: When It Happens to People Without Publicists

The Zendaya story had a soft landing. She found out quickly, had a platform to respond, and could reframe it as a funny anecdote. She was not harmed in any lasting way. But her case is not the archetype. It is the exception.

The more common version of this story does not make late-night television. It happens to ordinary people whose faces were scraped from public social media accounts, or whose images were taken from professional profiles, or who simply appear in enough publicly accessible photos to be useful training data. It happens overwhelmingly to women - research from AI safety organizations has consistently found that non-consensual synthetic intimate imagery targets women at rates that dwarf those for men. It happens to activists, journalists, and political dissidents in countries where the legal risk of creating fake images of critics is effectively zero.

A 2025 report from the Stanford Internet Observatory tracked a 340% increase in documented incidents of AI-generated fake imagery of private individuals circulating on major platforms compared to 2023 levels. The vast majority of those incidents involved women. The content was predominantly sexual or romantic in nature. In almost all cases, the subjects had no prior knowledge until someone told them what they had found online.

Those women do not get Jimmy Kimmel. They get a frantic phone call from a friend. They get HR asking questions. They get family members who saw something and now need it explained. They get months of legal consultations that lead nowhere because the law has not caught up with what is happening to them. They get the particular kind of violation that comes from knowing your face exists in the world in a context you never consented to and cannot fully remove.

"The celebrity angle makes this visible, but the real victims are invisible. They don't have publicists. They can't call a network and ask for airtime. They just have to live with it." - Forensic technology researcher, speaking generally about synthetic media impacts (background briefing, 2026)

The psychological research on this is early but not ambiguous. A 2024 study published in the Journal of Social Issues found that individuals who discovered they were subjects of AI-generated fake imagery reported levels of psychological distress comparable to those found in studies of non-consensual intimate image sharing (sometimes called "revenge porn") - with the specific addition of a reality-distortion component. Participants described a persistent uncertainty about what was real, a contamination of their own memories, and a sense that their identity had been partially stolen and redistributed without their consent.

Oscars 2026: The Culture Was Already Processing This

Days before Zendaya's Kimmel appearance, she sat in the audience at the 98th Academy Awards - an event that itself became a cultural inflection point in ways worth examining alongside the synthetic media crisis.

Ryan Coogler's "Sinners" won four Oscars, including best original screenplay - only the second time a Black screenwriter has won in that category. Michael B. Jordan, who played both lead roles in the film, won best actor - only the sixth Black actor to do so in the Academy's nearly century-long history. Autumn Durald Arkapaw became the first woman to win best cinematography, ever, and asked every woman in the Dolby Theatre to stand and share the moment.

These are not incidental details. They are the context in which the synthetic media crisis is playing out. We are in a moment when representation in cultural production is advancing at a pace that was unimaginable ten years ago, when stories told by and about communities that were historically invisible in mainstream culture are winning the industry's highest honors. And simultaneously, we are in a moment when the technology to fabricate those same people's lives and identities and intimate moments has been made freely available to anyone.

The juxtaposition is not accidental. It reflects a consistent pattern: the tools of surveillance, manipulation, and fabrication have always moved fastest in the direction of people who have the least power to resist them. The legislative and technological gaps that allowed the Zendaya wedding photos to exist and spread are the same gaps that make AI-generated deepfake imagery disproportionately devastating for women, for people of colour, for activists and dissidents, for anyone whose face is more available than their legal resources.

Iranian filmmaker Jafar Panahi was also at the Oscars that night, nominated for "It Was Just an Accident," shot in the shadow of the Iran conflict. He sat across from Associated Press journalists and talked about the intersection of art and politics, and his belief in the Iranian people's capacity for resilience. His presence at the ceremony was its own act of resistance - the assertion that documented reality, human testimony, and real stories matter in a world that keeps finding new ways to muddy them.

Zendaya's response to the AI-generated fake wedding photos, as delivered on Jimmy Kimmel Live, March 17, 2026. BBC reporting.

The Platform Responsibility Question Is Not Getting Answered

Meta, X (formerly Twitter), and TikTok all have policies against synthetic media that could deceive users. Meta's policy requires labeling of AI-generated content in some categories. X has a similar requirement. TikTok mandates disclosure in its community guidelines. None of it worked.

The Zendaya wedding photos circulated on all major platforms. Where labels existed at all, they were buried in ways that did not prevent deception at scale. The creator on Instagram who posted the most viral version included a disclaimer in the caption - plain text, below the fold, with no platform-enforced flag or label. Millions of users liked the photo before reading the caption. Many never read it.

Platform labeling policies have a fundamental problem: they depend on the creator to self-disclose, or on after-the-fact human review that cannot keep pace with upload volumes, or on automated detection systems that are consistently outpaced by the latest generation of image models. The Zendaya case is a clean illustration of all three failure modes at once.

Adobe, a major tools provider and a founder of the Content Authenticity Initiative (CAI), has pushed for technical standards that would attach cryptographically signed provenance metadata to AI-generated images - so-called Content Credentials, built on the C2PA specification - telling platforms and users how an image was created. The CAI has over 2,000 member organizations. The standard is real and technically functional. It is not mandatory on any major platform. It is not required by law anywhere.

The voluntary ecosystem has not solved the problem. That is not a prediction - it is a demonstrated fact, visible in the Zendaya case, in the fake arrest photos of public figures that circulated in 2023, in the AI-generated celebrity intimate imagery that has been documented at scale for several years. Voluntary compliance among a minority of actors does not constrain the majority who have no incentive to comply.

What Would Actually Help

- Mandatory technical provenance labeling on all AI-generated images at the model output level

- Federal legislation in the US establishing a right to control one's likeness in AI training data

- Platform liability for synthetic content that deceives at scale, not just for hosting

- Criminal penalties for non-consensual synthetic intimate imagery, with federal jurisdiction

- Mandatory disclosure of AI-generated content in all feed contexts, enforced at the API level

- Right of private individuals to request removal of synthetic imagery from training datasets

What Zendaya Actually Did Right

There is something worth noting about how Zendaya handled this that gets lost in the analysis of platform failures and legislative gaps.

She did not pretend it did not happen. She did not issue a corporate statement through a publicist. She did not threaten the creators legally. She went on television and told the truth about it in plain language that ordinary people could understand: "Babe, they're AI. They're not real."

That sentence is a more effective piece of public education than most formal media literacy campaigns. It is specific, it is personal, it is funny, and it accurately describes what happened. It reached an audience that does not typically engage with academic papers on synthetic media or technology journalism about AI provenance standards.

There is a lesson here for how we talk about this problem at scale. The synthetic media crisis is not going to be solved by experts writing for experts. It is going to require the kind of demystification that Zendaya accidentally provided on Jimmy Kimmel Live - moments when someone ordinary people trust says, in ordinary language, "that thing you just believed was not real, and here is why."

The problem is that Zendaya's reach and credibility are not reproducible for most people who encounter synthetic imagery of themselves. She had the audience. She had the moment. She had the wit to turn it into something that landed. Most victims of synthetic identity fabrication do not have any of those things. They have an HR email, a screenshot from a friend, and a phone number for a lawyer who has never handled this kind of case.

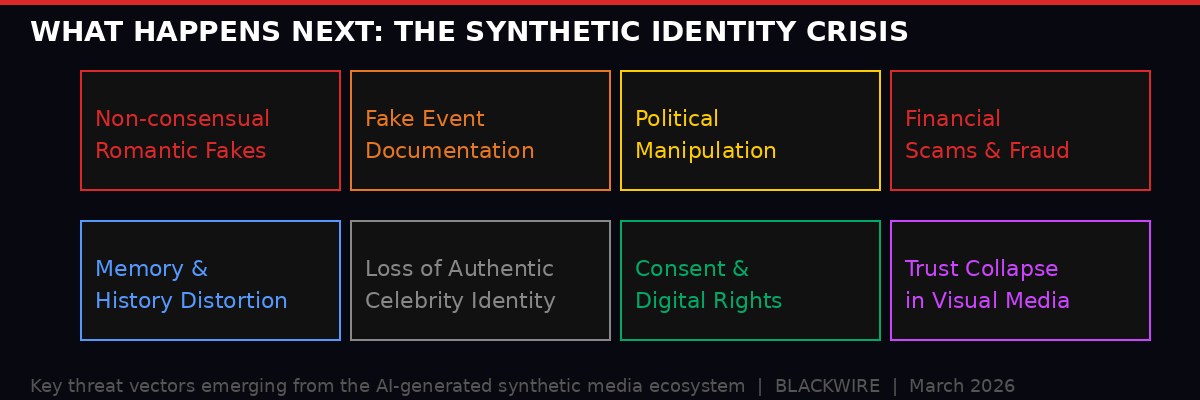

The threat vectors emerging from AI-generated synthetic media - from romantic fakes to political manipulation to historical distortion. BLACKWIRE analysis.

The Memory Problem Is the Real Problem

Here is what keeps researchers in this field awake at night. It is not the acute harm - the defamation, the harassment, the emotional distress. All of that is serious. None of it is the deepest problem.

The deepest problem is what happens to memory and documented history when synthetic imagery achieves parity with real photography. We use images to remember. We use them to establish what happened. Courts use photographs as evidence. Historians use them as primary sources. Families use them to tell their stories across generations.

If the line between real and synthetic photographs dissolves completely - and controlled studies have already found people performing at roughly chance when asked to tell AI-generated faces from real photographs - then every image becomes potentially contested. Every documented moment becomes potentially fabricated. Every visual record becomes a negotiation over who has the authority to establish what actually happened.

This is not hypothetical. It is already playing out in legal contexts, where defendants have begun challenging the authenticity of photographic evidence on the basis that it might be AI-generated. It is playing out in political contexts, where documented events are dismissed as fabrications and genuine fabrications are presented as documentation. It is playing out in the specific kind of epistemic vertigo that Zendaya's friends experienced when they congratulated her on a wedding that did not happen.

When reality and simulation become indistinguishable, the default moves toward whoever controls the narrative infrastructure. Not toward truth. Toward power. This is not a technological problem with a technological solution. It is a political problem about who gets to establish what is real, and what happens to people who lack the platforms to push back.

Zendaya could push back. She was funny about it. She was fine. Most people, in the scenarios that are coming faster than anyone is ready for, will not be fine. They will simply live in a world where something happened to their image that they did not consent to, did not know about, cannot fully undo, and have no clear legal recourse to address.

The wedding photos of a woman who was not married are a small, funny, harmless-in-this-case glimpse of that world. The smallness and the funniness and the harmlessness should not obscure the glimpse.

Sources: BBC News (Zendaya/Jimmy Kimmel Live report, March 17-18, 2026); AP News (SAG-AFTRA/Sean Astin interview, Oscars 2026 coverage, Sinners journey); BBC Culture (98th Academy Awards analysis, March 16, 2026); Stanford Internet Observatory 2025 synthetic media tracking report; Journal of Social Issues (AI synthetic imagery psychological impact study, 2024); Content Authenticity Initiative membership documentation; Guardian Society section (meningitis/housing/social movements, March 2026). BLACKWIRE analysis and synthesis.