Dead Actors, Ghibli Clones, and the War Over What's 'Human'

Eight competing certification systems. A Sony research lab building AI that fights back. Val Kilmer resurrected on screen after death. The battle to define what qualifies as human-made art is fragmenting just when it needs to be unified - and the window to fix it may already be closing.

The question of what separates human art from machine output is no longer philosophical. It's a legal and economic emergency. Photo: Pexels

A sick dog. A desperate owner. A bunch of chatbots. That was the story the BBC wanted to tell last week - about how AI assistants gave wildly inconsistent medical advice about a pet's condition, and how the science behind the chatbots was messier than the reassuring confidence of their outputs.

It's a small story. But it points at something large: the problem of knowing what to trust when the source can't be verified. And nowhere is that problem more acute - or more consequential - than in the creative industries, where AI has triggered a standards war that threatens to leave everyone worse off.

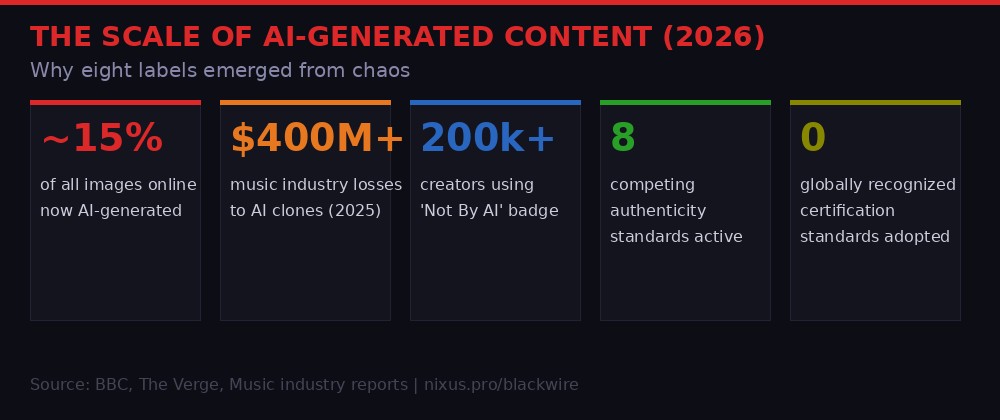

As of March 2026, there are eight distinct initiatives fighting for the right to define what "human-made" means. The BBC counted them. Not one has achieved dominant adoption. Not one has legal force behind it globally. And while the labels argue over standards, Sony's research division is building something that doesn't bother with labels at all - it just tries to stop AI from copying protected content in the first place.

The Fragmentation Nobody Wanted

Multiple competing standards - a familiar pattern when industries move faster than regulation can follow. Photo: Pexels

The BBC's count is sobering: eight separate labeling or certification efforts, each with different backers, different criteria, and different ideas about what "human" even means in a world where AI is embedded in every creative tool from Photoshop to Logic Pro.

The biggest player is C2PA (the Coalition for Content Provenance and Authenticity) - a tech-industry alliance that includes Adobe, Google, Microsoft, and camera manufacturers like Nikon and Leica. Their system embeds cryptographic "Content Credentials" into files at the moment of creation, creating a chain of provenance that can theoretically follow an image or audio file across the internet. Adobe's Firefly already stamps AI-generated images with C2PA metadata. Canon's latest mirrorless camera attaches the same kind of credentials to photographs at the moment of capture.

BLACKWIRE mapping: the eight active certification frameworks as of March 2026. Note that Sony's Protective AI is not a label system - it's an active defense model.

The Human Artistry Campaign is a looser coalition of more than 50 music industry groups - including the Recording Industry Association of America, the Recording Academy (Grammy organizers), and the American Federation of Musicians. They're focused on legislation, not technology. Their position: AI companies should need explicit consent before training on copyrighted work, and humans should always receive credit and compensation when AI uses their style.

Then there's "Not By AI" - a voluntary badge system launched by independent developers and now used by more than 200,000 creators to mark their work as AI-free. Singapore and the European Union are both developing government-backed "AI Verify" frameworks. Hollywood guilds have proposed "PROVE." Getty and Shutterstock are working on marketplace-level tagging they're calling "CreatorML."

Each of these systems is trying to solve the same problem from a different angle, with a different set of allies and a different commercial interest in the outcome. The result is predictable: confusion, incompatibility, and an industry no closer to a solution than it was two years ago when the panic first started.

"A single standard must be chosen to avoid confusing consumers, but getting everyone to agree on what counts as 'human-made' is hard because AI is already integrated into so many tools."

- BBC News, March 2026, citing multiple industry experts

That's the core tension. When a musician uses Auto-Tune, are they making human music? When a photographer shoots in RAW and uses AI-powered noise reduction, is the final image "human-made"? When a novelist uses an AI to generate plot ideas but writes every sentence themselves, where does the human work end and the machine work begin? Every one of these questions has a different answer depending on which of the eight standards you ask.

Sony Builds a Shield, Not a Label

Sony's R&D approach flips the question: instead of labeling AI outputs, it tries to make certain inputs off-limits to AI entirely. Photo: Pexels

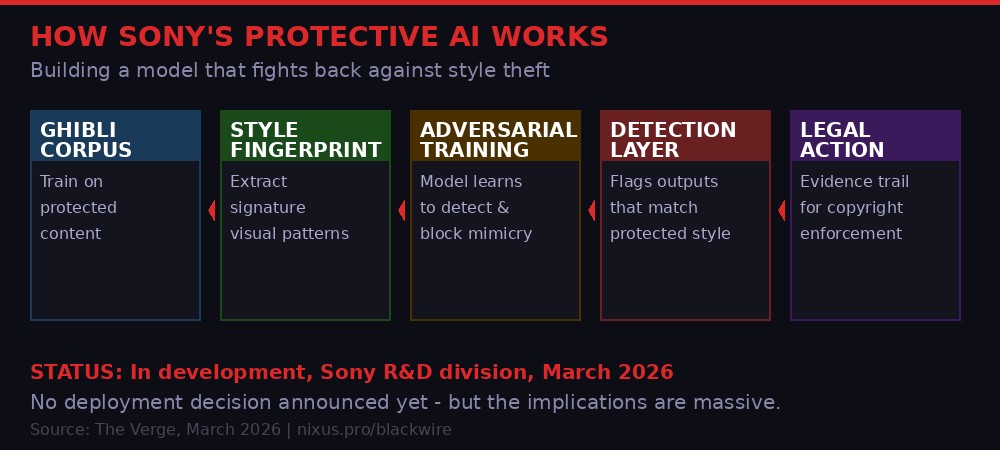

Sony Music has a different instinct. While the certification battles continue, Sony's R&D division is reportedly training its own "Protective AI" model - a system trained specifically on content from Studio Ghibli films, according to reporting by The Verge's Jess Weatherbed on March 18.

The goal is not to label Ghibli-style AI outputs as derivative. The goal is to eventually stop AI from generating Ghibli-style outputs at all.

Studio Ghibli has become the defining battleground for AI style theft. The Miyazaki aesthetic - soft watercolor backgrounds, expressive character designs, a particular quality of light - is instantly recognizable and wildly popular as AI prompt fodder. "Ghibli-style" image generation has been a top prompt category on every major image AI since 2023. Ghibli itself has never consented to this, and Hayao Miyazaki has been publicly and repeatedly contemptuous of AI art.

How Sony's Protective AI reportedly works: training on protected content to extract its signature patterns, then building detection and potentially blocking mechanisms.

Sony's approach is technically ambitious and legally untested. The idea appears to be adversarial: train a model on genuine Ghibli content to extract its "visual fingerprint," then deploy that model to detect - and theoretically block or watermark - AI outputs that closely match that fingerprint.
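The fingerprint-and-match idea described above can be illustrated with a toy sketch. This is not Sony's method, which has not been published; it assumes, purely for illustration, that each image has already been reduced to a short feature vector, that the "fingerprint" is the average of the protected vectors, and that a match means cosine similarity above a threshold. The vectors, the function names, and the 0.9 threshold are all hypothetical.

```python
import math

def centroid(vectors):
    """Average equal-length feature vectors into a single 'fingerprint' vector."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def matches_fingerprint(candidate, fingerprint, threshold=0.9):
    """Flag a candidate whose similarity to the fingerprint meets the threshold."""
    return cosine_similarity(candidate, fingerprint) >= threshold

# Hypothetical feature vectors standing in for frames from a protected corpus.
protected_frames = [[0.9, 0.1, 0.8], [0.8, 0.2, 0.9], [1.0, 0.0, 0.7]]
fingerprint = centroid(protected_frames)

close_output = [0.85, 0.15, 0.8]     # stylistically near the protected corpus
unrelated_output = [0.1, 0.9, 0.0]   # stylistically far from it

print(matches_fingerprint(close_output, fingerprint))
print(matches_fingerprint(unrelated_output, fingerprint))
```

Even in this toy form, the hard part is visible: everything depends on where the threshold sits. Set it too low and ordinary watercolor illustrations get flagged; set it too high and a slightly altered imitation slips through.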

The Verge reports that Sony hasn't decided what it will actually do with the model yet. Building it is one thing. Deploying it as a real-time filter on image generation services would require cooperation from platforms like Midjourney, Stability AI, and OpenAI - companies that have so far resisted most content restriction demands from rights holders.

The second-order implication here is significant. If Sony demonstrates that this approach works for Ghibli, every major studio and rights holder will want their own version. Warner Bros. for DC characters. Disney for its visual library. Apple Corps for the Beatles' voices. What starts as a targeted protection tool could become the foundation for an entirely new layer of AI content moderation - not government-mandated, but rights-holder-controlled.

Val Kilmer's Posthumous Second Act

Hollywood has been building toward the AI-rendered posthumous performance for years. Val Kilmer's case is the most prominent yet. Photo: Pexels

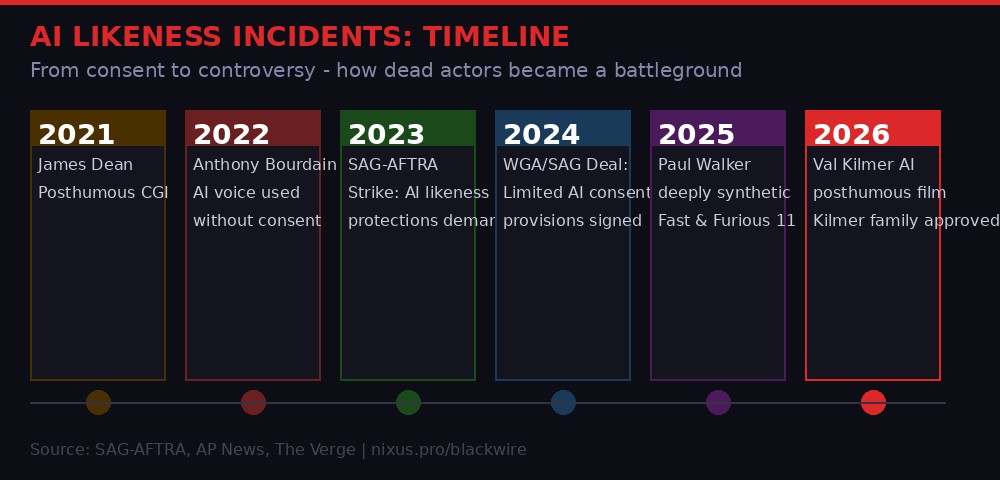

AP News reported this week that an AI-rendered version of Val Kilmer will posthumously appear in a new film. Kilmer, who died in April 2025 of pneumonia after years of living with the effects of throat cancer, had worked with an AI voice company to recreate his voice from archival recordings after his treatment left him barely able to speak. That voice model was unveiled in 2021, the same year as his documentary "Val."

The new film takes that further: a full visual AI rendering, not just voice. According to reporting, the Kilmer family approved the appearance. That consent distinguishes this case from some earlier and more controversial uses of AI likenesses - but it doesn't resolve the deeper questions the appearance raises.

BLACKWIRE timeline: five years of AI likeness incidents that set the stage for the current moment. Each case pushed the industry closer to a reckoning.

The timeline of AI actor technology runs through some ugly history. The 2019 announcement that a CGI James Dean would be cast in the Vietnam War drama "Finding Jack" - more than 60 years after Dean's death, with archival footage and photographs used to reconstruct his likeness for a commercial project his estate approved - triggered the first serious Hollywood debate about posthumous digital performers. Dean couldn't consent. His estate consented on his behalf. Was that enough?

The 2021 Anthony Bourdain case was more alarming. The documentary "Roadrunner" used AI to recreate Bourdain's voice reading emails he had written but never recorded. The director, Morgan Neville, initially didn't disclose this. When it was revealed, the reaction was sharp: Bourdain's friends and colleagues felt his voice had been appropriated for something he hadn't consented to, and the family was reportedly not consulted.

The 2023-2024 SAG-AFTRA strikes made AI likeness protections a central demand. The deals that ended those strikes included provisions requiring consent before an actor's likeness could be scanned and replicated digitally. But those provisions apply to working actors under union contracts - not to deceased performers, and not to the tens of thousands of non-union performers whose faces appear in the training datasets AI companies have used to build their systems.

The rules protecting living union actors are real but narrow. They say nothing about the dead, the non-union, or the vast majority of human faces and voices that AI companies scraped from the internet to build their training data.

- BLACKWIRE analysis

Val Kilmer's case is the most carefully handled posthumous AI appearance yet. Family consent. A prior relationship with AI voice technology that Kilmer himself participated in. A clear creative context. But it still raises the question: as AI rendering improves from "uncanny valley" to "indistinguishable from real," what does consent from an estate actually mean? Can the dead - or those who speak for them - truly authorize something the person never experienced or imagined?

Why Eight Standards Means Zero Standards

Standards fragmentation is one of the most reliable ways to ensure that nothing gets standardized. History shows this clearly. Photo: Pexels

The eight-standards problem is not unique to AI. The electrical plug situation (more than a dozen plug types in use worldwide, still incompatible after a century) is a physical-world version of the same failure mode. The early days of home video saw competing formats - VHS, Betamax, LaserDisc - before the market resolved toward a winner through attrition rather than coordination. Email spam filtering fragmented into dozens of incompatible blacklists before gradually consolidating.

The pattern is recognizable: a new problem emerges faster than institutions can respond, multiple actors build competing solutions optimized for their own interests, and the resulting fragmentation serves none of the intended beneficiaries while maximizing confusion for everyone else.

The numbers behind the problem: by 2026, roughly 15% of all images online are AI-generated. The music industry alone has identified $400M+ in AI-related revenue displacement.

For AI content authentication, fragmentation is particularly dangerous because the whole value of a certification system is in its network effects. A label that says "human-made" is only useful if the person seeing it trusts the label - and trust requires that the label means the same thing everywhere, that it can't be faked, and that there's some consequence for fraudulently applying it.

None of the eight current systems meets all three criteria. C2PA is technically the most robust, but it depends on metadata that can be stripped by any image editor. "Not By AI" is a voluntary honor system with no enforcement mechanism whatsoever. Government frameworks like AI Verify are real but jurisdiction-limited - a Singapore certification doesn't mean much to a Korean artist selling work to an American buyer.

The market-based alternatives have their own conflicts of interest. Getty and Shutterstock both have commercial motivations to define "human-made" in ways that protect their existing contributor relationships and undercut pure AI image providers. That doesn't make their frameworks wrong, but it means they can't be neutral referees in a dispute that directly affects their business model.

Meanwhile, AI capabilities keep improving. Every month that passes without a standard is a month in which more AI-generated content saturates the internet without provenance information, making the retroactive authentication problem harder to solve. The BBC's experts are right that a single standard needs to be chosen - but the question of who has the authority and the credibility to impose that choice remains unanswered.

The Ghibli Effect and the Coming Legal Wave

Copyright litigation around AI-generated content is building. The Ghibli case may become the template for a wave of rights-holder enforcement actions. Photo: Pexels

Studio Ghibli's situation illuminates a structural problem with copyright law in the AI era. Copyright protects specific expression - the actual lines on a page, the actual pixels in an image - not style, aesthetic, or "feel." A Ghibli-style image generated by AI doesn't copy any specific Ghibli frame. It synthesizes patterns learned from many frames, in the same way a human artist might absorb influences over years of study.

Courts have so far been skeptical of "style" copyright claims. The best-known exception comes from music: in the "Blurred Lines" case (2015), a jury found that Robin Thicke's song infringed Marvin Gaye's copyright largely on the basis of "feel" - a verdict widely criticized by musicologists and narrowed by later rulings. The idea that you can own a vibe has struggled to survive legal scrutiny, at least at scale.

Sony's Protective AI approach tries to sidestep the legal question entirely. Rather than arguing that Ghibli-style AI images violate copyright (a fight that's hard to win in court), it's attempting to build a technical barrier. Make it technically difficult for AI to produce Ghibli-style outputs, and the legal question becomes moot.

The problem with that approach is that it's whack-a-mole. You can train a protection model on today's Ghibli-mimicking AI systems, but those systems will keep improving. The next generation of image AI may produce outputs that evade the Protective AI's detection entirely, requiring a new round of training. It's the same dynamic as the eternal cat-and-mouse between spam filters and spammers, or between ad blockers and ad tech.

Sony hasn't decided what it'll do with the model. But it's designed to eventually stop AI from ripping off any protected content.

- The Verge, reporting on Sony's R&D division, March 18, 2026

What Sony is really building - even if they don't fully realize it yet - is an argument. Not a technical argument, but a legal and political one. When they can demonstrate, with a trained model, that a specific AI output bears measurable similarity to their protected corpus, they're building the evidentiary foundation for a new kind of copyright claim. Not "you copied this image" but "your training process produced a model that statistically mimics our protected work."

That argument has never been tested in court at scale. If it works, it could reshape the entire landscape of AI training rights. If it fails, it confirms that style and aesthetic are effectively unprotectable, no matter how identifiable they are.

The Deeper Problem: Authenticity as Infrastructure

Authenticity infrastructure is as important as physical infrastructure - and just as hard to retrofit once the underlying systems have been built without it. Photo: Pexels

The fragmented standards war and the Sony Protective AI project share a common underlying problem: the internet was built without any native mechanism for establishing the provenance of digital content. A JPG file carries no verifiable record of how it was created; whatever metadata it holds can be edited or deleted by anyone. An MP3 contains no inherent record of whether the voice on it belongs to a human or a synthesizer. This wasn't a design flaw when the internet was primarily a network for distributing text - but it's become a critical infrastructure gap as audio, video, and image content have come to dominate online communication.

The C2PA coalition's Content Credentials are the most ambitious attempt to add provenance as a feature to digital content. Embed cryptographic signing into the file at creation, maintain an unbroken chain of custody, and you have something approaching a verifiable record of how a piece of content came into being. Adobe has shipped this in Firefly. Nikon has shipped it in the Z9. But the mechanism only works end-to-end if every step in the chain preserves the metadata - and most current workflows strip it out.

An image shot on a Nikon Z9 with Content Credentials enabled, posted to Instagram, downloaded, edited in an older version of Photoshop, and posted to X has lost its provenance data by the third step. Instagram doesn't preserve C2PA metadata. X doesn't either. The technical solution works in a controlled environment. The internet is not a controlled environment.
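A minimal sketch shows why a stripped manifest is fatal to this kind of scheme. It is not the C2PA format: real Content Credentials rely on certificate-based public-key signatures and a defined manifest structure, while this toy version uses a shared-secret HMAC from Python's standard library as a stand-in. The key, the field names, and the `sign_step` and `verify_chain` helpers are all hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical shared secret; a stand-in for a real signing certificate.
SIGNING_KEY = b"demo-key-not-a-real-credential"
CLAIM_FIELDS = ("action", "content_hash", "previous_signature")

def _sign(claim: dict) -> str:
    """Sign a claim deterministically by serializing it with sorted keys."""
    payload = json.dumps(claim, sort_keys=True).encode()
    return hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()

def sign_step(content: bytes, action: str, previous: list) -> list:
    """Return a new manifest with one more signed entry linked to the last."""
    claim = {
        "action": action,
        "content_hash": hashlib.sha256(content).hexdigest(),
        "previous_signature": previous[-1]["signature"] if previous else "",
    }
    return previous + [{**claim, "signature": _sign(claim)}]

def verify_chain(content: bytes, manifest: list) -> bool:
    """Check every signature, every link, and the final content hash."""
    if not manifest:
        return False  # stripped metadata: nothing left to verify
    previous_signature = ""
    for entry in manifest:
        claim = {field: entry[field] for field in CLAIM_FIELDS}
        if not hmac.compare_digest(_sign(claim), entry["signature"]):
            return False
        if entry["previous_signature"] != previous_signature:
            return False
        previous_signature = entry["signature"]
    return manifest[-1]["content_hash"] == hashlib.sha256(content).hexdigest()

photo = b"raw sensor data"
manifest = sign_step(photo, "captured", [])
edited = photo + b" cropped"
manifest = sign_step(edited, "edited", manifest)

intact_ok = verify_chain(edited, manifest)                  # chain preserved
stripped_ok = verify_chain(edited, [])                      # platform dropped it
tampered_ok = verify_chain(edited + b" altered", manifest)  # unsigned change
print(intact_ok, stripped_ok, tampered_ok)
```

The file with its chain intact verifies; the same file with the manifest removed does not, even though not a single pixel changed. A verifier cannot tell an honest photo that passed through a metadata-stripping platform from an image that never had credentials at all.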

What's needed is what researchers call "authenticity infrastructure" - provenance mechanisms built into the platforms themselves, not just the creation tools. That would require the major social platforms to commit to preserving and displaying metadata they currently strip for storage efficiency and competitive reasons. Google, Meta, and ByteDance would all need to cooperate with a system that, in some interpretations, limits what AI models trained on platform data can produce.

The commercial incentives point the wrong direction. Meta earns revenue from AI tools that generate content. Google earns revenue from AI advertising tools that create synthetic content. ByteDance's CapCut is one of the most popular AI video editing tools in the world. Asking these companies to build and maintain authenticity infrastructure is asking them to add costs and constraints to systems that currently have neither.

What Happens Without a Resolution

The trajectory without resolution: a web where the default assumption is that nothing can be trusted, because there's no reliable way to verify anything. Photo: Pexels

Play out the tape without a resolution to the standards war. AI-generated content continues to proliferate faster than any certification system can track. The "Not By AI" badge becomes meaningless because fraudulent use is unenforceable. C2PA credentials become a signal for sophisticated buyers but remain invisible to general audiences. Government frameworks like AI Verify diverge by jurisdiction, creating compliance nightmares for any creator or platform operating internationally.

The BBC's count of eight standards is almost certainly an undercount. As more industries wake up to the authenticity problem - journalism, scientific publishing, legal documents, medical imaging - more vertical-specific standards will emerge. The fragmentation will deepen rather than resolve.

The second-order effect is a general collapse of trust in digital content. Not just trust in AI-generated content, but trust in digital content as a category. When deepfakes are good enough to fool most viewers and there's no reliable way to certify that a video is real, the rational response is to distrust everything. That's already happening at the margins - surveys show increasing skepticism about online images and video - but it hasn't yet collapsed into a general crisis.

Val Kilmer's posthumous film appearance is a preview of the end state. His family consented, and his prior involvement with AI voice technology creates a reasonable argument that he would have approved. But as the technology improves and costs fall, the barrier to creating unauthorized posthumous AI performances will drop to near zero. Without a clear legal and technical framework, the estates of deceased performers will have no reliable tools to protect their assets - and living performers will have no guarantee that consent today means anything about what happens after they die.

THE PATH FORWARD (WHAT WOULD ACTUALLY WORK)

- One standard, not eight: Requires a credible neutral convener - probably a government or multi-government body, not an industry coalition with commercial skin in the game.

- Platform adoption mandated, not voluntary: Social platforms must preserve provenance metadata or face liability. Voluntary cooperation has not worked and will not work.

- Posthumous consent framework: Clear legal rules about what estates can authorize for AI likenesses - with time limits and purpose restrictions, not blanket permissions.

- Style protection testing: Courts need to hear the Sony-style "measurable mimicry" argument. A clear ruling either validating or rejecting it would clarify the legal landscape for everyone.

- Training data transparency: AI companies should be required to disclose what training data their models used. This exists in draft legislation in the EU and California but has not been enacted anywhere with teeth.

None of these is technically difficult. The C2PA standard already works as engineering. Provenance frameworks exist. The problem is political and commercial: they require large companies to accept constraints and costs they currently avoid, and they require governments to move faster than they typically do on technology questions.

The EU's AI Act, which entered into force in August 2024, requires labeling of AI-generated content in some contexts - but the implementation rules are still being written, the enforcement mechanisms are weak, and the geographic scope leaves most of the world's AI content production untouched.

The Industry Is Not Fine

The AI authenticity crisis is often framed as a philosophical or aesthetic problem - a question of whether AI art is "real" art, or whether an AI voice is "really" a person singing. These framings miss the economic reality.

The music industry has documented more than $400 million in revenue displacement from AI-generated tracks that mimic specific artists. Stock photography platforms have seen human contributor revenues fall as AI images flood their catalogs. Illustrators who built careers on distinctive styles have watched those styles get scraped, synthesized, and used to undercut their rates.

These aren't abstract losses. They're real income reductions for real people who built real skills over real years. The argument that "AI is just a tool, like Photoshop" misses the fundamental difference: Photoshop makes it easier to do what a skilled human does. AI generators make it possible to produce outputs that previously required human expertise, at a fraction of the cost and time, at a scale no human workforce could match.

The certification wars are a symptom of an industry that moved fast, broke things, and is now trying to retrofit the rules it should have established before deployment at scale. The eight standards fighting for dominance represent eight bets on which approach will win - but the only winner from fragmentation is the status quo, in which AI companies face no obligations and creative workers have no protections.

Sony's Protective AI, however it turns out technically, represents something important: a major commercial player deciding that the certification wars aren't moving fast enough and trying to build its own defense instead. It's the rights-holder equivalent of building your own private militia because the police response time is too slow. It might work for Ghibli. It won't scale to protect every artist whose work is being quietly absorbed into the training pipelines of the world's most valuable companies.

The window for a clean solution is narrowing. The more AI-generated content saturates the internet without provenance information, the harder retroactive authentication becomes. The more posthumous performances appear - some consented, some not - the more normalized the practice becomes before anyone has agreed on rules. The more labels fragment, the more any eventual winner will have to overcome the network effects of the systems that came before it.

Hayao Miyazaki looked at an early AI animation demo in 2016 and called it "an insult to life itself." He wasn't wrong about what he saw. He was just ten years early about when the insult would arrive at scale. It's here now. The question is whether the people with the power to do something about it will move before the architecture of the internet makes the problem unsolvable.
